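Welcome to the iOS Exploration series (best read in order)

- iOS Exploration: the alloc flow

- iOS Exploration: memory alignment & malloc source

- iOS Exploration: isa initialization & pointer analysis

- iOS Exploration: class structure analysis

- iOS Exploration: cache_t analysis

- iOS Exploration: the nature of methods and the method lookup flow

- iOS Exploration: dynamic method resolution and message forwarding

- iOS Exploration: a brief look at the dyld loading flow

- iOS Exploration: the class loading process

- iOS Exploration: loading of categories and class extensions

- iOS Exploration: isa interview questions

- iOS Exploration: runtime interview questions

- iOS Exploration: KVC principles and a custom implementation

- iOS Exploration: KVO principles and a custom implementation

- iOS Exploration: multithreading principles

- iOS Exploration: multithreading, GCD in practice

- iOS Exploration: multithreading, GCD internals

- iOS Exploration: multithreading, NSOperation

- iOS Exploration: multithreading interview questions

- iOS Exploration: a rundown of the locks in iOS

- iOS Exploration: a full tour of Blocks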

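Preface

Because the source is long and full of branches and macro definitions, it is hard to read and tends to scare developers away. Reading it with specific questions in mind makes it easier and lets you skip irrelevant code. This article sets out to answer the following questions:

- How the underlying queue is created

- How a deadlock arises

- How a dispatch_block task is executed

- Synchronous functions

- Asynchronous functions

- How semaphores work

- How dispatch groups work

- How singletons work

This article is fairly long and jumps between many functions, but it only studies the core code paths, so reading it through should still be worthwhile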

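Choosing the right source

To analyze the source we first have to obtain the GCD source. Earlier articles analyzed the objc, malloc, and dyld sources, so which source package contains GCD?

Here is a small trick. Since we already know we want to study GCD, there are several ways to find the right source:

- Search Baidu/Google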

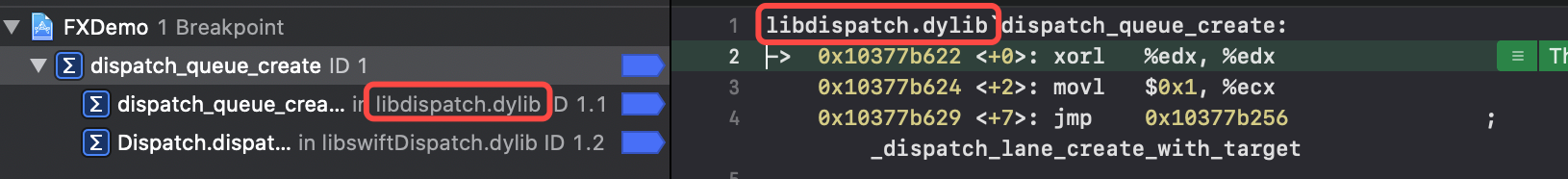

- Set a symbolic breakpoint on dispatch_queue_create

- Simply enabling Debug->Debug Workflow->Always show Disassembly and reading the assembly shows it as well

Either way, we find the source we need: libdispatch

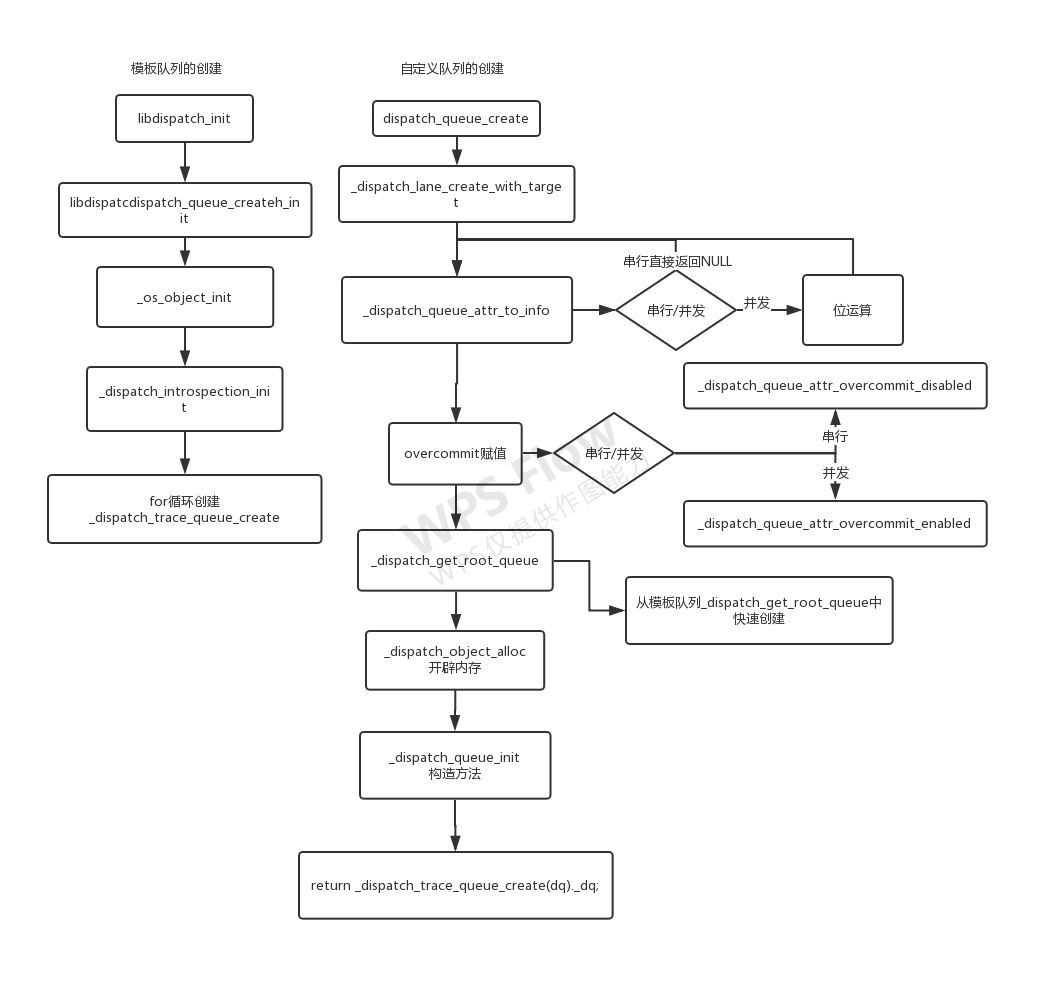

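I. How the underlying queue is created

The upper layer calls dispatch_queue_create, so search the whole project for it. The search returns many hits (66 results in 17 files), and this is where experience in reading source code pays off:

- A beginner checks every hit one by one, preferring to look at everything rather than miss something

- A veteran refines the search based on how the API is called:

  - Queue creation is written as dispatch_queue_create("", NULL), so searching for dispatch_queue_create( narrows the hits to 21 results in 6 files

  - The first parameter is a string, which C declares as const char *, so searching for dispatch_queue_create(const narrows the hits to 2 results in 2 files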

1.dispatch_queue_create

A conventional wrapper layer: it lets the implementation evolve without changing how the upper layer calls it

dispatch_queue_t

dispatch_queue_create(const char *label, dispatch_queue_attr_t attr)

{

return _dispatch_lane_create_with_target(label, attr,

DISPATCH_TARGET_QUEUE_DEFAULT, true);

}

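It is also worth paying attention to the arguments passed between functions in the source:

- label is the reverse-DNS name supplied by the caller, used mainly when debugging crashes

- attr is NULL/DISPATCH_QUEUE_SERIAL or DISPATCH_QUEUE_CONCURRENT, and determines whether the queue is serial or concurrent

- #define DISPATCH_QUEUE_SERIAL NULL shows that the serial-queue macro is simply NULL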

2._dispatch_lane_create_with_target

DISPATCH_NOINLINE

static dispatch_queue_t

_dispatch_lane_create_with_target(const char *label, dispatch_queue_attr_t dqa,

dispatch_queue_t tq, bool legacy)

{

dispatch_queue_attr_info_t dqai = _dispatch_queue_attr_to_info(dqa);

...

}

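dqa is the second parameter of this function, i.e. the attr passed to dispatch_queue_create

Since this is where the serial/concurrent marker is consumed, let's step into it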

3._dispatch_queue_attr_to_info

dispatch_queue_attr_info_t

_dispatch_queue_attr_to_info(dispatch_queue_attr_t dqa)

{

dispatch_queue_attr_info_t dqai = { };

if (!dqa) return dqai;

#if DISPATCH_VARIANT_STATIC

if (dqa == &_dispatch_queue_attr_concurrent) {

dqai.dqai_concurrent = true;

return dqai;

}

#endif

if (dqa < _dispatch_queue_attrs ||

dqa >= &_dispatch_queue_attrs[DISPATCH_QUEUE_ATTR_COUNT]) {

DISPATCH_CLIENT_CRASH(dqa->do_vtable, "Invalid queue attribute");

}

size_t idx = (size_t)(dqa - _dispatch_queue_attrs);

dqai.dqai_inactive = (idx % DISPATCH_QUEUE_ATTR_INACTIVE_COUNT);

idx /= DISPATCH_QUEUE_ATTR_INACTIVE_COUNT;

dqai.dqai_concurrent = !(idx % DISPATCH_QUEUE_ATTR_CONCURRENCY_COUNT);

idx /= DISPATCH_QUEUE_ATTR_CONCURRENCY_COUNT;

dqai.dqai_relpri = -(idx % DISPATCH_QUEUE_ATTR_PRIO_COUNT);

idx /= DISPATCH_QUEUE_ATTR_PRIO_COUNT;

dqai.dqai_qos = idx % DISPATCH_QUEUE_ATTR_QOS_COUNT;

idx /= DISPATCH_QUEUE_ATTR_QOS_COUNT;

dqai.dqai_autorelease_frequency =

idx % DISPATCH_QUEUE_ATTR_AUTORELEASE_FREQUENCY_COUNT;

idx /= DISPATCH_QUEUE_ATTR_AUTORELEASE_FREQUENCY_COUNT;

dqai.dqai_overcommit = idx % DISPATCH_QUEUE_ATTR_OVERCOMMIT_COUNT;

idx /= DISPATCH_QUEUE_ATTR_OVERCOMMIT_COUNT;

return dqai;

}

dispatch_queue_attr_info_t dqai = { }; zero-initializes the result. Like isa, dispatch_queue_attr_info_t is a bit-field structure

typedef struct dispatch_queue_attr_info_s {

dispatch_qos_t dqai_qos : 8;

int dqai_relpri : 8;

uint16_t dqai_overcommit:2;

uint16_t dqai_autorelease_frequency:2;

uint16_t dqai_concurrent:1;

uint16_t dqai_inactive:1;

} dispatch_queue_attr_info_t;

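- Next, look at if (!dqa) return dqai;

  - For a serial queue dqa is NULL, so the zero-initialized dqai is returned immediately (a struct, not NULL)

  - For a concurrent queue execution continues

- Then size_t idx = (size_t)(dqa - _dispatch_queue_attrs);

  - The attribute pointer obtained from the DISPATCH_QUEUE_CONCURRENT macro is turned into an array index by pointer subtraction, and the fields are then decoded from that index with % and /

  - As a result dqai.dqai_concurrent is set for a concurrent queue, unlike for a serial queue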

#define DISPATCH_QUEUE_CONCURRENT \

DISPATCH_GLOBAL_OBJECT(dispatch_queue_attr_t, \

_dispatch_queue_attr_concurrent)
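The repeated % and / steps in _dispatch_queue_attr_to_info amount to decoding a mixed-radix number. The following is a minimal sketch of that idea only: the type `attr_info`, the functions `attr_encode` and `attr_decode`, and the three radix values are hypothetical names and numbers chosen for illustration, not libdispatch's real definitions.

```c
#include <assert.h>
#include <stddef.h>

/* Illustrative radices only; the real DISPATCH_QUEUE_ATTR_*_COUNT values
 * live in libdispatch's private headers and may differ. */
enum { INACTIVE_COUNT = 2, CONCURRENCY_COUNT = 2, PRIO_COUNT = 16 };

struct attr_info {
    int inactive;    /* 0 or 1 */
    int concurrent;  /* 0 or 1 */
    int relpri;      /* 0 down to -(PRIO_COUNT - 1) */
};

/* Pack the fields into one index, least significant field last applied. */
size_t attr_encode(struct attr_info a)
{
    assert(a.relpri <= 0 && a.relpri > -PRIO_COUNT);
    size_t idx = (size_t)(-a.relpri);
    idx = idx * CONCURRENCY_COUNT + (a.concurrent ? 0 : 1); /* digit 0 means concurrent */
    idx = idx * INACTIVE_COUNT + (a.inactive ? 1 : 0);
    return idx;
}

/* Peel the fields off again with % and /, in the same order as the
 * excerpt: inactive first, then concurrency, then relative priority. */
struct attr_info attr_decode(size_t idx)
{
    struct attr_info a;
    a.inactive = (int)(idx % INACTIVE_COUNT);
    idx /= INACTIVE_COUNT;
    a.concurrent = !(idx % CONCURRENCY_COUNT);
    idx /= CONCURRENCY_COUNT;
    a.relpri = -(int)(idx % PRIO_COUNT);
    return a;
}
```

Decoding an index produced by `attr_encode` returns the original fields, which is why a single pointer offset into a precomputed attribute table is enough to carry every attribute combination.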

4. Back to _dispatch_lane_create_with_target

We are studying queue creation, so we can skip the details in the source and focus on finding alloc-like code

DISPATCH_NOINLINE

static dispatch_queue_t

_dispatch_lane_create_with_target(const char *label, dispatch_queue_attr_t dqa,

dispatch_queue_t tq, bool legacy)

{

dispatch_queue_attr_info_t dqai = _dispatch_queue_attr_to_info(dqa);

dispatch_qos_t qos = dqai.dqai_qos;

...

// this is only part of the branching logic

if (tq->dq_priority & DISPATCH_PRIORITY_FLAG_OVERCOMMIT) {

overcommit = _dispatch_queue_attr_overcommit_enabled;

} else {

overcommit = _dispatch_queue_attr_overcommit_disabled;

}

if (!tq) {

tq = _dispatch_get_root_queue(

qos == DISPATCH_QOS_UNSPECIFIED ? DISPATCH_QOS_DEFAULT : qos, // 4

overcommit == _dispatch_queue_attr_overcommit_enabled)->_as_dq; // 0 1

if (unlikely(!tq)) {

DISPATCH_CLIENT_CRASH(qos, "Invalid queue attribute");

}

}

...

// allocate memory and create the corresponding queue object

dispatch_lane_t dq = _dispatch_object_alloc(vtable,

sizeof(struct dispatch_lane_s));

// constructor

_dispatch_queue_init(dq, dqf, dqai.dqai_concurrent ?

DISPATCH_QUEUE_WIDTH_MAX : 1, DISPATCH_QUEUE_ROLE_INNER |

(dqai.dqai_inactive ? DISPATCH_QUEUE_INACTIVE : 0));

// label

dq->dq_label = label;

// priority

dq->dq_priority = _dispatch_priority_make((dispatch_qos_t)dqai.dqai_qos,

dqai.dqai_relpri);

if (overcommit == _dispatch_queue_attr_overcommit_enabled) {

dq->dq_priority |= DISPATCH_PRIORITY_FLAG_OVERCOMMIT;

}

if (!dqai.dqai_inactive) {

_dispatch_queue_priority_inherit_from_target(dq, tq);

_dispatch_lane_inherit_wlh_from_target(dq, tq);

}

_dispatch_retain(tq);

dq->do_targetq = tq;

_dispatch_object_debug(dq, "%s", __func__);

return _dispatch_trace_queue_create(dq)._dq;

}

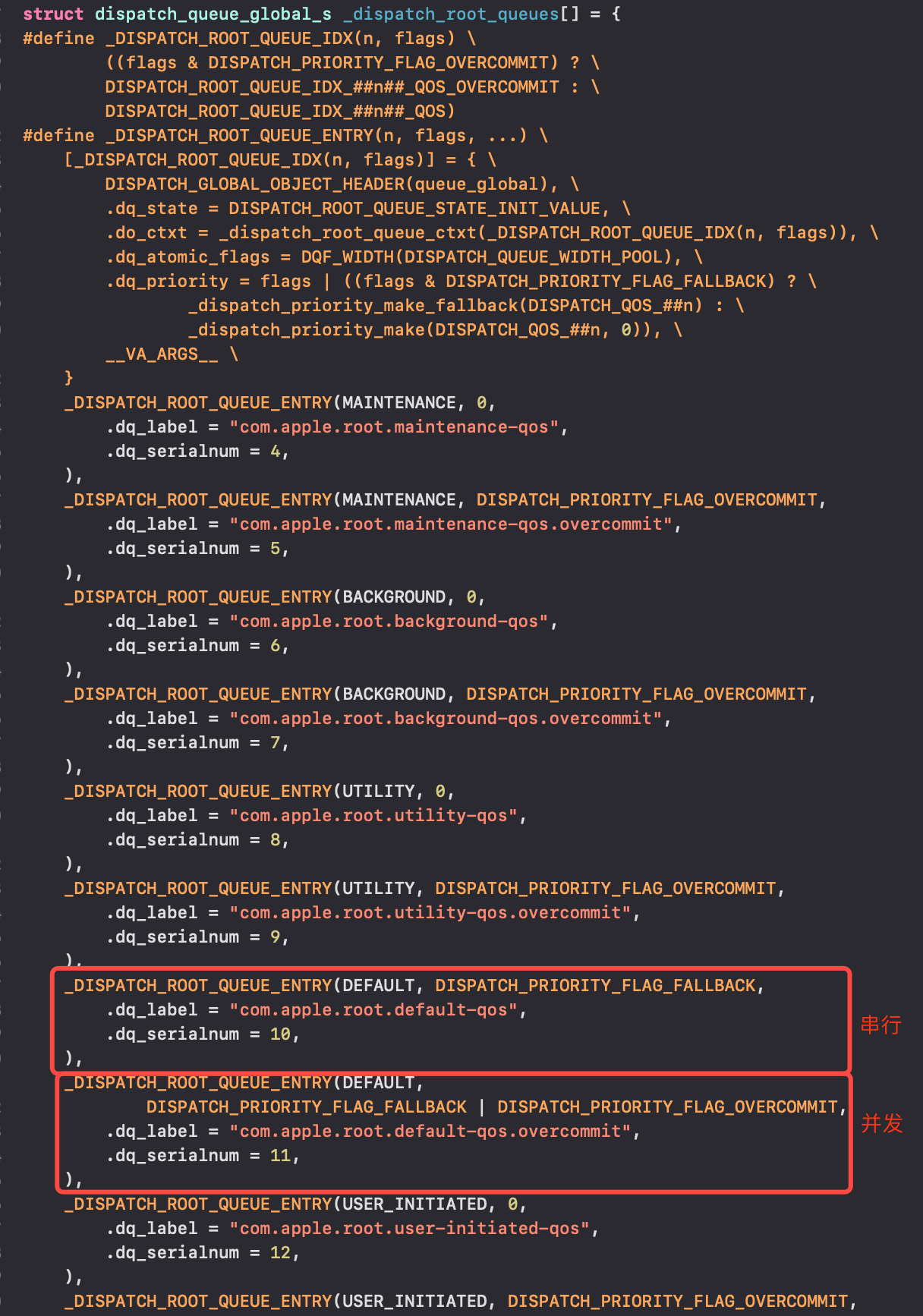

4.1 Obtaining the root queue with _dispatch_get_root_queue

tq is a parameter of the current function _dispatch_lane_create_with_target. The caller passed DISPATCH_TARGET_QUEUE_DEFAULT, so if (!tq) is always true on this path

#define DISPATCH_TARGET_QUEUE_DEFAULT NULL

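_dispatch_get_root_queue receives 4 and 0/1 as its two arguments:

- qos == DISPATCH_QOS_UNSPECIFIED ? DISPATCH_QOS_DEFAULT : qos: qos was never assigned, so it is still 0 (DISPATCH_QOS_UNSPECIFIED) and the expression evaluates to DISPATCH_QOS_DEFAULT, i.e. 4

- overcommit == _dispatch_queue_attr_overcommit_enabled: the excerpt above shows only part of the branching logic. In the libdispatch source, when no overcommit attribute is specified, a serial queue defaults to _dispatch_queue_attr_overcommit_enabled (1) and a concurrent queue to _dispatch_queue_attr_overcommit_disabled (0)

#define DISPATCH_QOS_UNSPECIFIED ((dispatch_qos_t)0)

#define DISPATCH_QOS_DEFAULT ((dispatch_qos_t)4)

DISPATCH_ALWAYS_INLINE DISPATCH_CONST

static inline dispatch_queue_global_t

_dispatch_get_root_queue(dispatch_qos_t qos, bool overcommit)

{

if (unlikely(qos < DISPATCH_QOS_MIN || qos > DISPATCH_QOS_MAX)) {

DISPATCH_CLIENT_CRASH(qos, "Corrupted priority");

}

return &_dispatch_root_queues[2 * (qos - 1) + overcommit];

}

- With qos = 4, a serial queue returns &_dispatch_root_queues[7] and a concurrent queue returns &_dispatch_root_queues[6]

Searching globally for _dispatch_root_queues[] (it is an array) shows that index 6 is com.apple.root.default-qos and index 7 is com.apple.root.default-qos.overcommit, the root queues targeted by concurrent and serial queues respectively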

Conjecture: a custom queue is created from the templates in _dispatch_root_queues
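The index arithmetic in _dispatch_get_root_queue can be checked in isolation. This is a small sketch, not the library's code: `root_queue_index` is a hypothetical helper, the two QoS constants are copied from the macros quoted above, and the bounds 1 and 6 are assumed values for DISPATCH_QOS_MIN and DISPATCH_QOS_MAX, which the article does not show.

```c
#include <assert.h>
#include <stdbool.h>

/* Values copied from the macros quoted in the article. */
#define QOS_UNSPECIFIED 0u
#define QOS_DEFAULT     4u
/* Assumed bounds for this sketch; DISPATCH_QOS_MIN / DISPATCH_QOS_MAX
 * are not shown in the article. */
#define QOS_MIN 1u
#define QOS_MAX 6u

/* Each QoS level owns two adjacent slots: plain, then overcommit. */
unsigned root_queue_index(unsigned qos, bool overcommit)
{
    if (qos == QOS_UNSPECIFIED) qos = QOS_DEFAULT;
    assert(qos >= QOS_MIN && qos <= QOS_MAX);
    return 2u * (qos - 1u) + (overcommit ? 1u : 0u);
}
```

With the default QoS of 4, the only two possible results are 6 and 7, and the overcommit flag alone decides between them.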

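4.2 Allocating memory with _dispatch_object_alloc

Most GCD objects are created through dispatch_object_t. Apple's source comment calls it the abstract base type for all dispatch objects. Because dispatch_object_t is a union, once it is created it can be used as whichever type you need (a union holds only one of its members at a time)

The union approach is a form of polymorphism, slightly different from the inheritance approach of objc_object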

/*!

* @typedef dispatch_object_t

*

* @abstract

* Abstract base type for all dispatch objects.

* The details of the type definition are language-specific.

*

* @discussion

* Dispatch objects are reference counted via calls to dispatch_retain() and

* dispatch_release().

*/

typedef union {

struct _os_object_s *_os_obj;

struct dispatch_object_s *_do;

struct dispatch_queue_s *_dq;

struct dispatch_queue_attr_s *_dqa;

struct dispatch_group_s *_dg;

struct dispatch_source_s *_ds;

struct dispatch_mach_s *_dm;

struct dispatch_mach_msg_s *_dmsg;

struct dispatch_semaphore_s *_dsema;

struct dispatch_data_s *_ddata;

struct dispatch_io_s *_dchannel;

} dispatch_object_t DISPATCH_TRANSPARENT_UNION;

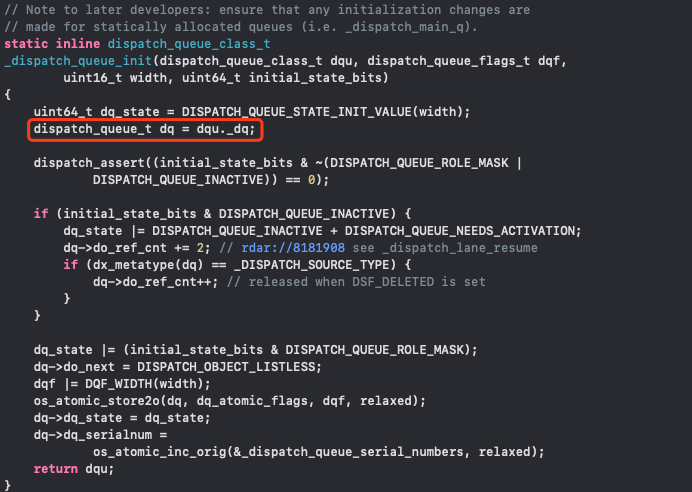
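To make the union-as-base-type idea concrete, here is a minimal sketch with made-up miniature types. `obj_header`, `my_queue`, `my_semaphore`, `my_object_t`, and `object_type` are all hypothetical; only the shape (a union of pointers to structs that share a common leading header) mirrors what dispatch_object_t does.

```c
#include <assert.h>

/* Hypothetical miniature types; only the layout idea mirrors libdispatch. */
struct obj_header   { int type_tag; };
struct my_queue     { struct obj_header hdr; int width; };
struct my_semaphore { struct obj_header hdr; long value; };

/* One pointer, viewable as any of the concrete types. */
typedef union {
    struct obj_header   *_do;
    struct my_queue     *_dq;
    struct my_semaphore *_dsema;
} my_object_t;

/* Works for any member because every struct begins with obj_header. */
int object_type(my_object_t obj)
{
    assert(obj._do != 0);
    return obj._do->type_tag;
}
```

A function that takes `my_object_t` can therefore accept a queue or a semaphore and still read the shared header, which is the kind of polymorphism the text describes.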

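4.3 Construction with _dispatch_queue_init

- As noted earlier, dqai.dqai_concurrent is set for a concurrent queue, so it is used to tell concurrent and serial queues apart

- A serial queue has a width of 1; a concurrent queue has a width of DISPATCH_QUEUE_WIDTH_MAX

- After a series of field assignments the function returns a dispatch_queue_class_t, i.e. the dqu argument after it has been initialized

4.4 Returning dispatch_queue_t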

_dispatch_retain(tq);

dq->do_targetq = tq;

_dispatch_object_debug(dq, "%s", __func__);

return _dispatch_trace_queue_create(dq)._dq;

- dq is of type dispatch_lane_t

- tq is of type dispatch_queue_t

- _dispatch_trace_queue_create(dq) returns a dispatch_queue_class_t, which is a union

- The _dq member of dispatch_queue_class_t is the dispatch_queue_t that is finally returned

typedef struct dispatch_queue_s *dispatch_queue_t;

typedef union {

struct dispatch_queue_s *_dq;

struct dispatch_workloop_s *_dwl;

struct dispatch_lane_s *_dl;

struct dispatch_queue_static_s *_dsq;

struct dispatch_queue_global_s *_dgq;

struct dispatch_queue_pthread_root_s *_dpq;

struct dispatch_source_s *_ds;

struct dispatch_mach_s *_dm;

dispatch_lane_class_t _dlu;

#ifdef __OBJC__

id<OS_dispatch_queue> _objc_dq;

#endif

} dispatch_queue_class_t DISPATCH_TRANSPARENT_UNION;

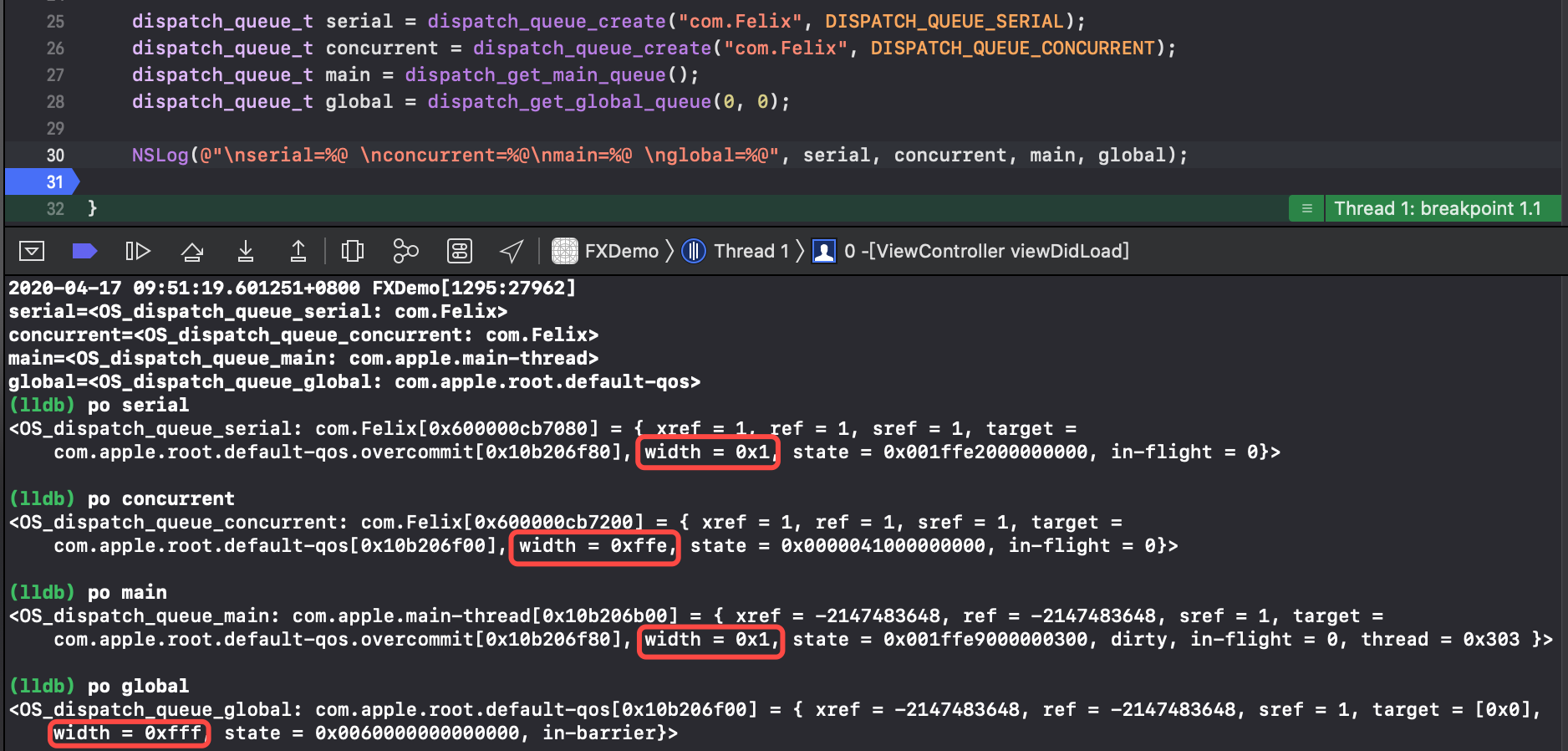

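5. Verifying the conjecture

NSLog calls the object's description method, while LLDB can print the underlying pointers

- The target of the serial queue and of the concurrent queue matches the corresponding template entry exactly

- Likewise, the serial queue, concurrent queue, main queue, and global queue have different widths (width is the maximum number of tasks the queue can schedule at once)

  - The width of the serial queue and the main queue is 1, as expected

  - The width of a concurrent queue is DISPATCH_QUEUE_WIDTH_MAX, which is the full value minus 2

  - The width of a global queue is DISPATCH_QUEUE_WIDTH_POOL, which is the full value minus 1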

#define DISPATCH_QUEUE_WIDTH_FULL_BIT 0x0020000000000000ull

#define DISPATCH_QUEUE_WIDTH_FULL 0x1000ull

#define DISPATCH_QUEUE_WIDTH_POOL (DISPATCH_QUEUE_WIDTH_FULL - 1)

#define DISPATCH_QUEUE_WIDTH_MAX (DISPATCH_QUEUE_WIDTH_FULL - 2)

struct dispatch_queue_static_s _dispatch_main_q = {

DISPATCH_GLOBAL_OBJECT_HEADER(queue_main),

#if !DISPATCH_USE_RESOLVERS

.do_targetq = _dispatch_get_default_queue(true),

#endif

.dq_state = DISPATCH_QUEUE_STATE_INIT_VALUE(1) |

DISPATCH_QUEUE_ROLE_BASE_ANON,

.dq_label = "com.apple.main-thread",

.dq_atomic_flags = DQF_THREAD_BOUND | DQF_WIDTH(1),

.dq_serialnum = 1,

};

struct dispatch_queue_global_s _dispatch_mgr_root_queue = {

DISPATCH_GLOBAL_OBJECT_HEADER(queue_global),

.dq_state = DISPATCH_ROOT_QUEUE_STATE_INIT_VALUE,

.do_ctxt = &_dispatch_mgr_root_queue_pthread_context,

.dq_label = "com.apple.root.libdispatch-manager",

.dq_atomic_flags = DQF_WIDTH(DISPATCH_QUEUE_WIDTH_POOL),

.dq_priority = DISPATCH_PRIORITY_FLAG_MANAGER |

DISPATCH_PRIORITY_SATURATED_OVERRIDE,

.dq_serialnum = 3,

.dgq_thread_pool_size = 1,

};
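The width constants are easy to evaluate by hand. The sketch below repeats the arithmetic of the three macros under shortened names; `custom_queue_width` is a hypothetical helper that restates the width choice made in _dispatch_lane_create_with_target.

```c
#include <assert.h>
#include <stdint.h>

/* Same arithmetic as the DISPATCH_QUEUE_WIDTH_* macros quoted above. */
#define WIDTH_FULL 0x1000ull
#define WIDTH_POOL (WIDTH_FULL - 1)   /* 0xfff: global (root) queues */
#define WIDTH_MAX  (WIDTH_FULL - 2)   /* 0xffe: custom concurrent queues */

/* Width chosen at queue creation: concurrent -> WIDTH_MAX, serial -> 1. */
uint64_t custom_queue_width(int concurrent)
{
    assert(concurrent == 0 || concurrent == 1);
    return concurrent ? WIDTH_MAX : 1;
}
```

So a custom concurrent queue reports a width of 0xffe and a global queue 0xfff, which are the values to look for when printing queues in LLDB.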

That settles how a custom queue is created, which raises the next question:

how is _dispatch_root_queues itself created?

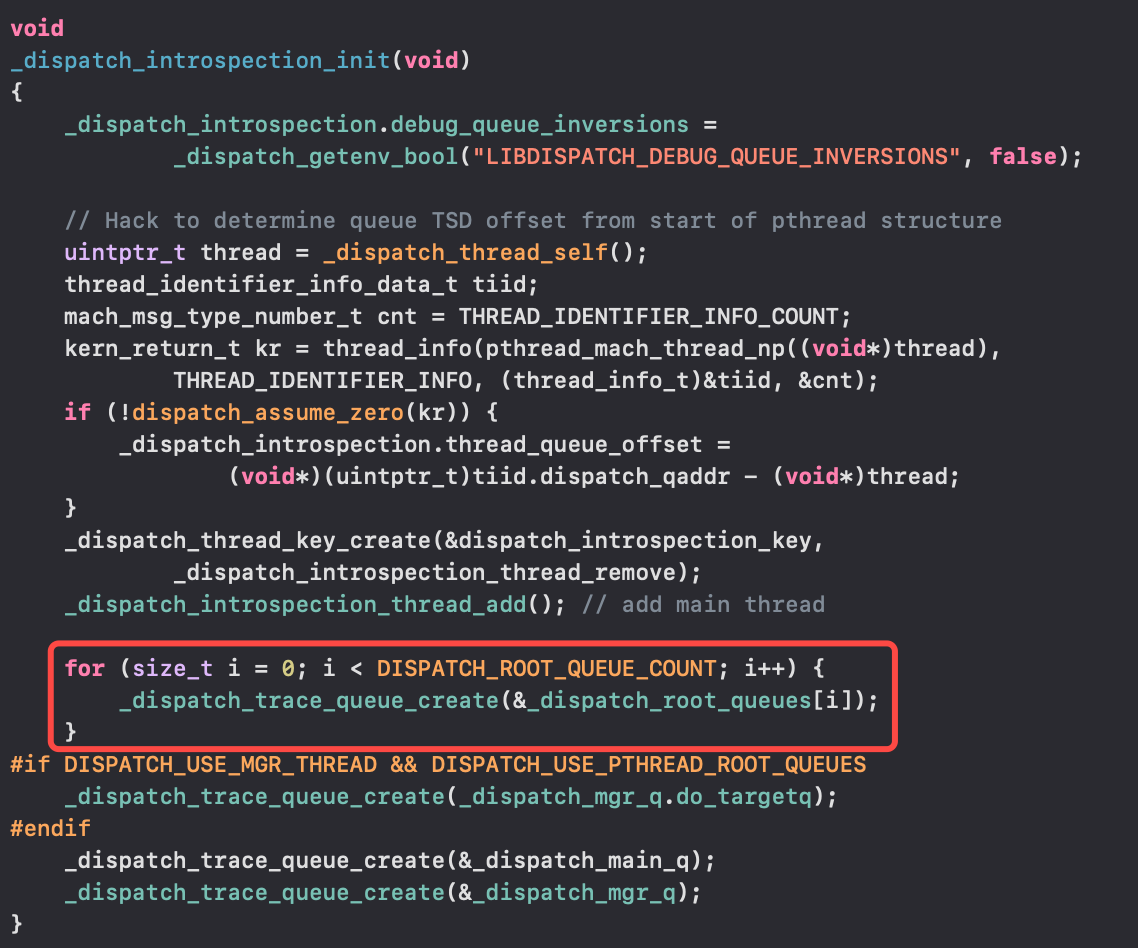

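6. Creation of _dispatch_root_queues

Apart from dispatch_get_main_queue, every queue is created via _dispatch_root_queues

After libdispatch_init, _dispatch_introspection_init is called. It runs a for loop that calls _dispatch_trace_queue_create on each address taken from _dispatch_root_queues, creating the entries one by one

7. The custom queue creation flow in one diagram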

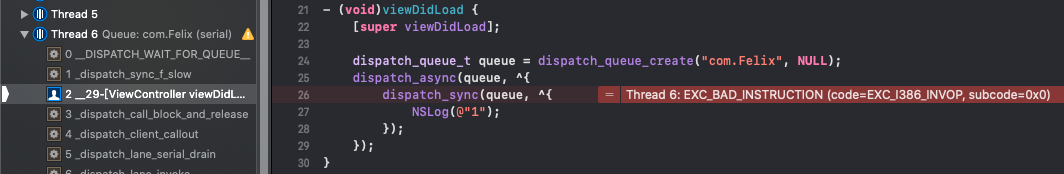

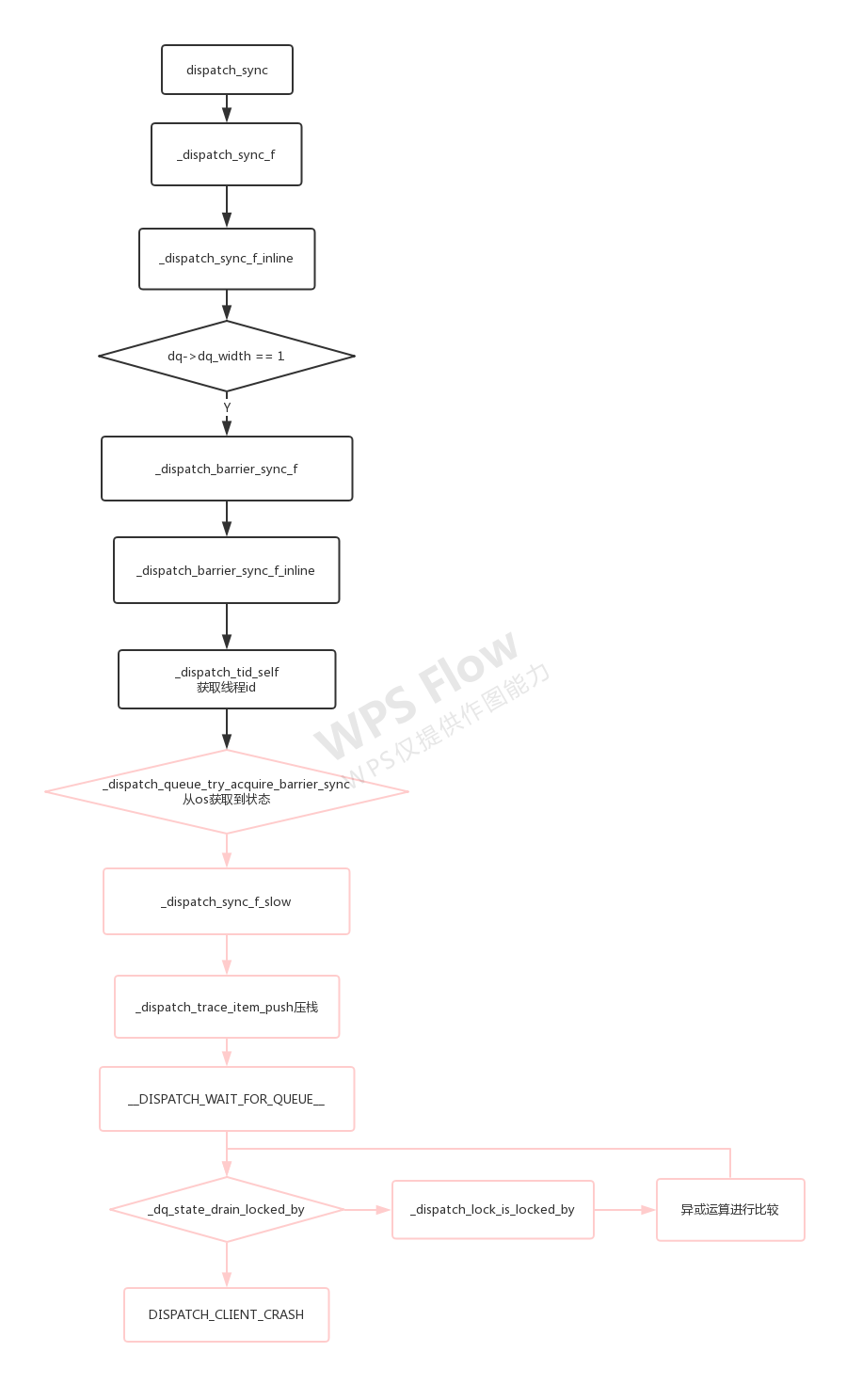

II. How a deadlock arises

A deadlock arises when tasks wait on each other. So how is this handled at the lower level?

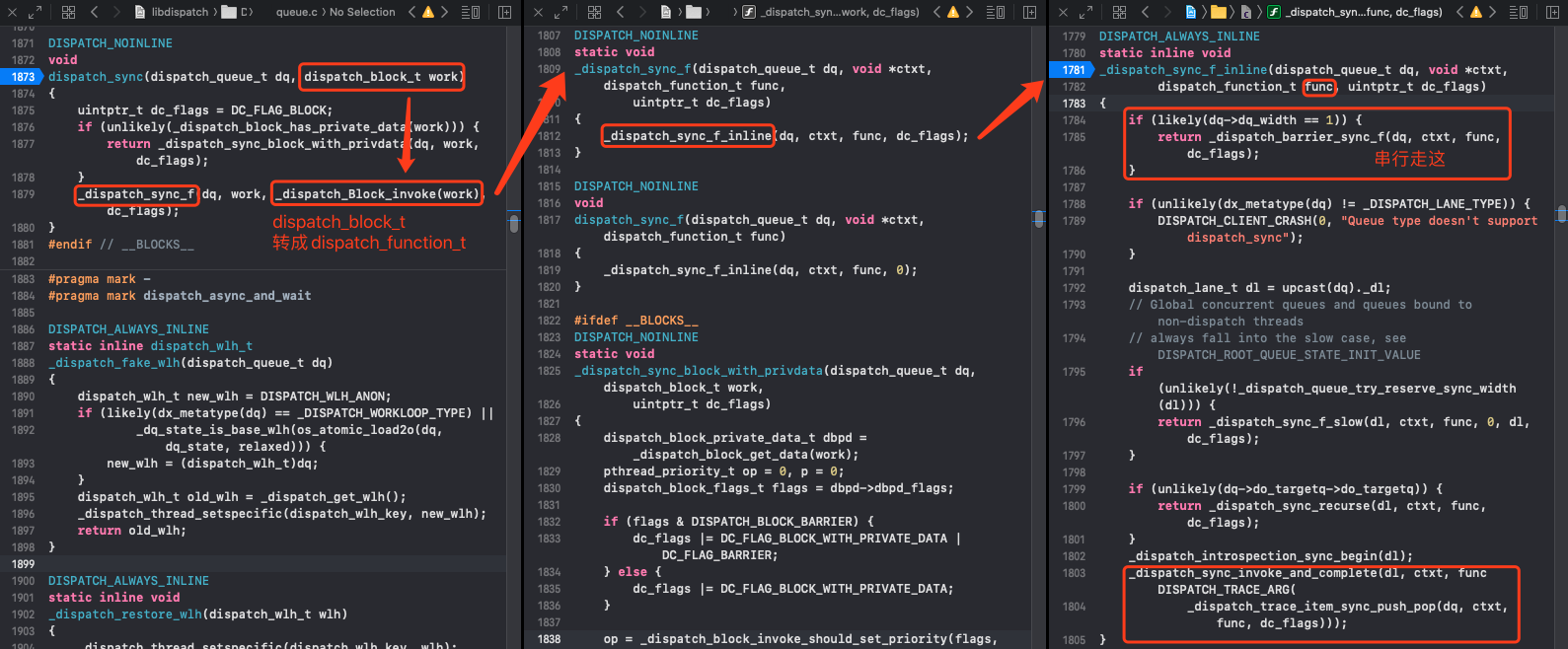

1.dispatch_sync

Search globally for dispatch_sync(dispatch and ignore the unlikely (low-probability) branches

DISPATCH_NOINLINE

void

dispatch_sync(dispatch_queue_t dq, dispatch_block_t work)

{

uintptr_t dc_flags = DC_FLAG_BLOCK;

if (unlikely(_dispatch_block_has_private_data(work))) {

return _dispatch_sync_block_with_privdata(dq, work, dc_flags);

}

_dispatch_sync_f(dq, work, _dispatch_Block_invoke(work), dc_flags);

}

#endif // __BLOCKS__

2._dispatch_sync_f

Again a conventional wrapper layer

DISPATCH_NOINLINE

static void

_dispatch_sync_f(dispatch_queue_t dq, void *ctxt, dispatch_function_t func,

uintptr_t dc_flags)

{

_dispatch_sync_f_inline(dq, ctxt, func, dc_flags);

}

3._dispatch_sync_f_inline

- A serial queue is known to have a width of 1, so it satisfies dq->dq_width == 1 and takes return _dispatch_barrier_sync_f(dq, ctxt, func, dc_flags);

- A concurrent queue continues further down

DISPATCH_ALWAYS_INLINE

static inline void

_dispatch_sync_f_inline(dispatch_queue_t dq, void *ctxt,

dispatch_function_t func, uintptr_t dc_flags)

{

if (likely(dq->dq_width == 1)) {

return _dispatch_barrier_sync_f(dq, ctxt, func, dc_flags);

}

if (unlikely(dx_metatype(dq) != _DISPATCH_LANE_TYPE)) {

DISPATCH_CLIENT_CRASH(0, "Queue type doesn't support dispatch_sync");

}

dispatch_lane_t dl = upcast(dq)._dl;

// Global concurrent queues and queues bound to non-dispatch threads

// always fall into the slow case, see DISPATCH_ROOT_QUEUE_STATE_INIT_VALUE

if (unlikely(!_dispatch_queue_try_reserve_sync_width(dl))) {

return _dispatch_sync_f_slow(dl, ctxt, func, 0, dl, dc_flags);

}

if (unlikely(dq->do_targetq->do_targetq)) {

return _dispatch_sync_recurse(dl, ctxt, func, dc_flags);

}

_dispatch_introspection_sync_begin(dl);

_dispatch_sync_invoke_and_complete(dl, ctxt, func DISPATCH_TRACE_ARG(

_dispatch_trace_item_sync_push_pop(dq, ctxt, func, dc_flags)));

}

4._dispatch_barrier_sync_f

A serial queue behaves much like a barrier, which is why execution jumps here. This is again a wrapper layer

DISPATCH_NOINLINE

static void

_dispatch_barrier_sync_f(dispatch_queue_t dq, void *ctxt,

dispatch_function_t func, uintptr_t dc_flags)

{

_dispatch_barrier_sync_f_inline(dq, ctxt, func, dc_flags);

}

5._dispatch_barrier_sync_f_inline

DISPATCH_ALWAYS_INLINE

static inline void

_dispatch_barrier_sync_f_inline(dispatch_queue_t dq, void *ctxt,

dispatch_function_t func, uintptr_t dc_flags)

{

dispatch_tid tid = _dispatch_tid_self();

if (unlikely(dx_metatype(dq) != _DISPATCH_LANE_TYPE)) {

DISPATCH_CLIENT_CRASH(0, "Queue type doesn't support dispatch_sync");

}

dispatch_lane_t dl = upcast(dq)._dl;

// The more correct thing to do would be to merge the qos of the thread

// that just acquired the barrier lock into the queue state.

//

// However this is too expensive for the fast path, so skip doing it.

// The chosen tradeoff is that if an enqueue on a lower priority thread

// contends with this fast path, this thread may receive a useless override.

//

// Global concurrent queues and queues bound to non-dispatch threads

// always fall into the slow case, see DISPATCH_ROOT_QUEUE_STATE_INIT_VALUE

// deadlock detection happens on this path

if (unlikely(!_dispatch_queue_try_acquire_barrier_sync(dl, tid))) {

return _dispatch_sync_f_slow(dl, ctxt, func, DC_FLAG_BARRIER, dl,

DC_FLAG_BARRIER | dc_flags);

}

if (unlikely(dl->do_targetq->do_targetq)) {

return _dispatch_sync_recurse(dl, ctxt, func,

DC_FLAG_BARRIER | dc_flags);

}

_dispatch_introspection_sync_begin(dl);

_dispatch_lane_barrier_sync_invoke_and_complete(dl, ctxt, func

DISPATCH_TRACE_ARG(_dispatch_trace_item_sync_push_pop(

dq, ctxt, func, dc_flags | DC_FLAG_BARRIER)));

}

5.1 _dispatch_tid_self

_dispatch_tid_self is a macro that ends up calling _dispatch_thread_getspecific to obtain the current thread's id (thread-specific data is stored as key-value pairs)

#define _dispatch_tid_self() ((dispatch_tid)_dispatch_thread_port())

#if TARGET_OS_WIN32

#define _dispatch_thread_port() ((mach_port_t)0)

#elif !DISPATCH_USE_THREAD_LOCAL_STORAGE

#if DISPATCH_USE_DIRECT_TSD

#define _dispatch_thread_port() ((mach_port_t)(uintptr_t)\

_dispatch_thread_getspecific(_PTHREAD_TSD_SLOT_MACH_THREAD_SELF))

#else

#define _dispatch_thread_port() pthread_mach_thread_np(_dispatch_thread_self())

#endif

#endif

Time to get to the real substance: the core analysis of the deadlock!

5.2 _dispatch_queue_try_acquire_barrier_sync

_dispatch_queue_try_acquire_barrier_sync atomically reads the queue's state and tries to update it

DISPATCH_ALWAYS_INLINE DISPATCH_WARN_RESULT

static inline bool

_dispatch_queue_try_acquire_barrier_sync(dispatch_queue_class_t dq, uint32_t tid)

{

return _dispatch_queue_try_acquire_barrier_sync_and_suspend(dq._dl, tid, 0);

}

DISPATCH_ALWAYS_INLINE DISPATCH_WARN_RESULT

static inline bool

_dispatch_queue_try_acquire_barrier_sync_and_suspend(dispatch_lane_t dq,

uint32_t tid, uint64_t suspend_count)

{

uint64_t init = DISPATCH_QUEUE_STATE_INIT_VALUE(dq->dq_width);

uint64_t value = DISPATCH_QUEUE_WIDTH_FULL_BIT | DISPATCH_QUEUE_IN_BARRIER |

_dispatch_lock_value_from_tid(tid) |

(suspend_count * DISPATCH_QUEUE_SUSPEND_INTERVAL);

uint64_t old_state, new_state;

// atomic read-modify-write on the state: queue state and owning thread

return os_atomic_rmw_loop2o(dq, dq_state, old_state, new_state, acquire, {

uint64_t role = old_state & DISPATCH_QUEUE_ROLE_MASK;

if (old_state != (init | role)) {

os_atomic_rmw_loop_give_up(break);

}

new_state = value | role;

});

}
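The rmw loop above boils down to: take ownership only if the state word still has its idle value, otherwise give up. A minimal sketch of that pattern with C11 atomics follows. `toy_queue`, `toy_try_acquire`, and `toy_release` are hypothetical, and the state word is simplified to "0 means idle, otherwise the owner's tid", which is far cruder than the real dq_state layout.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>
#include <stdint.h>

/* Toy state word: 0 means idle; otherwise it holds the owner's thread id. */
struct toy_queue { _Atomic uint64_t state; };

/* Succeeds only if the queue is idle, mirroring the
 * "old_state != init, so give up" test in the rmw loop. */
bool toy_try_acquire(struct toy_queue *q, uint32_t tid)
{
    assert(tid != 0);
    uint64_t expected = 0;
    return atomic_compare_exchange_strong_explicit(
        &q->state, &expected, (uint64_t)tid,
        memory_order_acquire, memory_order_relaxed);
}

/* Hand the queue back so that the next acquire can succeed. */
void toy_release(struct toy_queue *q)
{
    atomic_store_explicit(&q->state, 0, memory_order_release);
}
```

A second acquire attempt while the queue is still owned returns false, which corresponds to the case where dispatch_sync falls through to its slow path.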

5.3 _dispatch_sync_f_slow

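When the attempt in 5.2 fails, i.e. the queue state is not the expected idle value, execution arrives here (this function appears in the call stack of a deadlock crash)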

DISPATCH_NOINLINE

static void

_dispatch_sync_f_slow(dispatch_queue_class_t top_dqu, void *ctxt,

dispatch_function_t func, uintptr_t top_dc_flags,

dispatch_queue_class_t dqu, uintptr_t dc_flags)

{

dispatch_queue_t top_dq = top_dqu._dq;

dispatch_queue_t dq = dqu._dq;

if (unlikely(!dq->do_targetq)) {

return _dispatch_sync_function_invoke(dq, ctxt, func);

}

pthread_priority_t pp = _dispatch_get_priority();

struct dispatch_sync_context_s dsc = {

.dc_flags = DC_FLAG_SYNC_WAITER | dc_flags,

.dc_func = _dispatch_async_and_wait_invoke,

.dc_ctxt = &dsc,

.dc_other = top_dq,

.dc_priority = pp | _PTHREAD_PRIORITY_ENFORCE_FLAG,

.dc_voucher = _voucher_get(),

.dsc_func = func,

.dsc_ctxt = ctxt,

.dsc_waiter = _dispatch_tid_self(),

};

_dispatch_trace_item_push(top_dq, &dsc);

__DISPATCH_WAIT_FOR_QUEUE__(&dsc, dq);

if (dsc.dsc_func == NULL) {

dispatch_queue_t stop_dq = dsc.dc_other;

return _dispatch_sync_complete_recurse(top_dq, stop_dq, top_dc_flags);

}

_dispatch_introspection_sync_begin(top_dq);

_dispatch_trace_item_pop(top_dq, &dsc);

_dispatch_sync_invoke_and_complete_recurse(top_dq, ctxt, func,top_dc_flags

DISPATCH_TRACE_ARG(&dsc));

}

- _dispatch_trace_item_push is a push operation: it pushes the task onto the queue, to be executed in FIFO order

- __DISPATCH_WAIT_FOR_QUEUE__ is the last function in the crash stack

DISPATCH_NOINLINE

static void

__DISPATCH_WAIT_FOR_QUEUE__(dispatch_sync_context_t dsc, dispatch_queue_t dq)

{

// read the queue state to see whether it is in a waiting state

uint64_t dq_state = _dispatch_wait_prepare(dq);

if (unlikely(_dq_state_drain_locked_by(dq_state, dsc->dsc_waiter))) {

DISPATCH_CLIENT_CRASH((uintptr_t)dq_state,

"dispatch_sync called on queue "

"already owned by current thread");

}

...

}

5.4 _dq_state_drain_locked_by

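Compares the owner recorded in the queue state with the waiting thread's tid; if they match it returns YES and the caller reports the error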

DISPATCH_ALWAYS_INLINE

static inline bool

_dq_state_drain_locked_by(uint64_t dq_state, dispatch_tid tid)

{

return _dispatch_lock_is_locked_by((dispatch_lock)dq_state, tid);

}

DISPATCH_ALWAYS_INLINE

static inline bool

_dispatch_lock_is_locked_by(dispatch_lock lock_value, dispatch_tid tid)

{

// equivalent to _dispatch_lock_owner(lock_value) == tid

// ^ is XOR: bits that are equal yield 0, bits that differ yield 1

return ((lock_value ^ tid) & DLOCK_OWNER_MASK) == 0;

}

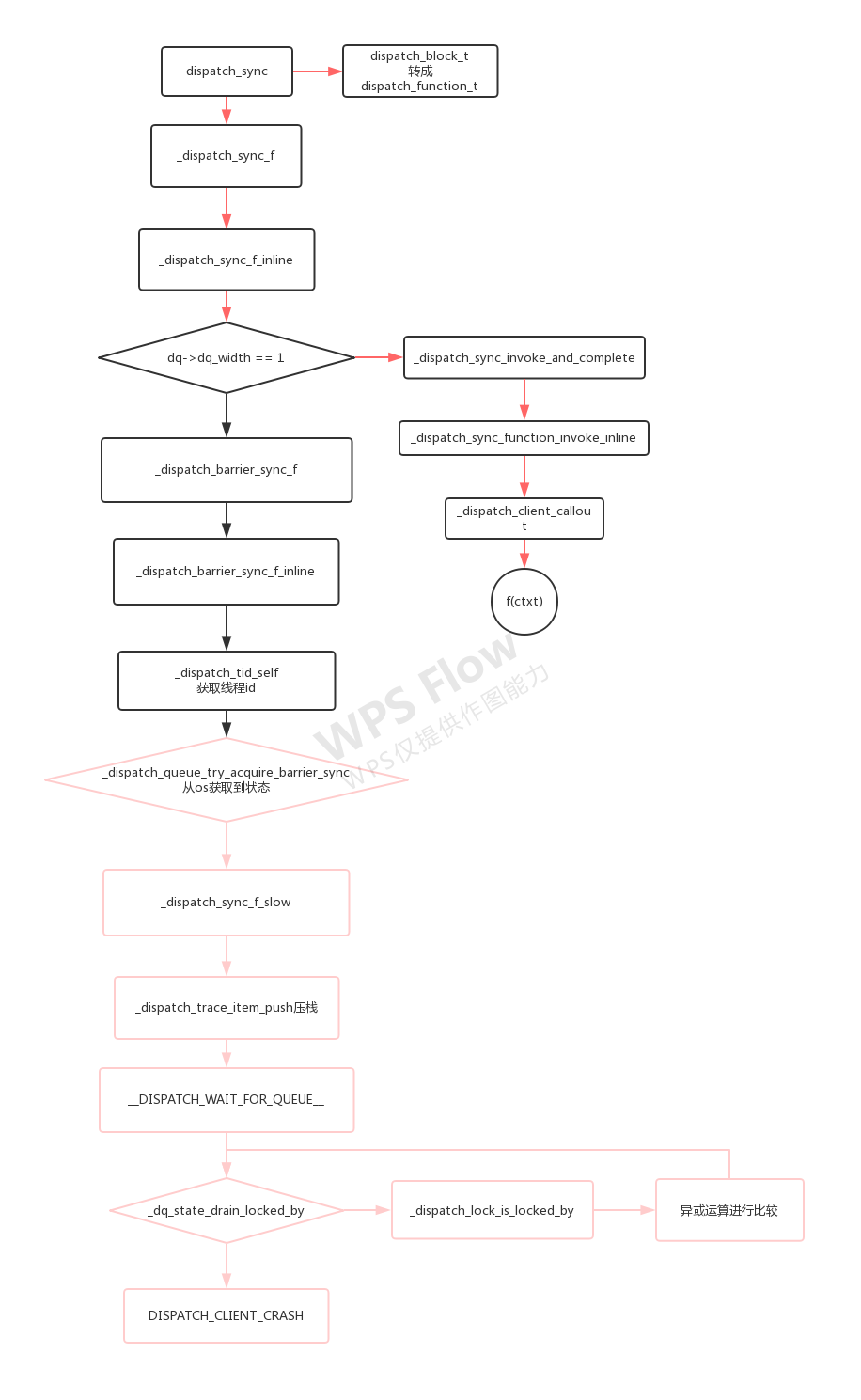
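The XOR-and-mask comparison can be tried out on plain integers. In this sketch `lock_is_locked_by` is a hypothetical copy of the check, and `OWNER_MASK` is an assumed value in which the low two bits are treated as flag bits; the real DLOCK_OWNER_MASK is platform-specific and is not shown in the article.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Assumed mask for this sketch: the low two bits are flag bits and the
 * rest identify the owner. The real DLOCK_OWNER_MASK is platform-specific. */
#define OWNER_MASK 0xfffffffcu

bool lock_is_locked_by(uint32_t lock_value, uint32_t tid)
{
    /* XOR is zero in every bit position where the operands agree, so the
     * masked result is zero exactly when the owner bits are identical. */
    return ((lock_value ^ tid) & OWNER_MASK) == 0;
}
```

When the thread that calls dispatch_sync is the one already recorded as the queue's owner, this check is true and the crash with "dispatch_sync called on queue already owned by current thread" follows.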

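6. The deadlock flow in one diagram

- A deadlock is detected by comparing the thread tid with the value derived from the current wait state

- A synchronous dispatch_sync pushes its task and executes in FIFO order

- Barrier functions and synchronous execution work in much the same way

III. Execution of the dispatch_block task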

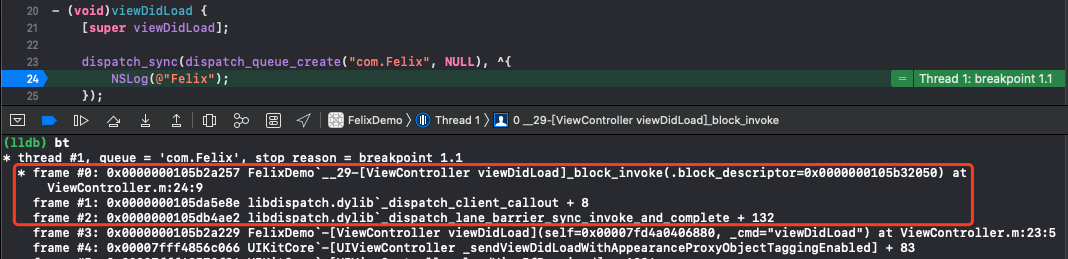

Set a breakpoint inside the dispatch_block and print the call stack in LLDB

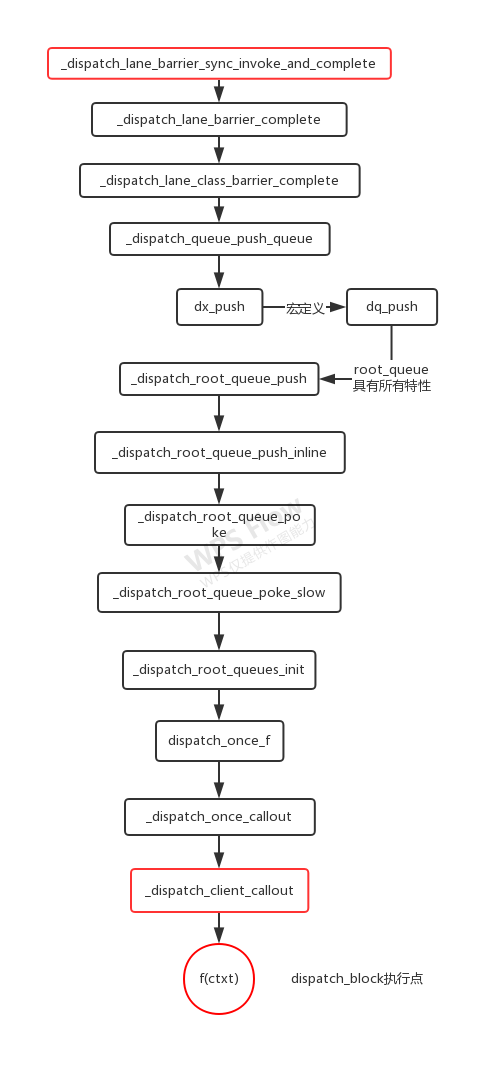

1._dispatch_lane_barrier_sync_invoke_and_complete

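Like the code above, this uses an OS-level atomic loop with a completion callback. Why a callback? Because task execution depends on the state of the thread: if the thread is not in a suitable state, the task will not run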

DISPATCH_NOINLINE

static void

_dispatch_lane_barrier_sync_invoke_and_complete(dispatch_lane_t dq,

void *ctxt, dispatch_function_t func DISPATCH_TRACE_ARG(void *dc))

{

...

// similar to _dispatch_queue_drain_try_unlock

os_atomic_rmw_loop2o(dq, dq_state, old_state, new_state, release, {

new_state = old_state - DISPATCH_QUEUE_SERIAL_DRAIN_OWNED;

new_state &= ~DISPATCH_QUEUE_DRAIN_UNLOCK_MASK;

new_state &= ~DISPATCH_QUEUE_MAX_QOS_MASK;

if (unlikely(old_state & fail_unlock_mask)) {

os_atomic_rmw_loop_give_up({

return _dispatch_lane_barrier_complete(dq, 0, 0);

});

}

});

if (_dq_state_is_base_wlh(old_state)) {

_dispatch_event_loop_assert_not_owned((dispatch_wlh_t)dq);

}

}

- _dispatch_lane_barrier_complete

Follow straight into _dispatch_lane_class_barrier_complete

DISPATCH_NOINLINE

static void

_dispatch_lane_barrier_complete(dispatch_lane_class_t dqu, dispatch_qos_t qos,

dispatch_wakeup_flags_t flags)

{

...

uint64_t owned = DISPATCH_QUEUE_IN_BARRIER +

dq->dq_width * DISPATCH_QUEUE_WIDTH_INTERVAL;

return _dispatch_lane_class_barrier_complete(dq, qos, flags, target, owned);

}

- _dispatch_lane_class_barrier_complete

Step into _dispatch_queue_push_queue

DISPATCH_NOINLINE

static void

_dispatch_lane_class_barrier_complete(dispatch_lane_t dq, dispatch_qos_t qos,

dispatch_wakeup_flags_t flags, dispatch_queue_wakeup_target_t target,

uint64_t owned)

{

...

if (tq) {

if (likely((old_state ^ new_state) & enqueue)) {

dispatch_assert(_dq_state_is_enqueued(new_state));

dispatch_assert(flags & DISPATCH_WAKEUP_CONSUME_2);

return _dispatch_queue_push_queue(tq, dq, new_state);

}

...

}

}

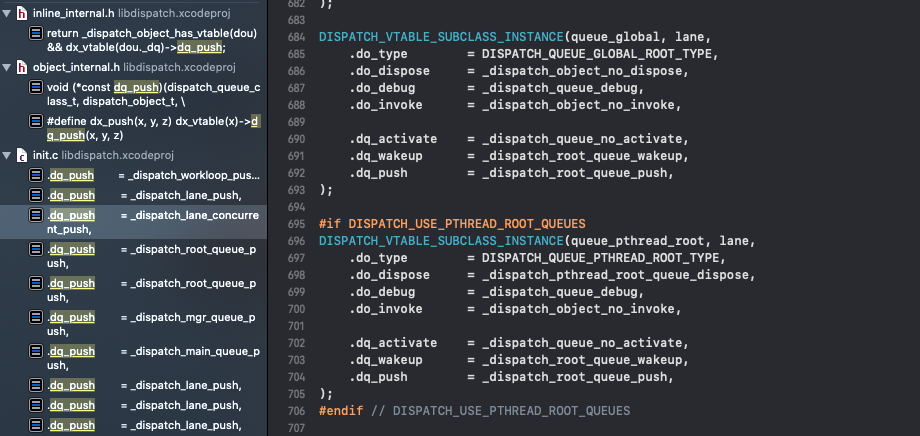

- _dispatch_queue_push_queue

The dx_push used inside it is a macro

#define dx_push(x, y, z) dx_vtable(x)->dq_push(x, y, z)

DISPATCH_ALWAYS_INLINE

static inline void

_dispatch_queue_push_queue(dispatch_queue_t tq, dispatch_queue_class_t dq,

uint64_t dq_state)

{

_dispatch_trace_item_push(tq, dq);

return dx_push(tq, dq, _dq_state_max_qos(dq_state));

}

- dq_push

A global search for dq_push yields several implementations; choose _dispatch_root_queue_push and continue

- _dispatch_root_queue_push

Most likely this goes to _dispatch_root_queue_push_inline

DISPATCH_NOINLINE

void

_dispatch_root_queue_push(dispatch_queue_global_t rq, dispatch_object_t dou,

dispatch_qos_t qos)

{

...

#if HAVE_PTHREAD_WORKQUEUE_QOS

if (_dispatch_root_queue_push_needs_override(rq, qos)) {

return _dispatch_root_queue_push_override(rq, dou, qos);

}

#else

(void)qos;

#endif

_dispatch_root_queue_push_inline(rq, dou, dou, 1);

}

- _dispatch_root_queue_push_inline

DISPATCH_ALWAYS_INLINE

static inline void

_dispatch_root_queue_push_inline(dispatch_queue_global_t dq,

dispatch_object_t _head, dispatch_object_t _tail, int n)

{

struct dispatch_object_s *hd = _head._do, *tl = _tail._do;

if (unlikely(os_mpsc_push_list(os_mpsc(dq, dq_items), hd, tl, do_next))) {

return _dispatch_root_queue_poke(dq, n, 0);

}

}

- _dispatch_root_queue_poke

DISPATCH_NOINLINE

void

_dispatch_root_queue_poke(dispatch_queue_global_t dq, int n, int floor)

{

...

return _dispatch_root_queue_poke_slow(dq, n, floor);

}

- _dispatch_root_queue_poke_slow

DISPATCH_NOINLINE

static void

_dispatch_root_queue_poke_slow(dispatch_queue_global_t dq, int n, int floor)

{

int remaining = n;

int r = ENOSYS;

_dispatch_root_queues_init();

_dispatch_debug_root_queue(dq, __func__);

_dispatch_trace_runtime_event(worker_request, dq, (uint64_t)n);

...

}

- _dispatch_root_queues_init

Follow it to the core function dispatch_once_f

static inline void

_dispatch_root_queues_init(void)

{

dispatch_once_f(&_dispatch_root_queues_pred, NULL,

_dispatch_root_queues_init_once);

}

- dispatch_once_f

Once you see the _dispatch_once_callout function, you are nearly there

DISPATCH_NOINLINE

void

dispatch_once_f(dispatch_once_t *val, void *ctxt, dispatch_function_t func)

{

// if this has already run once, it will not run again

dispatch_once_gate_t l = (dispatch_once_gate_t)val;

//DLOCK_ONCE_DONE

#if !DISPATCH_ONCE_INLINE_FASTPATH || DISPATCH_ONCE_USE_QUIESCENT_COUNTER

uintptr_t v = os_atomic_load(&l->dgo_once, acquire);

if (likely(v == DLOCK_ONCE_DONE)) {

return;

}

#if DISPATCH_ONCE_USE_QUIESCENT_COUNTER

if (likely(DISPATCH_ONCE_IS_GEN(v))) {

return _dispatch_once_mark_done_if_quiesced(l, v);

}

#endif

#endif

// condition met: try to enter

if (_dispatch_once_gate_tryenter(l)) {

// singleton callout -- v -> DLOCK_ONCE_DONE

return _dispatch_once_callout(l, ctxt, func);

}

return _dispatch_once_wait(l);

}

- _dispatch_once_callout

DISPATCH_NOINLINE

static void

_dispatch_once_callout(dispatch_once_gate_t l, void *ctxt,

dispatch_function_t func)

{

_dispatch_client_callout(ctxt, func);

_dispatch_once_gate_broadcast(l);

}
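The gate logic of dispatch_once_f (check for done, try to enter, run the callout, publish done, otherwise wait) can be sketched with C11 atomics. Everything named `toy_*` and the three `ONCE_*` values are hypothetical simplifications; in particular the real implementation parks waiting threads instead of spinning.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdint.h>

#define ONCE_UNINIT  ((uintptr_t)0)
#define ONCE_RUNNING ((uintptr_t)1)
#define ONCE_DONE    (~(uintptr_t)0)

typedef void (*once_fn)(void *);

void toy_once_f(_Atomic uintptr_t *gate, void *ctxt, once_fn func)
{
    assert(gate != 0 && func != 0);
    if (atomic_load_explicit(gate, memory_order_acquire) == ONCE_DONE) {
        return;                       /* fast path: already initialized */
    }
    uintptr_t expected = ONCE_UNINIT;
    if (atomic_compare_exchange_strong(gate, &expected, ONCE_RUNNING)) {
        func(ctxt);                   /* only the winner runs the callout */
        atomic_store_explicit(gate, ONCE_DONE, memory_order_release);
        return;
    }
    /* Another caller is running func: spin until it publishes DONE. */
    while (atomic_load_explicit(gate, memory_order_acquire) != ONCE_DONE) { }
}

/* Example callout: count how many times it has been invoked. */
void toy_increment(void *ctxt)
{
    ++*(int *)ctxt;
}
```

Calling `toy_once_f` twice with the same gate runs `toy_increment` a single time, which is the guarantee that makes the pattern usable for singletons.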

2._dispatch_client_callout

f(ctxt) invokes the dispatch_function_t: this is where the dispatch_block is executed

DISPATCH_NOINLINE

void

_dispatch_client_callout(void *ctxt, dispatch_function_t f)

{

_dispatch_get_tsd_base();

void *u = _dispatch_get_unwind_tsd();

if (likely(!u)) return f(ctxt);

_dispatch_set_unwind_tsd(NULL);

f(ctxt);

_dispatch_free_unwind_tsd();

_dispatch_set_unwind_tsd(u);

}
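Stripped of the unwind bookkeeping, the callout is just a function pointer applied to a context pointer. The sketch below shows that shape with hypothetical names (`toy_function_t`, `add_task`, `add_task_invoke`, `toy_client_callout`); the struct stands in for whatever state a block would capture.

```c
#include <assert.h>

typedef void (*toy_function_t)(void *);

/* Hypothetical stand-in for a captured block: the data a task closes over. */
struct add_task { int a, b, result; };

/* The "invoke" function: recovers the typed context and does the work. */
void add_task_invoke(void *ctxt)
{
    struct add_task *t = ctxt;
    t->result = t->a + t->b;
}

/* Same shape as _dispatch_client_callout(ctxt, f): it simply calls f(ctxt). */
void toy_client_callout(void *ctxt, toy_function_t f)
{
    assert(f != 0);
    f(ctxt);
}
```

Passing `&task` and `add_task_invoke` to `toy_client_callout` fills in `task.result`, the same way GCD pairs a block's context with its invoke function.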

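3. The task saving flow in one diagram

At long last we have found where the task is executed, but not where it is saved. For that we need to look at synchronous and asynchronous functions

IV. Synchronous functions

The implementation of dispatch_sync was already traced above; here is another diagram to go over it again (pay particular attention to how work and func are called)

- A serial queue takes the dq->dq_width == 1 branch

  - _dispatch_barrier_sync_f -> _dispatch_barrier_sync_f_inline -> _dispatch_lane_barrier_sync_invoke_and_complete

  - followed by the flow described in Part III (execution of the dispatch_block task)

- Other cases most likely go through _dispatch_sync_invoke_and_complete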

1._dispatch_sync_invoke_and_complete

Keeps func and passes it on to _dispatch_sync_function_invoke_inline

DISPATCH_NOINLINE

static void

_dispatch_sync_invoke_and_complete(dispatch_lane_t dq, void *ctxt,

dispatch_function_t func DISPATCH_TRACE_ARG(void *dc))

{

_dispatch_sync_function_invoke_inline(dq, ctxt, func);

_dispatch_trace_item_complete(dc);

_dispatch_lane_non_barrier_complete(dq, 0);

}

2._dispatch_sync_function_invoke_inline

Calls _dispatch_client_callout directly, which ties back to Part III (execution of the dispatch_block task)

DISPATCH_ALWAYS_INLINE

static inline void

_dispatch_sync_function_invoke_inline(dispatch_queue_class_t dq, void *ctxt,

dispatch_function_t func)

{

dispatch_thread_frame_s dtf;

_dispatch_thread_frame_push(&dtf, dq);

// f(ctxt) -- func(ctxt)

_dispatch_client_callout(ctxt, func);

_dispatch_perfmon_workitem_inc();

_dispatch_thread_frame_pop(&dtf);

}

3. Part of the synchronous function flow in one diagram

V. Asynchronous functions

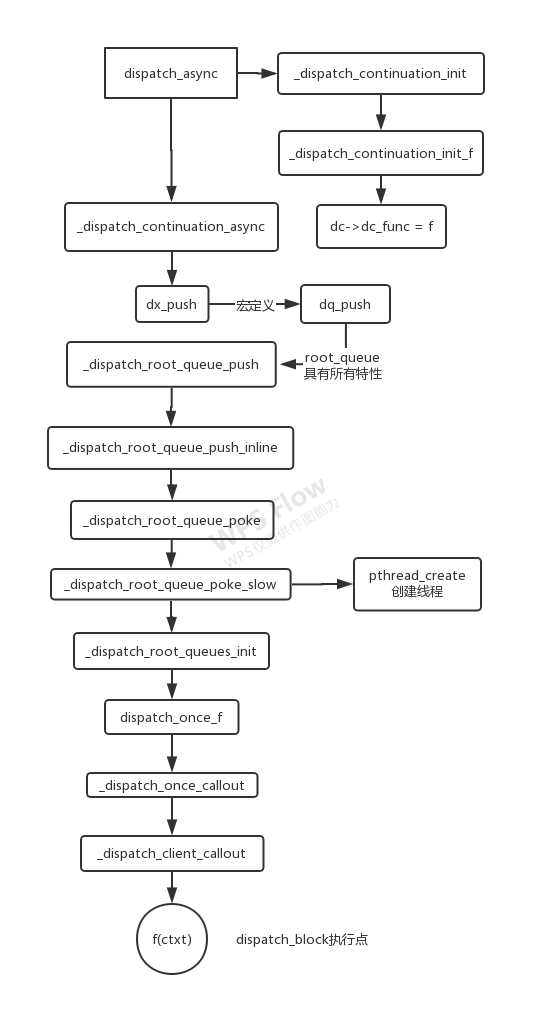

1. Saving the task

Same approach as before: step into the implementation of dispatch_async and keep an eye on dispatch_block_t

1.1 dispatch_async

void

dispatch_async(dispatch_queue_t dq, dispatch_block_t work)

{

dispatch_continuation_t dc = _dispatch_continuation_alloc();

uintptr_t dc_flags = DC_FLAG_CONSUME;

dispatch_qos_t qos;

qos = _dispatch_continuation_init(dc, dq, work, 0, dc_flags);

_dispatch_continuation_async(dq, dc, qos, dc->dc_flags);

}

1.2 _dispatch_continuation_init

_dispatch_Block_invoke normalizes the task into a uniform function form

DISPATCH_ALWAYS_INLINE

static inline dispatch_qos_t

_dispatch_continuation_init(dispatch_continuation_t dc,

dispatch_queue_class_t dqu, dispatch_block_t work,

dispatch_block_flags_t flags, uintptr_t dc_flags)

{

void *ctxt = _dispatch_Block_copy(work);

dc_flags |= DC_FLAG_BLOCK | DC_FLAG_ALLOCATED;

if (unlikely(_dispatch_block_has_private_data(work))) {

dc->dc_flags = dc_flags;

dc->dc_ctxt = ctxt;

// will initialize all fields but requires dc_flags & dc_ctxt to be set

return _dispatch_continuation_init_slow(dc, dqu, flags);

}

dispatch_function_t func = _dispatch_Block_invoke(work);

if (dc_flags & DC_FLAG_CONSUME) {

func = _dispatch_call_block_and_release;

}

return _dispatch_continuation_init_f(dc, dqu, ctxt, func, flags, dc_flags);

}

1.3 _dispatch_continuation_init_f

dc->dc_func = f saves the block task

DISPATCH_ALWAYS_INLINE

static inline dispatch_qos_t

_dispatch_continuation_init_f(dispatch_continuation_t dc,

dispatch_queue_class_t dqu, void *ctxt, dispatch_function_t f,

dispatch_block_flags_t flags, uintptr_t dc_flags)

{

pthread_priority_t pp = 0;

dc->dc_flags = dc_flags | DC_FLAG_ALLOCATED;

dc->dc_func = f;

dc->dc_ctxt = ctxt;

// in this context DISPATCH_BLOCK_HAS_PRIORITY means that the priority

// should not be propagated, only taken from the handler if it has one

if (!(flags & DISPATCH_BLOCK_HAS_PRIORITY)) {

pp = _dispatch_priority_propagate();

}

_dispatch_continuation_voucher_set(dc, flags);

return _dispatch_continuation_priority_set(dc, dqu, pp, flags);

}
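Saving a task now and running it later comes down to storing a function pointer and a context in a node and appending the node to a FIFO list. The sketch below illustrates only that idea: `toy_continuation`, `toy_fifo`, `toy_push`, `toy_drain`, and the two example tasks are hypothetical, and the list is single-threaded, unlike the lock-free list that libdispatch uses.

```c
#include <assert.h>
#include <stddef.h>

typedef void (*task_fn)(void *);

struct toy_continuation {
    task_fn dc_func;                 /* what to run */
    void *dc_ctxt;                   /* what to run it with */
    struct toy_continuation *next;
};

struct toy_fifo { struct toy_continuation *head, *tail; };

/* "Save" the task: record function and context, append to the tail. */
void toy_push(struct toy_fifo *q, struct toy_continuation *dc,
              task_fn f, void *ctxt)
{
    assert(q && dc && f);
    dc->dc_func = f;
    dc->dc_ctxt = ctxt;
    dc->next = NULL;
    if (q->tail) q->tail->next = dc; else q->head = dc;
    q->tail = dc;
}

/* "Execute" later: pop from the head and call dc_func(dc_ctxt). */
int toy_drain(struct toy_fifo *q)
{
    int ran = 0;
    while (q->head) {
        struct toy_continuation *dc = q->head;
        q->head = dc->next;
        if (!q->head) q->tail = NULL;
        dc->dc_func(dc->dc_ctxt);
        ran++;
    }
    return ran;
}

/* Example tasks: append a digit to the integer that ctxt points at. */
void toy_append_1(void *ctxt) { int *v = ctxt; *v = *v * 10 + 1; }
void toy_append_2(void *ctxt) { int *v = ctxt; *v = *v * 10 + 2; }
```

Nothing runs at push time; the tasks execute in insertion order only when `toy_drain` is called, which mirrors the split between saving a continuation and a worker thread invoking it later.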

We have found where an asynchronous function saves its task. So when is that task executed, and when is the thread created?

2. Creating the thread

2.1 _dispatch_continuation_async

DISPATCH_ALWAYS_INLINE

static inline void

_dispatch_continuation_async(dispatch_queue_class_t dqu,

dispatch_continuation_t dc, dispatch_qos_t qos, uintptr_t dc_flags)

{

#if DISPATCH_INTROSPECTION

if (!(dc_flags & DC_FLAG_NO_INTROSPECTION)) {

_dispatch_trace_item_push(dqu, dc);

}

#else

(void)dc_flags;

#endif

return dx_push(dqu._dq, dc, qos);

}

2.2 dx_push...

This was analyzed earlier. As for why _dispatch_root_queue_push is the implementation studied: it is the most basic one and avoids side branches

dx_push -> dq_push -> _dispatch_root_queue_push -> _dispatch_root_queue_push_inline -> _dispatch_root_queue_poke -> _dispatch_root_queue_poke_slow

2.3 _dispatch_root_queue_poke_slow

static void

_dispatch_root_queue_poke_slow(dispatch_queue_global_t dq, int n, int floor)

{

...

// floor is 0; remaining depends on the state of the queue's tasks

int can_request, t_count;

// read the thread pool size

t_count = os_atomic_load2o(dq, dgq_thread_pool_size, ordered);

do {

// compute how many threads can be requested

can_request = t_count < floor ? 0 : t_count - floor;

if (remaining > can_request) {

_dispatch_root_queue_debug("pthread pool reducing request from %d to %d",

remaining, can_request);

os_atomic_sub2o(dq, dgq_pending, remaining - can_request, relaxed);

remaining = can_request;

}

if (remaining == 0) {

// the thread pool is full: log a debug message and return

_dispatch_root_queue_debug("pthread pool is full for root queue: "

"%p", dq);

return;

}

} while (!os_atomic_cmpxchgvw2o(dq, dgq_thread_pool_size, t_count,

t_count - remaining, &t_count, acquire));

pthread_attr_t *attr = &pqc->dpq_thread_attr;

pthread_t tid, *pthr = &tid;

#if DISPATCH_USE_MGR_THREAD && DISPATCH_USE_PTHREAD_ROOT_QUEUES

if (unlikely(dq == &_dispatch_mgr_root_queue)) {

pthr = _dispatch_mgr_root_queue_init();

}

#endif

do {

_dispatch_retain(dq);

// create the thread

while ((r = pthread_create(pthr, attr, _dispatch_worker_thread, dq))) {

if (r != EAGAIN) {

(void)dispatch_assume_zero(r);

}

_dispatch_temporary_resource_shortage();

}

} while (--remaining);

#else

(void)floor;

#endif // DISPATCH_USE_PTHREAD_POOL

}

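- The first do-while checks and updates the number of threads still available in the pool

- The second do-while calls pthread_create to create the threads (pthread is still what is used underneath)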
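The arithmetic of the first do-while can be isolated from the atomics. In this sketch `toy_reserve_threads` is a hypothetical single-threaded helper: `pool_size` plays the role of dgq_thread_pool_size, and the compare-and-swap retry of the real loop is deliberately left out.

```c
#include <assert.h>

/* Returns how many threads may actually be requested, and debits the pool.
 * Single-threaded sketch: the compare-and-swap retry loop is omitted. */
int toy_reserve_threads(int *pool_size, int wanted, int floor)
{
    assert(pool_size != 0 && wanted >= 0 && floor >= 0);
    int t_count = *pool_size;
    /* Threads below the floor are not available to this request. */
    int can_request = t_count < floor ? 0 : t_count - floor;
    /* Clamp the request to what the pool can still provide. */
    int remaining = wanted > can_request ? can_request : wanted;
    *pool_size = t_count - remaining;
    return remaining;   /* 0 means the pool is exhausted */
}
```

Each successful reservation shrinks the pool, and a result of 0 corresponds to the "pthread pool is full" early return, after which no pthread_create call is made.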

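3. Executing the task

Task execution has in fact already been covered:

_dispatch_root_queues_init -> dispatch_once_f -> _dispatch_once_callout -> _dispatch_client_callout

The task simply waits for a thread to become available; how the thread then picks up and runs the task is not traced here

4. The asynchronous function flow in one diagram

VI. How semaphores work

The basic usage of a semaphore looks like this. How does it work underneath?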

dispatch_semaphore_t sem = dispatch_semaphore_create(0);

dispatch_semaphore_wait(sem, DISPATCH_TIME_FOREVER);

dispatch_semaphore_signal(sem);

1.dispatch_semaphore_create

This only initializes the dispatch_semaphore_t and stores the value passed in (value must not be negative)

dispatch_semaphore_t

dispatch_semaphore_create(long value)

{

dispatch_semaphore_t dsema;

// If the internal value is negative, then the absolute of the value is

// equal to the number of waiting threads. Therefore it is bogus to

// initialize the semaphore with a negative value.

if (value < 0) {

return DISPATCH_BAD_INPUT;

}

dsema = _dispatch_object_alloc(DISPATCH_VTABLE(semaphore),

sizeof(struct dispatch_semaphore_s));

dsema->do_next = DISPATCH_OBJECT_LISTLESS;

dsema->do_targetq = _dispatch_get_default_queue(false);

dsema->dsema_value = value;

_dispatch_sema4_init(&dsema->dsema_sema, _DSEMA4_POLICY_FIFO);

dsema->dsema_orig = value;

return dsema;

}

2.dispatch_semaphore_signal

Obtains the semaphore's current value from the underlying object through an atomic increment; note that this function has a return value

long

dispatch_semaphore_signal(dispatch_semaphore_t dsema)

{

long value = os_atomic_inc2o(dsema, dsema_value, release);

if (likely(value > 0)) {

return 0;

}

if (unlikely(value == LONG_MIN)) {

DISPATCH_CLIENT_CRASH(value,

"Unbalanced call to dispatch_semaphore_signal()");

}

return _dispatch_semaphore_signal_slow(dsema);

}

DISPATCH_NOINLINE

long

_dispatch_semaphore_signal_slow(dispatch_semaphore_t dsema)

{

_dispatch_sema4_create(&dsema->dsema_sema, _DSEMA4_POLICY_FIFO);

_dispatch_sema4_signal(&dsema->dsema_sema, 1);

return 1;

}

The key point is that os_atomic_inc2o performs a ++ operation

#define os_atomic_inc2o(p, f, m) \

os_atomic_add2o(p, f, 1, m)

#define os_atomic_add2o(p, f, v, m) \

os_atomic_add(&(p)->f, (v), m)

#define os_atomic_add(p, v, m) \

_os_atomic_c11_op((p), (v), m, add, +)

3.dispatch_semaphore_wait

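Likewise, dispatch_semaphore_wait reads the value and returns the corresponding result

- If value >= 0 it returns immediately

- If value < 0 it acts according to the timeout

  - DISPATCH_TIME_NOW: adds one to value (that is, it becomes 0) to cancel the decrement done at the start of wait, and returns KERN_OPERATION_TIMED_OUT to indicate that the wait timed out

  - DISPATCH_TIME_FOREVER: calls the system semaphore_wait to keep waiting until a signal arrives

  - default: similar to DISPATCH_TIME_FOREVER, except that a wait time is specified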

long

dispatch_semaphore_wait(dispatch_semaphore_t dsema, dispatch_time_t timeout)

{

long value = os_atomic_dec2o(dsema, dsema_value, acquire);

if (likely(value >= 0)) {

return 0;

}

return _dispatch_semaphore_wait_slow(dsema, timeout);

}

DISPATCH_NOINLINE

static long

_dispatch_semaphore_wait_slow(dispatch_semaphore_t dsema,

dispatch_time_t timeout)

{

long orig;

_dispatch_sema4_create(&dsema->dsema_sema, _DSEMA4_POLICY_FIFO);

switch (timeout) {

default:

if (!_dispatch_sema4_timedwait(&dsema->dsema_sema, timeout)) {

break;

}

// Fall through and try to undo what the fast path did to

// dsema->dsema_value

case DISPATCH_TIME_NOW:

orig = dsema->dsema_value;

while (orig < 0) {

if (os_atomic_cmpxchgvw2o(dsema, dsema_value, orig, orig + 1,

&orig, relaxed)) {

return _DSEMA4_TIMEOUT();

}

}

// Another thread called semaphore_signal().

// Fall through and drain the wakeup.

case DISPATCH_TIME_FOREVER:

_dispatch_sema4_wait(&dsema->dsema_sema);

break;

}

return 0;

}

os_atomic_dec2o performs a -- operation

#define os_atomic_dec2o(p, f, m) \

os_atomic_sub2o(p, f, 1, m)

#define os_atomic_sub2o(p, f, v, m) \

os_atomic_sub(&(p)->f, (v), m)

#define os_atomic_sub(p, v, m) \

_os_atomic_c11_op((p), (v), m, sub, -)
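The fast paths of wait and signal are a single atomic decrement or increment followed by a sign test. The sketch below models just that counter with C11 atomics. `toy_sema` and the two functions are hypothetical, and instead of blocking or waking anything they only report whether the slow path would be taken.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>

struct toy_sema { atomic_long value; };

/* Decrement, then test the new value. Returns true if the caller would
 * have to block, i.e. take the slow path. */
bool toy_sema_wait_would_block(struct toy_sema *s)
{
    assert(s != 0);
    long v = atomic_fetch_sub_explicit(&s->value, 1, memory_order_acquire) - 1;
    return v < 0;
}

/* Increment, then test the new value. Returns true if a waiter would
 * have to be woken, i.e. take the slow path. */
bool toy_sema_signal_would_wake(struct toy_sema *s)
{
    assert(s != 0);
    long v = atomic_fetch_add_explicit(&s->value, 1, memory_order_release) + 1;
    return v <= 0;
}
```

Starting from a value of 1, the first wait passes straight through, the second would block, and the following signal is the one that would wake it. A negative counter therefore encodes the number of waiters, as the comment in dispatch_semaphore_create says.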

VII. How dispatch groups work

The basic usage of a dispatch group is as follows

dispatch_group_t group = dispatch_group_create();

dispatch_group_enter(group);

dispatch_group_leave(group);

dispatch_group_async(group, queue, ^{});

dispatch_group_notify(group, queue, ^{});

1.dispatch_group_create

Like other GCD objects, dispatch_group_t is produced by _dispatch_object_alloc

The os_atomic_store2o call shows that a group also maintains a value underneath

dispatch_group_t

dispatch_group_create(void)

{

return _dispatch_group_create_with_count(0);

}

DISPATCH_ALWAYS_INLINE

static inline dispatch_group_t

_dispatch_group_create_with_count(uint32_t n)

{

dispatch_group_t dg = _dispatch_object_alloc(DISPATCH_VTABLE(group),

sizeof(struct dispatch_group_s));

dg->do_next = DISPATCH_OBJECT_LISTLESS;

dg->do_targetq = _dispatch_get_default_queue(false);

if (n) {

os_atomic_store2o(dg, dg_bits,

-n * DISPATCH_GROUP_VALUE_INTERVAL, relaxed);

os_atomic_store2o(dg, do_ref_cnt, 1, relaxed); // <rdar://22318411>

}

return dg;

}

2.dispatch_group_enter & dispatch_group_leave

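These two APIs work much like a semaphore: os_atomic_sub_orig2o and os_atomic_add_orig2o do the -- and ++ respectively. They must be used in pairs: an extra leave crashes with "Unbalanced call to dispatch_group_leave()", and a missing leave means the group never becomes empty

- dispatch_group_leave updates state when leaving the group

- once every enter has been matched by a leave, _dispatch_group_wake is called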

void

dispatch_group_enter(dispatch_group_t dg)

{

// The value is decremented on a 32bits wide atomic so that the carry

// for the 0 -> -1 transition is not propagated to the upper 32bits.

uint32_t old_bits = os_atomic_sub_orig2o(dg, dg_bits,

DISPATCH_GROUP_VALUE_INTERVAL, acquire);

uint32_t old_value = old_bits & DISPATCH_GROUP_VALUE_MASK;

if (unlikely(old_value == 0)) {

_dispatch_retain(dg); // <rdar://problem/22318411>

}

if (unlikely(old_value == DISPATCH_GROUP_VALUE_MAX)) {

DISPATCH_CLIENT_CRASH(old_bits,

"Too many nested calls to dispatch_group_enter()");

}

}

void

dispatch_group_leave(dispatch_group_t dg)

{

// The value is incremented on a 64bits wide atomic so that the carry for

// the -1 -> 0 transition increments the generation atomically.

uint64_t new_state, old_state = os_atomic_add_orig2o(dg, dg_state,

DISPATCH_GROUP_VALUE_INTERVAL, release);

uint32_t old_value = (uint32_t)(old_state & DISPATCH_GROUP_VALUE_MASK);

if (unlikely(old_value == DISPATCH_GROUP_VALUE_1)) {

old_state += DISPATCH_GROUP_VALUE_INTERVAL;

do {

new_state = old_state;

if ((old_state & DISPATCH_GROUP_VALUE_MASK) == 0) {

new_state &= ~DISPATCH_GROUP_HAS_WAITERS;

new_state &= ~DISPATCH_GROUP_HAS_NOTIFS;

} else {

// If the group was entered again since the atomic_add above,

// we can't clear the waiters bit anymore as we don't know for

// which generation the waiters are for

new_state &= ~DISPATCH_GROUP_HAS_NOTIFS;

}

if (old_state == new_state) break;

} while (unlikely(!os_atomic_cmpxchgv2o(dg, dg_state,

old_state, new_state, &old_state, relaxed)));

return _dispatch_group_wake(dg, old_state, true);

}

if (unlikely(old_value == 0)) {

DISPATCH_CLIENT_CRASH((uintptr_t)old_value,

"Unbalanced call to dispatch_group_leave()");

}

}

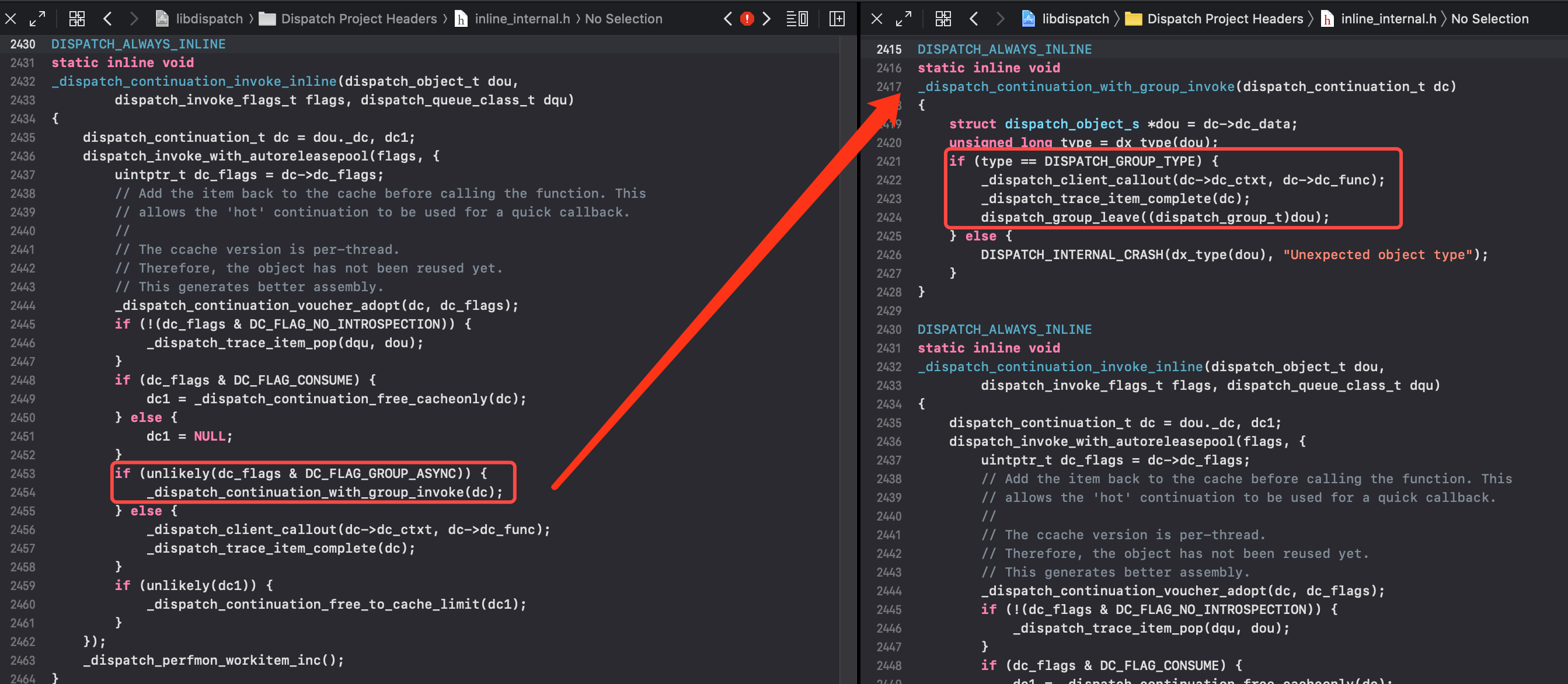

3.dispatch_group_async

- _dispatch_continuation_init_f stores the task (just as the async function does)

- then _dispatch_continuation_group_async is called

void

dispatch_group_async_f(dispatch_group_t dg, dispatch_queue_t dq, void *ctxt,

dispatch_function_t func)

{

dispatch_continuation_t dc = _dispatch_continuation_alloc();

uintptr_t dc_flags = DC_FLAG_CONSUME | DC_FLAG_GROUP_ASYNC;

dispatch_qos_t qos;

qos = _dispatch_continuation_init_f(dc, dq, ctxt, func, 0, dc_flags);

_dispatch_continuation_group_async(dg, dq, dc, qos);

}

_dispatch_continuation_group_async calls dispatch_group_enter to enter the group

static inline void

_dispatch_continuation_group_async(dispatch_group_t dg, dispatch_queue_t dq,

dispatch_continuation_t dc, dispatch_qos_t qos)

{

dispatch_group_enter(dg);

dc->dc_data = dg;

_dispatch_continuation_async(dq, dc, qos, dc->dc_flags);

}

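Every enter needs a matching leave, so the group is left once the task has finished running

In _dispatch_continuation_invoke_inline, a continuation submitted through a group is handed to _dispatch_continuation_with_group_invoke, which leaves the group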

4.dispatch_group_wait

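dispatch_group_wait also works much like its semaphore counterpart

The group keeps a value in its state, which starts at 0 when dispatch_group_create creates the group

- if the current value equals that initial value (0), every task has finished and 0 is returned right away

- if timeout is 0, it also returns right away, with a timeout result; otherwise _dispatch_group_wait_slow is called

  - _dispatch_group_wait_slow waits until the tasks finish and then returns 0

  - if they do not finish within timeout, it returns a timeout result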

long

dispatch_group_wait(dispatch_group_t dg, dispatch_time_t timeout)

{

uint64_t old_state, new_state;

os_atomic_rmw_loop2o(dg, dg_state, old_state, new_state, relaxed, {

if ((old_state & DISPATCH_GROUP_VALUE_MASK) == 0) {

os_atomic_rmw_loop_give_up_with_fence(acquire, return 0);

}

if (unlikely(timeout == 0)) {

os_atomic_rmw_loop_give_up(return _DSEMA4_TIMEOUT());

}

new_state = old_state | DISPATCH_GROUP_HAS_WAITERS;

if (unlikely(old_state & DISPATCH_GROUP_HAS_WAITERS)) {

os_atomic_rmw_loop_give_up(break);

}

});

return _dispatch_group_wait_slow(dg, _dg_state_gen(new_state), timeout);

}

5.dispatch_group_notify

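dispatch_group_notify appends the callback to the group's notify list and waits for _dispatch_group_wake to fire it (which happens once every enter has been matched by a leave); if the group is already empty, _dispatch_group_wake is called right away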

DISPATCH_ALWAYS_INLINE

static inline void

_dispatch_group_notify(dispatch_group_t dg, dispatch_queue_t dq,

dispatch_continuation_t dsn)

{

uint64_t old_state, new_state;

dispatch_continuation_t prev;

dsn->dc_data = dq;

_dispatch_retain(dq);

prev = os_mpsc_push_update_tail(os_mpsc(dg, dg_notify), dsn, do_next);

if (os_mpsc_push_was_empty(prev)) _dispatch_retain(dg);

os_mpsc_push_update_prev(os_mpsc(dg, dg_notify), prev, dsn, do_next);

if (os_mpsc_push_was_empty(prev)) {

os_atomic_rmw_loop2o(dg, dg_state, old_state, new_state, release, {

new_state = old_state | DISPATCH_GROUP_HAS_NOTIFS;

if ((uint32_t)old_state == 0) {

os_atomic_rmw_loop_give_up({

return _dispatch_group_wake(dg, new_state, false);

});

}

});

}

}

static void

_dispatch_group_wake(dispatch_group_t dg, uint64_t dg_state, bool needs_release)

{

uint16_t refs = needs_release ? 1 : 0; // <rdar://problem/22318411>

if (dg_state & DISPATCH_GROUP_HAS_NOTIFS) {

dispatch_continuation_t dc, next_dc, tail;

// Snapshot before anything is notified/woken

dc = os_mpsc_capture_snapshot(os_mpsc(dg, dg_notify), &tail);

do {

dispatch_queue_t dsn_queue = (dispatch_queue_t)dc->dc_data;

next_dc = os_mpsc_pop_snapshot_head(dc, tail, do_next);

_dispatch_continuation_async(dsn_queue, dc,

_dispatch_qos_from_pp(dc->dc_priority), dc->dc_flags);

_dispatch_release(dsn_queue);

} while ((dc = next_dc));

refs++;

}

if (dg_state & DISPATCH_GROUP_HAS_WAITERS) {

_dispatch_wake_by_address(&dg->dg_gen);

}

if (refs) _dispatch_release_n(dg, refs);

}

VIII. How the singleton (dispatch_once) works

#define DLOCK_ONCE_UNLOCKED ((uintptr_t)0)

void

dispatch_once_f(dispatch_once_t *val, void *ctxt, dispatch_function_t func)

{

dispatch_once_gate_t l = (dispatch_once_gate_t)val;

#if !DISPATCH_ONCE_INLINE_FASTPATH || DISPATCH_ONCE_USE_QUIESCENT_COUNTER

uintptr_t v = os_atomic_load(&l->dgo_once, acquire);

if (likely(v == DLOCK_ONCE_DONE)) {

return;

}

#if DISPATCH_ONCE_USE_QUIESCENT_COUNTER

if (likely(DISPATCH_ONCE_IS_GEN(v))) {

return _dispatch_once_mark_done_if_quiesced(l, v);

}

#endif

#endif

if (_dispatch_once_gate_tryenter(l)) {

return _dispatch_once_callout(l, ctxt, func);

}

return _dispatch_once_wait(l);

}

DISPATCH_ALWAYS_INLINE

static inline bool

_dispatch_once_gate_tryenter(dispatch_once_gate_t l)

{

return os_atomic_cmpxchg(&l->dgo_once, DLOCK_ONCE_UNLOCKED,

(uintptr_t)_dispatch_lock_value_for_self(), relaxed);

}

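- On the first call, the onceToken passed in from outside is 0, so l->dgo_once (l is val cast to a once gate) equals DLOCK_ONCE_UNLOCKED

  - _dispatch_once_gate_tryenter(l) checks whether l->dgo_once is still DLOCK_ONCE_UNLOCKED (that is, whether nothing has been stored yet); since DLOCK_ONCE_UNLOCKED = 0 the check passes and the block is called back

  - _dispatch_once_gate_broadcast then marks l->dgo_once as DLOCK_ONCE_DONE

- On the second call it returns right away, which guarantees the code runs only once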

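Closing notes

As for why dispatch_barrier_async has no effect on a global queue (a question left over from the previous article), see 深入浅出 GCD 之 dispatch_queue

The GCD source really is hard going. My understanding is limited, so if you spot any mistakes, please point them out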