前言

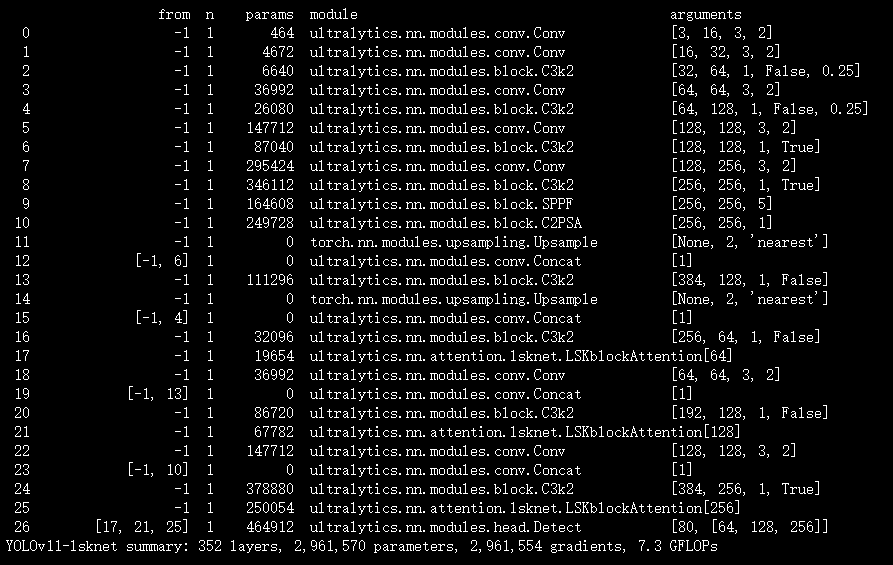

本文介绍了大型选择性核网络(LSKNet)及其在YOLOv11中的结合应用。LSKNet考虑遥感场景先验知识,能够动态调整大空间接收场,以模拟各种对象的范围上下文。它引入LSKblock Attention注意力机制,通过空间选择性机制动态调整感受野。其结构包含LSK module和LSK Block,通过大核卷积序列和空间选择机制,有效捕获长距离上下文信息。我们将LSKblockAttention模块集成进YOLOv11,替换部分原有模块。实验表明,LSKNet在多个检测基准测试中取得新的最优成绩,展现了其在目标检测领域的卓越性能。

文章目录: YOLOv11改进大全:卷积层、轻量化、注意力机制、损失函数、Backbone、SPPF、Neck、检测头全方位优化汇总

专栏链接: YOLOv11改进专栏

@[TOC]

介绍

摘要

当前遥感目标检测研究主要聚焦于定向边界框表示能力的提升,然而普遍忽视了遥感场景中特有的先验知识。此类先验知识具有重要价值,因为在缺乏充分长距离上下文信息参考的情况下,微小遥感目标易产生误检现象,且不同类别目标所需的长距离上下文范围存在显著差异。针对这一关键问题,本文充分考虑遥感场景先验特性,提出了大型选择性核网络(LSKNet)。该网络能够动态调整其大尺度空间感受野,从而更精确地模拟遥感场景中各类目标的上下文范围特征。据我们所知,本研究首次在遥感目标检测领域系统探索大型选择性核机制的应用。在不引入额外复杂设计的前提下,所提出的轻量级LSKNet在标准遥感图像分类、目标检测及语义分割基准测试中均取得了最先进的性能水平。

创新点

- LSKblock Attention:LSKNet引入了LSKblock Attention作为一种注意力机制,通过空间选择性机制动态调整感受野,以更有效地处理不同目标类型的广泛上下文。这种机制允许模型根据输入自适应地确定大型核的权重,从而在空间维度上调整每个目标的感受野。

- 大型选择性核网络:LSKNet是首个在遥感目标检测领域探索大型和选择性核机制的模型。它通过加权处理大型深度核的特征,并在空间上将它们合并,以适应不同目标类型的不同上下文细微差异。

- 适应性感受野调整:LSKNet能够动态调整感受野以更好地模拟远程感知场景中各种对象的范围上下文,从而更有效地处理不同目标类型的广泛上下文。

- 性能优越:LSKNet在标准基准数据集上取得了新的最先进成绩,如HRSC2016、DOTA-v1.0和FAIR1M-v1.0,证明了其在遥感目标检测任务中的卓越性能和有效性。

文章链接

论文地址:论文地址

代码地址:代码地址

基本原理

LSKNet的结构

LSKNet的结构包括以下几个关键组成部分:

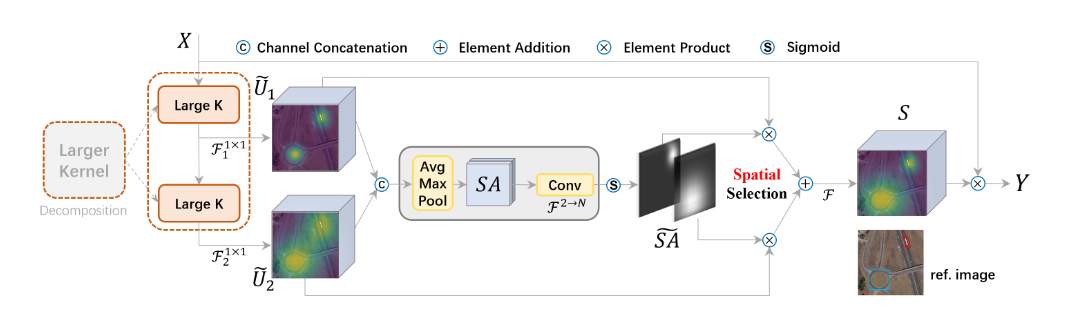

- LSK module:LSK module是LSKNet中的一个重要组件,由大核卷积序列和空间选择机制组成。大核卷积序列用于捕获长距离上下文信息,而空间选择机制则根据输入数据动态调整大核的权重,以适应不同目标类型的上下文特征。

- LSK Block:LSK Block是LSKNet的基本构建块,由LK Selection和FFN两个子块组成。LK Selection子块用于动态调整网络的感受野,而FFN子块用于通道混合和特征细化。每个LSK Block包含一个LSK module,用于处理特征提取和空间选择。

- LSKNet:LSKNet由多个LSK Block组成,每个LSK Block都包含一个LSK module。整个网络结构通过堆叠多个LSK Block来构建,以实现对不同目标类型的广泛上下文的有效建模和处理。LSKNet利用这种层级结构和空间选择机制,能够适应不同目标的特征和上下文需求,从而在遥感目标检测任务中取得优越性能。

3.2 大核卷积

因为不同类型的目标对背景信息的需求不同,这就需要模型能够自适应选择不同大小的背景范围。因此,作者通过解耦出一系列具有大卷积核、且不断扩张的Depth-wise 卷积,构建了一个更大感受野的网络。具体来说,序列中第i个深度卷积的核大小、膨胀率以及接收场的扩展定义如下:

核大小和膨胀率的增加确保了接收场足够快地扩展。我们设定膨胀率的上限以保证膨胀卷积不会在特征图之间引入间隙。例如,我们可以将一个大核分解为2个或3个深度卷积,如表2所示,它们分别具有23和29的理论接收场。

这种设计有两个优点。首先,它明确产生了具有不同大接收场的多个特征,这使得后来的核选择更加容易。其次,序列分解比简单应用一个更大的核更有效率。如表2所示,在相同的理论接收场下,我们的分解大大减少了与标准大核卷积相比的参数数量。为了从不同范围获取富含上下文信息的特征,对输入应用一系列不同接收场的分解深度卷积:

其中 是具有核和膨胀的深度卷积。假设有个分解核,每一个都通过一个卷积层进一步处理:

允许每个空间特征向量的通道混合。然后,提出了一种选择机制,基于获得的多尺度特征动态选择不同对象的核,接下来将介绍这一点。

3.3 空间核选择

为了增强网络关注最相关的空间上下文区域以检测目标的能力,我们使用一个空间选择机制,在不同尺度上从大核卷积中空间选择特征图。首先,我们将从不同核获得的具有不同接收范围的特征进行连接:

然后通过应用基于通道的平均和最大池化(表示为和)到上,高效提取空间关系:

其中和是平均和最大池化的空间特征描述符。为了允许不同空间描述符之间的信息交互,我们将空间池化特征进行连接,并使用一个卷积层将池化特征(有2个通道)转换为个空间注意力图:

对于每一个空间注意力图,应用sigmoid激活函数以获得每个分解大核对应的个体空间选择掩模: 其中表示sigmoid函数。然后,通过其相应的空间选择掩模加权分解大核序列的特征,并通过一个卷积层融合,以获得注意力特征:

LSK(大核选择)模块的最终输出是输入特征与的元素级乘积,与文献[17, 18, 25]中相似:

核心代码

import torch

import torch.nn as nn

from torch.nn.modules.utils import _pair as to_2tuple

from mmcv.cnn.utils.weight_init import (constant_init, normal_init,

trunc_normal_init)

from ..builder import ROTATED_BACKBONES

from mmcv.runner import BaseModule

from timm.models.layers import DropPath, to_2tuple, trunc_normal_

import math

from functools import partial

import warnings

from mmcv.cnn import build_norm_layer

class Mlp(nn.Module):

def __init__(self, in_features, hidden_features=None, out_features=None, act_layer=nn.GELU, drop=0.):

super().__init__()

out_features = out_features or in_features

hidden_features = hidden_features or in_features

self.fc1 = nn.Conv2d(in_features, hidden_features, 1)

self.dwconv = DWConv(hidden_features)

self.act = act_layer()

self.fc2 = nn.Conv2d(hidden_features, out_features, 1)

self.drop = nn.Dropout(drop)

def forward(self, x):

x = self.fc1(x)

x = self.dwconv(x)

x = self.act(x)

x = self.drop(x)

x = self.fc2(x)

x = self.drop(x)

return x

class LSKblock(nn.Module):

def __init__(self, dim):

super().__init__()

self.conv0 = nn.Conv2d(dim, dim, 5, padding=2, groups=dim)

self.conv_spatial = nn.Conv2d(dim, dim, 7, stride=1, padding=9, groups=dim, dilation=3)

self.conv1 = nn.Conv2d(dim, dim//2, 1)

self.conv2 = nn.Conv2d(dim, dim//2, 1)

self.conv_squeeze = nn.Conv2d(2, 2, 7, padding=3)

self.conv = nn.Conv2d(dim//2, dim, 1)

def forward(self, x):

attn1 = self.conv0(x)

attn2 = self.conv_spatial(attn1)

attn1 = self.conv1(attn1)

attn2 = self.conv2(attn2)

attn = torch.cat([attn1, attn2], dim=1)

avg_attn = torch.mean(attn, dim=1, keepdim=True)

max_attn, _ = torch.max(attn, dim=1, keepdim=True)

agg = torch.cat([avg_attn, max_attn], dim=1)

sig = self.conv_squeeze(agg).sigmoid()

attn = attn1 * sig[:,0,:,:].unsqueeze(1) + attn2 * sig[:,1,:,:].unsqueeze(1)

attn = self.conv(attn)

return x * attn

class Attention(nn.Module):

def __init__(self, d_model):

super().__init__()

self.proj_1 = nn.Conv2d(d_model, d_model, 1)

self.activation = nn.GELU()

self.spatial_gating_unit = LSKblock(d_model)

self.proj_2 = nn.Conv2d(d_model, d_model, 1)

def forward(self, x):

shorcut = x.clone()

x = self.proj_1(x)

x = self.activation(x)

x = self.spatial_gating_unit(x)

x = self.proj_2(x)

x = x + shorcut

return x

class Block(nn.Module):

def __init__(self, dim, mlp_ratio=4., drop=0.,drop_path=0., act_layer=nn.GELU, norm_cfg=None):

super().__init__()

if norm_cfg:

self.norm1 = build_norm_layer(norm_cfg, dim)[1]

self.norm2 = build_norm_layer(norm_cfg, dim)[1]

else:

self.norm1 = nn.BatchNorm2d(dim)

self.norm2 = nn.BatchNorm2d(dim)

self.attn = Attention(dim)

self.drop_path = DropPath(drop_path) if drop_path > 0. else nn.Identity()

mlp_hidden_dim = int(dim * mlp_ratio)

self.mlp = Mlp(in_features=dim, hidden_features=mlp_hidden_dim, act_layer=act_layer, drop=drop)

layer_scale_init_value = 1e-2

self.layer_scale_1 = nn.Parameter(

layer_scale_init_value * torch.ones((dim)), requires_grad=True)

self.layer_scale_2 = nn.Parameter(

layer_scale_init_value * torch.ones((dim)), requires_grad=True)

def forward(self, x):

x = x + self.drop_path(self.layer_scale_1.unsqueeze(-1).unsqueeze(-1) * self.attn(self.norm1(x)))

x = x + self.drop_path(self.layer_scale_2.unsqueeze(-1).unsqueeze(-1) * self.mlp(self.norm2(x)))

return x

class OverlapPatchEmbed(nn.Module):

""" Image to Patch Embedding

"""

def __init__(self, img_size=224, patch_size=7, stride=4, in_chans=3, embed_dim=768, norm_cfg=None):

super().__init__()

patch_size = to_2tuple(patch_size)

self.proj = nn.Conv2d(in_chans, embed_dim, kernel_size=patch_size, stride=stride,

padding=(patch_size[0] // 2, patch_size[1] // 2))

if norm_cfg:

self.norm = build_norm_layer(norm_cfg, embed_dim)[1]

else:

self.norm = nn.BatchNorm2d(embed_dim)

def forward(self, x):

x = self.proj(x)

_, _, H, W = x.shape

x = self.norm(x)

return x, H, W

@ROTATED_BACKBONES.register_module()

class LSKNet(BaseModule):

def __init__(self, img_size=224, in_chans=3, embed_dims=[64, 128, 256, 512],

mlp_ratios=[8, 8, 4, 4], drop_rate=0., drop_path_rate=0., norm_layer=partial(nn.LayerNorm, eps=1e-6),

depths=[3, 4, 6, 3], num_stages=4,

pretrained=None,

init_cfg=None,

norm_cfg=None):

super().__init__(init_cfg=init_cfg)

assert not (init_cfg and pretrained), \

'init_cfg and pretrained cannot be set at the same time'

if isinstance(pretrained, str):

warnings.warn('DeprecationWarning: pretrained is deprecated, '

'please use "init_cfg" instead')

self.init_cfg = dict(type='Pretrained', checkpoint=pretrained)

elif pretrained is not None:

raise TypeError('pretrained must be a str or None')

self.depths = depths

self.num_stages = num_stages

dpr = [x.item() for x in torch.linspace(0, drop_path_rate, sum(depths))] # stochastic depth decay rule

cur = 0

for i in range(num_stages):

patch_embed = OverlapPatchEmbed(img_size=img_size if i == 0 else img_size // (2 ** (i + 1)),

patch_size=7 if i == 0 else 3,

stride=4 if i == 0 else 2,

in_chans=in_chans if i == 0 else embed_dims[i - 1],

embed_dim=embed_dims[i], norm_cfg=norm_cfg)

block = nn.ModuleList([Block(

dim=embed_dims[i], mlp_ratio=mlp_ratios[i], drop=drop_rate, drop_path=dpr[cur + j],norm_cfg=norm_cfg)

for j in range(depths[i])])

norm = norm_layer(embed_dims[i])

cur += depths[i]

setattr(self, f"patch_embed{i + 1}", patch_embed)

setattr(self, f"block{i + 1}", block)

setattr(self, f"norm{i + 1}", norm)

def init_weights(self):

print('init cfg', self.init_cfg)

if self.init_cfg is None:

for m in self.modules():

if isinstance(m, nn.Linear):

trunc_normal_init(m, std=.02, bias=0.)

elif isinstance(m, nn.LayerNorm):

constant_init(m, val=1.0, bias=0.)

elif isinstance(m, nn.Conv2d):

fan_out = m.kernel_size[0] * m.kernel_size[

1] * m.out_channels

fan_out //= m.groups

normal_init(

m, mean=0, std=math.sqrt(2.0 / fan_out), bias=0)

else:

super(LSKNet, self).init_weights()

def freeze_patch_emb(self):

self.patch_embed1.requires_grad = False

@torch.jit.ignore

def no_weight_decay(self):

return {'pos_embed1', 'pos_embed2', 'pos_embed3', 'pos_embed4', 'cls_token'} # has pos_embed may be better

def get_classifier(self):

return self.head

def reset_classifier(self, num_classes, global_pool=''):

self.num_classes = num_classes

self.head = nn.Linear(self.embed_dim, num_classes) if num_classes > 0 else nn.Identity()

def forward_features(self, x):

B = x.shape[0]

outs = []

for i in range(self.num_stages):

patch_embed = getattr(self, f"patch_embed{i + 1}")

block = getattr(self, f"block{i + 1}")

norm = getattr(self, f"norm{i + 1}")

x, H, W = patch_embed(x)

for blk in block:

x = blk(x)

x = x.flatten(2).transpose(1, 2)

x = norm(x)

x = x.reshape(B, H, W, -1).permute(0, 3, 1, 2).contiguous()

outs.append(x)

return outs

def forward(self, x):

x = self.forward_features(x)

# x = self.head(x)

return x

class DWConv(nn.Module):

def __init__(self, dim=768):

super(DWConv, self).__init__()

self.dwconv = nn.Conv2d(dim, dim, 3, 1, 1, bias=True, groups=dim)

def forward(self, x):

x = self.dwconv(x)

return x

def _conv_filter(state_dict, patch_size=16):

""" convert patch embedding weight from manual patchify + linear proj to conv"""

out_dict = {}

for k, v in state_dict.items():

if 'patch_embed.proj.weight' in k:

v = v.reshape((v.shape[0], 3, patch_size, patch_size))

out_dict[k] = v

return out_dict

实验

脚本

import warnings

warnings.filterwarnings('ignore')

from ultralytics import YOLO

if __name__ == '__main__':

# 修改为自己的配置文件地址

model = YOLO('/root/ultralytics-main/ultralytics/cfg/models/11/yolov11-lsknet.yaml')

# 修改为自己的数据集地址

model.train(data='/root/ultralytics-main/ultralytics/cfg/datasets/coco8.yaml',

cache=False,

imgsz=640,

epochs=10,

single_cls=False, # 是否是单类别检测

batch=8,

close_mosaic=10,

workers=0,

optimizer='SGD',

amp=True,

project='runs/train',

name='lsknet',

)

结果