I. What the example code does

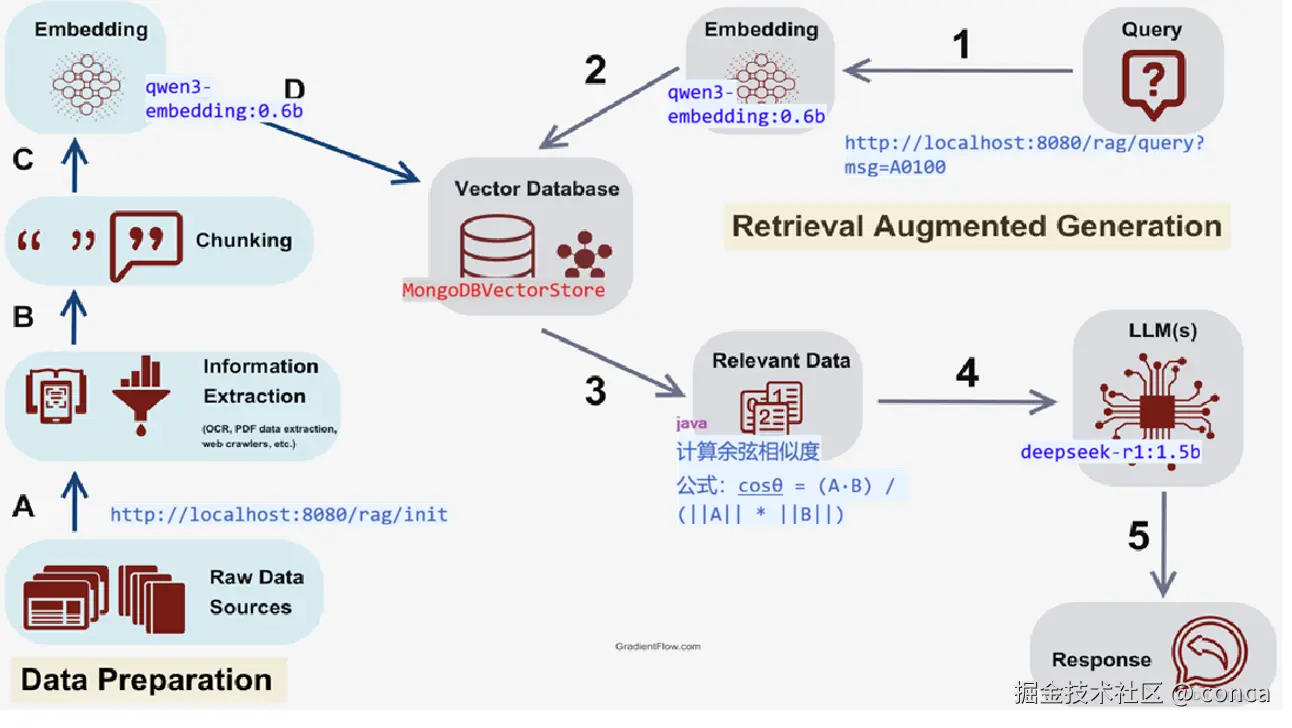

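This example uses Java and the Spring AI framework to implement a lightweight, self-hosted RAG (retrieval-augmented generation) question-answering system for one narrow task: explaining fault codes precisely. The code consists of the six classes listed below and covers the whole pipeline, from embedding the knowledge base and storing the vectors, to taking a user query, retrieving similar vectors, and having a small local model generate the answer.

1. Core modules and their roles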

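| Class | Role |
|---|---|
| MongoConfig | Configures the MongoDB connection (credentials, address, database name) and exposes a MongoTemplate bean that the vector store uses for database access |
| MongoDBVectorStore | Custom MongoDB vector store; implements the cosine similarity computation and the vector retrieval logic, and is the heart of the system |
| VectorStoreConfig | Declares the MongoDB vector store bean and wires it to the MongoTemplate and the embedding model |
| OllamaLLMConfig | Configures the connection to the local Ollama model (qwen3:0.6b / deepseek-r1:1.5b) and the ChatClient |
| RagController | Exposes the HTTP endpoints for the three main functions: knowledge base initialization, vector retrieval, and RAG question answering |
| RagApplication | Application entry point; scans the packages and starts the Spring context |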


1.1 MongoConfig

Configures the MongoDB connection (credentials, address, database name) and exposes a MongoTemplate bean that the vector store uses for database access.

package com.conca.ai.mongodb;

import com.mongodb.MongoClientSettings;

import com.mongodb.MongoCredential;

import com.mongodb.ServerAddress;

import com.mongodb.client.MongoClients;

import org.springframework.context.annotation.Bean;

import org.springframework.context.annotation.Configuration;

import org.springframework.data.mongodb.core.MongoTemplate;

import java.util.Arrays;

import java.util.Collections;

@Configuration

public class MongoConfig {

// Connection parameters are hard-coded for this demo; in a real deployment, read them from configuration instead

private final String mongoHost = "127.0.0.1";

private final int mongoPort = 27017;

private final String mongoDatabase = "chat_memory_db";

private final String mongoUsername = "root";

private final String mongoPassword = "123123";

private final String authDatabase = "admin";

@Bean

public MongoTemplate mongoTemplate() {

// 1. Create the credentials

MongoCredential credential = MongoCredential.createCredential(

mongoUsername, // user name

authDatabase, // authentication database

mongoPassword.toCharArray() // password

);

// 2. Configure the MongoClient connection settings

MongoClientSettings settings = MongoClientSettings.builder()

.applyToClusterSettings(builder ->

builder.hosts(Collections.singletonList(new ServerAddress(mongoHost, mongoPort))))

.credential(credential) // attach the credentials

.build();

// 3. Create the MongoClient and initialize the MongoTemplate

return new MongoTemplate(MongoClients.create(settings), mongoDatabase);

}

}
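The listing above hard-codes the host, user name, and password. As a minimal sketch of moving those values out of the source code, the plain-Java record below loads them from a `java.util.Properties` object. The record name `MongoSettings` and the `app.mongo.*` property keys are hypothetical and are not part of the original project; in a Spring Boot application the same values would more commonly be injected with `@Value`, as OllamaLLMConfig does for the Ollama base URL.

```java
import java.util.Properties;

// Hypothetical holder for the connection settings that MongoConfig currently hard-codes.
record MongoSettings(String host, int port, String database,
                     String username, String password, String authDatabase) {

    // The "app.mongo.*" keys are illustrative names, not standard Spring properties.
    static MongoSettings from(Properties props) {
        return new MongoSettings(
                props.getProperty("app.mongo.host", "127.0.0.1"),
                Integer.parseInt(props.getProperty("app.mongo.port", "27017")),
                props.getProperty("app.mongo.database", "chat_memory_db"),
                required(props, "app.mongo.username"),
                required(props, "app.mongo.password"),
                props.getProperty("app.mongo.auth-database", "admin"));
    }

    // Credentials have no default: fail fast when they are missing.
    private static String required(Properties props, String key) {
        String value = props.getProperty(key);
        if (value == null || value.isBlank()) {
            throw new IllegalStateException("Missing required property: " + key);
        }
        return value;
    }
}
```

With such a holder, the `mongoTemplate()` method could read `settings.host()`, `settings.port()`, and so on instead of the private constants, and the password would no longer live in version control.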

1.2 MongoDBVectorStore

Custom MongoDB vector store. It implements the cosine similarity computation and the vector retrieval logic, and is the heart of the system.

/*

* Copyright 2023-2025 the original author or authors.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* https://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

package com.conca.ai.mongodb;

import java.util.ArrayList;

import java.util.Collections;

import java.util.HashMap;

import java.util.List;

import java.util.Map;

import java.util.Optional;

import java.util.stream.Collectors;

import com.mongodb.MongoCommandException;

import com.mongodb.client.result.DeleteResult;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

import org.springframework.ai.document.Document;

import org.springframework.ai.document.DocumentMetadata;

import org.springframework.ai.embedding.EmbeddingModel;

import org.springframework.ai.embedding.EmbeddingOptionsBuilder;

import org.springframework.ai.model.EmbeddingUtils;

import org.springframework.ai.observation.conventions.VectorStoreProvider;

import org.springframework.ai.vectorstore.AbstractVectorStoreBuilder;

import org.springframework.ai.vectorstore.SearchRequest;

import org.springframework.ai.vectorstore.filter.Filter;

import org.springframework.ai.vectorstore.observation.AbstractObservationVectorStore;

import org.springframework.ai.vectorstore.observation.VectorStoreObservationContext;

import org.springframework.beans.factory.InitializingBean;

import org.springframework.data.mongodb.UncategorizedMongoDbException;

import org.springframework.data.mongodb.core.MongoTemplate;

import org.springframework.data.mongodb.core.aggregation.Aggregation;

import org.springframework.data.mongodb.core.query.BasicQuery;

import org.springframework.data.mongodb.core.query.Criteria;

import org.springframework.data.mongodb.core.query.Query;

import org.springframework.util.Assert;

/**

* MongoDB Atlas-based vector store implementation using the Atlas Vector Search.

*

* <p>

* The store uses a MongoDB collection to persist vector embeddings along with their

* associated document content and metadata. By default, it uses the "vector_store"

* collection with a vector search index for similarity search operations.

* </p>

*

* <p>

* Features:

* </p>

* <ul>

* <li>Automatic schema initialization with configurable collection and index

* creation</li>

* <li>Support for cosine similarity search</li>

* <li>Metadata filtering using MongoDB Atlas Search syntax</li>

* <li>Configurable similarity thresholds for search results</li>

* <li>Batch processing support with configurable batching strategies</li>

* <li>Observation and metrics support through Micrometer</li>

* </ul>

*

* <p>

* Basic usage example:

* </p>

* <pre>{@code

* MongoDBAtlasVectorStore vectorStore = MongoDBAtlasVectorStore.builder(mongoTemplate, embeddingModel)

* .collectionName("vector_store")

* .initializeSchema(true)

* .build();

*

* // Add documents

* vectorStore.add(List.of(

* new Document("content1", Map.of("key1", "value1")),

* new Document("content2", Map.of("key2", "value2"))

* ));

*

* // Search with filters

* List<Document> results = vectorStore.similaritySearch(

* SearchRequest.query("search text")

* .withTopK(5)

* .withSimilarityThreshold(0.7)

* .withFilterExpression("key1 == 'value1'")

* );

* }</pre>

*

* <p>

* Advanced configuration example:

* </p>

* <pre>{@code

* MongoDBAtlasVectorStore vectorStore = MongoDBAtlasVectorStore.builder(mongoTemplate, embeddingModel)

* .collectionName("custom_vectors")

* .vectorIndexName("custom_vector_index")

* .pathName("custom_embedding")

* .numCandidates(500)

* .metadataFieldsToFilter(List.of("category", "author"))

* .initializeSchema(true)

* .batchingStrategy(new TokenCountBatchingStrategy())

* .build();

* }</pre>

*

* <p>

* Database Requirements:

* </p>

* <ul>

* <li>MongoDB Atlas cluster with Vector Search enabled</li>

* <li>Collection with vector search index configured</li>

* <li>Collection schema with id (string), content (string), metadata (document), and

* embedding (vector) fields</li>

* <li>Proper access permissions for index and collection operations</li>

* </ul>

*

* @author Chris Smith

* @author Soby Chacko

* @author Christian Tzolov

* @author Thomas Vitale

* @author Ilayaperumal Gopinathan

* @since 1.0.0

*/

public class MongoDBVectorStore extends AbstractObservationVectorStore implements InitializingBean {

private static final Logger logger = LoggerFactory.getLogger(MongoDBVectorStore.class);

public static final String ID_FIELD_NAME = "_id";

public static final String METADATA_FIELD_NAME = "metadata";

public static final String CONTENT_FIELD_NAME = "content";

public static final String SCORE_FIELD_NAME = "score";

public static final String DEFAULT_VECTOR_COLLECTION_NAME = "vector_store";

private static final String DEFAULT_VECTOR_INDEX_NAME = "vector_index";

private static final String DEFAULT_PATH_NAME = "embedding";

private static final int DEFAULT_NUM_CANDIDATES = 200;

private static final int INDEX_ALREADY_EXISTS_ERROR_CODE = 68;

private static final String INDEX_ALREADY_EXISTS_ERROR_CODE_NAME = "IndexAlreadyExists";

private final MongoTemplate mongoTemplate;

private final String collectionName;

private final String vectorIndexName;

private final String pathName;

private final List<String> metadataFieldsToFilter;

private final int numCandidates;

// private final MongoDBAtlasFilterExpressionConverter filterExpressionConverter;

private final boolean initializeSchema;

protected MongoDBVectorStore(Builder builder) {

super(builder);

Assert.notNull(builder.mongoTemplate, "MongoTemplate must not be null");

this.mongoTemplate = builder.mongoTemplate;

this.collectionName = builder.collectionName;

this.vectorIndexName = builder.vectorIndexName;

this.pathName = builder.pathName;

this.numCandidates = builder.numCandidates;

this.metadataFieldsToFilter = builder.metadataFieldsToFilter;

// this.filterExpressionConverter = builder.filterExpressionConverter;

this.initializeSchema = builder.initializeSchema;

}

@Override

public void afterPropertiesSet() throws Exception {

if (!this.initializeSchema) {

return;

}

// Create the collection if it does not exist

if (!this.mongoTemplate.collectionExists(this.collectionName)) {

this.mongoTemplate.createCollection(this.collectionName);

}

// Create search index

createSearchIndex();

}

private void createSearchIndex() {

try {

this.mongoTemplate.executeCommand(createSearchIndexDefinition());

}

catch (UncategorizedMongoDbException e) {

Throwable cause = e.getCause();

if (cause instanceof MongoCommandException commandException) {

// Ignore any IndexAlreadyExists errors

if (INDEX_ALREADY_EXISTS_ERROR_CODE == commandException.getCode()

|| INDEX_ALREADY_EXISTS_ERROR_CODE_NAME.equals(commandException.getErrorCodeName())) {

return;

}

}

throw e;

}

}

/**

* Provides the Definition for the search index

*/

private org.bson.Document createSearchIndexDefinition() {

List<org.bson.Document> vectorFields = new ArrayList<>();

vectorFields.add(new org.bson.Document().append("type", "vector")

.append("path", this.pathName)

.append("numDimensions", this.embeddingModel.dimensions())

.append("similarity", "cosine"));

vectorFields.addAll(this.metadataFieldsToFilter.stream()

.map(fieldName -> new org.bson.Document().append("type", "filter").append("path", "metadata." + fieldName))

.toList());

return new org.bson.Document().append("createSearchIndexes", this.collectionName)

.append("indexes",

List.of(new org.bson.Document().append("name", this.vectorIndexName)

.append("type", "vectorSearch")

.append("definition", new org.bson.Document("fields", vectorFields))));

}

/**

* Maps a Bson Document to a Spring AI Document

* @param mongoDocument the mongoDocument to map to a Spring AI Document

* @return the Spring AI Document

*/

private Document mapMongoDocument(org.bson.Document mongoDocument, float[] queryEmbedding) {

String id = mongoDocument.getString(ID_FIELD_NAME);

String content = mongoDocument.getString(CONTENT_FIELD_NAME);

Double score = mongoDocument.getDouble(SCORE_FIELD_NAME);

Map<String, Object> metadata = mongoDocument.get(METADATA_FIELD_NAME, org.bson.Document.class);

metadata.put(DocumentMetadata.DISTANCE.value(), 1 - score);

// @formatter:off

return Document.builder()

.id(id)

.text(content)

.metadata(metadata)

.score(score)

.build(); // @formatter:on

}

@Override

public void doAdd(List<Document> documents) {

List<float[]> embeddings = this.embeddingModel.embed(documents, EmbeddingOptionsBuilder.builder().build(),

this.batchingStrategy);

for (Document document : documents) {

MongoDBDocument mdbDocument = new MongoDBDocument(document.getId(), document.getText(),

document.getMetadata(), embeddings.get(documents.indexOf(document)));

this.mongoTemplate.save(mdbDocument, this.collectionName);

}

}

@Override

public void doDelete(List<String> idList) {

Query query = new Query(org.springframework.data.mongodb.core.query.Criteria.where(ID_FIELD_NAME).in(idList));

this.mongoTemplate.remove(query, this.collectionName);

}

@Override

public List<Document> similaritySearch(String query) {

return similaritySearch(SearchRequest.builder().query(query).build());

}

@Override

public List<Document> doSimilaritySearch(SearchRequest request) {

// Step 1: embed the query text

float[] queryEmbedding = this.embeddingModel.embed(request.getQuery());

System.out.println(request.getQuery()+">>"+queryEmbedding.length);

// Step 2: load all stored documents from MongoDB (full collection scan)

List<org.bson.Document> listDocument = this.mongoTemplate.findAll(org.bson.Document.class, this.collectionName);

Map<org.bson.Document, Float> mapSimilarity = new HashMap<org.bson.Document, Float>();

// Step 3: compare the query vector with each stored vector in turn

for(org.bson.Document doc : listDocument){

// Convert the List<Double> stored in MongoDB into a float[]

List<Double> vectorList = doc.getList("embedding", Double.class);

float[] vector = new float[vectorList.size()];

for (int i = 0; i < vectorList.size(); i++) {

vector[i] = vectorList.get(i).floatValue();

}

float cosine = calculateCosineSimilarity(queryEmbedding, vector);

System.out.println(doc.get("content")+">>>"+cosine);

doc.put(SCORE_FIELD_NAME, Double.parseDouble(cosine+""));

mapSimilarity.put(doc, cosine);

}

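// Step 4: keep the two highest-scoring documents (hard-coded; request.getTopK() is ignored)

// 1. Copy the map entries into a list so they can be sorted

List<Map.Entry<org.bson.Document, Float>> entryList = new ArrayList<>(mapSimilarity.entrySet());

// 2. Sort by value in descending order (Float.compare(a, b) is ascending, so the arguments are swapped)

entryList.sort((entry1, entry2) -> Float.compare(entry2.getValue(), entry1.getValue()));

// 3. Extract the documents in sorted order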

List<org.bson.Document> listSort = entryList.stream()

.map(Map.Entry::getKey)

.collect(Collectors.toList());

return listSort

.stream()

.limit(2)

.map(d -> mapMongoDocument(d, queryEmbedding))

.toList();

}

/**

* Computes the cosine similarity (the core algorithm).

* Formula: cosθ = (A·B) / (||A|| * ||B||)

* The result lies in [-1, 1]; the closer to 1, the more similar the vectors.

*/

private static float calculateCosineSimilarity(float[] vectorA, float[] vectorB) {

if (vectorA.length != vectorB.length) {

throw new IllegalArgumentException("Vector dimensions do not match");

}

float dotProduct = 0.0f; // dot product A·B

float normA = 0.0f; // sum of squares of A (squared norm)

float normB = 0.0f; // sum of squares of B (squared norm)

for (int i = 0; i < vectorA.length; i++) {

dotProduct += vectorA[i] * vectorB[i];

normA += vectorA[i] * vectorA[i];

normB += vectorB[i] * vectorB[i];

}

// Avoid division by zero

if (normA == 0 || normB == 0) {

return 0.0f;

}

return dotProduct / (float) (Math.sqrt(normA) * Math.sqrt(normB));

}

@Override

public VectorStoreObservationContext.Builder createObservationContextBuilder(String operationName) {

return VectorStoreObservationContext.builder(VectorStoreProvider.MONGODB.value(), operationName)

.collectionName(this.collectionName)

.dimensions(this.embeddingModel.dimensions())

.fieldName(this.pathName);

}

@Override

public <T> Optional<T> getNativeClient() {

@SuppressWarnings("unchecked")

T client = (T) this.mongoTemplate;

return Optional.of(client);

}

/**

* Creates a new builder instance for MongoDBAtlasVectorStore.

* @return a new MongoDBBuilder instance

*/

public static Builder builder(MongoTemplate mongoTemplate, EmbeddingModel embeddingModel) {

return new Builder(mongoTemplate, embeddingModel);

}

public static class Builder extends AbstractVectorStoreBuilder<Builder> {

private final MongoTemplate mongoTemplate;

private String collectionName = DEFAULT_VECTOR_COLLECTION_NAME;

private String vectorIndexName = DEFAULT_VECTOR_INDEX_NAME;

private String pathName = DEFAULT_PATH_NAME;

private int numCandidates = DEFAULT_NUM_CANDIDATES;

private List<String> metadataFieldsToFilter = Collections.emptyList();

private boolean initializeSchema = false;

// private MongoDBAtlasFilterExpressionConverter filterExpressionConverter = new MongoDBAtlasFilterExpressionConverter();

/**

* @throws IllegalArgumentException if mongoTemplate is null

*/

private Builder(MongoTemplate mongoTemplate, EmbeddingModel embeddingModel) {

super(embeddingModel);

Assert.notNull(mongoTemplate, "MongoTemplate must not be null");

this.mongoTemplate = mongoTemplate;

}

/**

* Configures the collection name. This must match the name of the collection for

* the Vector Search Index in Atlas.

* @param collectionName the name of the collection

* @return the builder instance

* @throws IllegalArgumentException if collectionName is null or empty

*/

public Builder collectionName(String collectionName) {

Assert.hasText(collectionName, "Collection Name must not be null or empty");

this.collectionName = collectionName;

return this;

}

/**

* Configures the vector index name. This must match the name of the Vector Search

* Index Name in Atlas.

* @param vectorIndexName the name of the vector index

* @return the builder instance

* @throws IllegalArgumentException if vectorIndexName is null or empty

*/

public Builder vectorIndexName(String vectorIndexName) {

Assert.hasText(vectorIndexName, "Vector Index Name must not be null or empty");

this.vectorIndexName = vectorIndexName;

return this;

}

/**

* Configures the path name. This must match the name of the field indexed for the

* Vector Search Index in Atlas.

* @param pathName the name of the path

* @return the builder instance

* @throws IllegalArgumentException if pathName is null or empty

*/

public Builder pathName(String pathName) {

Assert.hasText(pathName, "Path Name must not be null or empty");

this.pathName = pathName;

return this;

}

/**

* Sets the number of candidates for vector search.

* @param numCandidates the number of candidates

* @return the builder instance

*/

public Builder numCandidates(int numCandidates) {

this.numCandidates = numCandidates;

return this;

}

/**

* Sets the metadata fields to filter in vector search.

* @param metadataFieldsToFilter list of metadata field names

* @return the builder instance

* @throws IllegalArgumentException if metadataFieldsToFilter is null or empty

*/

public Builder metadataFieldsToFilter(List<String> metadataFieldsToFilter) {

Assert.notEmpty(metadataFieldsToFilter, "Fields list must not be empty");

this.metadataFieldsToFilter = metadataFieldsToFilter;

return this;

}

/**

* Sets whether to initialize the schema.

* @param initializeSchema true to initialize schema, false otherwise

* @return the builder instance

*/

public Builder initializeSchema(boolean initializeSchema) {

this.initializeSchema = initializeSchema;

return this;

}

/**

* Builds the MongoDBAtlasVectorStore instance.

* @return a new MongoDBAtlasVectorStore instance

* @throws IllegalStateException if the builder is in an invalid state

*/

@Override

public MongoDBVectorStore build() {

return new MongoDBVectorStore(this);

}

}

/**

* The representation of {@link Document} along with its embedding.

*

* @param id The id of the document

* @param content The content of the document

* @param metadata The metadata of the document

* @param embedding The vectors representing the content of the document

*/

public record MongoDBDocument(String id, String content, Map<String, Object> metadata, float[] embedding) {

}

}

1.3 VectorStoreConfig

Declares the MongoDB vector store bean and wires it to the MongoTemplate and the embedding model.

package com.conca.ai.config;

import org.springframework.ai.embedding.EmbeddingModel;

import org.springframework.context.annotation.Bean;

import org.springframework.context.annotation.Configuration;

import org.springframework.data.mongodb.core.MongoTemplate;

import com.conca.ai.mongodb.MongoDBVectorStore;

@Configuration

public class VectorStoreConfig {

@Bean

public MongoDBVectorStore mongoDbVectorStore(MongoTemplate mongoTemplate,

EmbeddingModel embeddingModel) {

return MongoDBVectorStore

.builder(mongoTemplate, embeddingModel)

.build();

}

// // URL for looking up the MongoDB vector store artifacts

// //https://repo.spring.io/ui/native/milestone/org/springframework/ai/

// @Bean

// public MongoDBAtlasVectorStore mongoDbAtlasVectorStore(MongoTemplate mongoTemplate,

// EmbeddingModel embeddingModel) {

// return MongoDBAtlasVectorStore

// .builder(mongoTemplate, embeddingModel)

// .build();

// }

}

1.4 OllamaLLMConfig

Configures the connection to the local Ollama model (qwen3:0.6b / deepseek-r1:1.5b) and the ChatClient.

package com.conca.ai.config;

import org.springframework.ai.chat.client.ChatClient;

import org.springframework.ai.chat.client.advisor.MessageChatMemoryAdvisor;

import org.springframework.ai.chat.memory.MessageWindowChatMemory;

import org.springframework.ai.chat.model.ChatModel;

import org.springframework.ai.ollama.OllamaChatModel;

import org.springframework.ai.ollama.api.OllamaApi;

import org.springframework.ai.ollama.api.OllamaOptions;

import org.springframework.beans.factory.annotation.Qualifier;

import org.springframework.beans.factory.annotation.Value;

import org.springframework.context.annotation.Bean;

import org.springframework.context.annotation.Configuration;

/**

* Configures the local Ollama chat model and the ChatClient built on top of it.

*/

@Configuration

public class OllamaLLMConfig

{

// Read the Ollama base URL from application.properties

@Value("${spring.ai.ollama.base-url}")

private String ollamaBaseUrl;

@Bean(name="ollamaDeepseekChatModel")

public OllamaChatModel ollamaQwenChatModel() {

OllamaApi ollamaApi = OllamaApi.builder().baseUrl(ollamaBaseUrl).build();

OllamaOptions options = OllamaOptions.builder()

.model("qwen3:0.6b") // first model

// .model("deepseek-r1:1.5b") // second model (swap it in to test it)

.temperature(0.9) // sampling temperature; a high value makes the output more random

.build();

return OllamaChatModel.builder()

.ollamaApi(ollamaApi)

.defaultOptions(options).build();

}

@Bean(name = "ollamaDeepseekMongoChatClient")

public ChatClient ollamaQwenMongoChatClient(@Qualifier("ollamaDeepseekChatModel") ChatModel qwen)

{

// MessageWindowChatMemory windowChatMemory = MessageWindowChatMemory.builder()

// .chatMemoryRepository(chatMemoryRepository)

// .maxMessages(10)

// .build();

return ChatClient.builder(qwen)

.defaultOptions(OllamaOptions.builder()

.build())

// .defaultAdvisors(MessageChatMemoryAdvisor.builder(windowChatMemory).build())

.build();

}

}

1.5 RagController

Exposes the HTTP endpoints for the three main functions: knowledge base initialization, vector retrieval, and RAG question answering.

package com.conca.ai;

import java.util.Arrays;

import java.util.List;

import java.util.Map;

import java.util.function.Function;

import org.springframework.ai.chat.client.ChatClient;

import org.springframework.ai.chat.prompt.Prompt;

import org.springframework.ai.chat.prompt.PromptTemplate;

import org.springframework.ai.document.Document;

import org.springframework.ai.embedding.EmbeddingModel;

import org.springframework.ai.embedding.EmbeddingRequest;

import org.springframework.ai.embedding.EmbeddingResponse;

import org.springframework.ai.ollama.api.OllamaOptions;

import org.springframework.ai.vectorstore.SearchRequest;

import org.springframework.ai.vectorstore.VectorStore;

import org.springframework.web.bind.annotation.GetMapping;

import org.springframework.web.bind.annotation.RequestParam;

import org.springframework.web.bind.annotation.RestController;

import jakarta.annotation.Resource;

import lombok.extern.slf4j.Slf4j;

@RestController

@Slf4j

public class RagController

{

@Resource

private EmbeddingModel embeddingModel;

@Resource

private VectorStore vectorStore;

@Resource(name="ollamaDeepseekMongoChatClient")

private ChatClient chatClient;

/**

* Step 1: simulate text chunking, embed the texts, and store them in the MongoDB vector store

* http://localhost:8080/rag/init

*/

@GetMapping("/rag/init")

public String add()

{

List<Document> documents = List.of(

new Document("A0200:用户登录验证失败二级宏观错误码"),

new Document("A0201:用户名或密码错误"),

new Document("A0202:用户token过期失效"),

new Document("B2222:订单接口调用失败"),

new Document("B2223:库存扣减接口超时"),

new Document("B3333:物流查询接口返回异常"),

new Document("C1111:Kafka消息发送失败"),

new Document("C1112:Kafka消费组重平衡异常"),

new Document("C3333:Redis缓存写入失败"),

new Document("C3334:Redis连接池耗尽"),

new Document("D0001:数据库错误一级宏观错误码"),

new Document("D0100:数据库连接超时二级宏观错误码"),

new Document("D0101:SQL执行报错"),

new Document("D0102:事务提交失败"),

new Document("E0001:第三方服务错误一级宏观错误码"),

new Document("E0100:短信发送服务调用失败二级宏观错误码"),

new Document("E0101:人脸识别接口返回异常"),

new Document("F0001:文件处理错误一级宏观错误码"),

new Document("F0100:文件上传超出大小限制二级宏观错误码"),

new Document("F0101:文件格式解析失败"),

new Document("00000:系统OK正确执行后的返回"),

new Document("C2222:Kafka消息解压严重"),

new Document("B1111:支付接口超时"),

new Document("A0100:用户注册错误二级宏观错误码"),

new Document("A0001:用户端错误一级宏观错误码")

);

vectorStore.add(documents);

return embeddingModel.toString();

}

/**

* Simulates a similarity lookup against the MongoDB vector store

* http://localhost:8080/rag/get?msg=LLM

* @param msg the query text

* @return the most similar documents

*/

@GetMapping("/rag/get")

public List<Document> getAll(@RequestParam(name = "msg") String msg)

{

SearchRequest searchRequest = SearchRequest.builder()

.query(msg)

.topK(2)

.build();

List<Document> list = vectorStore.similaritySearch(searchRequest);

System.out.println(list);

return list;

}

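/**

* Simulates a RAG query backed by the MongoDB vector store

* http://localhost:8080/rag/query?msg=A0100

* @param msg the user question (for example a fault code)

* @return the answer generated by the model

*/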

@GetMapping("/rag/query")

public String query(@RequestParam(name = "msg") String msg)

{

// 1. Retrieve similar documents (asks for the top 3; the hard-coded limit(2) in MongoDBVectorStore returns at most 2)

SearchRequest searchRequest = SearchRequest.builder()

.query(msg)

.topK(3)

.build();

List<Document> similarDocuments = vectorStore.similaritySearch(searchRequest);

// 2. Join the retrieved texts into the context

String context = similarDocuments.stream()

.map(Document::getText)

.reduce("", (a, b) -> a + "\n" + b);

// // 3. 构建Prompt(将上下文和问题传给LLM)

// String promptTemplate = """

// 你是一个运维工程师,按照给出的编码给出对应故障解释,否则请明确说明“无法从提供的文档中找到相关答案”。

// 故障编码如下:

// {context}

// 用户问题:

// {question}

// """;

// 3. Build the prompt (pass the context and the question to the LLM)

StringBuffer promptTemplate = new StringBuffer();

promptTemplate.append("你是运维工程师,**只按以下故障编码表解释**,不做任何额外推理、补充或关联。");

promptTemplate.append("若编码不在表中,**只输出:无法从提供的文档中找到相关答案**。");

promptTemplate.append("输出规则:直接输出“编码 解释”,不添加任何额外文字。");

promptTemplate.append("故障编码表(编码:解释):");

promptTemplate.append("{context}。 ");

promptTemplate.append("用户问题:");

promptTemplate.append("{question}");

PromptTemplate template = new PromptTemplate(promptTemplate.toString());

Prompt prompt = template.create(Map.of("context", context,

"question", msg));

System.out.println(prompt);

// 4. Call Ollama to generate the answer

return chatClient.prompt(prompt).call().content();

}

}

1.6 RagApplication (application entry point)

Scans the packages and starts the Spring context.

package com.conca.ai;

import org.springframework.boot.SpringApplication;

import org.springframework.boot.autoconfigure.SpringBootApplication;

import org.springframework.context.annotation.ComponentScan;

@SpringBootApplication

@ComponentScan(basePackages = {"com.conca.ai"}) // scan com.conca.ai and all of its subpackages

public class RagApplication {

public static void main(String[] args) {

SpringApplication.run(RagApplication.class, args);

// 1. Get the package name of this class

String packagePath = RagApplication.class.getPackage().getName();

// 2. Define the project name (adjust as needed)

String projectName = "spring AI Stream Output Project"; // change this to your own project name

// Print the startup banner

System.out.println("====================================");

System.out.println(" 应用启动完毕 🚀");

System.out.println("====================================");

System.out.println("项目名称:" + projectName);

System.out.println("包路径:" + packagePath);

System.out.println("欢迎来到ai");

System.out.println("====================================");

}

}

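2. Vector comparison against MongoDB implemented in Java (the key part)

The doSimilaritySearch method of MongoDBVectorStore is the heart of the vector comparison. It computes cosine similarity by hand in Java instead of relying on the native vector search of MongoDB Atlas. The method and its helper are reproduced here with step-by-step comments: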

@Override

public List<Document> doSimilaritySearch(SearchRequest request) {

// 1. Embed the user's query text (as a float[] vector)

float[] queryEmbedding = this.embeddingModel.embed(request.getQuery());

// 2. Load every stored vector document from MongoDB (no index, so this is the performance bottleneck)

List<org.bson.Document> listDocument = this.mongoTemplate.findAll(org.bson.Document.class, this.collectionName);

// 3. Iterate over all documents and compute the cosine similarity of each one

Map<org.bson.Document, Float> mapSimilarity = new HashMap<>();

for(org.bson.Document doc : listDocument){

// 3.1 Convert the List<Double> stored in MongoDB into a float[] for the cosine computation

List<Double> vectorList = doc.getList("embedding", Double.class);

float[] vector = new float[vectorList.size()];

for (int i = 0; i < vectorList.size(); i++) {

vector[i] = vectorList.get(i).floatValue();

}

// 3.2 Core step: compute the cosine similarity

float cosine = calculateCosineSimilarity(queryEmbedding, vector);

doc.put(SCORE_FIELD_NAME, Double.parseDouble(cosine+""));

mapSimilarity.put(doc, cosine);

}

// 4. Sort by similarity in descending order and return the top 2

List<Map.Entry<org.bson.Document, Float>> entryList = new ArrayList<>(mapSimilarity.entrySet());

entryList.sort((entry1, entry2) -> Float.compare(entry2.getValue(), entry1.getValue()));

List<org.bson.Document> listSort = entryList.stream().map(Map.Entry::getKey).collect(Collectors.toList());

return listSort.stream().limit(2).map(d -> mapMongoDocument(d, queryEmbedding)).toList();

}

// Cosine similarity: cosθ = (A·B) / (||A|| * ||B||)

private static float calculateCosineSimilarity(float[] vectorA, float[] vectorB) {

if (vectorA.length != vectorB.length) {

throw new IllegalArgumentException("Vector dimensions do not match");

}

float dotProduct = 0.0f; // dot product A·B

float normA = 0.0f; // sum of squares of A (squared norm)

float normB = 0.0f; // sum of squares of B (squared norm)

for (int i = 0; i < vectorA.length; i++) {

dotProduct += vectorA[i] * vectorB[i];

normA += vectorA[i] * vectorA[i];

normB += vectorB[i] * vectorB[i];

}

if (normA == 0 || normB == 0) {

return 0.0f;

}

return dotProduct / (float) (Math.sqrt(normA) * Math.sqrt(normB));

}

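2.1 Core steps

- Step 1: embed the user's query text into a float[] vector.

- Step 2: load every stored vector document from MongoDB with a full collection scan (no index is used, so this is the performance bottleneck).

- Step 3: iterate over all documents and compute the cosine similarity of each one.

  - 3.1 Convert the List<Double> read from MongoDB into a float[] for the cosine computation.

  - 3.2 Core step: call the cosine similarity routine.

- Step 4: sort by similarity in descending order and return the top 2.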
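The steps above can be sketched in a self-contained form that does not need Spring or MongoDB. This is an illustration under stated assumptions, not the project's code: the class name `TopKCosineSearch`, the `Scored` record, and the in-memory lists standing in for the collection are all hypothetical. Unlike the original method, it takes `k` as a parameter instead of hard-coding 2, and it keeps only the best `k` candidates in a bounded min-heap rather than sorting every document.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.PriorityQueue;

final class TopKCosineSearch {

    // Hypothetical result type: a text together with its similarity to the query.
    record Scored(String text, double score) {}

    // cos(theta) = (A·B) / (||A|| * ||B||), accumulated in double precision.
    static double cosine(float[] a, float[] b) {
        if (a.length != b.length) {
            throw new IllegalArgumentException("Vector dimensions do not match");
        }
        double dot = 0.0, sumSqA = 0.0, sumSqB = 0.0;
        for (int i = 0; i < a.length; i++) {
            dot += (double) a[i] * b[i];
            sumSqA += (double) a[i] * a[i];
            sumSqB += (double) b[i] * b[i];
        }
        if (sumSqA == 0.0 || sumSqB == 0.0) {
            return 0.0; // a zero vector has no direction; treat it as dissimilar
        }
        return dot / (Math.sqrt(sumSqA) * Math.sqrt(sumSqB));
    }

    // Brute-force scan that returns the k most similar texts, best first.
    static List<Scored> topK(float[] query, List<String> texts, List<float[]> vectors, int k) {
        if (k <= 0) {
            return List.of();
        }
        Comparator<Scored> byScore = Comparator.comparingDouble(Scored::score);
        PriorityQueue<Scored> heap = new PriorityQueue<>(byScore); // min-heap on score
        for (int i = 0; i < texts.size(); i++) {
            heap.add(new Scored(texts.get(i), cosine(query, vectors.get(i))));
            if (heap.size() > k) {
                heap.poll(); // evict the current lowest score
            }
        }
        List<Scored> result = new ArrayList<>(heap);
        result.sort(byScore.reversed());
        return result;
    }
}
```

The scan is still linear in the number of stored vectors, so this only removes the full sort and the fixed result size; it does not replace an indexed vector search.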

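2.2 Vector comparison log

Requesting http://localhost:8080/rag/query?msg=A0100 makes the code above print the following. The first line is the query text and the length of its embedding (1024); each later line is a stored document followed by its cosine similarity to the query: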

A0100>>1024

A0200:用户登录验证失败二级宏观错误码>>>0.549359

A0201:用户名或密码错误>>>0.58822674

A0202:用户token过期失效>>>0.52870256

B2222:订单接口调用失败>>>0.39715943

B2223:库存扣减接口超时>>>0.3791776

B3333:物流查询接口返回异常>>>0.35427678

C1111:Kafka消息发送失败>>>0.3918751

C1112:Kafka消费组重平衡异常>>>0.35871327

C3333:Redis缓存写入失败>>>0.3698006

C3334:Redis连接池耗尽>>>0.41192493

D0001:数据库错误一级宏观错误码>>>0.45165136

D0100:数据库连接超时二级宏观错误码>>>0.4534981

D0101:SQL执行报错>>>0.5083353

D0102:事务提交失败>>>0.5334933

E0001:第三方服务错误一级宏观错误码>>>0.46328068

E0100:短信发送服务调用失败二级宏观错误码>>>0.50492793

E0101:人脸识别接口返回异常>>>0.43495056

F0001:文件处理错误一级宏观错误码>>>0.46615037

F0100:文件上传超出大小限制二级宏观错误码>>>0.44284472

F0101:文件格式解析失败>>>0.51341987

00000:系统OK正确执行后的返回>>>0.4970258

C2222:Kafka消息解压严重>>>0.32977524

B1111:支付接口超时>>>0.40897542

A0100:用户注册错误二级宏观错误码>>>0.6324899

A0001:用户端错误一级宏观错误码>>>0.565165

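3. End-to-end flow

- Initialization: call /rag/init to embed the 25 fault-code texts with the embedding model and store them in the vector_store collection in MongoDB.

- Retrieval: call /rag/get?msg=XXX; the input is embedded and compared with every vector in MongoDB, and the top 2 most similar documents are returned.

- Question answering: call /rag/query?msg=XXX; the controller asks for the top 3 similar documents, but doSimilaritySearch hard-codes limit(2), so only two documents are joined into the context that is passed to the local model. The prompt printed in the test section confirms this.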
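The question-answering step boils down to joining the retrieved texts and substituting them into a template. The plain-Java sketch below shows that step in isolation; the class name `RagPromptSketch` and the English template text are hypothetical, and only the `{context}` and `{question}` placeholder names are taken from the controller. It joins the passages with `String.join`, whereas the controller's `reduce("", (a, b) -> a + "\n" + b)` also prepends a newline before the first passage, which is why the printed prompt starts its context on a new line.

```java
import java.util.List;

final class RagPromptSketch {

    // Illustrative template; the placeholder names mirror the controller's {context} and {question}.
    static final String TEMPLATE =
            "Fault code table (code:explanation):\n{context}\nUser question: {question}";

    // Joins the retrieved passages with newlines, without a leading separator.
    static String buildContext(List<String> passages) {
        return String.join("\n", passages);
    }

    // Fills the template with the joined context and the user's question.
    static String buildPrompt(List<String> passages, String question) {
        return TEMPLATE
                .replace("{context}", buildContext(passages))
                .replace("{question}", question);
    }

    public static void main(String[] args) {
        // The two passages are the ones that appear in the prompt log of this article.
        System.out.println(buildPrompt(
                List.of("A0100:用户注册错误二级宏观错误码", "A0201:用户名或密码错误"),
                "A0100"));
    }
}
```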


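II. Testing the example

1. Start the application and initialize the knowledge base

http://localhost:8080/rag/init

After this call, the vector_store collection in MongoDB holds the 25 embedded fault-code documents.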

2. RAG with the small model qwen3:0.6b

With qwen3:0.6b running, open http://localhost:8080/rag/query?msg=A0100

The prompt that is actually sent to the model is printed as follows:

Prompt{messages=[UserMessage{content='你是运维工程师,只按以下故障编码表解释,不做任何额外推理、补充或关联。若编码不在表中,只输出:无法从提供的文档中找到相关答案。输出规则:直接输出“编码 解释”,不添加任何额外文字。故障编码表(编码:解释):

A0100:用户注册错误二级宏观错误码

A0201:用户名或密码错误。 用户问题:A0100', properties={messageType=USER}, messageType=USER}], modelOptions=null}

3. RAG with the small model deepseek-r1:1.5b

With deepseek-r1:1.5b running, open http://localhost:8080/rag/query?msg=A0100

The prompt that is actually sent to the model is printed as follows:

Prompt{messages=[UserMessage{content='你是运维工程师,只按以下故障编码表解释,不做任何额外推理、补充或关联。若编码不在表中,只输出:无法从提供的文档中找到相关答案。输出规则:直接输出“编码 解释”,不添加任何额外文字。故障编码表(编码:解释):

A0100:用户注册错误二级宏观错误码

A0201:用户名或密码错误。 用户问题:A0100', properties={messageType=USER}, messageType=USER}], modelOptions=null}

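III. Key weaknesses of small local models (as observed in this example)

The qwen3:0.6b and deepseek-r1:1.5b models used here are both lightweight local models. They make a self-hosted deployment possible, but their weaknesses are pronounced and directly limit how useful the system is.

1. Very poor control over the output format (the main pain point)

The prompt strictly requires the model to output "code explanation" directly, with no extra text. In testing:

- qwen3:0.6b: for the input "A0100", the output was the literal placeholder text: 编码 解释

- deepseek-r1:1.5b: for the input "A0100", the output was: A0100 解释:用户注册错误二级宏观错误码 (an extra "解释:" label is inserted).

Root cause: these models are small (0.6B and 1.5B parameters), so they follow the semantic constraints of a prompt poorly and cannot adhere strictly to the output rules. The answer format is therefore inconsistent and hard to use directly in automated pipelines.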

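2. Unstable generation: repeated queries give different outputs

Calling the same query "A0100" several times also produced these outputs:

- qwen3:0.6b: for the input "A0100", the output was: 无法从提供的文档中找到相关答案 (even though A0100 is present in the retrieved context)

- deepseek-r1:1.5b: for the input "A0100", the output was: A0100 解释:用户注册错误二级宏观错误码

Root causes: first, the context window is small (the figure cited for qwen3:0.6b in this setup is 2k tokens) and semantic understanding is weak, so the model struggles to relate several concatenated documents to the question. Second, small models are trained on less data and have a simpler structure, so sampling randomness is hard to suppress; lowering temperature to 0.1 does not eliminate it. Note also that OllamaLLMConfig in this example sets temperature to 0.9, which in itself encourages varied output.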

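3. Speed and quality are hard to balance

- qwen3:0.6b: fast responses (1 to 2 seconds), but a high error rate;

- deepseek-r1:1.5b: somewhat more accurate, but slower (3 to 5 seconds) and memory-hungry on an ordinary PC (more than 4 GB).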

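IV. Optimization suggestions

- Vector store: use the native vector search of MongoDB Atlas (create a vector index) instead of computing similarity by hand in Java, to improve retrieval performance.

- Model:

  - Upgrade the model: use a model in the 7B to 8B class (for example qwen3:8b) to balance speed and quality.

  - Prompt: add few-shot examples to improve control over the output format.

- Pipeline: add a validation layer that checks the format of the small model's output and regenerates the answer when it breaks the rules.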
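A validation layer of the kind suggested in the last item could look like the sketch below. It is a minimal illustration under assumptions, not part of the original project: the class name `AnswerValidationSketch` and both method names are hypothetical, and the rule it enforces (the answer must be exactly "code explanation" for one of the retrieved lines, or the fixed not-found sentence from the prompt) is one possible reading of the output rule. The model call is abstracted as a `Supplier<String>` so the logic can be shown without Spring AI.

```java
import java.util.HashSet;
import java.util.List;
import java.util.Optional;
import java.util.Set;
import java.util.function.Supplier;

final class AnswerValidationSketch {

    // Fallback sentence that the prompt tells the model to use for unknown codes.
    static final String NOT_FOUND = "无法从提供的文档中找到相关答案";

    // Turns retrieved lines such as "A0100:..." into the only answers considered valid.
    static Set<String> allowedAnswers(List<String> retrievedLines) {
        Set<String> allowed = new HashSet<>();
        allowed.add(NOT_FOUND);
        for (String line : retrievedLines) {
            int sep = line.indexOf(':'); // full-width colon, as used in the knowledge base
            if (sep > 0) {
                allowed.add(line.substring(0, sep) + " " + line.substring(sep + 1));
            }
        }
        return allowed;
    }

    // Calls the model up to maxAttempts times and returns the first valid answer, if any.
    static Optional<String> generateWithRetry(Supplier<String> model, Set<String> allowed, int maxAttempts) {
        for (int attempt = 0; attempt < maxAttempts; attempt++) {
            String answer = model.get();
            if (answer != null && allowed.contains(answer.strip())) {
                return Optional.of(answer.strip());
            }
        }
        return Optional.empty(); // the caller decides how to handle repeated failures
    }
}
```

In the controller, the supplier would wrap the existing call, for example `() -> chatClient.prompt(prompt).call().content()`. Because the allowed answers are derived from the retrieved lines, both observed failures (the literal "编码 解释" and the extra "解释:" label) would be rejected and retried.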

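This example shows that building a self-hosted RAG system with Spring AI, a small local model, and MongoDB is feasible. However, the limited capability of small models and the cost of computing vector similarity by hand in Java mean that the approach only suits internal tests with small data volumes and simple scenarios; it does not meet the demands of a production environment.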