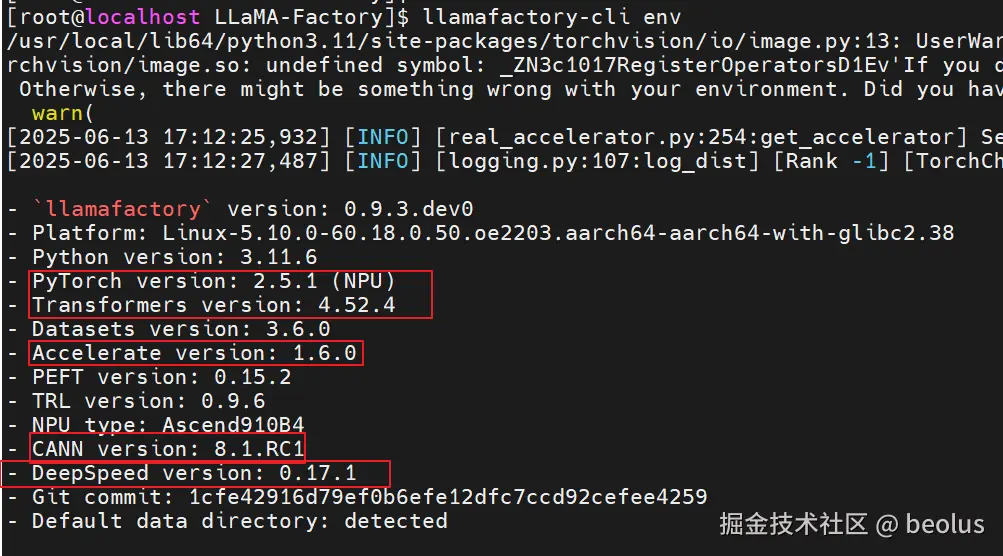

Environment setup (run inside a container that already has CANN installed)

git clone https://github.com/hiyouga/LLaMA-Factory.git

cd LLaMA-Factory

pip install -e ".[torch-npu,metrics]"

# Check the installed versions

llamafactory-cli env
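Before or after the installation steps above, it can help to confirm that the container actually exposes the expected tools. The following is a minimal sketch, not part of LLaMA-Factory: `require_cmds` is a hypothetical helper name, and the assumption that `npu-smi` is available on `PATH` depends on how the Ascend driver is mounted into the container.

```shell
# Preflight sketch: report which of the given commands are missing from PATH.
# Returns 0 when every command is found, 1 otherwise.
require_cmds() {
    local missing=0
    local cmd
    for cmd in "$@"; do
        if ! command -v "$cmd" >/dev/null 2>&1; then
            echo "missing: $cmd" >&2
            missing=1
        fi
    done
    return "$missing"
}

# Example (assumption: npu-smi is provided by the Ascend driver in this container):
# require_cmds git pip npu-smi llamafactory-cli && npu-smi info
```

If any command is reported as missing, fix the container image or the installation before moving on to the steps below.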

End-to-end walkthrough

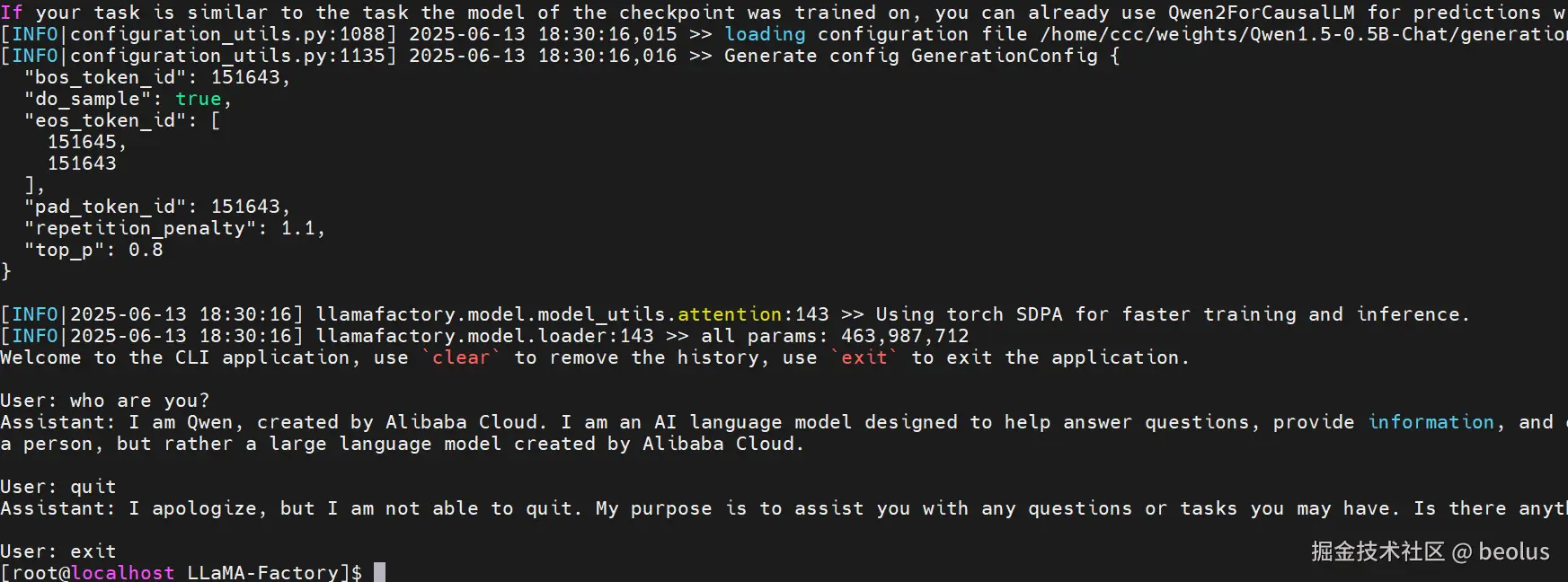

Inference with the original model

cd LLaMA-Factory

# Download the model weights beforehand, e.g. to /weights/Qwen1.5-0.5B-Chat/

ASCEND_RT_VISIBLE_DEVICES=0 llamafactory-cli chat --model_name_or_path /weights/Qwen1.5-0.5B-Chat/ --template qwen

# Once the model has loaded, you can chat with it in the terminal

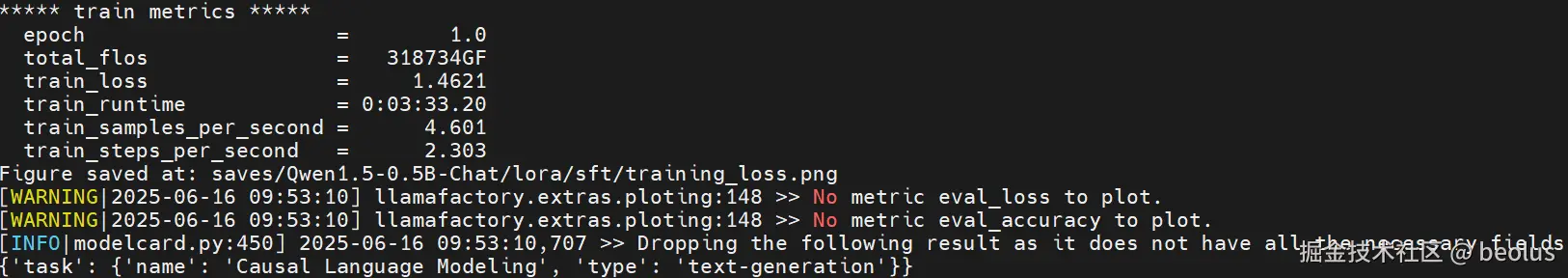
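Because the chat command depends on weights that were downloaded in advance, a quick sanity check of the weights directory can save a failed launch. The sketch below assumes the usual Hugging Face checkpoint layout (a `config.json` plus `*.safetensors` or `*.bin` weight files); `check_weights` is a hypothetical helper, not a LLaMA-Factory command.

```shell
# Sketch: confirm a local Hugging Face-style model directory looks complete.
check_weights() {
    local dir="$1"
    if [ ! -d "$dir" ]; then
        echo "not a directory: $dir" >&2
        return 1
    fi
    if [ ! -f "$dir/config.json" ]; then
        echo "no config.json in $dir" >&2
        return 1
    fi
    # Weight files are *.safetensors or *.bin depending on the checkpoint.
    # An unmatched glob stays literal, so the -f test simply fails for it.
    local f
    for f in "$dir"/*.safetensors "$dir"/*.bin; do
        if [ -f "$f" ]; then
            return 0
        fi
    done
    echo "no weight files in $dir" >&2
    return 1
}

# Example: check_weights /weights/Qwen1.5-0.5B-Chat && echo "weights look OK"
```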

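LoRA-based SFT instruction fine-tuning

Save the following configuration as qwen1_5_lora_sft_ds.yaml in the LLaMA-Factory directory: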

model_name_or_path: /weights/Qwen1.5-0.5B-Chat

stage: sft

do_train: true

finetuning_type: lora

lora_target: q_proj,v_proj

dataset: identity,alpaca_en_demo

template: qwen

cutoff_len: 1024

max_samples: 1000

overwrite_cache: true

preprocessing_num_workers: 16

output_dir: saves/Qwen1.5-0.5B-Chat/lora/sft

logging_steps: 10

save_steps: 500

plot_loss: true

overwrite_output_dir: true

per_device_train_batch_size: 1

gradient_accumulation_steps: 2

learning_rate: 0.0001

num_train_epochs: 1.0

lr_scheduler_type: cosine

warmup_ratio: 0.1

fp16: true

val_size: 0.1

per_device_eval_batch_size: 1

eval_steps: 500
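To get a feel for how the batch settings in this configuration translate into training length, the arithmetic can be sketched in shell. This is a rough estimate only: the trainer's exact rounding may differ, and the training sample count of 900 is a hypothetical figure, since the real number depends on the dataset sizes, `max_samples`, and the `val_size` split. One device is assumed because the commands here set `ASCEND_RT_VISIBLE_DEVICES=0`.

```shell
# ceil_div A B prints ceil(A / B) using integer arithmetic.
ceil_div() {
    echo $(( ($1 + $2 - 1) / $2 ))
}

# estimate_steps TRAIN_SAMPLES PER_DEVICE_BATCH GRAD_ACCUM NUM_DEVICES EPOCHS
# Effective batch = per-device batch * gradient accumulation * devices.
estimate_steps() {
    local effective_batch=$(( $2 * $3 * $4 ))
    local per_epoch
    per_epoch=$(ceil_div "$1" "$effective_batch")
    echo $(( per_epoch * $5 ))
}

# Hypothetical: 900 training samples remain after the validation split.
# per_device_train_batch_size=1, gradient_accumulation_steps=2, 1 NPU, 1 epoch.
steps=$(estimate_steps 900 1 2 1 1)
# warmup_ratio: 0.1, expressed as 10/100 to stay in integer arithmetic.
warmup=$(ceil_div $(( steps * 10 )) 100)
echo "optimizer steps: $steps, warmup steps: $warmup"
# prints "optimizer steps: 450, warmup steps: 45"
```

Under an estimate like this one, the total stays below `save_steps: 500` and `eval_steps: 500`, so intermediate checkpoints and evaluations would likely not trigger during the run; lowering those two values is worth considering if you want to see them.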

cd LLaMA-Factory

ASCEND_RT_VISIBLE_DEVICES=0 llamafactory-cli train qwen1_5_lora_sft_ds.yaml

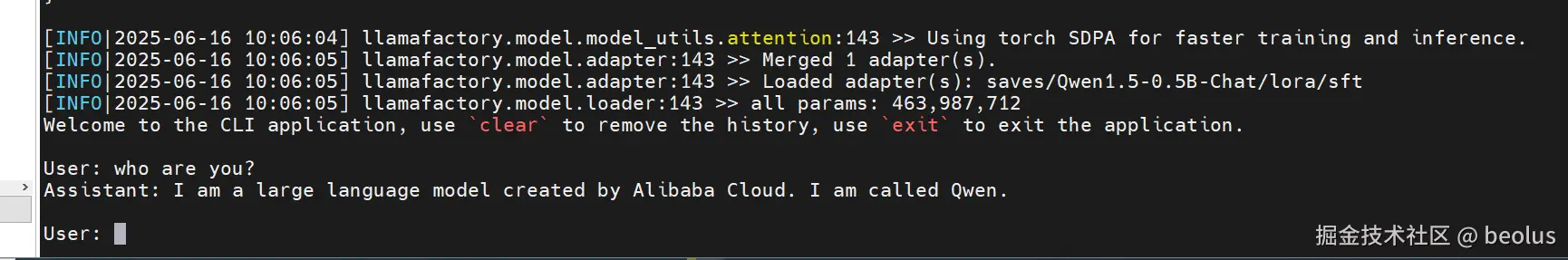
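Once training finishes, it is worth confirming that the adapter was actually written to `output_dir` before using it for inference. The sketch below assumes the PEFT file naming convention (`adapter_config.json` together with `adapter_model.safetensors` or `adapter_model.bin`); `check_adapter` is a hypothetical helper name.

```shell
# Sketch: check that a LoRA output directory contains a PEFT-style adapter.
check_adapter() {
    local dir="$1"
    if [ ! -f "$dir/adapter_config.json" ]; then
        echo "adapter_config.json not found in $dir" >&2
        return 1
    fi
    local f
    for f in "$dir/adapter_model.safetensors" "$dir/adapter_model.bin"; do
        if [ -f "$f" ]; then
            echo "adapter weights: $f"
            return 0
        fi
    done
    echo "no adapter_model.* weights in $dir" >&2
    return 1
}

# Example: check_adapter saves/Qwen1.5-0.5B-Chat/lora/sft
```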

Inference with the LoRA adapter merged dynamically

ASCEND_RT_VISIBLE_DEVICES=0 llamafactory-cli chat --model_name_or_path /weights/Qwen1.5-0.5B-Chat/ --template qwen --adapter_name_or_path saves/Qwen1.5-0.5B-Chat/lora/sft --finetuning_type lora

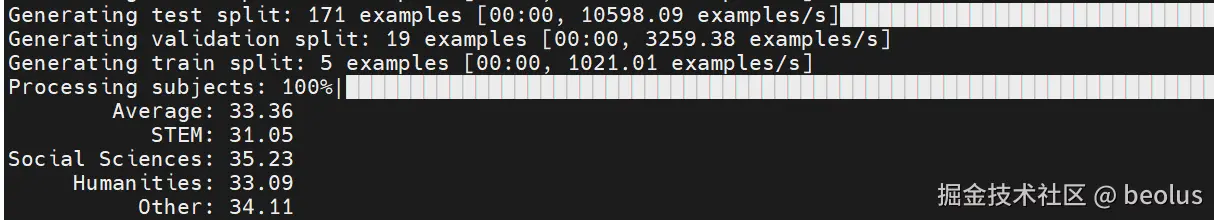

Benchmark evaluation

llamafactory-cli eval --model_name_or_path /weights/Qwen1.5-0.5B-Chat/ --template fewshot --task mmlu_test --lang en --n_shot 5 --batch_size 1 --trust_remote_code true
