mkdir /anaconda

Grant ownership of the directory:

chown -R py:py /anaconda

* Upload and run the Anaconda installer script

cd /anaconda

rz

chmod u+x Anaconda3-5.3.1-Linux-x86_64.sh

sh Anaconda3-5.3.1-Linux-x86_64.sh

+ Choose a custom install path

```

Anaconda3 will now be installed into this location:

/root/anaconda3

- Press ENTER to confirm the location

- Press CTRL-C to abort the installation

- Or specify a different location below

[/root/anaconda3] >>> /anaconda/anaconda3

```
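The interactive prompt above can also be skipped. The following is a minimal sketch, assuming the installer was uploaded to `/anaconda` as in the earlier step; the `INSTALLER` and `PREFIX` variable names are illustrative and not part of the original walkthrough. It relies on the installer's `-b` (batch mode) and `-p` (install prefix) options.

```shell
#!/usr/bin/env bash
# Sketch: run the Anaconda installer unattended instead of answering the prompts.
# INSTALLER and PREFIX are illustrative names; adjust them to your host.
INSTALLER="${INSTALLER:-/anaconda/Anaconda3-5.3.1-Linux-x86_64.sh}"
PREFIX="${PREFIX:-/anaconda/anaconda3}"

if [ ! -f "$INSTALLER" ]; then
    install_status="skipped: installer not found"
elif [ -e "$PREFIX" ]; then
    install_status="skipped: prefix already exists"
elif sh "$INSTALLER" -b -p "$PREFIX"; then
    # -b: batch mode (no prompts, license accepted); -p: install location
    install_status="installed"
else
    install_status="failed"
fi
echo "Anaconda install: $install_status"
```

Batch mode does not edit any shell profile, so the PATH still has to be set by hand.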

* Add Anaconda to the system PATH

Edit the shell profile:

vi /root/.bash_profile

Add the following line:

export PATH=/anaconda/anaconda3/bin:$PATH

Reload the profile:

source /root/.bash_profile

Verify:

python -V
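Editing the profile by hand works, but repeating the step can leave duplicate `export` lines. A minimal sketch of an idempotent alternative follows; the helper name `add_line_once` is hypothetical, and the demo writes to a temporary file rather than the real `/root/.bash_profile`.

```shell
#!/usr/bin/env bash
# Append a line to a file only if that exact line is not already present.
add_line_once() {
    local line="$1" file="$2"
    touch "$file"
    # -x: match the whole line, -F: treat the pattern as a fixed string
    grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Demo on a throwaway file; on the real host the target would be /root/.bash_profile.
profile_demo="$(mktemp)"
path_line='export PATH=/anaconda/anaconda3/bin:$PATH'
add_line_once "$path_line" "$profile_demo"
add_line_once "$path_line" "$profile_demo"   # second call changes nothing
cat "$profile_demo"
```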

* Configure pip

mkdir ~/.pip

touch ~/.pip/pip.conf

echo '[global]' >> ~/.pip/pip.conf

echo 'trusted-host=mirrors.aliyun.com' >> ~/.pip/pip.conf

echo 'index-url=mirrors.aliyun.com/pypi/simple…' >> ~/.pip/pip.conf
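Because the commands above append with `>>`, running them twice duplicates every entry in `pip.conf`. The sketch below writes the whole file in one step instead. The function name `write_pip_conf` is hypothetical, and the mirror URL and host in the demo are placeholders, not the real mirror address; substitute the full index URL of the mirror you use.

```shell
#!/usr/bin/env bash
# Write pip.conf in one shot so that re-running the step does not duplicate entries.
# usage: write_pip_conf CONF_DIR INDEX_URL TRUSTED_HOST
write_pip_conf() {
    local conf_dir="$1" index_url="$2" trusted_host="$3"
    mkdir -p "$conf_dir"                 # no error if the directory already exists
    cat > "$conf_dir/pip.conf" <<EOF
[global]
trusted-host=$trusted_host
index-url=$index_url
EOF
}

# Demo in a temporary directory with placeholder values (not a real mirror).
pip_demo_dir="$(mktemp -d)"
write_pip_conf "$pip_demo_dir" "https://mirror.example.invalid/simple/" "mirror.example.invalid"
cat "$pip_demo_dir/pip.conf"
```

On the real host the first argument would be `~/.pip`.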

The bundled pip is version 10.x, so upgrade it:

pip install PyHamcrest==1.9.0

pip install --upgrade pip

Check the pip version:

pip -V

#### 2. Install Airflow

* Install

pip install --ignore-installed PyYAML

pip install 'apache-airflow[celery]'

pip install 'apache-airflow[redis]'

pip install 'apache-airflow[mysql]'

pip install flower

pip install celery
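pip accepts several extras in one requirement, separated by commas, so the three Airflow installs can be expressed as a single requirement. The sketch below only builds and prints that command as a dry run; the variable names are illustrative.

```shell
#!/usr/bin/env bash
# Build one requirement string that carries all three extras used above.
extras="celery redis mysql"
spec="apache-airflow[$(printf '%s' "$extras" | tr ' ' ',')]"

# Print the command instead of running it. The quotes matter when it is run:
# square brackets are glob characters in most shells.
echo "pip install '$spec'"
```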

* Verify

airflow -h

ll /root/airflow

#### 3. Install Redis

* Download and build

wget download.redis.io/releases/re…

tar zxvf redis-4.0.9.tar.gz -C /opt

cd /opt/redis-4.0.9

make

* Start

cp redis.conf src/

cd src

nohup /opt/redis-4.0.9/src/redis-server redis.conf > output.log 2>&1 &

* Verify

ps -ef | grep redis
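A process listing shows that Redis was launched, not that it answers requests. A minimal sketch of a stricter check follows, assuming `redis-cli` was built next to `redis-server` in `/opt/redis-4.0.9/src` and that the server listens on the default port 6379; the `REDIS_CLI` variable name is illustrative.

```shell
#!/usr/bin/env bash
# Ask the server to answer a PING instead of only looking for its process.
REDIS_CLI="${REDIS_CLI:-/opt/redis-4.0.9/src/redis-cli}"

if [ -x "$REDIS_CLI" ]; then
    reply="$("$REDIS_CLI" -h 127.0.0.1 -p 6379 ping 2>/dev/null)"
    if [ "$reply" = "PONG" ]; then
        redis_status="up"
    else
        redis_status="down"
    fi
else
    # redis-cli is not at the assumed path, so nothing can be concluded.
    redis_status="unknown"
fi
echo "redis: $redis_status"
```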

#### 4. Configure and start Airflow

* Edit the configuration file: airflow.cfg

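[core]

#Line 18: time zone

default_timezone = Asia/Shanghai

#Line 24: executor (run mode)

#SequentialExecutor runs tasks one at a time in a single process; it is the default executor and is usually only used for testing

#LocalExecutor runs tasks in multiple processes on the local machine

#CeleryExecutor schedules tasks across distributed workers (a single machine also works) and is the common choice in production

#DaskExecutor is used for dynamic task scheduling and is common in data analysis

executor = CeleryExecutor

#Line 30: store metadata in MySQL instead of the default SQLite

sql_alchemy_conn = mysql://airflow:airflow@localhost/airflow

[webserver]

#Line 468: web UI address and port

base_url = http://localhost:8085

#Line 474

default_ui_timezone = Asia/Shanghai

#Line 480

web_server_port = 8085

[celery]

#Line 735

broker_url = redis://localhost:6379/0

#Line 736

celery_result_backend = redis://localhost:6379/0

#Line 743

result_backend = db+mysql://airflow:airflow@localhost:3306/airflow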
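After editing a file this long it is easy to change a key in the wrong section. The sketch below reads one key from one section so the edits can be spot-checked. The helper name `get_cfg` is hypothetical, and the demo runs against a small temporary file that copies a few of the values above; on the real host the file would presumably be `/root/airflow/airflow.cfg`.

```shell
#!/usr/bin/env bash
# Print the value of KEY inside [SECTION] of an INI-style file.
# usage: get_cfg FILE SECTION KEY
get_cfg() {
    awk -v section="$2" -v key="$3" '
        /^\[/ { in_section = ($0 == ("[" section "]")); next }
        in_section && /^[^#;]/ {
            pos = index($0, "=")          # split on the first "=" only
            if (pos == 0) next
            k = substr($0, 1, pos - 1)
            v = substr($0, pos + 1)
            gsub(/^[ \t]+|[ \t]+$/, "", k)
            gsub(/^[ \t]+|[ \t]+$/, "", v)
            if (k == key) { print v; exit }
        }
    ' "$1"
}

# Demo file with a few of the settings from this section.
cfg_demo="$(mktemp)"
cat > "$cfg_demo" <<'EOF'
[core]
default_timezone = Asia/Shanghai
executor = CeleryExecutor
[webserver]
web_server_port = 8085
[celery]
broker_url = redis://localhost:6379/0
EOF

get_cfg "$cfg_demo" core executor
get_cfg "$cfg_demo" webserver web_server_port
get_cfg "$cfg_demo" celery broker_url
```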

* Initialize the metadata database

+ Log in to MySQL

```

mysql -uroot -p

set global explicit_defaults_for_timestamp =1;

exit

```
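The connection strings in `airflow.cfg` refer to a MySQL database `airflow` and a user `airflow` with password `airflow`, which the steps here do not create. If they do not exist yet, something like the following sketch may be needed first. It is an assumption, not part of the original walkthrough, and it assumes MySQL 5.7 or later (for `CREATE USER IF NOT EXISTS`). The script only writes the SQL to a temporary file and prints it.

```shell
#!/usr/bin/env bash
# Generate the SQL that creates the database and account named in sql_alchemy_conn.
# Assumption: neither exists yet; the names and password mirror airflow.cfg.
sql_file="$(mktemp)"
cat > "$sql_file" <<'SQL'
CREATE DATABASE IF NOT EXISTS airflow CHARACTER SET utf8mb4 COLLATE utf8mb4_unicode_ci;
CREATE USER IF NOT EXISTS 'airflow'@'localhost' IDENTIFIED BY 'airflow';
GRANT ALL PRIVILEGES ON airflow.* TO 'airflow'@'localhost';
FLUSH PRIVILEGES;
SQL
cat "$sql_file"

# To apply it on the database host (prompts for the root password):
#   mysql -uroot -p < "$sql_file"
```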

+ Initialize

```

airflow db init

```

* Configure web access

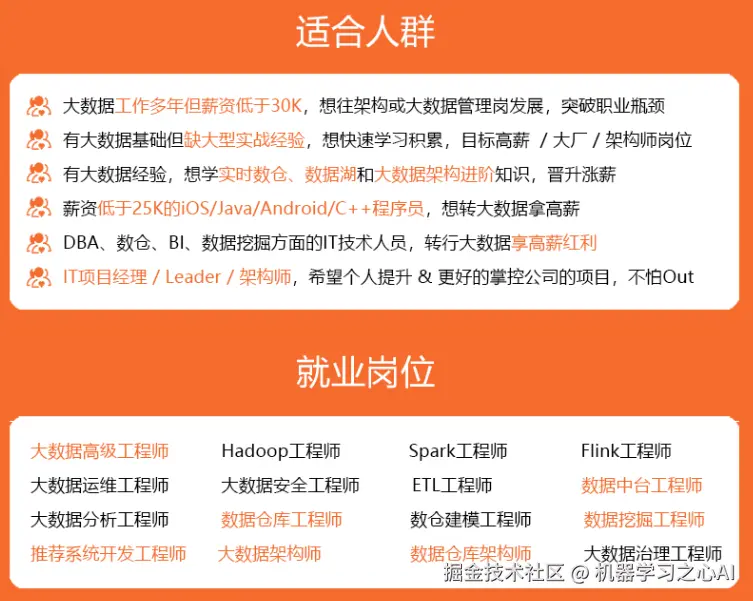

**既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上大数据知识点,真正体系化!**

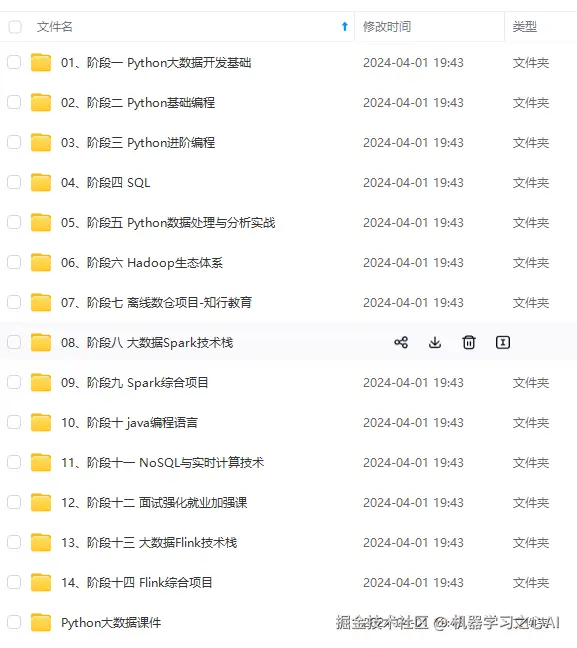

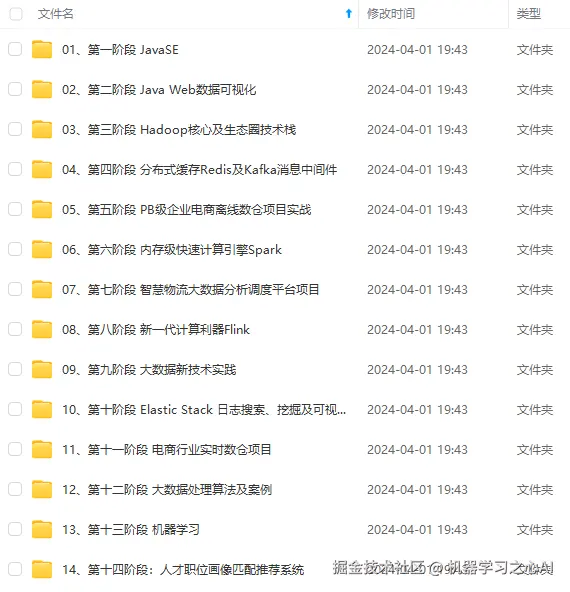

**由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新**

**[需要这份系统化资料的朋友,可以戳这里获取](https://gitee.com/vip204888)**