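Background: I'm still busy componentizing our project, and this time I own the protocol module. The protocol calculation inside it is interesting in its own right, even though it doesn't use a rule engine. This post, however, is mainly about how the cached protocol results are kept up to date. I won't go deep into the calculation here, since covering both would make the post too long. If you're interested, ask for it in the comments and I'll find time to write a follow-up on protocol calculation.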

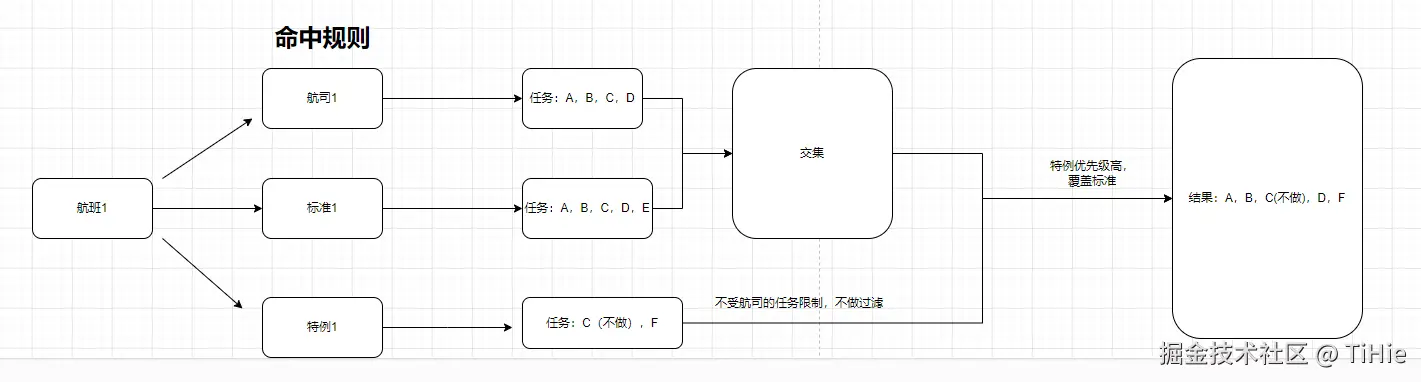

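Protocol module: the rules fall into airline rules, standard task rules, and special-case task rules. Their relationship is shown below.

You can think of the rules this way. The tasks configured in an airline rule are the services the airline has purchased from the airport. A flight of any sub-airline under that airline can only be matched to standard tasks that are also among the airline's tasks, so the result is the intersection of the two. Special-case rules handle ad hoc needs. For example, airline 3U has many sub-airlines; if sub-airline B1633 doesn't want task A performed today, you add a rule that is valid only for today, matches that sub-airline, and excludes task A. The same rule can also add task F, a task outside the airline's purchased set, to meet the airport's current operational needs.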

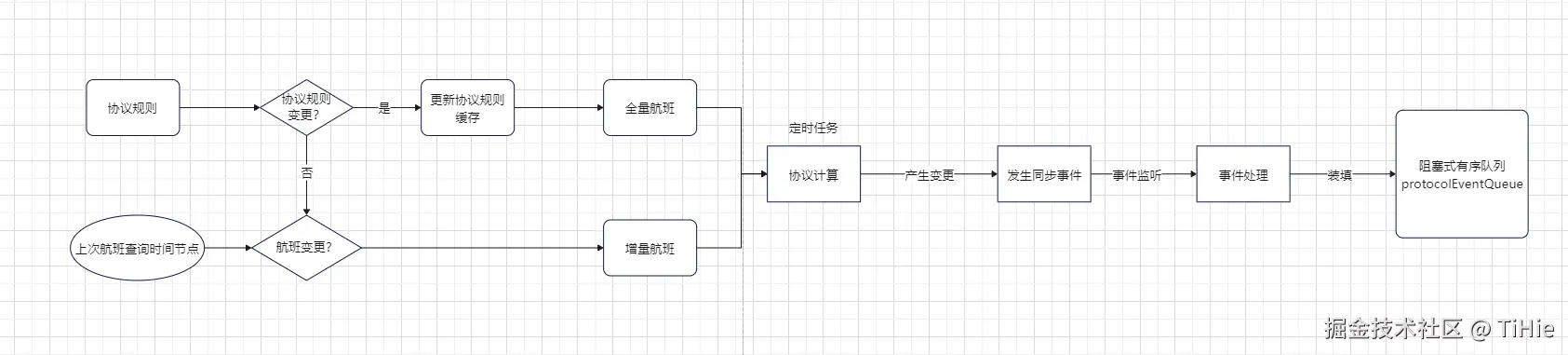
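To make the rule relationship concrete, here is a minimal sketch of how the effective task set could be derived. It is not the module's actual calculation: the class name `EffectiveTasks`, the method `resolve`, and the use of plain string task codes are all assumptions made for illustration.

```java
import java.util.LinkedHashSet;
import java.util.Set;

// Hypothetical helper, not part of the real protocol module.
final class EffectiveTasks {

    /**
     * Effective tasks = (standard tasks ∩ airline tasks) - excluded + extra.
     *
     * @param airlineTasks  tasks the airline purchased from the airport
     * @param standardTasks tasks matched by the standard task rules
     * @param excluded      tasks a special-case rule switches off
     * @param extra         tasks a special-case rule adds on top
     */
    static Set<String> resolve(Set<String> airlineTasks, Set<String> standardTasks,
                               Set<String> excluded, Set<String> extra) {
        Set<String> result = new LinkedHashSet<>(standardTasks);
        result.retainAll(airlineTasks); // only purchased services survive
        result.removeAll(excluded);     // special case: skip these today
        result.addAll(extra);           // special case: extra tasks are allowed even if not purchased
        return result;
    }
}
```

With airline tasks {A, B, C}, standard tasks {A, B, D}, an exclusion of A and an extra task F, the result is {B, F}: D is dropped because the airline never purchased it, A is dropped by the special-case rule, and F is added even though it lies outside the airline's set.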

Flowchart of the protocol calculation and cache synchronization scheme

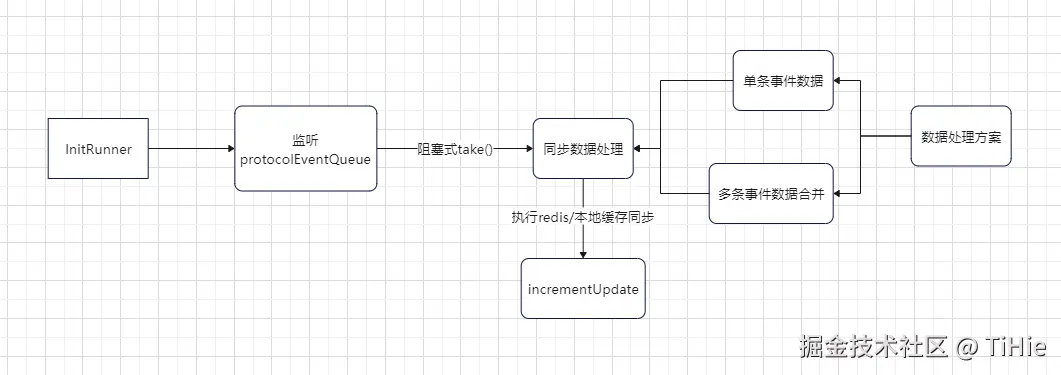

Sync information is taken from the ordered queue and applied to the cache.

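Flow walkthrough: the protocol calculation is a scheduled task declared with @Scheduled(fixedDelay = 10000). With fixedDelay, the next run starts 10 seconds after the previous run finishes, so a slow run pushes the next one back instead of overlapping with it.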
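The same "wait until the previous run has finished, then wait the delay" behavior can be observed without Spring by using the JDK's `ScheduledExecutorService.scheduleWithFixedDelay`. The sketch below is only an analogy for the `@Scheduled(fixedDelay = ...)` semantics; the class `FixedDelayDemo` and its parameters are made up for the demonstration.

```java
import java.util.concurrent.Executors;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical demo class; it only mirrors the fixed-delay semantics.
final class FixedDelayDemo {

    /**
     * Schedules a task that takes workMs with a fixed delay of delayMs,
     * observes it for observeMs, and returns the highest number of runs
     * that were ever in progress at the same time.
     */
    static int maxConcurrentRuns(long delayMs, long workMs, long observeMs) {
        AtomicInteger running = new AtomicInteger();
        AtomicInteger maxRunning = new AtomicInteger();
        ScheduledExecutorService scheduler = Executors.newSingleThreadScheduledExecutor();
        // The delay is measured from the END of one run to the START of the next.
        scheduler.scheduleWithFixedDelay(() -> {
            maxRunning.accumulateAndGet(running.incrementAndGet(), Math::max);
            try {
                Thread.sleep(workMs); // stand-in for the protocol calculation
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            } finally {
                running.decrementAndGet();
            }
        }, 0, delayMs, TimeUnit.MILLISECONDS);
        try {
            Thread.sleep(observeMs);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        } finally {
            scheduler.shutdownNow();
        }
        return maxRunning.get();
    }
}
```

Even when the work takes longer than the delay, for example `maxConcurrentRuns(10, 50, 300)`, no two runs are ever in progress together, which is the property the calculation job relies on.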

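Key points for data consistency:

- Ensure that only one producer generates data and only one consumer consumes it at any given moment. The ordered queue already controls the order in which the data is processed.

- Publish the sync event in the same transaction that writes the protocol changes to the database, so that an event is never sent for a write that failed.

- Add a fallback in the cache sync method: on any exception, clear the protocolEventQueue queue and delete both the Redis cache and the local cache, to avoid inconsistent data.

- Understand how the data changes, so that no stale (dirty) data is left behind.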
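The first and third points can be sketched with plain JDK collections. This is an in-memory stand-in under stated assumptions, not the Redisson-based code shown later: `OrderedSyncSketch`, its `publish`/`drain` methods, and the "empty id means malformed event" rule are all invented for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.LinkedBlockingQueue;

// Hypothetical in-memory model of "ordered queue + single consumer + fallback".
final class OrderedSyncSketch {
    // FIFO queue of (date, protocolId) events; stands in for the Redis queue.
    final BlockingQueue<Map.Entry<String, String>> queue = new LinkedBlockingQueue<>();
    // date -> protocol ids; stands in for the Redis and local caches.
    final Map<String, List<String>> cache = new ConcurrentHashMap<>();

    void publish(String date, String protocolId) {
        queue.add(Map.entry(date, protocolId));
    }

    /** Applies all pending events in order on the calling thread (the single consumer). */
    void drain() {
        try {
            Map.Entry<String, String> event;
            while ((event = queue.poll()) != null) {
                apply(event);
            }
        } catch (RuntimeException e) {
            // Fallback: a half-applied batch is worse than an empty cache,
            // so drop pending events and let readers reload from the database.
            queue.clear();
            cache.clear();
        }
    }

    private void apply(Map.Entry<String, String> event) {
        if (event.getValue().isEmpty()) {
            throw new IllegalStateException("malformed event"); // simulated failure
        }
        cache.computeIfAbsent(event.getKey(), k -> new ArrayList<>()).add(event.getValue());
    }
}
```

The design choice is the same trade-off as in the list above: after a failure the cache is empty rather than possibly wrong, and the next query repopulates it from the database.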

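Cache synchronization code:

Redis cache structure: Map<String, List>, where the key is a date and the value is the list of protocol data for that date.

Blocking queue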

@Component

public class ProtocolSyncService {

@Autowired

private RedissonClient redissonClient;

private RBlockingQueue<String> protocolEventQueue;

@PostConstruct

public void init() {

this.protocolEventQueue = redissonClient.getBlockingQueue(RedisConstant.REDIS_PROTOCOL_EVENT_QUEUE, JsonJacksonCodec.INSTANCE);

}

public void addToQueue(String event) {

protocolEventQueue.add(event);

}

public String take() throws InterruptedException {

return protocolEventQueue.take();

}

public BlockingQueue<String> getQueue() {

return protocolEventQueue;

}

}

Publishing the event

final List<Protocol> finalProtocols = new ArrayList<>(allProtocols);

if (CollUtil.isNotEmpty(allProtocols)) {

List<Protocol> dirtyData = updateData.stream()

.filter(f -> Objects.nonNull(f.getOldSafeguardDate()))

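.map(p -> {

// Copy first: if updateData shares instances with allProtocols, mutating p would also rewrite the entry for the new date.

Protocol stale = FuryUtils.deepCopy(p);

stale.setSafeguardDate(p.getOldSafeguardDate())

.setUpdateStatus(UpdateStatusEnum.DELETE.getCode());

return stale;

})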

.collect(Collectors.toList());

allProtocols.addAll(dirtyData);

Map<String, List<Protocol>> dateListMap = allProtocols.stream()

.collect(Collectors.groupingBy(protocol -> DateUtils.format(protocol.getSafeguardDate(), DateUtils.YYYY_MM_DD)));

log.info("Publishing protocol sync event, dates affected: {}", dateListMap.size());

applicationContext.publishEvent(new SyncProtocolEvent(this, dateListMap));

}

Event class

@ToString

@Getter

@Setter

public class SyncProtocolEvent extends ApplicationEvent implements Serializable {

private static final long serialVersionUID = 1L;

private Long uniqueId;

private Map<String, List<Protocol>> protocolMap;

public SyncProtocolEvent() {

super(new Object());

this.uniqueId = IdUtil.getSnowflakeNextId();

}

public SyncProtocolEvent(Object source) {

super(source);

this.uniqueId = IdUtil.getSnowflakeNextId();

}

public SyncProtocolEvent(Object source, Map<String, List<Protocol>> protocolMap) {

super(source);

this.protocolMap = protocolMap;

this.uniqueId = IdUtil.getSnowflakeNextId();

}

}

Event listener

@EventListener(SyncProtocolEvent.class)

public void handleSyncProtocolEvent(SyncProtocolEvent event) {

log.info("Enqueuing protocol sync event into the ordered queue: {}", event.getUniqueId());

protocolSyncService.addToQueue(JSONUtil.toJsonStr(event));

}

InitRunner (listens on the blocking ordered queue)

@Slf4j

@Component(value = "protocolDataInitRunner")

public class InitRunner implements CommandLineRunner {

@Autowired

private ProtocolService protocolService;

@Autowired

private RedissonClient redissonClient;

@Autowired

private ProtocolSyncService protocolSyncService;

private final ObjectMapper objectMapper;

private static final int BATCH_SIZE = 100;

private static final int POLL_TIMEOUT_MS = 5000;

private final ExecutorService executor = Executors.newSingleThreadExecutor();

private final Lock processBatchLock = new ReentrantLock();

public InitRunner() {

this.objectMapper = new ObjectMapper();

this.objectMapper.configure(DeserializationFeature.FAIL_ON_UNKNOWN_PROPERTIES, false);

}

@Override

public void run(String... args) throws Exception {

startProcessing();

}

public void startProcessing() {

executor.execute(() -> {

while (true) {

try {

log.info("Waiting for the next event from the queue");

String initialElement = protocolSyncService.take();

log.info("Received protocol sync data");

List<String> batchStrList = new LinkedList<>();

if (initialElement != null) {

long s1 = System.currentTimeMillis();

batchStrList.add(initialElement);

if (!batchStrList.isEmpty()) {

ObjectReader reader = objectMapper.readerFor(SyncProtocolEvent.class);

List<Map<String, List<Protocol>>> protocolMapList = batchStrList.stream()

.map(json -> {

try {

SyncProtocolEvent syncProtocolEvent = reader.readValue(json);

return syncProtocolEvent.getProtocolMap();

} catch (JsonProcessingException e) {

throw new RuntimeException("Error processing JSON", e);

}

})

.collect(Collectors.toList());

Map<String, List<Protocol>> mergedData = mergeData(protocolMapList);

RLock lock = redissonClient.getLock("processBatchLock");

if (lock.tryLock(5, TimeUnit.SECONDS)) {

try {

log.info("Acquired the distributed lock, processing data");

processBatch(mergedData);

} finally {

lock.unlock();

log.info("Released the distributed lock");

}

} else {

log.warn("Could not acquire the distributed lock, skipping this batch");

}

}

}

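} catch (InterruptedException e) {

// Restore the interrupt flag and leave the loop; otherwise take() would throw again immediately and the loop would spin.

Thread.currentThread().interrupt();

log.info("Thread interrupted while processing events", e);

return;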

} catch (Exception e) {

log.error("Error while processing event", e);

}

}

});

}

private static Map<String, List<Protocol>> mergeData(List<Map<String, List<Protocol>>> batch) {

Map<String, List<Protocol>> mergedMap = new HashMap<>();

for (Map<String, List<Protocol>> data : batch) {

for (Map.Entry<String, List<Protocol>> entry : data.entrySet()) {

String timeKey = entry.getKey();

List<Protocol> protocols = entry.getValue();

if (!mergedMap.containsKey(timeKey)) {

mergedMap.put(timeKey, new ArrayList<>(protocols));

} else {

List<Protocol> existingProtocols = mergedMap.get(timeKey);

Map<Long, Protocol> protocolMap = existingProtocols.stream()

.collect(Collectors.toMap(Protocol::getId, p -> p));

for (Protocol protocol : protocols) {

protocolMap.put(protocol.getId(), protocol);

}

mergedMap.put(timeKey, new ArrayList<>(protocolMap.values()));

}

}

}

return mergedMap;

}

private void processBatch(Map<String, List<Protocol>> mergedData) {

protocolService.incrementUpdate(mergedData);

}

}

Cache synchronization method

private Cache<String, List<Protocol>> localCache;

ExecutorService virtualExecutor = Executors.newVirtualThreadPerTaskExecutor();

@PostConstruct

public void init() {

this.localCache = Caffeine.newBuilder()

.expireAfterWrite(10, TimeUnit.MINUTES)

.maximumSize(1000)

.build();

loadCacheFromRedis();

}

public void incrementUpdate(Map<String, List<Protocol>> protocolMap) {

log.info("Received an event to sync protocol results to Redis");

long startTime = System.currentTimeMillis();

if (protocolMap == null || protocolMap.isEmpty()) {

log.info("No data to sync, returning");

return;

}

protocolMap.entrySet().parallelStream().forEach(entry -> {

String dateStr = entry.getKey();

List<Protocol> protocols = entry.getValue();

if (protocols == null || protocols.isEmpty()) {

return;

}

RLock lock = redissonClient.getLock(String.format(RedisConstant.REDIS_PROTOCOL_SYNC_KEY, dateStr));

try {

if (lock.tryLock(5, 30, TimeUnit.SECONDS)) {

RMapCache<String, List<byte[]>> protocolMapRedis = redissonClient.getMapCache(RedisConstant.REDIS_PROTOCOL_INFO_KEY, StringJavaCodec.INSTANCE);

protocolMapRedis.expire(1, TimeUnit.DAYS);

List<byte[]> currentBytes = protocolMapRedis.get(dateStr);

List<Protocol> currentProtocols = currentBytes == null ? new ArrayList<>() :

currentBytes.stream()

.map(bytes -> FuryUtils.deserialize(bytes, Protocol.class))

.collect(Collectors.toList());

List<Protocol> toAdd = new ArrayList<>();

List<Protocol> toRemove = new ArrayList<>();

Map<Long, Protocol> protocolMapById = currentProtocols.stream()

.collect(Collectors.toMap(

Protocol::getId,

protocol -> protocol,

(existingProtocol, newProtocol) -> {

if (newProtocol.getUpdateTime().after(existingProtocol.getUpdateTime())) {

toRemove.add(existingProtocol);

return newProtocol;

} else {

toRemove.add(newProtocol);

return existingProtocol;

}

}

));

protocols.forEach(protocol -> {

UpdateStatusEnum statusEnum = UpdateStatusEnum.getEnumByCode(protocol.getUpdateStatus());

if (statusEnum != null) {

switch (statusEnum) {

case ADD:

toAdd.add(protocol);

break;

case DELETE:

Protocol existing = protocolMapById.get(protocol.getId());

if (existing != null) {

toRemove.add(existing);

}

break;

case UPDATE:

Protocol existingUpdate = protocolMapById.get(protocol.getId());

if (existingUpdate != null) {

toRemove.add(existingUpdate);

}

toAdd.add(protocol);

break;

default:

break;

}

}

});

boolean hasChanges = false;

if (!toRemove.isEmpty()) {

currentProtocols.removeAll(toRemove);

hasChanges = true;

}

if (!toAdd.isEmpty()) {

currentProtocols.addAll(toAdd);

hasChanges = true;

}

if (hasChanges) {

log.info("Protocol change for {}: added ids: {}, removed ids: {}", dateStr,

toAdd.stream().map(Protocol::getId).collect(Collectors.toSet()),

toRemove.stream().map(Protocol::getId).collect(Collectors.toSet()));

List<byte[]> serializedUpdatedProtocols = currentProtocols.stream()

.map(FuryUtils::serialize)

.collect(Collectors.toList());

protocolMapRedis.put(dateStr, serializedUpdatedProtocols, 1, TimeUnit.DAYS);

localCache.put(dateStr, currentProtocols);

}

} else {

log.warn("Could not acquire the lock, skipping date: {}", dateStr);

}

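} catch (Exception e) {

// Fallback for any failure, not only interruption: drop the caches and pending events so stale data cannot survive.

if (e instanceof InterruptedException) {

Thread.currentThread().interrupt();

}

log.error("Exception while syncing protocol changes; clearing the local cache and the Redis cache", e);

localCache.invalidateAll();

RMapCache<String, List<byte[]>> protocolMapRedis = redissonClient.getMapCache(RedisConstant.REDIS_PROTOCOL_INFO_KEY, StringJavaCodec.INSTANCE);

protocolMapRedis.clear();

clearUpdateEventQueue();

throw new RuntimeException("Fatal exception while syncing protocol changes; aborting", e);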

} finally {

if (lock.isHeldByCurrentThread()) {

lock.unlock();

}

}

});

long endTime = System.currentTimeMillis();

log.info("Syncing protocol data to Redis and the local cache took {} ms", endTime - startTime);

}

Cache query

public List<Protocol> fetchProtocolForDateRange(Date startDate, Date endDate) {

long s1 = DateUtil.currentSeconds();

List<Protocol> result = new ArrayList<>();

Set<String> missingDates = new HashSet<>();

List<String> dateRange = CommonUtils.getDateRange(startDate, endDate);

List<Date> dates = new ArrayList<>();

List<CompletableFuture<Void>> futures = new ArrayList<>();

RMapCache<String, List<byte[]>> protocolMap = redissonClient.getMapCache(RedisConstant.REDIS_PROTOCOL_INFO_KEY, StringJavaCodec.INSTANCE);

for (String date : dateRange) {

CompletableFuture<Void> completableFuture = CompletableFuture.runAsync(() -> {

try {

List<Protocol> protocols = localCache.getIfPresent(date);

if (protocols == null) {

List<byte[]> protocolBytes = protocolMap.get(date);

if (protocolBytes == null || protocolBytes.isEmpty()) {

synchronized (this) {

missingDates.add(date);

}

} else {

protocols = protocolBytes.stream()

.map(bytes -> FuryUtils.deserialize(bytes, Protocol.class))

.collect(Collectors.toList());

synchronized (this) {

result.addAll(protocols);

localCache.put(date, protocols);

}

}

} else {

synchronized (this) {

result.addAll(protocols);

}

}

} catch (Exception e) {

log.error("Failed to load protocols for date {}", date, e);

}

}, virtualExecutor);

futures.add(completableFuture);

}

CompletableFuture.allOf(futures.toArray(new CompletableFuture[0])).join();

long s2 = DateUtil.currentSeconds();

log.info("Protocol query against Redis took {} s", s2 - s1);

if (!missingDates.isEmpty()) {

log.info("Querying the database and refreshing the cache");

List<Protocol> dbData = getSafeguardDataFromDB(missingDates);

result.addAll(dbData);

if (CollUtil.isNotEmpty(dbData)) {

List<Date> dbDates = dbData.stream()

.map(Protocol::getSafeguardDate)

.collect(Collectors.toList());

dates.addAll(dbDates);

for (String missingDate : missingDates) {

List<Protocol> protocolsForDate = dbData.stream()

.filter(p -> missingDate.equals(DateUtil.formatDate(p.getSafeguardDate())))

.collect(Collectors.toList());

if (!protocolsForDate.isEmpty()) {

List<byte[]> serializedProtocols = protocolsForDate.stream()

.map(FuryUtils::serialize)

.collect(Collectors.toList());

protocolMap.put(missingDate, serializedProtocols, 1, TimeUnit.DAYS);

localCache.put(missingDate, protocolsForDate);

}

}

}

}

long s3 = DateUtil.currentSeconds();

log.info("Protocol query against the database took {} s", s3 - s2);

log.info("Protocol query took {} s in total", s3 - s1);

protocolMap.expire(1, TimeUnit.DAYS);

return result;

}

private List<Protocol> getSafeguardDataFromDB(Set<String> dates) {

List<Protocol> dbProtocols = this.lambdaQuery()

.in(Protocol::getSafeguardDate, dates)

.list();

if (CollUtil.isEmpty(dbProtocols)) {

return new ArrayList<>();

}

return dbProtocols;

}

Serialization and deserialization utility built on Fury (it is very capable; have a look if you're interested)

public class FuryUtils {

private static final ThreadSafeFury fury = Fury.builder()

.withLanguage(Language.JAVA)

.withCompatibleMode(CompatibleMode.COMPATIBLE)

.requireClassRegistration(false)

.buildThreadSafeFury();

public static <T> byte[] serialize(T object) {

if (object == null) {

throw new IllegalArgumentException("The object to serialize cannot be null");

}

return fury.serialize(object);

}

public static <T> T deserialize(byte[] bytes, Class<T> clazz) {

if (bytes == null || clazz == null) {

throw new IllegalArgumentException("The byte array or class type cannot be null");

}

// Use the class token for a checked cast instead of an unchecked (T) cast.

return clazz.cast(fury.deserialize(bytes));

}

public static <T> T deepCopy(T object) {

if (object == null) {

throw new IllegalArgumentException("The object to deep copy cannot be null");

}

byte[] serializedData = serialize(object);

return deserialize(serializedData, (Class<T>) object.getClass());

}

}

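How the dirty data was found and fixed:

My Redis cache structure: Map<String, List>, where the key is a date and the value is the protocol data for that date.

A Redis entry is only updated if Redis already contains a key for the date of the changed data.

Flight dates can change. Suppose flight A was originally scheduled for servicing on 2024-09-22, so I synced it under the key 2024-09-22. On the next incremental flight query, flight A has moved to 2024-09-23. Because it crossed a day boundary, a record is inserted under 2024-09-23, but the record under 2024-09-22 is left behind as dirty data and never cleaned up. This misled me into thinking that multithreading or asynchronous processing had broken cache consistency.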
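The failure mode is easy to reproduce in isolation. The following sketch models the date-keyed cache with a plain `HashMap`; `DateShiftSketch` and its methods are hypothetical and only illustrate why an update that changes the date also needs a DELETE for the old date.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical model of a cache keyed by date string.
final class DateShiftSketch {
    // date -> ids of the protocols cached for that date
    final Map<String, List<Long>> cache = new HashMap<>();

    void upsert(String date, long id) {
        List<Long> ids = cache.computeIfAbsent(date, k -> new ArrayList<>());
        if (!ids.contains(id)) {
            ids.add(id);
        }
    }

    void delete(String date, long id) {
        List<Long> ids = cache.get(date);
        if (ids != null) {
            ids.remove(Long.valueOf(id)); // remove by value, not by index
        }
    }

    /**
     * Applies an update that may move a protocol to another date.
     * When sendTombstone is false, only the new date is touched (the buggy behavior).
     */
    void applyUpdate(long id, String oldDate, String newDate, boolean sendTombstone) {
        if (sendTombstone && oldDate != null && !oldDate.equals(newDate)) {
            delete(oldDate, id); // the extra DELETE for the old date
        }
        upsert(newDate, id);
    }
}
```

Without the tombstone, the entry under 2024-09-22 survives the move to 2024-09-23; with it, the old key is cleaned up in the same batch.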

Solution:

for (Protocol mergedProtocol : mergedList) {

Protocol dbProtocol = dbProtocolMap.get(mergedProtocol.getUniqueKey());

if (dbProtocol != null) {

if (!dbProtocol.getMd5().equals(mergedProtocol.getMd5())) {

mergedProtocol.setId(dbProtocol.getId());

mergedProtocol.setUpdateStatus(UpdateStatusEnum.UPDATE.getCode());

if (!dbProtocol.getSafeguardDate().equals(mergedProtocol.getSafeguardDate())) {

mergedProtocol.setOldSafeguardDate(dbProtocol.getSafeguardDate());

}

}

} else {

mergedProtocol.setUpdateStatus(UpdateStatusEnum.ADD.getCode());

}

}

-----------------------------------------------------------------------------------------

final List<Protocol> finalProtocols = new ArrayList<>(allProtocols);

if (CollUtil.isNotEmpty(allProtocols)) {

List<Protocol> dirtyData = updateData.stream()

.filter(f -> Objects.nonNull(f.getOldSafeguardDate()))

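.map(p -> {

// Copy first: if updateData shares instances with allProtocols, mutating p would also rewrite the entry for the new date.

Protocol stale = FuryUtils.deepCopy(p);

stale.setSafeguardDate(p.getOldSafeguardDate())

.setUpdateStatus(UpdateStatusEnum.DELETE.getCode());

return stale;

})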

.collect(Collectors.toList());

allProtocols.addAll(dirtyData);

Map<String, List<Protocol>> dateListMap = allProtocols.stream()

.collect(Collectors.groupingBy(protocol -> DateUtils.format(protocol.getSafeguardDate(), DateUtils.YYYY_MM_DD)));

log.info("Publishing protocol sync event, dates affected: {}", dateListMap.size());

applicationContext.publishEvent(new SyncProtocolEvent(this, dateListMap));

}

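Features still to implement:

Problem: Redis keeps accumulating flights for every date that has been fetched. Those dates may no longer be needed two days later, yet the data stays in Redis.

Planned approach: record the smallest date fetched during the day, and at midnight run a scheduled job that deletes the Redis entries older than that date.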
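Since this part is not implemented yet, the following is only a sketch of what the purge step might look like, using an in-memory map in place of Redis. `CachePurgeSketch` and `purgeBefore` are hypothetical names, and the sketch assumes the keys are ISO dates such as 2024-09-22.

```java
import java.time.LocalDate;
import java.util.Map;

// Hypothetical purge helper; the real job would operate on the Redis map.
final class CachePurgeSketch {

    /**
     * Removes every entry whose date key is strictly before minFetchedDate.
     *
     * @return the number of date keys removed
     */
    static <V> int purgeBefore(Map<String, V> cache, LocalDate minFetchedDate) {
        int before = cache.size();
        // Keys are assumed to be ISO-8601 dates (yyyy-MM-dd), which LocalDate.parse accepts.
        cache.keySet().removeIf(key -> LocalDate.parse(key).isBefore(minFetchedDate));
        return before - cache.size();
    }
}
```

A midnight job would call this with the smallest date fetched that day and then reset that tracked minimum for the next day.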

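Summary: I haven't yet implemented data consistency guarantees for cache synchronization with multiple producers and multiple consumers. If I get that working later, I'll add it to this post. On the topic of cache consistency, I came across an excellent article on Juejin (掘金) that I'd like to recommend here.

Author: 竹子爱熊猫

(If recommending someone else's article raises copyright concerns, message me and I'll remove it. Questions and suggestions are welcome in the comments, and if you'd like to see how the protocol rules are calculated, ask for a follow-up there too.)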