Deploying OCR as a Service with Paddle Serving (GPU)

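Environment

- Operating system: Alibaba Cloud Linux release 3 (Soaring Falcon)

- Kernel: Linux iZwz9fcjgtw3vkobxamrhpZ 5.10.134-14.al8.x86_64 #1 SMP Thu Apr 27 16:46:29 CST 2023 x86_64 x86_64 x86_64 GNU/Linux

- CPU: Intel(R) Xeon(R) Platinum 8163 CPU @ 2.50GHz, 8 cores / 16 threads

- GPU: NVIDIA Corporation TU104GL [Tesla T4] (rev a1), 16 GB of GPU memory

- Memory: 30 GB

- Disk: 128 GB

Software versions

- Docker: 24.0.4

- CUDA: 11.4

- PaddleOCR: 2.6

Steps

Preparing the Docker environment

- On Alibaba Cloud, select the option to install the NVIDIA driver automatically when creating the GPU instance. Once the host is up, confirm the driver and check the installed cuDNN version: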

nvidia-smi

cd /usr/local/cuda/include/

cat cudnn_version.h
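The header printed by the last command spreads the cuDNN version over several macros. As a small sketch (not part of the original setup), the following Python helper pulls them into one version string. The sample header text is illustrative and its version numbers are made up; on the host you would pass in the real contents of /usr/local/cuda/include/cudnn_version.h.

```python
import re


def parse_cudnn_version(header_text: str) -> str:
    """Return 'MAJOR.MINOR.PATCHLEVEL' from the contents of cudnn_version.h."""
    parts = []
    for name in ("CUDNN_MAJOR", "CUDNN_MINOR", "CUDNN_PATCHLEVEL"):
        # Match lines such as "#define CUDNN_MAJOR 8".
        match = re.search(rf"^\s*#define\s+{name}\s+(\d+)", header_text, re.MULTILINE)
        if match is None:
            raise ValueError(f"{name} not found in header")
        parts.append(match.group(1))
    return ".".join(parts)


# Illustrative sample only; the numbers are not taken from the host in this post.
sample_header = """
#define CUDNN_MAJOR 8
#define CUDNN_MINOR 2
#define CUDNN_PATCHLEVEL 1
#define CUDNN_VERSION (CUDNN_MAJOR * 1000 + CUDNN_MINOR * 100 + CUDNN_PATCHLEVEL)
"""

print(parse_cudnn_version(sample_header))
```

Knowing the major and minor numbers makes it easier to pick a matching image tag later on.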

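- Install Docker 19.03 or later. From that version on, docker run accepts the --gpus flag directly, so the separate nvidia-docker wrapper command is no longer needed. Containers may still fail to start with a GPU after installation; if that happens, see the troubleshooting notes at the end of this post.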

Deployment steps

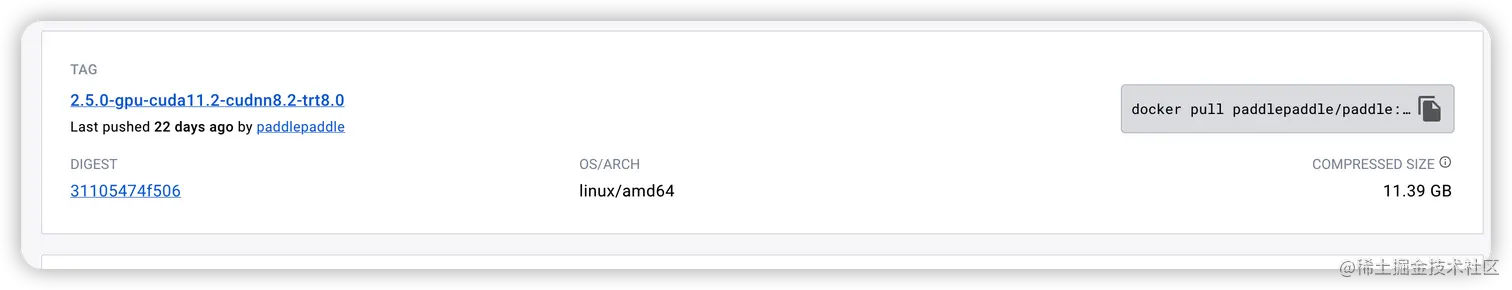

- Download the official image (hub.docker.com/r/paddlepad…)

- Choose the image tag that matches the software versions installed on the host

nohup docker pull paddlepaddle/paddle:2.5.0-gpu-cuda11.2-cudnn8.2-trt8.0
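To make the "matching versions" step concrete, here is a hedged Python sketch that splits the tag used above into its components and compares the image's CUDA version with the host's. Two assumptions are baked in: the tag layout is inferred only from the single tag shown in this post, and the compatibility check (the image's CUDA version should not be newer than the CUDA version reported by nvidia-smi) is a rule of thumb rather than an official guarantee.

```python
import re

# Assumed tag layout: <paddle>-gpu-cuda<X.Y>-cudnn<X.Y>-trt<X.Y>
TAG_PATTERN = re.compile(
    r"^(?P<paddle>\d+(?:\.\d+)*)-gpu"
    r"-cuda(?P<cuda>\d+\.\d+)"
    r"-cudnn(?P<cudnn>\d+(?:\.\d+)?)"
    r"-trt(?P<trt>\d+(?:\.\d+)?)$"
)


def parse_tag(tag: str) -> dict:
    """Split an image tag into paddle / cuda / cudnn / trt version strings."""
    match = TAG_PATTERN.match(tag)
    if match is None:
        raise ValueError(f"unexpected tag format: {tag}")
    return match.groupdict()


def version_tuple(version: str) -> tuple:
    """Turn '11.2' into (11, 2) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


def host_can_run(image_cuda: str, host_cuda: str) -> bool:
    """Rule of thumb (assumption): the image's CUDA must not exceed the host's."""
    return version_tuple(image_cuda) <= version_tuple(host_cuda)


info = parse_tag("2.5.0-gpu-cuda11.2-cudnn8.2-trt8.0")
print(info)
print(host_can_run(info["cuda"], "11.4"))  # host CUDA 11.4, as listed under software versions
```

With the host's CUDA 11.4 and the image's CUDA 11.2, the check passes, which is consistent with the tag chosen in this post.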

- Build the image from the Dockerfile below: docker build -t paddleocr-pdserving-gpu:v1 .

FROM paddlepaddle/paddle:2.5.0-gpu-cuda11.2-cudnn8.2-trt8.0

LABEL authors="ywxiang"

LABEL version="1.0"

LABEL description="PaddleOCR pdserving GPU version"

# Clone from the Gitee mirror

ENV REPO_LINK=https://gitee.com/paddlepaddle/PaddleOCR.git

# Model archives (detection and recognition)

ENV orc_detect_model=https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_det_infer.tar

ENV orc_recognition_model=https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_rec_infer.tar

# Serving server package, used to start the service

# cuda11.2-cudnn8-TensorRT8

ENV paddle_serving=https://paddle-serving.bj.bcebos.com/test-dev/whl/paddle_serving_server_gpu-0.8.3.post112-py3-none-any.whl

# Client package, used to send requests to the service

ENV paddle_serving_client=https://paddle-serving.bj.bcebos.com/test-dev/whl/paddle_serving_client-0.8.3-cp37-none-any.whl

# serving-app package

ENV paddle_serving_app=https://paddle-serving.bj.bcebos.com/test-dev/whl/paddle_serving_app-0.8.3-py3-none-any.whl

WORKDIR /paddle

RUN pip3 config set global.index-url https://mirror.baidu.com/pypi/simple \

&& pip3 install --upgrade pip \

&& git clone $REPO_LINK \

&& pip3 install -r ./PaddleOCR/requirements.txt

WORKDIR PaddleOCR/deploy/pdserving

RUN pip3 install $paddle_serving \

&& pip3 install $paddle_serving_client \

&& pip3 install $paddle_serving_app

ADD $orc_detect_model .

ADD $orc_recognition_model .

RUN for f in *.tar; do tar xf "$f"; done;rm -fr *.tar \

&& python3 -m paddle_serving_client.convert --dirname ./ch_PP-OCRv3_det_infer/ \

--model_filename inference.pdmodel \

--params_filename inference.pdiparams \

--serving_server ./ppocr_det_v3_serving/ \

--serving_client ./ppocr_det_v3_client/ \

&& python3 -m paddle_serving_client.convert --dirname ./ch_PP-OCRv3_rec_infer/ \

--model_filename inference.pdmodel \

--params_filename inference.pdiparams \

--serving_server ./ppocr_rec_v3_serving/ \

--serving_client ./ppocr_rec_v3_client/

EXPOSE 9998

ENTRYPOINT ["/bin/bash","-c","python3 web_service.py --config=config.yml >log.txt"]
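The two model conversion commands in the Dockerfile differ only in their directory names. As an illustration (not how the original image is built), this Python sketch generates both argument lists from one helper, using only the flags that appear in the Dockerfile. The helper name convert_command and the MODELS mapping are hypothetical.

```python
import shlex


def convert_command(infer_dir: str, server_dir: str, client_dir: str) -> list:
    """Build the argv for paddle_serving_client.convert, mirroring the Dockerfile."""
    return [
        "python3", "-m", "paddle_serving_client.convert",
        "--dirname", infer_dir,
        "--model_filename", "inference.pdmodel",
        "--params_filename", "inference.pdiparams",
        "--serving_server", server_dir,
        "--serving_client", client_dir,
    ]


# Hypothetical mapping: model kind -> extracted inference directory name.
MODELS = {
    "det": "ch_PP-OCRv3_det_infer",
    "rec": "ch_PP-OCRv3_rec_infer",
}

commands = {
    kind: convert_command(
        f"./{name}/",
        f"./ppocr_{kind}_v3_serving/",
        f"./ppocr_{kind}_v3_client/",
    )
    for kind, name in MODELS.items()
}

for cmd in commands.values():
    # Print each command in a form that can be pasted into a shell.
    print(shlex.join(cmd))
```

Each list can be handed to subprocess.run(cmd, check=True) if you prefer to convert the models from a script instead of a RUN instruction.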

- Start a container from the image

docker run -it -d --shm-size=64G --network=host --runtime=nvidia --gpus all --name ppocr paddleocr-pdserving-gpu:v1 /bin/bash
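Once the container is running, the service can be exercised with a small client. The sketch below rests on assumptions that the post itself does not state: the endpoint path /ocr/prediction and the {"key": ["image"], "value": [...]} payload are modeled on the sample HTTP client shipped in PaddleOCR's deploy/pdserving directory, the port is the 9998 exposed in the Dockerfile, and config.yml is left at its defaults. Adjust all of these if your configuration differs.

```python
import base64
import json
import urllib.request

# Assumption: default pipeline endpoint, reached via --network=host on port 9998.
SERVICE_URL = "http://127.0.0.1:9998/ocr/prediction"


def build_payload(image_bytes: bytes) -> dict:
    """Wrap raw image bytes in the key/value JSON structure (assumed format)."""
    encoded = base64.b64encode(image_bytes).decode("utf8")
    return {"key": ["image"], "value": [encoded]}


def recognize(image_bytes: bytes, url: str = SERVICE_URL, timeout: float = 30.0) -> dict:
    """POST one image to the service and return the decoded JSON response."""
    body = json.dumps(build_payload(image_bytes)).encode("utf8")
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf8"))


# Offline demonstration of the payload only; no request is sent here.
payload = build_payload(b"placeholder image bytes")
print(json.dumps(payload))

# Against a running service (the image path is hypothetical):
# with open("test.jpg", "rb") as f:
#     print(recognize(f.read()))
```

Only the standard library is used, so the client runs on any machine that can reach the host.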

Problems

- Running docker with --gpus fails with: docker: Error response from daemon: could not select device driver "" with capabilities: [[gpu]].

Solution (www.hangge.com/blog/cache/…):

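- Run the following steps. Add the NVIDIA repository first so that yum can resolve the package, then install it:

curl -s -L https://nvidia.github.io/nvidia-container-runtime/centos7/nvidia-container-runtime.repo | \

sudo tee /etc/yum.repos.d/nvidia-container-runtime.repo

yum install -y nvidia-docker2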

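Register the nvidia runtime in /etc/docker/daemon.json with the following content, then restart the Docker daemon (for example with systemctl restart docker) so the change takes effect:

{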

"registry-mirrors": ["https://registry.docker-cn.com"],

"experimental": true,

"runtimes": {

"nvidia": {

"path": "/usr/bin/nvidia-container-runtime",

"runtimeArgs": []

}

}

}
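If the host already has a daemon.json with other settings, overwriting the file would lose them. As a minimal sketch, assuming the runtime binary lives at the path shown above, this Python helper merges the nvidia runtime entry into an existing configuration. The function name add_nvidia_runtime is hypothetical, and the example works on an in-memory dictionary rather than touching /etc/docker/daemon.json.

```python
import copy
import json

# Runtime entry copied from the configuration shown above.
NVIDIA_RUNTIME = {
    "path": "/usr/bin/nvidia-container-runtime",
    "runtimeArgs": [],
}


def add_nvidia_runtime(config: dict) -> dict:
    """Return a copy of config with the nvidia runtime registered.

    Existing keys, including other runtimes, are preserved.
    """
    merged = copy.deepcopy(config)
    merged.setdefault("runtimes", {})["nvidia"] = copy.deepcopy(NVIDIA_RUNTIME)
    return merged


# Example: a configuration that so far only sets a mirror and the experimental flag.
existing = {
    "registry-mirrors": ["https://registry.docker-cn.com"],
    "experimental": True,
}

updated = add_nvidia_runtime(existing)
print(json.dumps(updated, indent=2))
```

To apply it for real, load the current file with json.load, write the result back with json.dump, and restart Docker afterwards.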

Finally, confirm that a container can see the GPU:

docker run -it --rm --gpus all centos nvidia-smi