In the previous post, we looked into the most commonly used cache-aside architecture, and some necessary design details to ensure eventual consistency between the cache layer and the ground-truth DB. We also pointed out that even with all the design details done right, it is still possible for stale reads and data inconsistency to happen in some situations. Fallback strategies are usually used to compensate when these rare situations do happen.

Fallback strategies

If the DB update in a write request succeeds but the cache invalidation fails, the cache entry becomes stale. This can happen not only in the cache-aside architecture, but also in the delayed-delete design. Let’s take a look at several commonly used fallback strategies that bring the cache system back to a consistent state.

Retry cache invalidation

The idea is simple: if something fails in the world of distributed systems, we retry it, since the failure may be transient and the next attempt may well succeed. There are two ways to do the retry:

- synchronous retry: we retry in the write critical path. If the initial cache invalidation does not return a success message, the client immediately retries 2–3 times. The advantage is that the implementation is straightforward and no new dependencies are introduced. The tradeoff is that the client does more work and the write request may take longer to complete, so the write throughput the system can handle is lower.

- asynchronous retry: a message queue is usually used here to decouple the client from the cache system. If the cache invalidation does not return a success message, the client simply writes the failed key to a message queue. A consumer service then takes over, reading the failed keys from the queue and retrying the cache invalidations. The advantage is that the client does not spend CPU/memory resources on retries, so the max throughput the system can handle is largely unaffected. The disadvantage is that new dependencies are introduced: the message queue itself, plus the consumer service that reads the failed keys and sends invalidation requests to the cache.
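The two retry styles can be combined, as in the minimal sketch below. It is only an illustration under stated assumptions: `FlakyCache` is a made-up stand-in for a real cache client, an in-process `queue.Queue` plays the role of the message queue, and the function names and the linear backoff are hypothetical choices, not part of any particular library.

```python
import queue
import time


class FlakyCache:
    """Hypothetical cache client that fails its first `failures` delete calls."""

    def __init__(self, failures):
        self.failures = failures
        self.data = {}

    def delete(self, key):
        if self.failures > 0:
            self.failures -= 1
            raise ConnectionError("cache unreachable")
        self.data.pop(key, None)


def invalidate_with_retry(cache, key, retry_queue, max_attempts=3, backoff_s=0.0):
    """Synchronous retry in the write path; on repeated failure, enqueue the key."""
    for attempt in range(max_attempts):
        try:
            cache.delete(key)
            return True
        except ConnectionError:
            time.sleep(backoff_s * (attempt + 1))  # simple linear backoff
    retry_queue.put(key)  # hand the key off to the asynchronous path
    return False


def drain_retry_queue(cache, retry_queue):
    """Consumer side: retry each failed key once, re-enqueue keys that still fail."""
    still_failing = []
    while not retry_queue.empty():
        key = retry_queue.get()
        try:
            cache.delete(key)
        except ConnectionError:
            still_failing.append(key)
    for key in still_failing:
        retry_queue.put(key)
```

In a real deployment the queue would be an external broker and `drain_retry_queue` would run in a separate consumer service, so that retries survive a crash of the writing client.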

Consume DB change log to invalidate cache

In the retry mechanisms above, the client coordinates the fallback tasks that compensate for a failed cache invalidation, so the client is essentially a single point of failure. If the client dies in the middle of executing the retry strategy, the system can still become inconsistent.

As we know, most relational DBs come with transaction or replication logs, such as the binlog in MySQL, and the change log table in F1 (which represents log records in a table and allows you to query change logs using SQL). Essentially, every DB data change is recorded in the change log. We can use a Change Data Capture (CDC) component to consume the change log and invalidate cache entries in the background. In this elegant solution, the client does not need to worry about cache invalidation any more.

Open source solutions

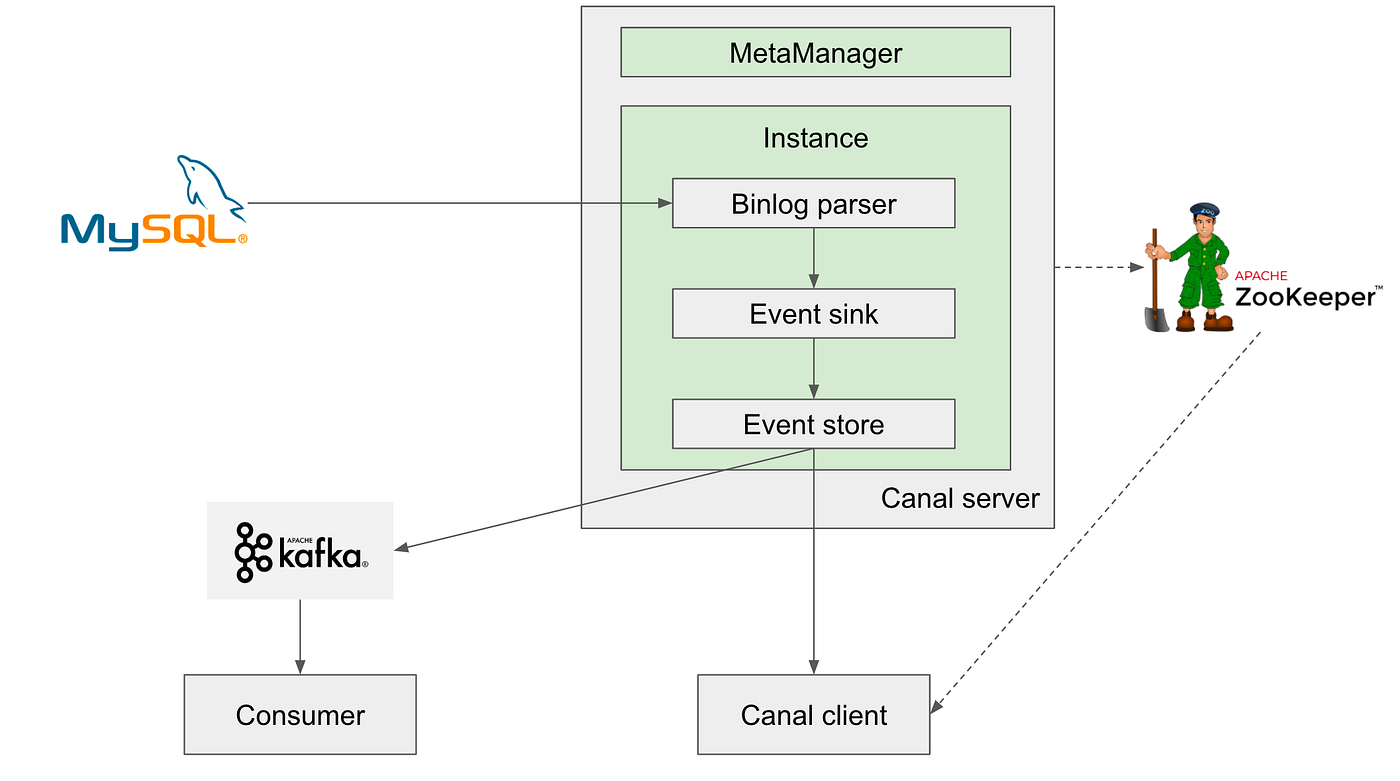

MySQL does not provide a CDC component out of the box, but there are many open-source solutions, for example Databus, Maxwell, and Canal. Let’s take a brief look at the design of Canal, and how it achieves HA (high availability) as a shared infra component that supports use cases from many product teams.

- The Canal server uses the MySQL leader-follower replication protocol. To the MySQL leader (write replica), the Canal server appears as a normal MySQL follower instance (read replica). Essentially, the Canal server sends a dump request to the MySQL leader instance. Once the leader accepts the dump request, it starts to push the binary log to the follower, which in this case is the Canal server. The binlog is a low-level raw byte stream, so the Canal server parses it the way a real follower would.

- The Canal client then consumes the interpreted change logs from the Canal server. Alternatively, the Canal server can send the interpreted change logs to a message queue such as Kafka or RocketMQ, and other custom-built consumers can then consume from the message queue.

Further, Canal uses ZooKeeper as a distributed lock service to achieve HA. The HA design consists of two rules:

- To reduce the load from dump requests on the MySQL leader instance, only one Canal server instance out of a cluster is in active state (actively sending dump requests), while other instances in the cluster are in standby state (running as hot backup).

- To guarantee ordered change log consumption, only one Canal client can consume from a Canal server instance at a time.

With this CDC-based system, cache delete operations can be implemented in the Canal client or in custom-built consumers. In a real production deployment, the cache client handles normal reads and writes as discussed in "Design for cache consistency" without fallback strategies, and this CDC system continuously runs in the background, fixing any inconsistent cache entries.
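To make the background invalidation concrete, here is a minimal sketch of what a change-log consumer could do with each batch of change events. The event shape (`table`, `type`, `pk`), the `<table>:<pk>` key scheme, and the function names are all assumptions made for illustration; they are not Canal's actual message format or client API.

```python
ROW_EVENTS = ("INSERT", "UPDATE", "DELETE")


def cache_key(table, pk):
    """Hypothetical key scheme: '<table>:<primary key>'."""
    return f"{table}:{pk}"


def apply_change_events(events, delete_key):
    """Invalidate the cache entry of every row touched by a change event.

    `delete_key` is any callable that deletes one key from the cache.
    Returns the keys whose invalidation failed, so the caller can retry
    them before advancing its position in the change log.
    """
    failed = []
    for event in events:
        if event["type"] not in ROW_EVENTS:
            continue  # skip schema changes and other non-row events
        key = cache_key(event["table"], event["pk"])
        try:
            delete_key(key)
        except ConnectionError:
            failed.append(key)
    return failed
```

Returning the failed keys rather than dropping them matters here: the consumer should only acknowledge a batch once every invalidation in it has gone through, otherwise a stale entry could slip past the fallback as well.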

One thing to call out: considering the inevitable replication lag in many leader-follower MySQL deployments, the CDC component should consume the binlog from a follower instance to make the fallback strategy more reliable. Otherwise, imagine this situation (which can certainly happen in real prod environments): the leader instance has a newer value, the CDC component consumes the binlog from the leader and invalidates the cache entry successfully. At this point, however, other MySQL follower instances may not yet be in sync with the leader due to replication lag. If a client then reads a stale value from a follower instance and backfills the cache entry with it, the cache becomes inconsistent again! And this time, there is no fallback CDC component to fix it.
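The race described above can be replayed with a toy timeline. This is only a sketch of one particular interleaving: plain dicts stand in for the leader, a single follower, and the cache, and the function name `simulate` is made up for this example.

```python
def simulate(change_log_source):
    """Replay one write and return the value left in the cache.

    `change_log_source` is "leader" or "follower": the instance whose
    change log drives the background cache invalidation.
    """
    leader = {"k": "v1"}
    follower = {"k": "v1"}
    cache = {"k": "v1"}

    def read(key):
        # Cache-aside read that goes to the follower on a cache miss.
        if key not in cache:
            cache[key] = follower[key]
        return cache[key]

    leader["k"] = "v2"  # the write lands on the leader first

    if change_log_source == "leader":
        cache.pop("k", None)   # invalidation fires before replication
        read("k")              # a reader backfills from the lagging follower
        follower["k"] = "v2"   # the follower catches up too late
    else:
        follower["k"] = "v2"   # the follower applies the change first...
        cache.pop("k", None)   # ...and only then is the cache invalidated
        read("k")              # the backfill now sees the new value

    return cache["k"]
```

With the leader as the source the cache ends up holding `v1` even though every DB instance holds `v2`; with the follower as the source it ends up holding `v2`. With several followers at different lag, the same argument applies to whichever follower serves the reads.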

Public cloud solutions

Besides open-source solutions from the community, many cloud-native solutions are also provided on public clouds such as AWS and GCP. Such solutions allow cloud users to directly consume change log messages, without the need to deploy and maintain separate on-premise CDC components.

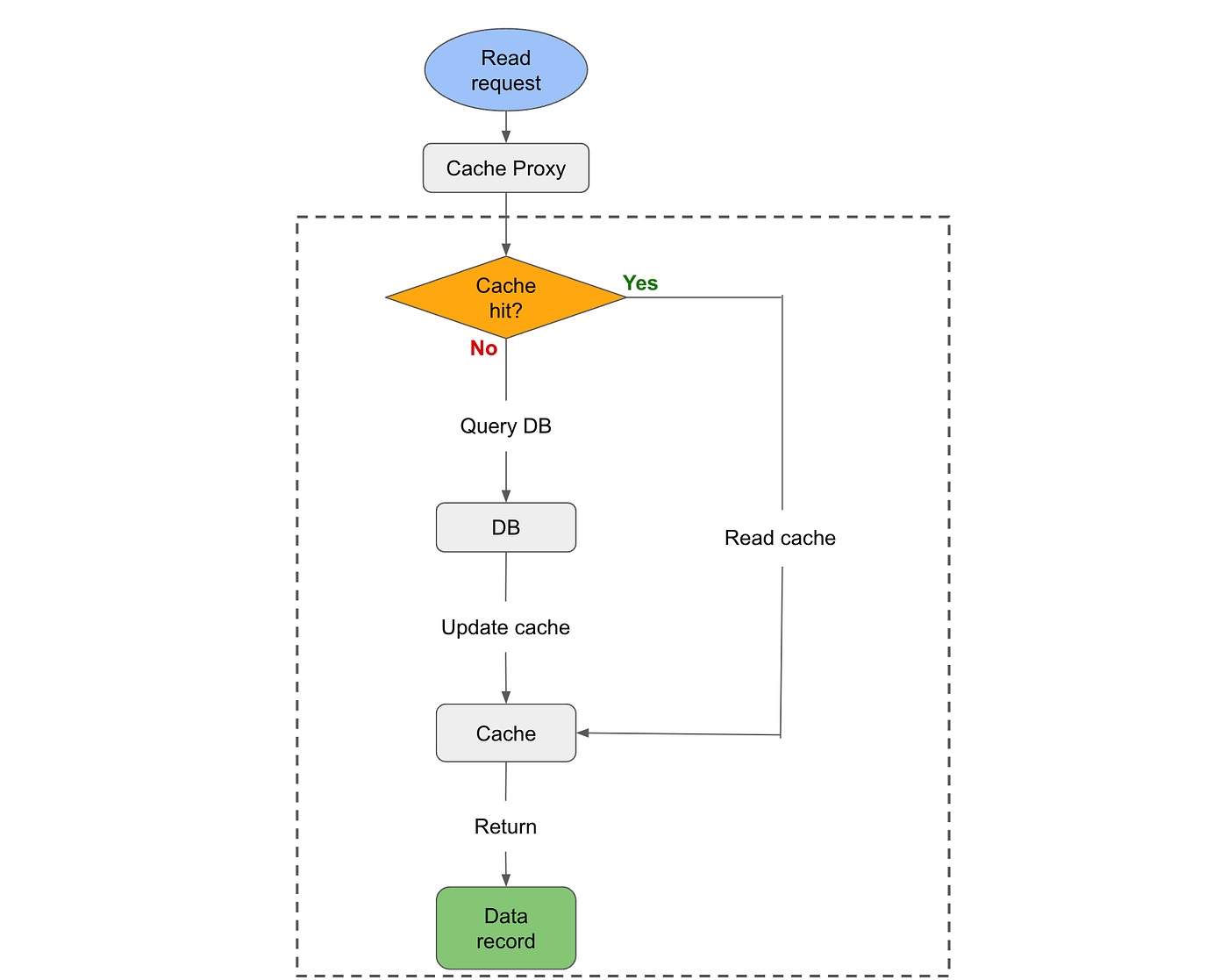

Read-through

Read-through is very similar to cache-aside, and in some cases the two terms are used interchangeably, especially when describing the strategy used in the read path. Strictly speaking, however, the key difference is that a read-through cache has a proxy layer. The proxy handles all the read and write strategies, as well as communication with the cache layer and the DB layer. The client only needs to interact with the proxy, rather than directly with both the cache and the DB as in cache-aside.

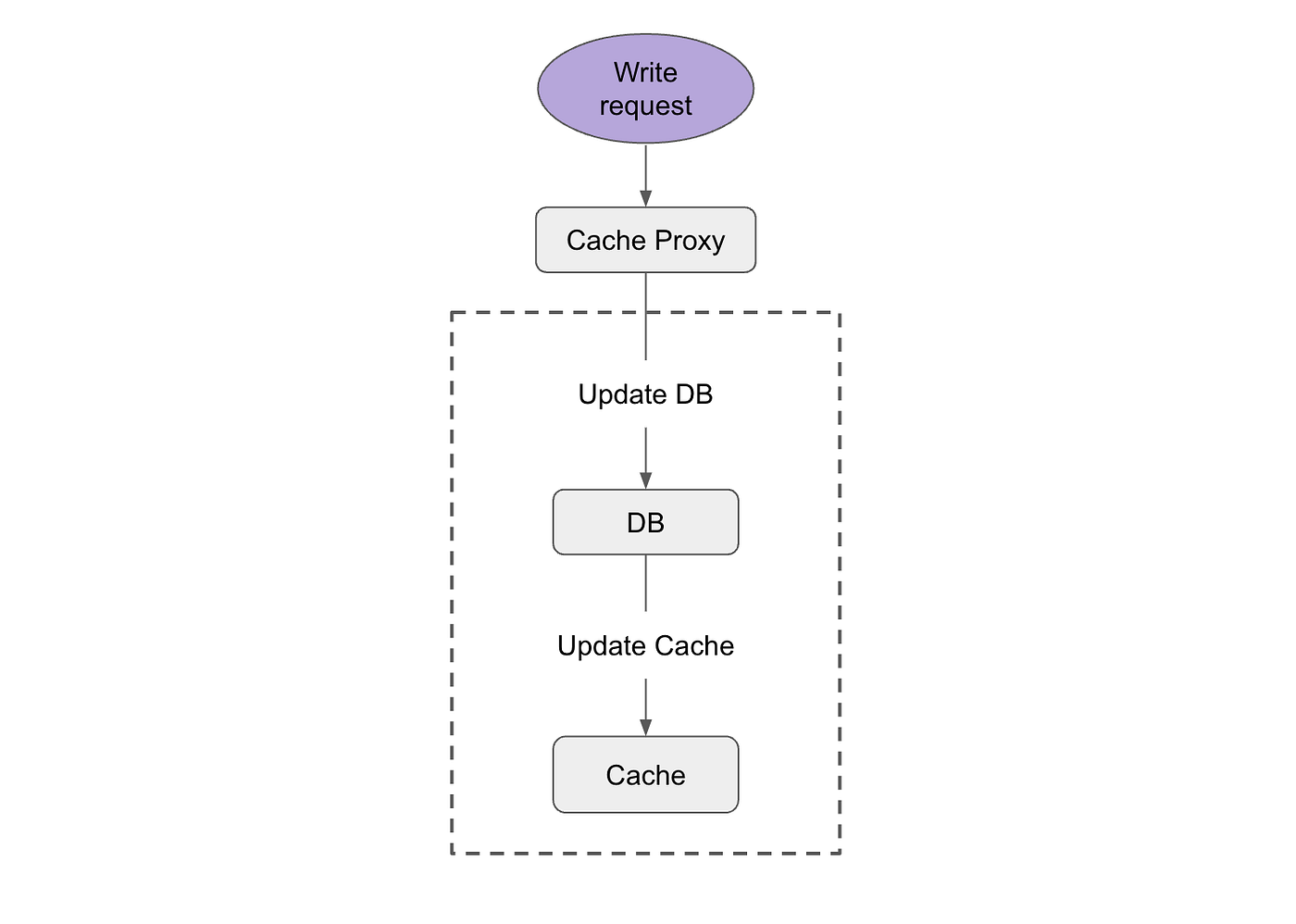
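As a rough illustration of the proxy idea, the sketch below hides both the cache and the DB behind one object. The class name, the `db_reads` counter, and the use of plain dicts for the cache and the DB are assumptions made for this example; the write path simply reuses the invalidate-after-update rule from cache-aside.

```python
class ReadThroughProxy:
    """Clients talk only to this proxy, never to the cache or the DB directly."""

    def __init__(self, db):
        self._db = db      # a dict standing in for the ground-truth DB
        self._cache = {}   # a dict standing in for the cache layer
        self.db_reads = 0  # counter that makes cache hits observable

    def get(self, key):
        if key in self._cache:
            return self._cache[key]      # cache hit
        self.db_reads += 1
        value = self._db.get(key)        # cache miss: load from the DB
        if value is not None:
            self._cache[key] = value     # backfill on behalf of the client
        return value

    def put(self, key, value):
        self._db[key] = value            # update the DB first
        self._cache.pop(key, None)       # then invalidate the cache entry
```

The client code shrinks to `proxy.get(key)` and `proxy.put(key, value)`, and any change to the caching strategy stays inside the proxy.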

Write-through

The write-through strategy means that a write request first updates the DB, then updates the cache. Recall that classical cache-aside invalidates the cache entry, rather than updating it, once the DB update succeeds. What are the trade-offs here? And how do we mitigate the stale read issues we pointed out earlier when the cache is under high concurrent load?

The advantage of write-through is that the read path becomes very simple: read from the cache on a cache hit, and read from the DB on a cache miss. The read path no longer refreshes the cache entry with DB data. The disadvantage is that the cache is now very susceptible to data staleness. Besides the high-concurrency case mentioned above, cache-DB inconsistency can also be introduced if either operation (the DB update or the cache update) fails.

To mitigate the possible cache staleness issue, the two operations in the write path — DB update and cache update — should be atomic. Distributed locks are usually used for such purposes.
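The sketch below shows the shape of such a locked write path. It makes several simplifying assumptions: a `threading.Lock` stands in for the distributed lock, one global lock is used instead of a lock per key, and dicts stand in for the DB and the cache. Note also that the lock only serializes concurrent writers; it does not roll back the DB update if the cache update fails.

```python
import threading


class WriteThroughStore:
    """Write path updates the DB and the cache under one lock."""

    def __init__(self):
        self.db = {}
        self.cache = {}
        # Stand-in for a distributed lock; a real system would typically
        # lock per key and across processes.
        self._lock = threading.Lock()

    def write(self, key, value):
        with self._lock:
            self.db[key] = value     # 1. update the DB
            self.cache[key] = value  # 2. update the cache with the same value

    def read(self, key):
        # The read path never refreshes the cache in this design.
        if key in self.cache:
            return self.cache[key]
        return self.db.get(key)
```

Without the lock, two writers could interleave as "A updates DB, B updates DB, B updates cache, A updates cache", leaving B's value in the DB and A's value in the cache.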

A write-through cache is more suitable for systems with high write QPS and no staleness tolerance. A distributed lock is the critical component that guarantees atomic updates to both the cache layer and the DB layer. At extremely high QPS, however, lock contention may become the bottleneck of the system throughput. For more details on the design of distributed locks, please refer to my

.

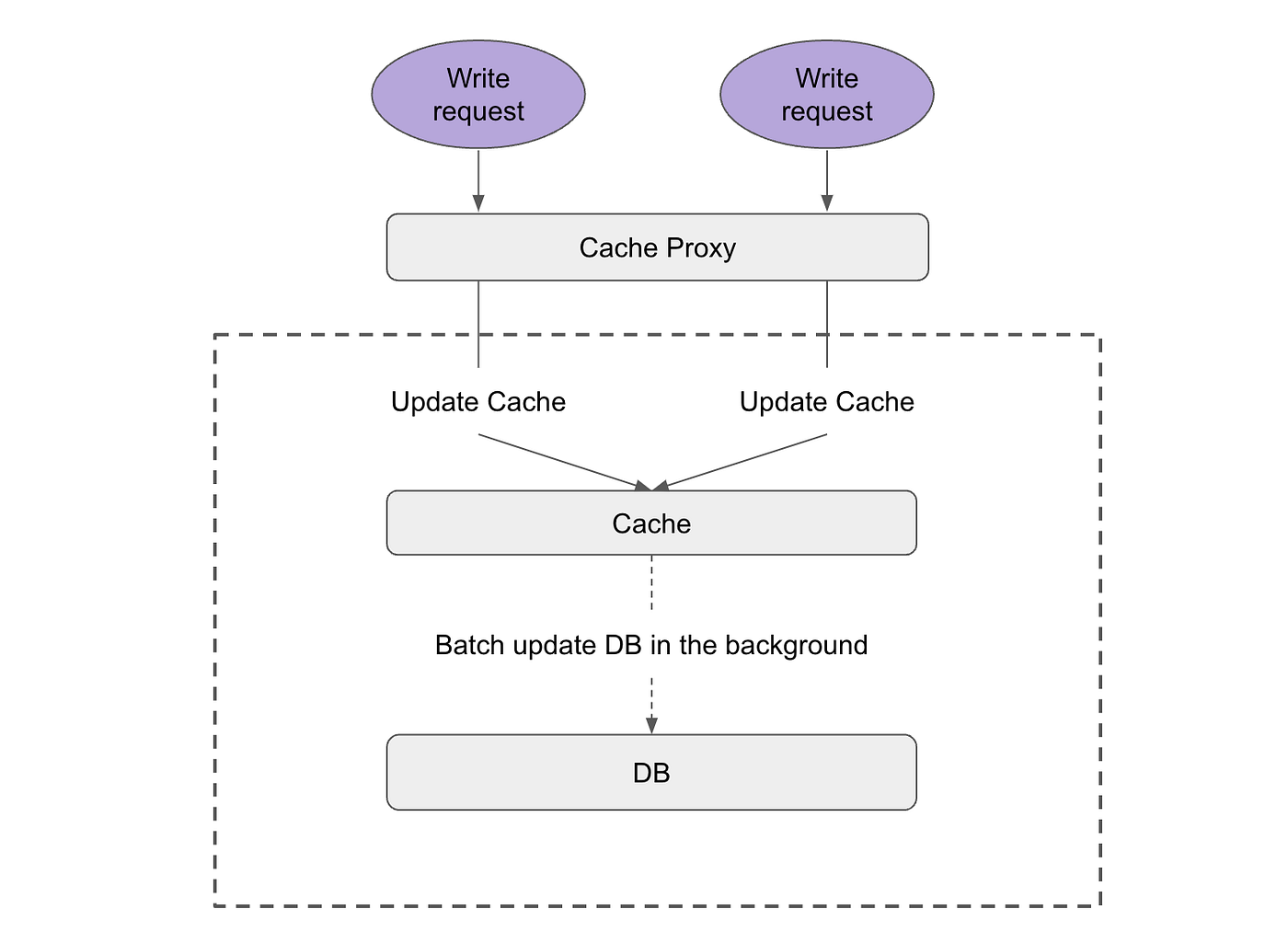

Write-behind

In a write-behind cache, a write request only updates the cache. A background process then asynchronously updates the DB with the new entries in the cache. The asynchronous DB update can be implemented as a periodic batch update, and the workload can be scheduled to run when the DB load is low, e.g. around midnight.
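A minimal sketch of this flow, assuming dicts for the cache and the DB and a hypothetical `dirty` set that tracks which keys have not been persisted yet:

```python
class WriteBehindCache:
    """Writes touch only the cache; flush() persists them to the DB in a batch."""

    def __init__(self, db):
        self.db = db        # a dict standing in for the DB
        self.cache = {}
        self.dirty = set()  # keys written to the cache but not yet to the DB

    def write(self, key, value):
        self.cache[key] = value
        self.dirty.add(key)

    def read(self, key):
        if key in self.cache:
            return self.cache[key]
        return self.db.get(key)

    def flush(self):
        """Persist all dirty entries in one batch; return how many were written."""
        batch = {key: self.cache[key] for key in self.dirty}
        self.db.update(batch)
        self.dirty.clear()
        return len(batch)
```

In practice `flush()` would be triggered by a scheduler or background worker. Repeated writes to the same key collapse into a single DB write per flush, which is where the batching benefit comes from.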

The advantage of write-behind is that the cache update is very fast, so the write latency observed by clients is very low and the system can handle very high write QPS. Further, overall DB load is reduced by batching write requests. However, the tradeoff is that the consistency between cache and DB becomes even more brittle, as more failure surfaces are introduced. For example, until updates are persisted to the DB, a client reading the DB directly will see stale data. In this design, the cache becomes the ground truth with the most up-to-date data. If the cache fails, all the data not yet persisted to the DB will be lost. High availability designs such as those described in "How Redis cluster achieves high availability and data persistence" are usually added to the cache to avoid data loss in such cases.

Write-around

Many real-world business cases can tolerate some level of staleness, and a very simple write-around cache can be used here.

- Read path: on a cache miss, read from the DB and backfill the cache entry with a TTL.

- Write path: simply updates the DB. No need to worry about cache at all.

In this design, a cache entry only expires when it exceeds the pre-set TTL. There is neither cache invalidation nor cache update in the write path. The advantage is that the implementation is very simple, but it comes at the cost of even more cache staleness, which can last as long as the TTL window.
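The two paths above fit in a few lines. In this sketch the class name and the injectable `clock` parameter are assumptions added so that expiry can be demonstrated without sleeping; dicts stand in for the DB and the cache.

```python
import time


class WriteAroundCache:
    """Reads backfill the cache with a TTL; writes bypass the cache entirely."""

    def __init__(self, db, ttl_s, clock=time.monotonic):
        self.db = db          # a dict standing in for the DB
        self.ttl_s = ttl_s
        self._clock = clock   # injectable so expiry can be tested
        self._entries = {}    # key -> (value, expires_at)

    def read(self, key):
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now < entry[1]:
            return entry[0]   # still within the TTL, possibly stale
        value = self.db.get(key)
        self._entries[key] = (value, now + self.ttl_s)
        return value

    def write(self, key, value):
        self.db[key] = value  # the cache is not touched at all
```

For example, with `ttl_s=10`, a value written right after a read stays invisible to cache readers until the entry expires 10 seconds after it was backfilled.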

Summary

Cache design is overall a very sophisticated topic. Even if we only focus on eventual consistency of the cache, there are still many architectures, read/write strategies, and fallback strategies. At a high level, the rule of thumb is:

- high read QPS, moderate to low write QPS: choose cache-aside (or read-through), and consume DB change log to fix any inconsistency in the background.

- high write QPS: use write-through plus distributed lock to ensure consistency.

- high write QPS and can tolerate some staleness: consider write-behind for its simplicity, but weigh the impact of staleness on user experience.

As is the case for any distributed system, there is no universal best solution for cache design. The best design should always take into account the real business requirements, as well as the headcount and compute/storage resources available to fulfill them.