1. Preparation

k8s cluster master01: 192.168.100.10 kube-apiserver kube-controller-manager kube-scheduler etcd

k8s cluster master02: 192.168.100.40

k8s cluster node01: 192.168.100.20 kubelet kube-proxy docker

k8s cluster node02: 192.168.100.30

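etcd cluster node 1 (etcd01, co-located with master01): 192.168.100.10 etcd

etcd cluster node 2 (etcd02, co-located with node01): 192.168.100.20

etcd cluster node 3 (etcd03, co-located with node02): 192.168.100.30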

Load balancer nginx+keepalived 01 (master): 192.168.100.50

Load balancer nginx+keepalived 02 (backup): 192.168.100.60

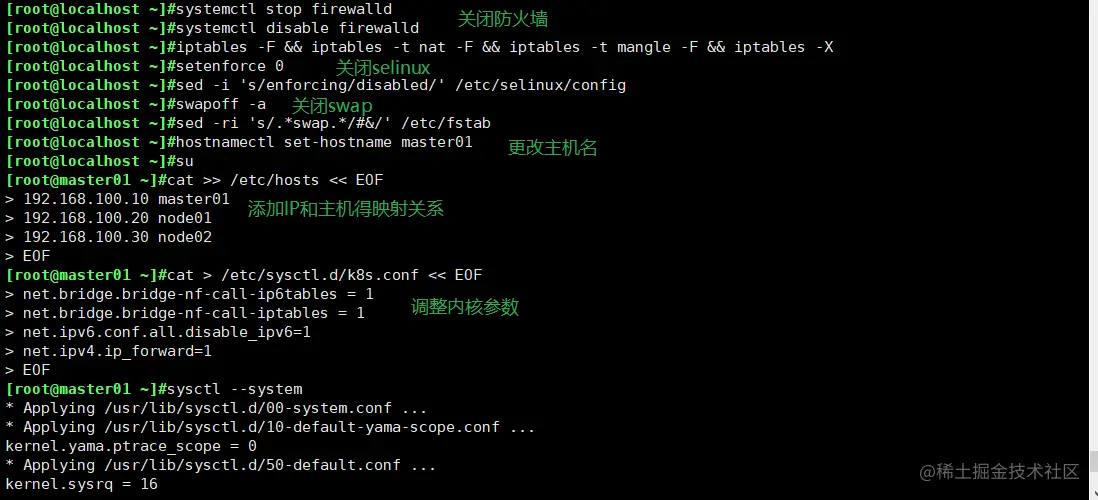

2. Operating system initialization

systemctl stop firewalld

systemctl disable firewalld

iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X

setenforce 0

sed -i 's/enforcing/disabled/' /etc/selinux/config

swapoff -a

sed -ri 's/.*swap.*/#&/' /etc/fstab
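The `sed` command above comments out every `/etc/fstab` line that mentions swap, so swap stays off after a reboot. One thing to watch: running it a second time prefixes another `#` to lines that are already commented. The sketch below is a slightly more defensive variant, assuming GNU sed (as shipped on CentOS); `comment_swap` is a hypothetical helper name, and the demo works on a temporary file rather than the real `/etc/fstab`.

```shell
# Hypothetical helper: comment out swap lines, skipping lines that already
# start with '#', so the command can be re-run safely.
comment_swap() {
    sed -ri '/^#/!s/.*swap.*/#&/' "$1"
}

# Demo on a throwaway file with one root entry and one swap entry
sample_fstab=$(mktemp)
cat > "$sample_fstab" << 'EOF'
/dev/mapper/centos-root /     xfs  defaults 0 0
/dev/mapper/centos-swap swap  swap defaults 0 0
EOF

comment_swap "$sample_fstab"
comment_swap "$sample_fstab"   # second run changes nothing
cat "$sample_fstab"
rm -f "$sample_fstab"
```

On a real host the call would be `comment_swap /etc/fstab`, ideally after taking a backup copy of the file.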

// Set the hostname; on each machine run only the command that matches it

hostnamectl set-hostname master01

hostnamectl set-hostname node01

hostnamectl set-hostname node02

cat >> /etc/hosts << EOF

192.168.100.10 master01

192.168.100.20 node01

192.168.100.30 node02

EOF
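Because `cat >> /etc/hosts` appends unconditionally, repeating the step leaves duplicate entries. A minimal sketch of an idempotent alternative follows; `add_host_entry` is a hypothetical helper, and the demo writes to a temporary file instead of `/etc/hosts`.

```shell
# Hypothetical helper: append "IP NAME" to a hosts file only if that exact
# line is not already present.
add_host_entry() {
    hosts_file=$1
    entry="$2 $3"
    # -x matches the whole line, -F treats the pattern as a fixed string
    grep -qxF "$entry" "$hosts_file" 2>/dev/null || printf '%s\n' "$entry" >> "$hosts_file"
}

# Demo against a temporary file
demo_hosts=$(mktemp)
while read -r ip name; do
    add_host_entry "$demo_hosts" "$ip" "$name"
done << 'EOF'
192.168.100.10 master01
192.168.100.20 node01
192.168.100.30 node02
EOF
add_host_entry "$demo_hosts" 192.168.100.10 master01   # already present, nothing added
cat "$demo_hosts"
```

Pointing the first argument at `/etc/hosts` would apply the same logic to the real file.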

cat > /etc/sysctl.d/k8s.conf << EOF

# Enable bridge mode so that bridged traffic is passed to the iptables chains

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

# Disable IPv6

net.ipv6.conf.all.disable_ipv6=1

net.ipv4.ip_forward=1

EOF

sysctl --system
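After `sysctl --system` it can be worth confirming that the kernel actually holds the values from `k8s.conf`. The sketch below is one way to do that; `sysctl_pairs` and `check_sysctl` are hypothetical helper names. Note that the `net.bridge.*` keys only exist once the `br_netfilter` kernel module is loaded, so a missing key usually points to that module rather than to the config file.

```shell
# Print "key=value" for each setting in a sysctl conf file,
# dropping comments, blank lines and spaces around '='.
sysctl_pairs() {
    sed -e 's/#.*//' \
        -e 's/[[:space:]]*=[[:space:]]*/=/' \
        -e 's/^[[:space:]]*//' -e 's/[[:space:]]*$//' \
        -e '/^$/d' "$1"
}

# Compare every expected value with the running kernel (run as root on the node).
check_sysctl() {
    sysctl_pairs "$1" | while IFS='=' read -r key want; do
        have=$(sysctl -n "$key" 2>/dev/null) || have="(unavailable)"
        [ "$have" = "$want" ] && status=ok || status=MISMATCH
        printf '%-45s want=%s have=%s %s\n' "$key" "$want" "$have" "$status"
    done
}

# Demo of the parser on a copy of the settings used in this section
demo_conf=$(mktemp)
cat > "$demo_conf" << 'EOF'
# bridge traffic goes through iptables
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv6.conf.all.disable_ipv6=1
net.ipv4.ip_forward=1
EOF
sysctl_pairs "$demo_conf"
rm -f "$demo_conf"
```

On a node, `check_sysctl /etc/sysctl.d/k8s.conf` prints one line per key with the expected and the live value.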

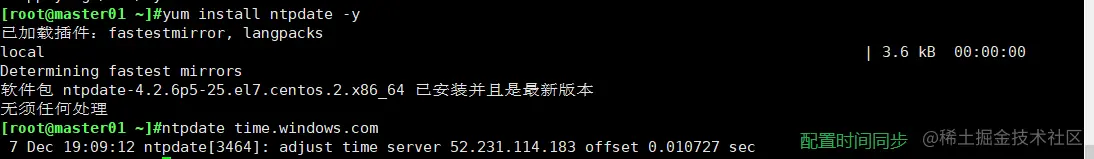

yum install ntpdate -y

ntpdate time.windows.com

3. Deploy the Docker engine

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https:

yum install -y docker-ce docker-ce-cli containerd.io

systemctl start docker.service

systemctl enable docker.service

4. Deploy the etcd cluster

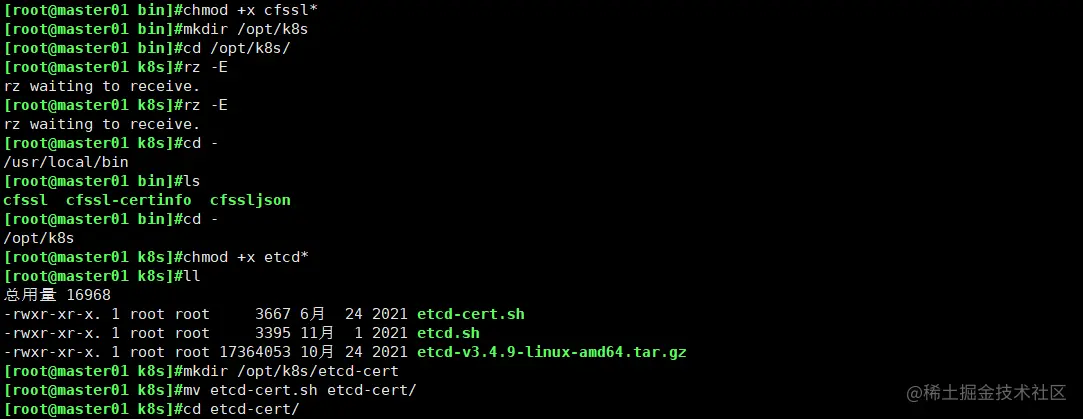

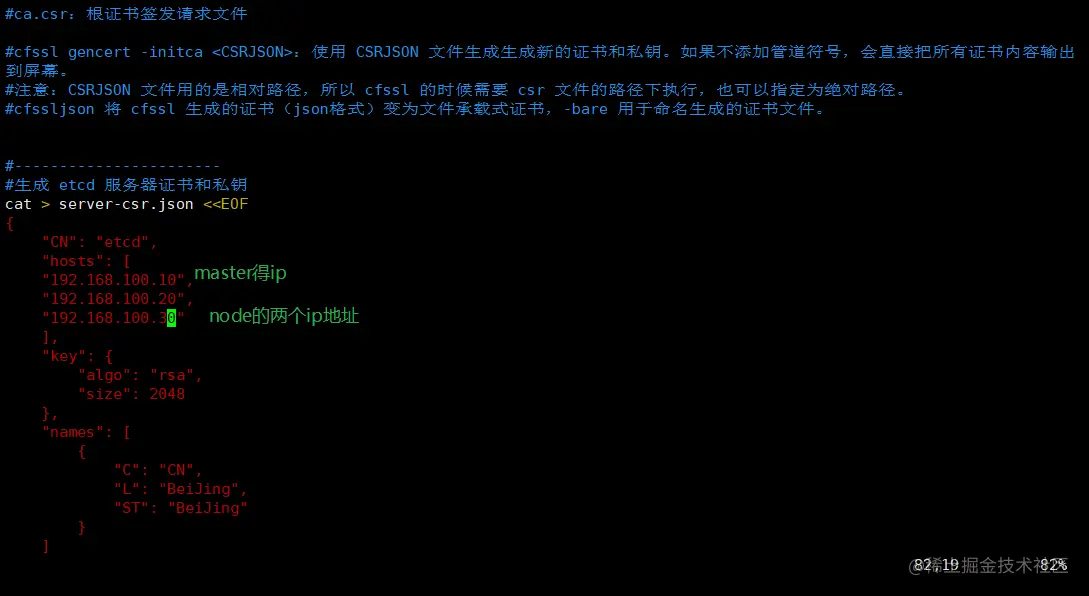

// Run on master01

wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -O /usr/local/bin/cfssl

wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -O /usr/local/bin/cfssljson

wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -O /usr/local/bin/cfssl-certinfo

chmod +x /usr/local/bin/cfssl*

mkdir /opt/k8s

cd /opt/k8s/

// etcd-cert.sh and etcd.sh must already be in /opt/k8s/ at this point

chmod +x etcd-cert.sh etcd.sh

mkdir /opt/k8s/etcd-cert

mv etcd-cert.sh etcd-cert/

cd /opt/k8s/etcd-cert/

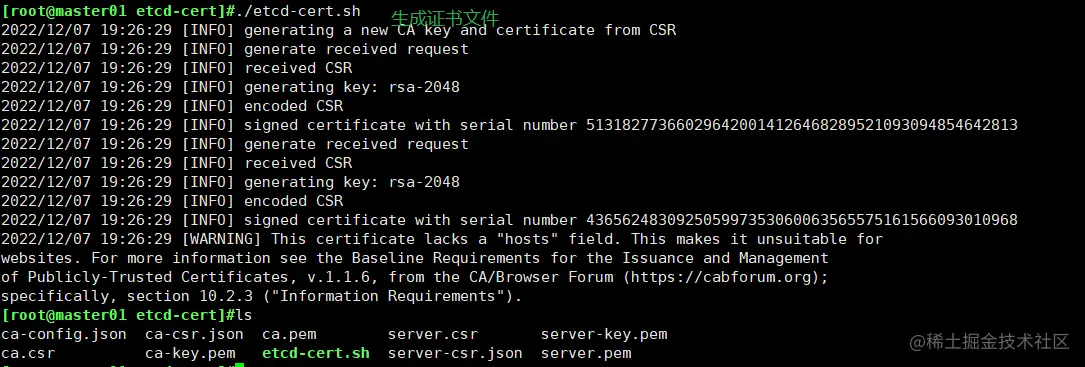

./etcd-cert.sh

ls

ca-config.json ca-csr.json ca.pem server.csr server-key.pem

ca.csr ca-key.pem etcd-cert.sh server-csr.json server.pem

cd /opt/k8s/

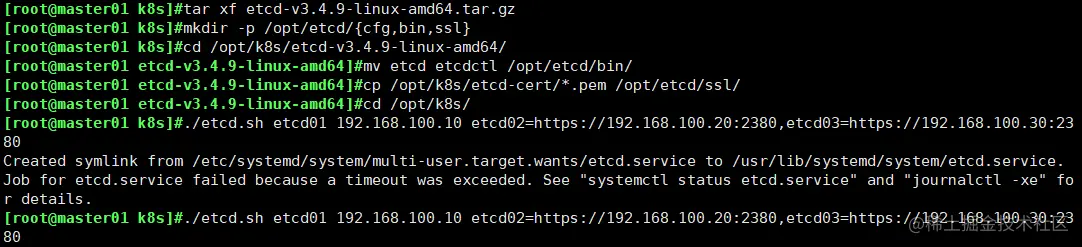

tar zxvf etcd-v3.4.9-linux-amd64.tar.gz

mkdir -p /opt/etcd/{cfg,bin,ssl}

cd /opt/k8s/etcd-v3.4.9-linux-amd64/

mv etcd etcdctl /opt/etcd/bin/

cp /opt/k8s/etcd-cert/*.pem /opt/etcd/ssl/

cd /opt/k8s/

./etcd.sh etcd01 192.168.100.10 etcd02=https://192.168.100.20:2380,etcd03=https://192.168.100.30:2380

ps -ef | grep etcd

scp -r /opt/etcd/ root@192.168.100.20:/opt/

scp -r /opt/etcd/ root@192.168.100.30:/opt/

scp /usr/lib/systemd/system/etcd.service root@192.168.100.20:/usr/lib/systemd/system/

scp /usr/lib/systemd/system/etcd.service root@192.168.100.30:/usr/lib/systemd/system/

// Run on node01

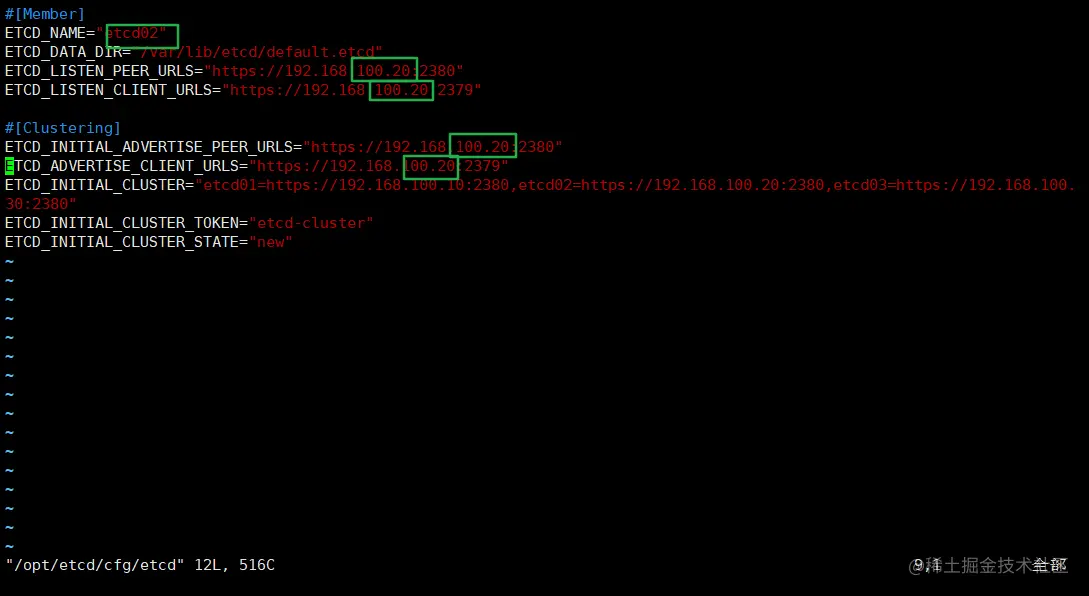

vim /opt/etcd/cfg/etcd

ETCD_NAME="etcd02"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.100.20:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.100.20:2379"

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.100.20:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.100.20:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.100.10:2380,etcd02=https://192.168.100.20:2380,etcd03=https://192.168.100.30:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

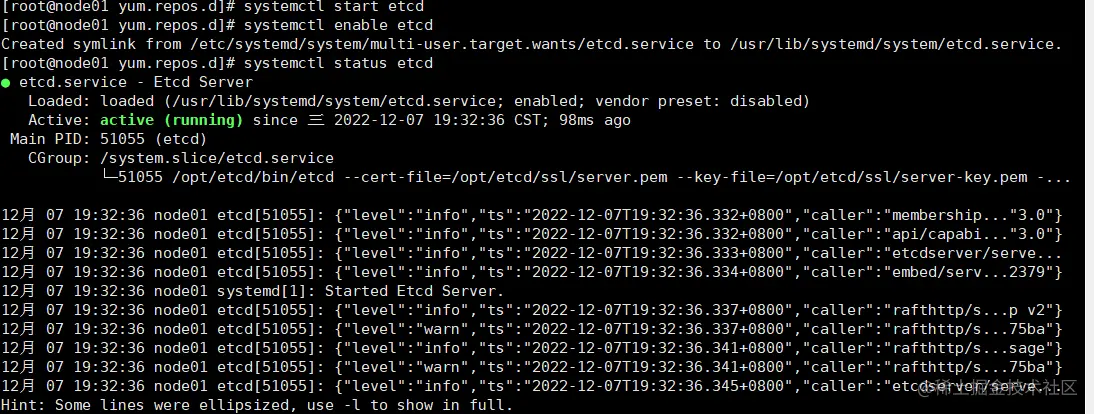

systemctl start etcd

systemctl enable etcd

systemctl status etcd

// Run on node02

vim /opt/etcd/cfg/etcd

ETCD_NAME="etcd03"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.100.30:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.100.30:2379"

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.100.30:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.100.30:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.100.10:2380,etcd02=https://192.168.100.20:2380,etcd03=https://192.168.100.30:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

systemctl start etcd

systemctl enable etcd

systemctl status etcd
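The per-node `/opt/etcd/cfg/etcd` files differ only in the member name and the node's own IP, which makes hand-editing easy to get wrong. As a rough sketch, the file could be rendered from those two values instead; `render_etcd_cfg` is a hypothetical helper, and the fixed values (data directory, cluster token, ports) are copied from the configuration shown above.

```shell
# Hypothetical helper: print the etcd config for one member.
# Usage: render_etcd_cfg NAME IP INITIAL_CLUSTER
render_etcd_cfg() {
    name=$1
    ip=$2
    cluster=$3
    cat << EOF
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="${cluster}"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

CLUSTER="etcd01=https://192.168.100.10:2380,etcd02=https://192.168.100.20:2380,etcd03=https://192.168.100.30:2380"

# Print the config that node01 (etcd02) would use
render_etcd_cfg etcd02 192.168.100.20 "$CLUSTER"
```

On node02 the call would be `render_etcd_cfg etcd03 192.168.100.30 "$CLUSTER" > /opt/etcd/cfg/etcd`, so the four listen and advertise URLs always carry that node's own address.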

ETCDCTL_API=3 /opt/etcd/bin/etcdctl --cacert=/opt/etcd/ssl/ca.pem --cert=/opt/etcd/ssl/server.pem --key=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.100.10:2379,https://192.168.100.20:2379,https://192.168.100.30:2379" endpoint health --write-out=table

ETCDCTL_API=3 /opt/etcd/bin/etcdctl --cacert=/opt/etcd/ssl/ca.pem --cert=/opt/etcd/ssl/server.pem --key=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.100.10:2379,https://192.168.100.20:2379,https://192.168.100.30:2379" --write-out=table member list

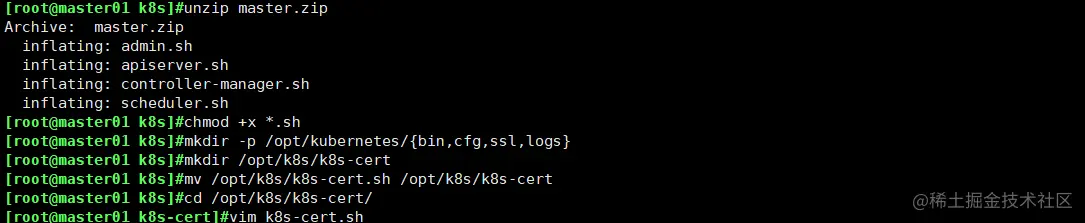

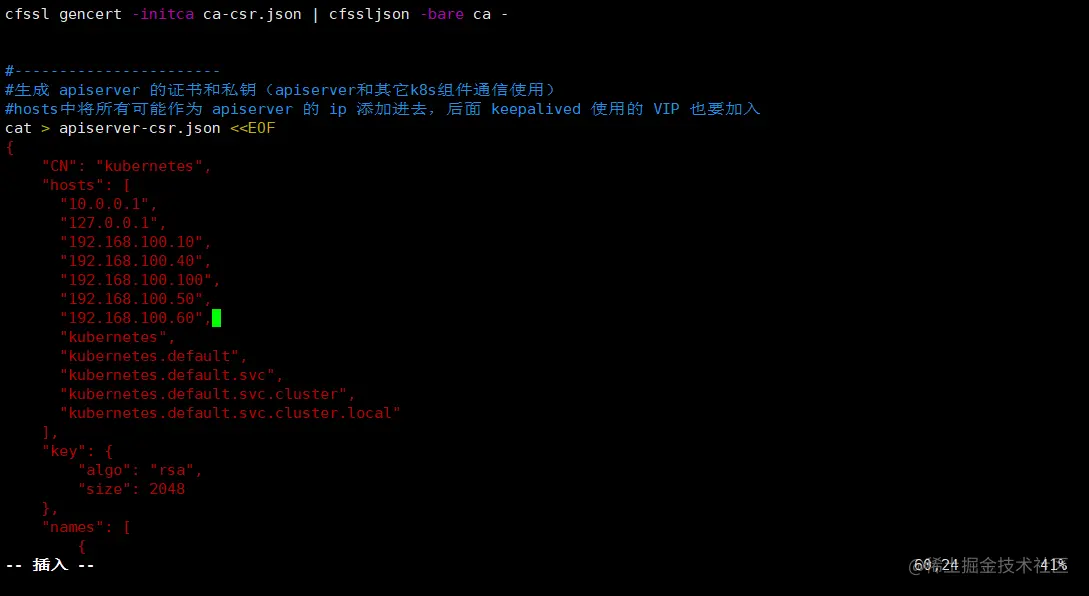
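The two `etcdctl` checks repeat the same TLS flags and endpoint list. A small wrapper, sketched below, keeps them in one place; `etcd_endpoints` and `etcdctl_tls` are hypothetical names, while the binary and certificate paths are the ones used in this section.

```shell
# Build the --endpoints value from a list of member IPs
etcd_endpoints() {
    out=""
    for member_ip in "$@"; do
        out="${out:+$out,}https://${member_ip}:2379"
    done
    printf '%s\n' "$out"
}

ENDPOINTS=$(etcd_endpoints 192.168.100.10 192.168.100.20 192.168.100.30)
echo "$ENDPOINTS"

# Hypothetical wrapper so the TLS flags are written only once.
# It is only defined here; call it on a machine where etcd is installed.
etcdctl_tls() {
    ETCDCTL_API=3 /opt/etcd/bin/etcdctl \
        --cacert=/opt/etcd/ssl/ca.pem \
        --cert=/opt/etcd/ssl/server.pem \
        --key=/opt/etcd/ssl/server-key.pem \
        --endpoints="$ENDPOINTS" "$@"
}
```

With this in place the checks become `etcdctl_tls endpoint health --write-out=table` and `etcdctl_tls member list --write-out=table`.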

5. Deploy the Master components

// Run on master01

cd /opt/k8s/

unzip master.zip

chmod +x *.sh

mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}

mkdir /opt/k8s/k8s-cert

mv /opt/k8s/k8s-cert.sh /opt/k8s/k8s-cert

cd /opt/k8s/k8s-cert/

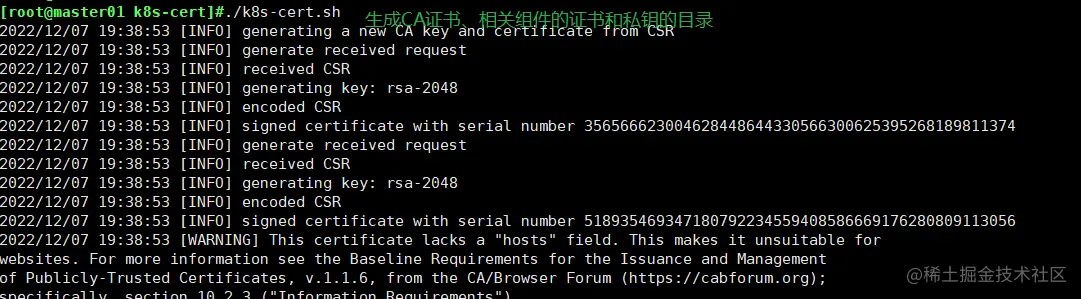

./k8s-cert.sh

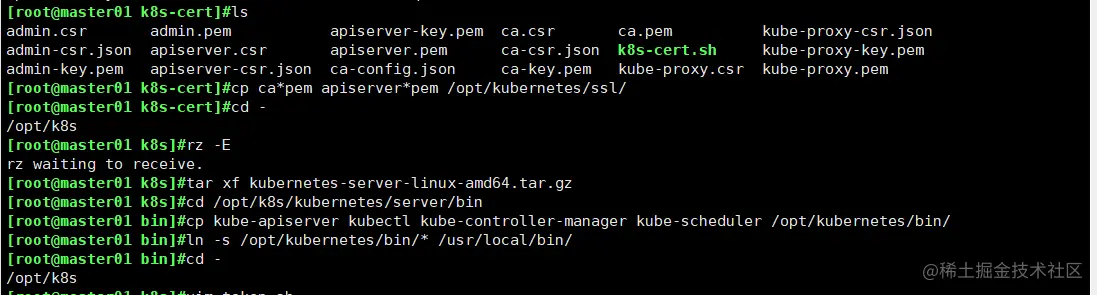

ls *pem

admin-key.pem apiserver-key.pem ca-key.pem kube-proxy-key.pem

admin.pem apiserver.pem ca.pem kube-proxy.pem

cp ca*pem apiserver*pem /opt/kubernetes/ssl/

cd /opt/k8s/

tar zxvf kubernetes-server-linux-amd64.tar.gz

cd /opt/k8s/kubernetes/server/bin

cp kube-apiserver kubectl kube-controller-manager kube-scheduler /opt/kubernetes/bin/

ln -s /opt/kubernetes/bin/* /usr/local/bin/

cd /opt/k8s/

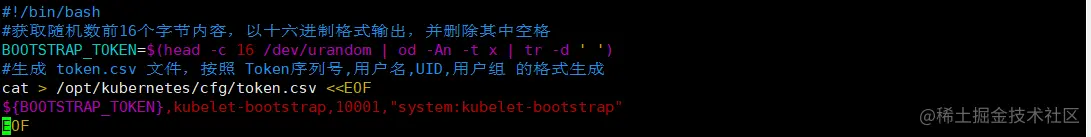

vim token.sh

#!/bin/bash

# Generate a random 32-character hex string to use as the bootstrap token

BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')

cat > /opt/kubernetes/cfg/token.csv <<EOF

${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

chmod +x token.sh

./token.sh

cat /opt/kubernetes/cfg/token.csv
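`token.sh` reads 16 random bytes, prints them as hex, and writes one CSV line of the form token, user, UID, group. A quick sanity check of that format might look like the sketch below; `new_bootstrap_token` and `valid_token_line` are hypothetical helpers, and the pattern only reflects the single-group line this walkthrough writes.

```shell
# Same pipeline as token.sh: 16 random bytes as hex with the spaces removed
new_bootstrap_token() {
    head -c 16 /dev/urandom | od -An -t x | tr -d ' '
}

# Hypothetical check: 32 hex characters, user name, numeric UID, quoted group
valid_token_line() {
    printf '%s\n' "$1" | grep -Eq '^[0-9a-f]{32},[^,]+,[0-9]+,"[^"]+"$'
}

token=$(new_bootstrap_token)
line="${token},kubelet-bootstrap,10001,\"system:kubelet-bootstrap\""

if valid_token_line "$line"; then
    echo "token.csv line looks well-formed (${#token} hex characters)"
else
    echo "unexpected token.csv format" >&2
fi
```

On master01 the same check could be applied to the real file with `valid_token_line "$(cat /opt/kubernetes/cfg/token.csv)"`.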

cd /opt/k8s/

./apiserver.sh 192.168.100.10 https://192.168.100.10:2379,https://192.168.100.20:2379,https://192.168.100.30:2379

ps aux | grep kube-apiserver

netstat -natp | grep 6443

cd /opt/k8s/

./scheduler.sh

ps aux | grep kube-scheduler

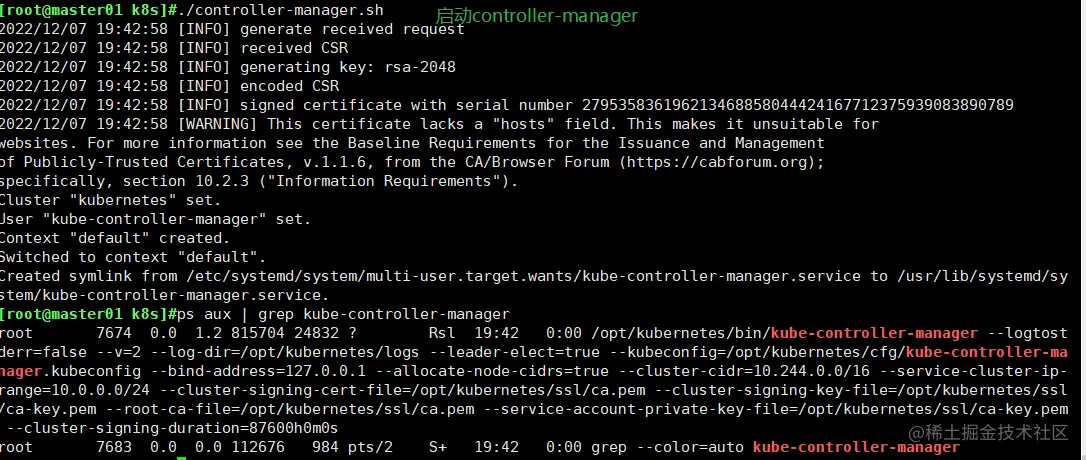

./controller-manager.sh

ps aux | grep kube-controller-manager

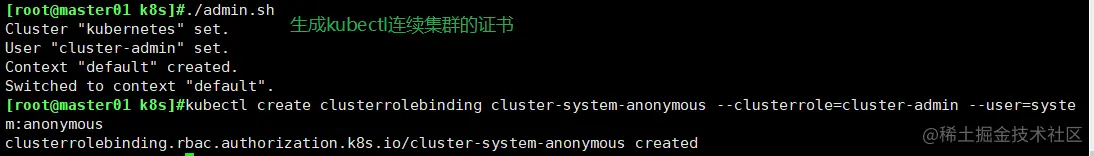

./admin.sh

kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=system:anonymous

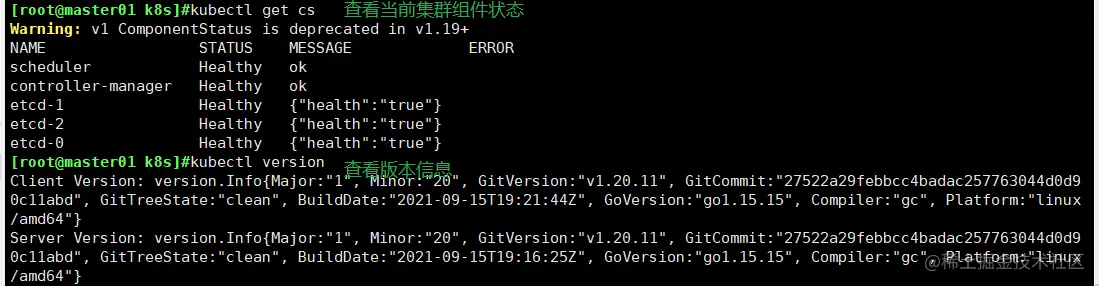

kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-2 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}

kubectl version

6. Deploy the worker nodes

// Run on all node machines

mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}

cd /opt/

unzip node.zip

chmod +x kubelet.sh proxy.sh

// Run on master01

cd /opt/k8s/kubernetes/server/bin

scp kubelet kube-proxy root@192.168.100.20:/opt/kubernetes/bin/

scp kubelet kube-proxy root@192.168.100.30:/opt/kubernetes/bin/

mkdir /opt/k8s/kubeconfig

cd /opt/k8s/kubeconfig

chmod +x kubeconfig.sh

./kubeconfig.sh 192.168.100.10 /opt/k8s/k8s-cert/

scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.100.30:/opt/kubernetes/cfg/

scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.100.20:/opt/kubernetes/cfg/

kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

// Run on node01

cd /opt/

./kubelet.sh 192.168.100.20

ps aux | grep kubelet

// Run on node02

cd /opt/

./kubelet.sh 192.168.100.30

ps aux | grep kubelet

// Run on master01: approve the CSR requests

kubectl get csr

NAME AGE SIGNERNAME REQUESTOR CONDITION

node-csr-duiobEzQ0R93HsULoS9NT9JaQylMmid_nBF3Ei3NtFE 12s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

kubectl certificate approve node-csr-duiobEzQ0R93HsULoS9NT9JaQylMmid_nBF3Ei3NtFE

kubectl get csr

NAME AGE SIGNERNAME REQUESTOR CONDITION

node-csr-duiobEzQ0R93HsULoS9NT9JaQylMmid_nBF3Ei3NtFE 2m5s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued
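Each kubelet submits its own CSR, so with two nodes there are normally two names to approve, and copying them by hand is tedious. The sketch below filters the `Pending` entries out of the `kubectl get csr` output; `pending_csrs` is a hypothetical helper that assumes the CONDITION column is the last one, as in the output above.

```shell
# Print the names of CSRs whose last column (CONDITION) is exactly "Pending".
# Reads `kubectl get csr` output on stdin.
pending_csrs() {
    awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Demo with the output shown in this walkthrough
pending_csrs << 'EOF'
NAME AGE SIGNERNAME REQUESTOR CONDITION
node-csr-duiobEzQ0R93HsULoS9NT9JaQylMmid_nBF3Ei3NtFE 12s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending
EOF

# On master01 (not run here), approving everything that is still pending:
#   kubectl get csr | pending_csrs | xargs -r kubectl certificate approve
```

Approving in bulk skips the manual look at each request, so it is best kept to a lab setup like this one where every requester is known.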

kubectl get node

NAME STATUS ROLES AGE VERSION

192.168.100.20 NotReady <none> 108s v1.20.11

192.168.100.30 NotReady <none> 108s v1.20.11

// Run on node01

for i in $(ls /usr/lib/modules/$(uname -r)/kernel/net/netfilter/ipvs|grep -o "^[^.]*");do echo $i; /sbin/modinfo -F filename $i >/dev/null 2>&1 && /sbin/modprobe $i;done

cd /opt/

./proxy.sh 192.168.100.20

ps aux | grep kube-proxy

// Run on node02

for i in $(ls /usr/lib/modules/$(uname -r)/kernel/net/netfilter/ipvs|grep -o "^[^.]*");do echo $i; /sbin/modinfo -F filename $i >/dev/null 2>&1 && /sbin/modprobe $i;done

cd /opt/

./proxy.sh 192.168.100.30

ps aux | grep kube-proxy
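The `for` loop run before `proxy.sh` loads the IPVS kernel modules. It lists the module files under the kernel's `ipvs` directory, and `grep -o "^[^.]*"` keeps only the part of each file name before the first dot, which is the module name that `modinfo` and `modprobe` expect. The snippet below illustrates just that name extraction; the file names are typical examples rather than output captured from these nodes.

```shell
# Keep everything before the first dot, as grep -o "^[^.]*" does in the loop
module_names() {
    grep -o '^[^.]*'
}

# Example file names as they might appear in .../kernel/net/netfilter/ipvs
printf '%s\n' ip_vs.ko.xz ip_vs_rr.ko.xz ip_vs_wrr.ko.xz ip_vs_sh.ko.xz | module_names

# On a node, the loaded modules can be listed afterwards with:
#   lsmod | grep ip_vs
```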

7. Deploy the network component

Deploy flannel

// Run on node01

cd /opt/

docker load -i flannel.tar

mkdir -p /opt/cni/bin

tar zxvf cni-plugins-linux-amd64-v0.8.6.tgz -C /opt/cni/bin

// Run on node02

cd /opt/

docker load -i flannel.tar

mkdir -p /opt/cni/bin

tar zxvf cni-plugins-linux-amd64-v0.8.6.tgz -C /opt/cni/bin

// Run on master01

cd /opt/k8s

kubectl apply -f kube-flannel.yml

kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

kube-flannel-ds-hjtc7 1/1 Running 0 7s

kubectl get nodes

NAME STATUS ROLES AGE VERSION

192.168.100.20 Ready <none> 81m v1.20.11

192.168.100.30 Ready <none> 81m v1.20.11
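Nodes switch from `NotReady` to `Ready` only after the flannel pod is running and the CNI plugins are in place, which can take a little while. A small check like the one below could be used to poll for that state; `all_nodes_ready` is a hypothetical helper that assumes STATUS is the second column of `kubectl get nodes`, as in the output above.

```shell
# Succeeds only if at least one node is listed and every node's STATUS
# (second column) is exactly "Ready". Reads `kubectl get nodes` on stdin.
all_nodes_ready() {
    awk 'NR > 1 { seen = 1; if ($2 != "Ready") bad = 1 }
         END { exit (seen && !bad) ? 0 : 1 }'
}

# Demo with the output shown in this walkthrough
if all_nodes_ready << 'EOF'
NAME STATUS ROLES AGE VERSION
192.168.100.20 Ready <none> 81m v1.20.11
192.168.100.30 Ready <none> 81m v1.20.11
EOF
then
    echo "all nodes Ready"
else
    echo "some nodes are not Ready yet"
fi

# On master01 (not run here), polling every 5 seconds:
#   until kubectl get nodes | all_nodes_ready; do sleep 5; done
```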