II. Deploy etcd on the master nodes

I. Preparation

1. On master01 only: download and install the cfssl certificate tools and the etcd release package

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.1/cfssl_1.6.1_linux_amd64

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.1/cfssljson_1.6.1_linux_amd64

sudo chmod 777 /usr/local/bin/

mv cfssl_1.6.1_linux_amd64 /usr/local/bin/cfssl

mv cfssljson_1.6.1_linux_amd64 /usr/local/bin/cfssljson

chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson

wget https://github.com/etcd-io/etcd/releases/download/v3.5.5/etcd-v3.5.5-linux-amd64.tar.gz

2. Install etcd

tar -xf etcd-v3.5.5-linux-amd64.tar.gz --strip-components=1 -C /usr/local/bin etcd-v3.5.5-linux-amd64/etcd{,ctl}

k8s@master01:~/tools$ etcdctl version

etcdctl version: 3.5.5

API version: 3.5

On master02 and master03, copy the etcd binaries from master01:

sudo chmod 777 /usr/local/bin/

scp k8s@master01:/usr/local/bin/etcd* /usr/local/bin/

On all three master nodes, create the directories used below:

sudo mkdir -p /opt/cni/bin && sudo chmod 777 /opt/cni && sudo chmod 777 /opt/cni/bin

sudo mkdir /etc/etcd/ssl -p && sudo chmod 777 /etc/etcd && sudo chmod 777 /etc/etcd/ssl

sudo mkdir /etc/kubernetes -p && sudo chmod 777 /etc/kubernetes && mkdir /etc/kubernetes/pki/etcd -p

Back on master01, generate the etcd CA and the etcd certificate:

mkdir pki && cd pki/

cat > etcd-ca-csr.json <<EOF
{
  "CN": "etcd",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Beijing",
      "L": "Beijing",
      "O": "etcd",
      "OU": "Etcd Security"
    }
  ],
  "ca": {
    "expiry": "876000h"
  }
}
EOF

cfssl gencert -initca etcd-ca-csr.json | cfssljson -bare /etc/etcd/ssl/etcd-ca

ll /etc/etcd/ssl/etcd-ca*

-rw-r--r-- 1 k8s k8s 1050 Oct 28 18:23 /etc/etcd/ssl/etcd-ca.csr

-rw------- 1 k8s k8s 1675 Oct 28 18:23 /etc/etcd/ssl/etcd-ca-key.pem

-rw-rw-r-- 1 k8s k8s 1318 Oct 28 18:23 /etc/etcd/ssl/etcd-ca.pem
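The CA expiry of 876000h in etcd-ca-csr.json is 876000 / 24 = 36500 days, roughly a century. You can confirm what cfssl actually wrote with openssl, which prints the certificate's validity window. cert_dates is a hypothetical helper. This is a sketch that assumes OpenSSL is installed on master01.

```shell
#!/usr/bin/env bash
# 876000 hours / 24 = 36500 days (about 100 years, ignoring leap days).
echo "$((876000 / 24)) days"   # prints: 36500 days

# Hypothetical helper: print the notBefore/notAfter dates of a PEM certificate.
cert_dates() {
  openssl x509 -in "$1" -noout -startdate -enddate
}

# Usage (on master01):
#   cert_dates /etc/etcd/ssl/etcd-ca.pem
```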

cat > ca-config.json <<EOF
{
  "signing": {
    "default": {
      "expiry": "876000h"
    },
    "profiles": {
      "kubernetes": {
        "usages": [
          "signing",
          "key encipherment",
          "server auth",
          "client auth"
        ],
        "expiry": "876000h"
      }
    }
  }
}
EOF

cat > etcd-csr.json <<EOF
{
  "CN": "etcd",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Beijing",
      "L": "Beijing",
      "O": "etcd",
      "OU": "Etcd Security"
    }
  ]
}
EOF

cfssl gencert \
  -ca=/etc/etcd/ssl/etcd-ca.pem \
  -ca-key=/etc/etcd/ssl/etcd-ca-key.pem \
  -config=ca-config.json \
  -hostname=127.0.0.1,master01,master02,master03,192.188.3.200,192.188.3.201,192.188.3.202 \
  -profile=kubernetes \
  etcd-csr.json | cfssljson -bare /etc/etcd/ssl/etcd
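Every address that clients or peers use to reach etcd has to appear in the certificate's Subject Alternative Names, or TLS verification fails. This sketch checks the generated certificate against the list passed to -hostname. check_sans is a hypothetical helper, not part of cfssl, and it assumes openssl is available.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only if every given host/IP is a SAN of the cert.
check_sans() {
  local cert=$1; shift
  local entries h missing=0
  # The line after "X509v3 Subject Alternative Name:" holds the SANs, e.g.
  #   DNS:master01, DNS:master02, IP Address:127.0.0.1, ...
  # Split it into one entry per line and trim surrounding whitespace.
  entries=$(openssl x509 -in "$cert" -noout -text \
    | grep -A1 "Subject Alternative Name" | tail -n1 \
    | tr ',' '\n' | sed 's/^[[:space:]]*//; s/[[:space:]]*$//')
  for h in "$@"; do
    # Exact whole-line match against either a DNS entry or an IP entry.
    if ! grep -qxF -e "DNS:$h" -e "IP Address:$h" <<<"$entries"; then
      echo "missing SAN: $h"
      missing=1
    fi
  done
  return "$missing"
}

# Usage (on master01), with the hosts passed to -hostname above:
#   check_sans /etc/etcd/ssl/etcd.pem 127.0.0.1 master01 master02 master03 \
#     192.188.3.200 192.188.3.201 192.188.3.202 && echo "all SANs present"
```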

On master02 and master03, copy the certificates from master01:

scp k8s@master01:/etc/etcd/ssl/*.pem /etc/etcd/ssl/

3. Configure etcd

On master01:

cat > /etc/etcd/etcd.config.yml <<EOF
name: 'master01'
data-dir: /var/lib/etcd
wal-dir: /var/lib/etcd/wal
snapshot-count: 5000
heartbeat-interval: 100
election-timeout: 1000
quota-backend-bytes: 0
listen-peer-urls: 'https://192.188.3.200:2380'
listen-client-urls: 'https://192.188.3.200:2379,http://127.0.0.1:2379'
max-snapshots: 3
max-wals: 5
cors:
initial-advertise-peer-urls: 'https://192.188.3.200:2380'
advertise-client-urls: 'https://192.188.3.200:2379'
discovery:
discovery-fallback: 'proxy'
discovery-proxy:
discovery-srv:
initial-cluster: 'master01=https://192.188.3.200:2380,master02=https://192.188.3.201:2380,master03=https://192.188.3.202:2380'
initial-cluster-token: 'etcd-k8s-cluster'
initial-cluster-state: 'new'
strict-reconfig-check: false
enable-v2: true
enable-pprof: true
proxy: 'off'
proxy-failure-wait: 5000
proxy-refresh-interval: 30000
proxy-dial-timeout: 1000
proxy-write-timeout: 5000
proxy-read-timeout: 0
client-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
peer-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
debug: false
log-package-levels:
log-outputs: [default]
force-new-cluster: false
EOF

On master02:

cat > /etc/etcd/etcd.config.yml <<EOF
name: 'master02'
data-dir: /var/lib/etcd
wal-dir: /var/lib/etcd/wal
snapshot-count: 5000
heartbeat-interval: 100
election-timeout: 1000
quota-backend-bytes: 0
listen-peer-urls: 'https://192.188.3.201:2380'
listen-client-urls: 'https://192.188.3.201:2379,http://127.0.0.1:2379'
max-snapshots: 3
max-wals: 5
cors:
initial-advertise-peer-urls: 'https://192.188.3.201:2380'
advertise-client-urls: 'https://192.188.3.201:2379'
discovery:
discovery-fallback: 'proxy'
discovery-proxy:
discovery-srv:
initial-cluster: 'master01=https://192.188.3.200:2380,master02=https://192.188.3.201:2380,master03=https://192.188.3.202:2380'
initial-cluster-token: 'etcd-k8s-cluster'
initial-cluster-state: 'new'
strict-reconfig-check: false
enable-v2: true
enable-pprof: true
proxy: 'off'
proxy-failure-wait: 5000
proxy-refresh-interval: 30000
proxy-dial-timeout: 1000
proxy-write-timeout: 5000
proxy-read-timeout: 0
client-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
peer-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
debug: false
log-package-levels:
log-outputs: [default]
force-new-cluster: false
EOF

On master03:

cat > /etc/etcd/etcd.config.yml <<EOF
name: 'master03'
data-dir: /var/lib/etcd
wal-dir: /var/lib/etcd/wal
snapshot-count: 5000
heartbeat-interval: 100
election-timeout: 1000
quota-backend-bytes: 0
listen-peer-urls: 'https://192.188.3.202:2380'
listen-client-urls: 'https://192.188.3.202:2379,http://127.0.0.1:2379'
max-snapshots: 3
max-wals: 5
cors:
initial-advertise-peer-urls: 'https://192.188.3.202:2380'
advertise-client-urls: 'https://192.188.3.202:2379'
discovery:
discovery-fallback: 'proxy'
discovery-proxy:
discovery-srv:
initial-cluster: 'master01=https://192.188.3.200:2380,master02=https://192.188.3.201:2380,master03=https://192.188.3.202:2380'
initial-cluster-token: 'etcd-k8s-cluster'
initial-cluster-state: 'new'
strict-reconfig-check: false
enable-v2: true
enable-pprof: true
proxy: 'off'
proxy-failure-wait: 5000
proxy-refresh-interval: 30000
proxy-dial-timeout: 1000
proxy-write-timeout: 5000
proxy-read-timeout: 0
client-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
peer-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
debug: false
log-package-levels:
log-outputs: [default]
force-new-cluster: false
EOF
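The three configuration files differ only in the member name and its IP address. Here is a sketch that produces any of them from one template, assuming the names and IPs used in this guide. render_etcd_config is a hypothetical function.

It keeps the settings that shape the cluster and drops the cors, discovery, proxy, debug and logging entries. Those are either left empty in the files above or only matter in proxy mode. It also writes client-cert-auth in both TLS sections, which is the key name used in etcd's sample configuration file.

```shell
#!/usr/bin/env bash
# Hypothetical helper: render etcd.config.yml for one member of this guide's cluster.
ETCD_INITIAL_CLUSTER='master01=https://192.188.3.200:2380,master02=https://192.188.3.201:2380,master03=https://192.188.3.202:2380'
ETCD_PKI=/etc/kubernetes/pki/etcd

# Usage: render_etcd_config <member-name> <member-ip> <output-file>
render_etcd_config() {
  local name=$1 ip=$2 out=$3
  # Unquoted EOF on purpose: ${name}, ${ip} and the globals above are filled in.
  cat > "$out" <<EOF
name: '${name}'
data-dir: /var/lib/etcd
wal-dir: /var/lib/etcd/wal
snapshot-count: 5000
heartbeat-interval: 100
election-timeout: 1000
quota-backend-bytes: 0
listen-peer-urls: 'https://${ip}:2380'
listen-client-urls: 'https://${ip}:2379,http://127.0.0.1:2379'
max-snapshots: 3
max-wals: 5
initial-advertise-peer-urls: 'https://${ip}:2380'
advertise-client-urls: 'https://${ip}:2379'
initial-cluster: '${ETCD_INITIAL_CLUSTER}'
initial-cluster-token: 'etcd-k8s-cluster'
initial-cluster-state: 'new'
strict-reconfig-check: false
enable-v2: true
enable-pprof: true
client-transport-security:
  cert-file: '${ETCD_PKI}/etcd.pem'
  key-file: '${ETCD_PKI}/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '${ETCD_PKI}/etcd-ca.pem'
  auto-tls: true
peer-transport-security:
  cert-file: '${ETCD_PKI}/etcd.pem'
  key-file: '${ETCD_PKI}/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '${ETCD_PKI}/etcd-ca.pem'
  auto-tls: true
force-new-cluster: false
EOF
}
```

On master02, for example, this would be: render_etcd_config master02 192.188.3.201 /etc/etcd/etcd.config.yml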

sudo bash -c "cat > /lib/systemd/system/etcd.service" <<EOF
[Unit]
Description=Etcd Service
Documentation=https://coreos.com/etcd/docs/latest/
After=network.target

[Service]
Type=notify
ExecStart=/usr/local/bin/etcd --config-file=/etc/etcd/etcd.config.yml
Restart=on-failure
RestartSec=10
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
Alias=etcd3.service
EOF

sudo chmod 644 /lib/systemd/system/etcd.service

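On all three masters, link the certificates into /etc/kubernetes/pki/etcd/ (the paths referenced in the config) and start etcd. Start the three members within a short time of each other. With Type=notify, a new member only reports ready to systemd once the cluster has a quorum, so the start command on the first node may block until a second member is running.

ln -s /etc/etcd/ssl/* /etc/kubernetes/pki/etcd/

sudo systemctl daemon-reload && sudo systemctl enable --now etcd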

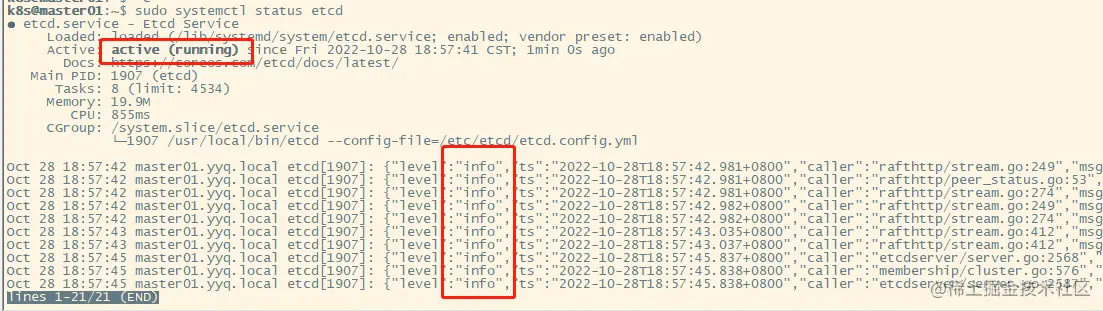

sudo systemctl status etcd

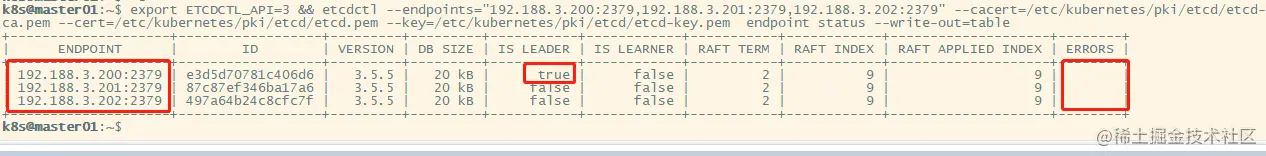

4. Check the etcd cluster status (run on master01)

export ETCDCTL_API=3 && etcdctl --endpoints="192.188.3.200:2379,192.188.3.201:2379,192.188.3.202:2379" --cacert=/etc/kubernetes/pki/etcd/etcd-ca.pem --cert=/etc/kubernetes/pki/etcd/etcd.pem --key=/etc/kubernetes/pki/etcd/etcd-key.pem endpoint status --write-out=table
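The status command above repeats the TLS flags on every call. A small wrapper keeps them in one place. This is a sketch assuming the certificate paths and IPs of this guide; etcd_ctl and the ETCDCTL override variable are hypothetical names. The endpoints are given with an explicit https:// scheme.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper: etcdctl with this guide's endpoints and client certificates.
ETCD_ENDPOINTS="https://192.188.3.200:2379,https://192.188.3.201:2379,https://192.188.3.202:2379"
ETCD_PKI=/etc/kubernetes/pki/etcd

etcd_ctl() {
  # ETCDCTL lets you point at another binary (or a stub) instead of etcdctl.
  "${ETCDCTL:-etcdctl}" \
    --endpoints="$ETCD_ENDPOINTS" \
    --cacert="$ETCD_PKI/etcd-ca.pem" \
    --cert="$ETCD_PKI/etcd.pem" \
    --key="$ETCD_PKI/etcd-key.pem" \
    "$@"
}

# Usage (on master01):
#   etcd_ctl endpoint health            # reports each endpoint as healthy or not
#   etcd_ctl member list -w table       # the three members and their peer URLs
#   etcd_ctl endpoint status -w table   # same table as the command above
```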

III. Deploy haproxy and keepalived on the master nodes for high availability

1. Install haproxy and keepalived (on all three masters)

sudo apt install keepalived haproxy -y

sudo chmod 777 /etc/haproxy/haproxy.cfg

sudo cp /etc/haproxy/haproxy.cfg /etc/haproxy/haproxy.cfg.bak

sudo chmod 777 /etc/keepalived

cp /etc/keepalived/keepalived.conf /etc/keepalived/keepalived.conf.bak

2. Configure haproxy (the same configuration on all three masters)

cat >/etc/haproxy/haproxy.cfg <<"EOF"
global
    maxconn 2000
    ulimit-n 16384
    log 127.0.0.1 local0 err
    stats timeout 30s

defaults
    log global
    mode http
    option httplog
    timeout connect 5000
    timeout client 50000
    timeout server 50000
    timeout http-request 15s
    timeout http-keep-alive 15s

frontend monitor-in
    bind *:33305
    mode http
    option httplog
    monitor-uri /monitor

frontend k8s-master
    bind 0.0.0.0:8443
    bind 127.0.0.1:8443
    mode tcp
    option tcplog
    tcp-request inspect-delay 5s
    default_backend k8s-master

backend k8s-master
    mode tcp
    option tcplog
    option tcp-check
    balance roundrobin
    default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100
    server master01 192.188.3.200:6443 check
    server master02 192.188.3.201:6443 check
    server master03 192.188.3.202:6443 check
EOF

3. Configure keepalived

3.1 Configure the keepalived MASTER node (master01)

cat > /etc/keepalived/keepalived.conf << EOF
! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL
}
vrrp_script chk_apiserver {
    script "/etc/keepalived/check_apiserver.sh"
    interval 5
    weight -5
    fall 2
    rise 1
}
vrrp_instance VI_1 {
    state MASTER                 # master01 is the primary node
    interface ens33              # replace with your own NIC name
    mcast_src_ip 192.188.3.200   # master01's IP
    virtual_router_id 51         # if the subnet has several keepalived clusters, each cluster needs its own virtual_router_id
    priority 100
    nopreempt
    advert_int 2
    authentication {
        auth_type PASS
        auth_pass K8SHA_KA_AUTH
    }
    virtual_ipaddress {
        192.188.3.240            # the virtual IP (VIP)
    }
    track_script {
        chk_apiserver
    }
}
EOF

3.2 Configure the BACKUP nodes (master02 and master03)

On master02:

cat > /etc/keepalived/keepalived.conf << EOF
! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL
}
vrrp_script chk_apiserver {
    script "/etc/keepalived/check_apiserver.sh"
    interval 5
    weight -5
    fall 2
    rise 1
}
vrrp_instance VI_1 {
    state BACKUP
    interface ens33
    mcast_src_ip 192.188.3.201
    virtual_router_id 51
    priority 50
    nopreempt
    advert_int 2
    authentication {
        auth_type PASS
        auth_pass K8SHA_KA_AUTH
    }
    virtual_ipaddress {
        192.188.3.240
    }
    track_script {
        chk_apiserver
    }
}
EOF

On master03:

cat > /etc/keepalived/keepalived.conf << EOF
! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL
}
vrrp_script chk_apiserver {
    script "/etc/keepalived/check_apiserver.sh"
    interval 5
    weight -5
    fall 2
    rise 1
}
vrrp_instance VI_1 {
    state BACKUP
    interface ens33
    mcast_src_ip 192.188.3.202
    virtual_router_id 51
    priority 50
    nopreempt
    advert_int 2
    authentication {
        auth_type PASS
        auth_pass K8SHA_KA_AUTH
    }
    virtual_ipaddress {
        192.188.3.240
    }
    track_script {
        chk_apiserver
    }
}
EOF
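The three keepalived.conf files differ only in state, mcast_src_ip and priority. Here is a sketch that renders them from one template, assuming the VIP, NIC name and router id used in this guide. render_keepalived_config is a hypothetical function.

```shell
#!/usr/bin/env bash
# Hypothetical helper: render keepalived.conf for one master of this guide.
VIP=192.188.3.240
IFACE=ens33   # replace with your own NIC name

# Usage: render_keepalived_config <MASTER|BACKUP> <node-ip> <priority> <output-file>
render_keepalived_config() {
  local state=$1 src_ip=$2 priority=$3 out=$4
  cat > "$out" <<EOF
! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL
}
vrrp_script chk_apiserver {
    script "/etc/keepalived/check_apiserver.sh"
    interval 5
    weight -5
    fall 2
    rise 1
}
vrrp_instance VI_1 {
    state ${state}
    interface ${IFACE}
    mcast_src_ip ${src_ip}
    virtual_router_id 51
    priority ${priority}
    nopreempt
    advert_int 2
    authentication {
        auth_type PASS
        auth_pass K8SHA_KA_AUTH
    }
    virtual_ipaddress {
        ${VIP}
    }
    track_script {
        chk_apiserver
    }
}
EOF
}

# Usage:
#   master01: render_keepalived_config MASTER 192.188.3.200 100 /etc/keepalived/keepalived.conf
#   master02: render_keepalived_config BACKUP 192.188.3.201 50 /etc/keepalived/keepalived.conf
#   master03: render_keepalived_config BACKUP 192.188.3.202 50 /etc/keepalived/keepalived.conf
```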

3.3 Configure the health-check script on master01, master02 and master03

cat > /etc/keepalived/check_apiserver.sh << "EOF"
#!/bin/bash
# If haproxy is not running after 3 checks (1 s apart), stop keepalived
# so that the VIP fails over to another master.
err=0
for k in $(seq 1 3); do
    check_code=$(pgrep haproxy)
    if [[ $check_code == "" ]]; then
        err=$((err + 1))
        sleep 1
        continue
    else
        err=0
        break
    fi
done

if [[ $err != "0" ]]; then
    echo "systemctl stop keepalived"
    /usr/bin/systemctl stop keepalived
    exit 1
else
    exit 0
fi
EOF

chmod +x /etc/keepalived/check_apiserver.sh
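The check script is written through a heredoc. Whether the delimiter is quoted decides when $err, $check_code and $(pgrep haproxy) are expanded: while the file is written, or each time the script runs. Here is a short demonstration in a temporary directory:

```shell
#!/usr/bin/env bash
# Demonstration: an unquoted heredoc delimiter expands $var and $(...) while the
# file is being written; a quoted delimiter ("EOF") writes the text verbatim.
demo_dir=$(mktemp -d)
err=0

cat > "$demo_dir/unquoted.sh" << EOF
echo "err=$err"
EOF

cat > "$demo_dir/quoted.sh" << "EOF"
echo "err=$err"
EOF

cat "$demo_dir/unquoted.sh"   # prints: echo "err=0"
cat "$demo_dir/quoted.sh"     # prints: echo "err=$err"
```

With the unquoted form, the saved check_apiserver.sh would hold whatever pgrep printed at write time instead of calling pgrep on each run. Viewing the installed file (for example with head) should show $(pgrep haproxy) literally.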

3.4 Start haproxy and keepalived, then check that the VIP is reachable

sudo systemctl daemon-reload && sudo systemctl enable --now haproxy && sudo systemctl enable --now keepalived

ping 192.188.3.240

PING 192.188.3.240 (192.188.3.240) 56(84) bytes of data.

64 bytes from 192.188.3.240: icmp_seq=1 ttl=64 time=0.017 ms

64 bytes from 192.188.3.240: icmp_seq=2 ttl=64 time=0.021 ms

64 bytes from 192.188.3.240: icmp_seq=3 ttl=64 time=0.027 ms

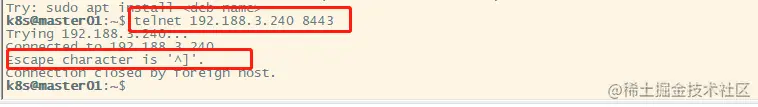

telnet 192.188.3.240 8443
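If telnet is not installed, bash can test the same TCP port through its /dev/tcp redirection. This is a sketch assuming bash (not sh) and coreutils' timeout; port_open is a hypothetical helper.

If kube-apiserver is not running yet, a successful connection only shows that haproxy is listening behind the VIP. It does not show that a backend is up.

```shell
#!/usr/bin/env bash
# Hypothetical helper: exit 0 if a TCP connection to host:port succeeds within 3 s.
port_open() {
  local host=$1 port=$2
  # Opening /dev/tcp/<host>/<port> for writing makes bash attempt a TCP connect.
  timeout 3 bash -c ">/dev/tcp/${host}/${port}" 2>/dev/null
}

# Usage:
#   port_open 192.188.3.240 8443 && echo "8443 reachable" || echo "8443 not reachable"
```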