This analysis is based on the Linux 5.0 kernel source.

include/linux/mmzone.h

include/linux/pagevec.h

include/linux/mm_inline.h

include/linux/pagemap.h

include/linux/vmstat.h

mm/swap.c

mm/vmscan.c

mm/util.c

mm/rmap.c

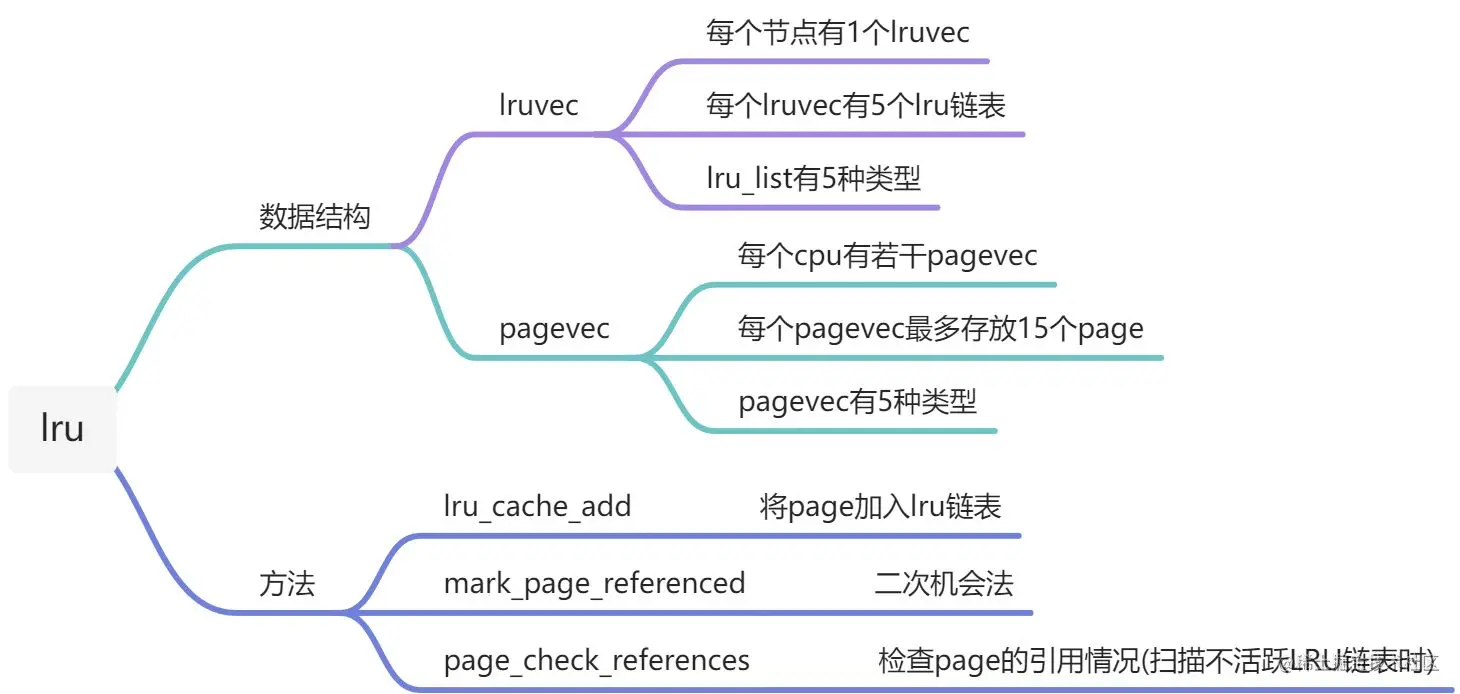

1. lru_list

```c
#define LRU_BASE 0
#define LRU_ACTIVE 1
#define LRU_FILE 2

enum lru_list {
	LRU_INACTIVE_ANON = LRU_BASE,
	LRU_ACTIVE_ANON = LRU_BASE + LRU_ACTIVE,
	LRU_INACTIVE_FILE = LRU_BASE + LRU_FILE,
	LRU_ACTIVE_FILE = LRU_BASE + LRU_FILE + LRU_ACTIVE,
	LRU_UNEVICTABLE,
	NR_LRU_LISTS
};
```
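Because the constants are additive, a list index can be computed from two page properties instead of being looked up. The following user-space sketch mirrors the enum; `lru_index()`, `lru_is_file()` and `lru_is_active()` are hypothetical helper names used only to illustrate the arithmetic (in the kernel, `page_lru()` in `include/linux/mm_inline.h` does the equivalent computation from page flags).

```c
#include <assert.h>
#include <stdbool.h>

/* Mirrors the LRU_* constants and enum lru_list from include/linux/mmzone.h. */
#define LRU_BASE 0
#define LRU_ACTIVE 1
#define LRU_FILE 2

enum lru_list {
	LRU_INACTIVE_ANON = LRU_BASE,
	LRU_ACTIVE_ANON = LRU_BASE + LRU_ACTIVE,
	LRU_INACTIVE_FILE = LRU_BASE + LRU_FILE,
	LRU_ACTIVE_FILE = LRU_BASE + LRU_FILE + LRU_ACTIVE,
	LRU_UNEVICTABLE,
	NR_LRU_LISTS
};

/* Hypothetical helper: combine the two properties into a list index. */
static enum lru_list lru_index(bool file, bool active)
{
	int lru = LRU_BASE;

	if (file)
		lru += LRU_FILE;	/* the file lists start two slots later */
	if (active)
		lru += LRU_ACTIVE;	/* each active list follows its inactive twin */
	assert(lru < LRU_UNEVICTABLE);
	return (enum lru_list)lru;
}

/* Hypothetical helpers: recover the two properties from an evictable index. */
static bool lru_is_file(enum lru_list lru)
{
	return lru == LRU_INACTIVE_FILE || lru == LRU_ACTIVE_FILE;
}

static bool lru_is_active(enum lru_list lru)
{
	return lru == LRU_ACTIVE_ANON || lru == LRU_ACTIVE_FILE;
}
```

`LRU_UNEVICTABLE` sits outside this scheme: it simply follows the four evictable lists, and `NR_LRU_LISTS` (5) sizes the `lists[]` array in `struct lruvec`.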

2. lruvec

```c
struct lruvec {
	struct list_head lists[NR_LRU_LISTS];	/* one list per enum lru_list */
	struct zone_reclaim_stat reclaim_stat;
	atomic_long_t inactive_age;
	unsigned long refaults;
#ifdef CONFIG_MEMCG
	struct pglist_data *pgdat;	/* owning node when embedded in a memcg */
#endif
};
```
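`lists[]` keeps one doubly linked list head per `enum lru_list` value, and each page is linked in through the `lru` field embedded in `struct page`, so no separate node allocation is needed (an intrusive list). The user-space sketch below reproduces that pattern under simplifying assumptions: `struct fake_page`, `struct fake_lruvec`, `fake_lruvec_init()` and `first_page_id()` are hypothetical, while `list_add()` follows the semantics of `include/linux/list.h` by inserting right after the head.

```c
#include <assert.h>
#include <stddef.h>

#define NR_LRU_LISTS 5	/* value of NR_LRU_LISTS from enum lru_list */

struct list_head {
	struct list_head *next, *prev;
};

#define container_of(ptr, type, member) \
	((type *)((char *)(ptr) - offsetof(type, member)))

static void INIT_LIST_HEAD(struct list_head *list)
{
	list->next = list;
	list->prev = list;
}

/* Insert @entry right after @head, i.e. at the front of the list. */
static void list_add(struct list_head *entry, struct list_head *head)
{
	struct list_head *next = head->next;

	next->prev = entry;
	entry->next = next;
	entry->prev = head;
	head->next = entry;
}

/* Unlink @entry; the kernel stores poison pointers, NULL is used here. */
static void list_del(struct list_head *entry)
{
	assert(entry->next && entry->prev);	/* catches a double delete */
	entry->prev->next = entry->next;
	entry->next->prev = entry->prev;
	entry->next = NULL;
	entry->prev = NULL;
}

/* Hypothetical stand-ins for struct page and struct lruvec. */
struct fake_page {
	int id;
	struct list_head lru;	/* plays the role of page->lru */
};

struct fake_lruvec {
	struct list_head lists[NR_LRU_LISTS];
};

static void fake_lruvec_init(struct fake_lruvec *lv)
{
	for (int i = 0; i < NR_LRU_LISTS; i++)
		INIT_LIST_HEAD(&lv->lists[i]);
}

/* Id of the page at the front (most recently added), or -1 if empty. */
static int first_page_id(struct list_head *head)
{
	if (head->next == head)
		return -1;
	return container_of(head->next, struct fake_page, lru)->id;
}
```

Inserting at the head means the most recently added page sits next to `lists[lru]`, while reclaim isolates victims from the tail, where the oldest pages are.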

3. pagevec

```c
#define PAGEVEC_SIZE 15

struct pagevec {
	unsigned long nr;
	bool percpu_pvec_drained;
	struct page *pages[PAGEVEC_SIZE];
};
```

4. lru_cache_add

```c
void lru_cache_add(struct page *page)
{
	VM_BUG_ON_PAGE(PageActive(page) && PageUnevictable(page), page);
	VM_BUG_ON_PAGE(PageLRU(page), page);
	__lru_cache_add(page);
}

static DEFINE_PER_CPU(struct pagevec, lru_add_pvec);
static DEFINE_PER_CPU(struct pagevec, lru_rotate_pvecs);
static DEFINE_PER_CPU(struct pagevec, lru_deactivate_file_pvecs);
static DEFINE_PER_CPU(struct pagevec, lru_lazyfree_pvecs);
#ifdef CONFIG_SMP
static DEFINE_PER_CPU(struct pagevec, activate_page_pvecs);
#endif

static void __lru_cache_add(struct page *page)
{
	struct pagevec *pvec = &get_cpu_var(lru_add_pvec);

	get_page(page);	/* the pagevec holds a reference until it is drained */
	/* flush once the pagevec is full, or right away for compound pages */
	if (!pagevec_add(pvec, page) || PageCompound(page))
		__pagevec_lru_add(pvec);
	put_cpu_var(lru_add_pvec);
}
```

4.1 pagevec_add

```c
static inline unsigned pagevec_add(struct pagevec *pvec, struct page *page)
{
	pvec->pages[pvec->nr++] = page;
	return pagevec_space(pvec);
}
```

4.2 pagevec_space

```c
static inline unsigned pagevec_space(struct pagevec *pvec)
{
	return PAGEVEC_SIZE - pvec->nr;
}
```
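Because `pagevec_add()` returns the space left *after* storing the page, a return value of 0 means the vector has just become full; `__lru_cache_add()` uses exactly that to decide when to call `__pagevec_lru_add()`. The user-space sketch below copies the two helpers; `count_drains()` is a hypothetical driver that merely resets the vector where the kernel would flush it to the LRU, and it ignores the `PageCompound()` trigger.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

#define PAGEVEC_SIZE 15

struct page;	/* opaque: only pointers are stored */

/* Same layout as struct pagevec in include/linux/pagevec.h. */
struct pagevec {
	unsigned long nr;
	bool percpu_pvec_drained;
	struct page *pages[PAGEVEC_SIZE];
};

static unsigned pagevec_space(struct pagevec *pvec)
{
	return PAGEVEC_SIZE - pvec->nr;
}

/* Store the page, then report how many free slots remain (0 = now full). */
static unsigned pagevec_add(struct pagevec *pvec, struct page *page)
{
	assert(pvec->nr < PAGEVEC_SIZE);	/* callers drain before overflow */
	pvec->pages[pvec->nr++] = page;
	return pagevec_space(pvec);
}

/* Hypothetical driver: add @n pages and count how often a drain is due. */
static int count_drains(struct pagevec *pvec, int n)
{
	int drains = 0;

	for (int i = 0; i < n; i++) {
		if (!pagevec_add(pvec, NULL)) {
			drains++;
			pvec->nr = 0;	/* stands in for __pagevec_lru_add() */
		}
	}
	return drains;
}
```

Fourteen additions leave one free slot; the fifteenth fills the vector and triggers the drain, so 31 additions into an empty vector cause two drains and leave one page queued.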

4.3 __pagevec_lru_add

```c
void __pagevec_lru_add(struct pagevec *pvec)
{
	pagevec_lru_move_fn(pvec, __pagevec_lru_add_fn, NULL);
}
```

4.4 pagevec_lru_move_fn

```c
static void pagevec_lru_move_fn(struct pagevec *pvec,
	void (*move_fn)(struct page *page, struct lruvec *lruvec, void *arg),
	void *arg)
{
	int i;
	struct pglist_data *pgdat = NULL;
	struct lruvec *lruvec;
	unsigned long flags = 0;

	for (i = 0; i < pagevec_count(pvec); i++) {
		struct page *page = pvec->pages[i];
		struct pglist_data *pagepgdat = page_pgdat(page);

		/* switch lru_lock only when the page belongs to another node */
		if (pagepgdat != pgdat) {
			if (pgdat)
				spin_unlock_irqrestore(&pgdat->lru_lock, flags);
			pgdat = pagepgdat;
			spin_lock_irqsave(&pgdat->lru_lock, flags);
		}

		lruvec = mem_cgroup_page_lruvec(page, pgdat);
		(*move_fn)(page, lruvec, arg);
	}
	if (pgdat)
		spin_unlock_irqrestore(&pgdat->lru_lock, flags);
	/* drop the references taken when the pages were queued */
	release_pages(pvec->pages, pvec->nr);
	pagevec_reinit(pvec);
}
```
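The lock is taken per node (`pgdat->lru_lock`) and is only dropped and re-acquired when the next page belongs to a different node, so a batch of pages from one node costs a single lock round-trip instead of one per page. A minimal model that only counts acquisitions; the function name and the node-id array standing in for the pages are hypothetical.

```c
#include <assert.h>

#define PAGEVEC_SIZE 15

/*
 * Hypothetical model of the locking pattern in pagevec_lru_move_fn():
 * each page is described only by its node id, and instead of taking
 * pgdat->lru_lock we count how many times the lock would be acquired.
 */
static int count_lock_acquisitions(const int *node_of_page, int nr)
{
	int locked_node = -1;	/* -1 plays the role of pgdat == NULL */
	int acquisitions = 0;

	assert(nr >= 0 && nr <= PAGEVEC_SIZE);
	for (int i = 0; i < nr; i++) {
		if (node_of_page[i] != locked_node) {
			/* unlock the previous node (if any), lock this one */
			locked_node = node_of_page[i];
			acquisitions++;
		}
		/* (*move_fn)(page, lruvec, arg) runs here with the lock held */
	}
	/* the last lock, if any, is released after the loop */
	return acquisitions;
}
```

With pages from one node the whole batch needs one acquisition; alternating nodes (0, 1, 0, 1, ...) would need one per page.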

4.5 __pagevec_lru_add_fn

```c
static inline int page_is_file_cache(struct page *page)
{
	return !PageSwapBacked(page);
}

static inline enum lru_list page_lru_base_type(struct page *page)
{
	if (page_is_file_cache(page))
		return LRU_INACTIVE_FILE;
	return LRU_INACTIVE_ANON;
}

static void __pagevec_lru_add_fn(struct page *page, struct lruvec *lruvec,
				 void *arg)
{
	enum lru_list lru;
	int was_unevictable = TestClearPageUnevictable(page);

	VM_BUG_ON_PAGE(PageLRU(page), page);

	SetPageLRU(page);
	/* order SetPageLRU() before the PG_mlocked test in page_evictable(),
	 * which races with the munlock path */
	smp_mb();

	if (page_evictable(page)) {
		lru = page_lru(page);
		update_page_reclaim_stat(lruvec, page_is_file_cache(page),
					 PageActive(page));
		if (was_unevictable)
			count_vm_event(UNEVICTABLE_PGRESCUED);
	} else {
		lru = LRU_UNEVICTABLE;
		ClearPageActive(page);
		SetPageUnevictable(page);
		if (!was_unevictable)
			count_vm_event(UNEVICTABLE_PGCULLED);
	}

	add_page_to_lru_list(page, lruvec, lru);
	trace_mm_lru_insertion(page, lru);
}
```
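`page_lru()` is called above but not listed in this article; in `include/linux/mm_inline.h` it returns `LRU_UNEVICTABLE` for PG_unevictable pages and otherwise `page_lru_base_type()` plus `LRU_ACTIVE` when PG_active is set. The sketch below folds that together with the unevictable branch of `__pagevec_lru_add_fn()` into one user-space function; `struct page_flags` and `choose_lru()` are hypothetical, and evictability is passed in rather than computed.

```c
#include <assert.h>
#include <stdbool.h>

enum lru_list {
	LRU_INACTIVE_ANON = 0,
	LRU_ACTIVE_ANON = 1,
	LRU_INACTIVE_FILE = 2,
	LRU_ACTIVE_FILE = 3,
	LRU_UNEVICTABLE = 4,
};

/* Hypothetical stand-in for the relevant page flags. */
struct page_flags {
	bool swap_backed;	/* PG_swapbacked: anonymous or shmem page */
	bool active;		/* PG_active */
	bool evictable;		/* result of page_evictable() */
};

/*
 * Sketch of the list choice in __pagevec_lru_add_fn(): evictable pages
 * get base type + LRU_ACTIVE if active, everything else goes to
 * LRU_UNEVICTABLE with PG_active cleared.
 */
static enum lru_list choose_lru(struct page_flags *pf)
{
	int lru;

	if (!pf->evictable) {
		pf->active = false;	/* ClearPageActive() */
		return LRU_UNEVICTABLE;
	}
	lru = pf->swap_backed ? LRU_INACTIVE_ANON : LRU_INACTIVE_FILE;
	if (pf->active)
		lru += 1;	/* LRU_ACTIVE */
	assert(lru <= LRU_ACTIVE_FILE);
	return (enum lru_list)lru;
}
```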

4.5.1 page_evictable

```c
int page_evictable(struct page *page)
{
	int ret;

	/* keep the inode's or swap cache's address_space from being freed */
	rcu_read_lock();
	ret = !mapping_unevictable(page_mapping(page)) && !PageMlocked(page);
	rcu_read_unlock();
	return ret;
}
```

4.5.2 page_mapping

```c
struct address_space *page_mapping(struct page *page)
{
	struct address_space *mapping;

	page = compound_head(page);

	if (unlikely(PageSlab(page)))
		return NULL;

	if (unlikely(PageSwapCache(page))) {
		swp_entry_t entry;

		entry.val = page_private(page);
		return swap_address_space(entry);
	}

	mapping = page->mapping;
	if ((unsigned long)mapping & PAGE_MAPPING_ANON)
		return NULL;

	return (void *)((unsigned long)mapping & ~PAGE_MAPPING_FLAGS);
}
```
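The last lines of `page_mapping()` rely on `page->mapping` being a tagged pointer: the objects it points to are at least word-aligned, so the two low bits are free to mark anonymous pages (`PAGE_MAPPING_ANON`, pointer to an `anon_vma`) and non-LRU movable pages (`PAGE_MAPPING_MOVABLE`); KSM pages set both. The sketch below reproduces the decode step in user space with the flag values from `include/linux/page-flags.h`; the one-field `struct address_space` and `tag_mapping()` are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Low-bit tags stored in page->mapping. */
#define PAGE_MAPPING_ANON	0x1
#define PAGE_MAPPING_MOVABLE	0x2
#define PAGE_MAPPING_KSM	(PAGE_MAPPING_ANON | PAGE_MAPPING_MOVABLE)
#define PAGE_MAPPING_FLAGS	(PAGE_MAPPING_ANON | PAGE_MAPPING_MOVABLE)

struct address_space { unsigned long flags; };	/* hypothetical stand-in */

/* Hypothetical helper: pack a pointer and tag bits into one word. */
static void *tag_mapping(void *ptr, uintptr_t tag)
{
	/* the low two bits must be free, i.e. at least 4-byte alignment */
	assert(((uintptr_t)ptr & PAGE_MAPPING_FLAGS) == 0);
	return (void *)((uintptr_t)ptr | tag);
}

/* Mirrors the tail of page_mapping(): anon/KSM -> NULL, else strip tags. */
static struct address_space *decode_mapping(void *mapping)
{
	if ((uintptr_t)mapping & PAGE_MAPPING_ANON)
		return NULL;
	return (struct address_space *)((uintptr_t)mapping &
					~(uintptr_t)PAGE_MAPPING_FLAGS);
}
```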

4.5.3 mapping_unevictable

```c
enum mapping_flags {
	AS_EIO = 0,
	AS_ENOSPC = 1,
	AS_MM_ALL_LOCKS = 2,
	AS_UNEVICTABLE = 3,
	AS_EXITING = 4,
	AS_NO_WRITEBACK_TAGS = 5,
};

static inline int mapping_unevictable(struct address_space *mapping)
{
	if (mapping)
		return test_bit(AS_UNEVICTABLE, &mapping->flags);
	return !!mapping;	/* always 0: a NULL mapping is never unevictable */
}
```
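Putting `page_evictable()` and `mapping_unevictable()` together: a page is evictable unless its mapping carries `AS_UNEVICTABLE` or the page is mlocked. For an anonymous page outside the swap cache, `page_mapping()` returns NULL, so only PG_mlocked decides. A user-space sketch with a one-field `struct address_space` and a hypothetical `evictable()` helper (the kernel's `test_bit()` is replaced by a shift):

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

#define AS_UNEVICTABLE 3

struct address_space { unsigned long flags; };	/* hypothetical stand-in */

/* Same logic as mapping_unevictable(): a NULL mapping is never unevictable. */
static int mapping_unevictable(struct address_space *mapping)
{
	if (mapping)
		return (mapping->flags >> AS_UNEVICTABLE) & 1;
	return 0;	/* the kernel spells this as !!mapping */
}

/* Sketch of page_evictable(), with PG_mlocked passed in as a bool. */
static bool evictable(struct address_space *mapping, bool mlocked)
{
	return !mapping_unevictable(mapping) && !mlocked;
}
```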

4.6 add_page_to_lru_list

```c
static __always_inline void add_page_to_lru_list(struct page *page,
				struct lruvec *lruvec, enum lru_list lru)
{
	update_lru_size(lruvec, lru, page_zonenum(page), hpage_nr_pages(page));
	list_add(&page->lru, &lruvec->lists[lru]);
}
```

4.6.1 update_lru_size

```c
static __always_inline void update_lru_size(struct lruvec *lruvec,
				enum lru_list lru, enum zone_type zid,
				int nr_pages)
{
	__update_lru_size(lruvec, lru, zid, nr_pages);
#ifdef CONFIG_MEMCG
	mem_cgroup_update_lru_size(lruvec, lru, zid, nr_pages);
#endif
}
```

4.6.2 __update_lru_size

```c
static __always_inline void __update_lru_size(struct lruvec *lruvec,
				enum lru_list lru, enum zone_type zid,
				int nr_pages)
{
	struct pglist_data *pgdat = lruvec_pgdat(lruvec);

	__mod_node_page_state(pgdat, NR_LRU_BASE + lru, nr_pages);
	__mod_zone_page_state(&pgdat->node_zones[zid],
				NR_ZONE_LRU_BASE + lru, nr_pages);
}
```

4.6.3 __mod_node_page_state

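```c
enum node_stat_item {
	NR_LRU_BASE,
	NR_INACTIVE_ANON = NR_LRU_BASE,
	NR_ACTIVE_ANON,
	NR_INACTIVE_FILE,
	NR_ACTIVE_FILE,
	NR_UNEVICTABLE,
	...
	NR_VM_NODE_STAT_ITEMS
};

/*
 * !CONFIG_SMP version from include/linux/vmstat.h. SMP kernels implement
 * __mod_node_page_state() in mm/vmstat.c with per-CPU deltas that are
 * folded into these counters once they exceed a threshold.
 */
static inline void __mod_node_page_state(struct pglist_data *pgdat,
			enum node_stat_item item, int delta)
{
	node_page_state_add(delta, pgdat, item);
}

static inline void node_page_state_add(long x, struct pglist_data *pgdat,
				 enum node_stat_item item)
{
	atomic_long_add(x, &pgdat->vm_stat[item]);	/* per-node counter */
	atomic_long_add(x, &vm_node_stat[item]);	/* global counter */
}
```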

4.6.4 __mod_zone_page_state

```c
enum zone_stat_item {
	NR_FREE_PAGES,
	NR_ZONE_LRU_BASE,
	NR_ZONE_INACTIVE_ANON = NR_ZONE_LRU_BASE,
	NR_ZONE_ACTIVE_ANON,
	NR_ZONE_INACTIVE_FILE,
	NR_ZONE_ACTIVE_FILE,
	NR_ZONE_UNEVICTABLE,
	...
	NR_VM_ZONE_STAT_ITEMS };

/* !CONFIG_SMP version; SMP kernels also batch through per-CPU deltas (mm/vmstat.c) */
static inline void __mod_zone_page_state(struct zone *zone,
			enum zone_stat_item item, long delta)
{
	zone_page_state_add(delta, zone, item);
}

static inline void zone_page_state_add(long x, struct zone *zone,
				 enum zone_stat_item item)
{
	atomic_long_add(x, &zone->vm_stat[item]);	/* per-zone counter */
	atomic_long_add(x, &vm_zone_stat[item]);	/* global counter */
}
```
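Both stat enums list the LRU counters in the same order as `enum lru_list`, so `NR_LRU_BASE + lru` and `NR_ZONE_LRU_BASE + lru` select the matching counter with plain addition, and `del_page_from_lru_list()` undoes an add by passing a negative page count. The sketch below trims the enums to those entries and uses plain `long` arrays for a single node with a single zone (assumptions for illustration); `model_update_lru_size()` is a hypothetical name. The value 512 models a 2 MiB transparent huge page with 4 KiB base pages, for which `hpage_nr_pages()` returns `HPAGE_PMD_NR`.

```c
#include <assert.h>

enum lru_list {
	LRU_INACTIVE_ANON, LRU_ACTIVE_ANON, LRU_INACTIVE_FILE,
	LRU_ACTIVE_FILE, LRU_UNEVICTABLE, NR_LRU_LISTS
};

/* Trimmed copies of the stat enums: only the LRU counters are kept. */
enum node_stat_item {
	NR_LRU_BASE,
	NR_VM_NODE_STAT_ITEMS = NR_LRU_BASE + NR_LRU_LISTS
};

enum zone_stat_item {
	NR_FREE_PAGES,
	NR_ZONE_LRU_BASE,	/* == 1: LRU counters start after NR_FREE_PAGES */
	NR_VM_ZONE_STAT_ITEMS = NR_ZONE_LRU_BASE + NR_LRU_LISTS
};

/* Plain longs stand in for atomic_long_t; one node with a single zone. */
static long node_stat[NR_VM_NODE_STAT_ITEMS];		/* pgdat->vm_stat[] */
static long vm_node_stat[NR_VM_NODE_STAT_ITEMS];	/* global node totals */
static long zone_stat[NR_VM_ZONE_STAT_ITEMS];		/* zone->vm_stat[] */
static long vm_zone_stat[NR_VM_ZONE_STAT_ITEMS];	/* global zone totals */

/* Sketch of __update_lru_size() on a !CONFIG_SMP, !CONFIG_MEMCG kernel. */
static void model_update_lru_size(enum lru_list lru, int nr_pages)
{
	node_stat[NR_LRU_BASE + lru] += nr_pages;
	vm_node_stat[NR_LRU_BASE + lru] += nr_pages;
	zone_stat[NR_ZONE_LRU_BASE + lru] += nr_pages;
	vm_zone_stat[NR_ZONE_LRU_BASE + lru] += nr_pages;
	assert(node_stat[NR_LRU_BASE + lru] >= 0);	/* more deletes than adds? */
}
```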

5. mark_page_accessed (second-chance algorithm)

```c
void mark_page_accessed(struct page *page)
{
	page = compound_head(page);
	if (!PageActive(page) && !PageUnevictable(page) &&
			PageReferenced(page)) {
		/* inactive page accessed again: promote it to the active list */
		if (PageLRU(page))
			activate_page(page);
		else
			__lru_cache_activate_page(page);
		ClearPageReferenced(page);
		if (page_is_file_cache(page))
			workingset_activation(page);
	} else if (!PageReferenced(page)) {
		/* otherwise just record the access in PG_referenced */
		SetPageReferenced(page);
	}
	if (page_is_idle(page))
		clear_page_idle(page);
}
```
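The effect is that an inactive page needs two accesses to be promoted: the first only sets PG_referenced, the second activates the page and clears PG_referenced again. The user-space sketch below tracks just the flags, assuming the activation succeeds; `struct page_state` and `model_mark_page_accessed()` are hypothetical, and list movement, workingset and idle-page handling are left out.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical subset of page state relevant to mark_page_accessed(). */
struct page_state {
	bool active;		/* PG_active */
	bool referenced;	/* PG_referenced */
	bool unevictable;	/* PG_unevictable */
};

/* Flag transitions of mark_page_accessed(), assuming activation succeeds. */
static void model_mark_page_accessed(struct page_state *p)
{
	if (!p->active && !p->unevictable && p->referenced) {
		p->active = true;	/* activate_page() sets PG_active */
		p->referenced = false;	/* ClearPageReferenced() */
	} else if (!p->referenced) {
		p->referenced = true;	/* SetPageReferenced() */
	}
}
```

Starting from an inactive, unreferenced page, successive calls give (inactive, referenced), then (active, unreferenced), then (active, referenced), after which further calls change nothing.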

5.1 activate_page

```c
#ifdef CONFIG_SMP
static DEFINE_PER_CPU(struct pagevec, activate_page_pvecs);

void activate_page(struct page *page)
{
	page = compound_head(page);
	if (PageLRU(page) && !PageActive(page) && !PageUnevictable(page)) {
		struct pagevec *pvec = &get_cpu_var(activate_page_pvecs);

		get_page(page);
		if (!pagevec_add(pvec, page) || PageCompound(page))
			pagevec_lru_move_fn(pvec, __activate_page, NULL);
		put_cpu_var(activate_page_pvecs);
	}
}

#else
void activate_page(struct page *page)
{
	struct zone *zone = page_zone(page);

	page = compound_head(page);
	spin_lock_irq(zone_lru_lock(zone));
	__activate_page(page, mem_cgroup_page_lruvec(page, zone->zone_pgdat), NULL);
	spin_unlock_irq(zone_lru_lock(zone));
}
#endif
```

5.2 __activate_page

```c
static void __activate_page(struct page *page, struct lruvec *lruvec,
			    void *arg)
{
	if (PageLRU(page) && !PageActive(page) && !PageUnevictable(page)) {
		int file = page_is_file_cache(page);
		int lru = page_lru_base_type(page);

		del_page_from_lru_list(page, lruvec, lru);
		SetPageActive(page);
		lru += LRU_ACTIVE;
		add_page_to_lru_list(page, lruvec, lru);
		trace_mm_lru_activate(page);

		__count_vm_event(PGACTIVATE);
		update_page_reclaim_stat(lruvec, file, 1);
	}
}
```

5.2.1 del_page_from_lru_list

```c
static __always_inline void del_page_from_lru_list(struct page *page,
				struct lruvec *lruvec, enum lru_list lru)
{
	list_del(&page->lru);
	update_lru_size(lruvec, lru, page_zonenum(page), -hpage_nr_pages(page));
}
```

5.2.2 add_page_to_lru_list

```c
static __always_inline void add_page_to_lru_list(struct page *page,
				struct lruvec *lruvec, enum lru_list lru)
{
	update_lru_size(lruvec, lru, page_zonenum(page), hpage_nr_pages(page));
	list_add(&page->lru, &lruvec->lists[lru]);
}
```

5.3 __lru_cache_activate_page

```c
static DEFINE_PER_CPU(struct pagevec, lru_add_pvec);

static void __lru_cache_activate_page(struct page *page)
{
	struct pagevec *pvec = &get_cpu_var(lru_add_pvec);
	int i;

	for (i = pagevec_count(pvec) - 1; i >= 0; i--) {
		struct page *pagevec_page = pvec->pages[i];

		if (pagevec_page == page) {
			SetPageActive(page);
			break;
		}
	}

	put_cpu_var(lru_add_pvec);
}
```
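`__lru_cache_activate_page()` handles a page that `mark_page_accessed()` sees before it has reached an LRU list, i.e. while it still sits in a per-CPU `lru_add_pvec`. It searches only the current CPU's vector, newest entry first, and merely sets PG_active; when the vector is drained later, `__pagevec_lru_add_fn()` picks the active list through `page_lru()`. The sketch below models only the search; `struct fake_page`, `struct fake_pagevec` and `lru_cache_activate()` are hypothetical.

```c
#include <assert.h>
#include <stdbool.h>

#define PAGEVEC_SIZE 15

/* Hypothetical stand-in for a page that is not on an LRU list yet. */
struct fake_page {
	bool active;	/* PG_active */
};

struct fake_pagevec {
	unsigned nr;
	struct fake_page *pages[PAGEVEC_SIZE];
};

/*
 * Same search as __lru_cache_activate_page(): scan the local add-pagevec
 * backwards (the page was most likely added last) and set PG_active if the
 * page is found. Returns whether it was found.
 */
static bool lru_cache_activate(struct fake_pagevec *pvec, struct fake_page *page)
{
	assert(pvec->nr <= PAGEVEC_SIZE);
	for (int i = (int)pvec->nr - 1; i >= 0; i--) {
		if (pvec->pages[i] == page) {
			page->active = true;	/* SetPageActive() */
			return true;
		}
	}
	return false;
}
```

If the page is queued on another CPU's vector, the search misses and this call does not set PG_active; the kernel deliberately avoids touching a remote CPU's pagevec here.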

6. page_check_references

```c
static enum page_references page_check_references(struct page *page,
						  struct scan_control *sc)
{
	int referenced_ptes, referenced_page;
	unsigned long vm_flags;

	referenced_ptes = page_referenced(page, 1, sc->target_mem_cgroup,
					  &vm_flags);
	referenced_page = TestClearPageReferenced(page);

	if (vm_flags & VM_LOCKED)
		return PAGEREF_RECLAIM;

	if (referenced_ptes) {
		if (PageSwapBacked(page))
			return PAGEREF_ACTIVATE;

		SetPageReferenced(page);

		if (referenced_page || referenced_ptes > 1)
			return PAGEREF_ACTIVATE;

		if (vm_flags & VM_EXEC)
			return PAGEREF_ACTIVATE;

		return PAGEREF_KEEP;
	}

	if (referenced_page && !PageSwapBacked(page))
		return PAGEREF_RECLAIM_CLEAN;

	return PAGEREF_RECLAIM;
}
```
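Reading the branches in order gives a small decision table. The sketch below restates it as a pure user-space function so the cases can be checked one by one; `struct ref_input` and `check_refs()` are hypothetical, while the enum values follow `enum page_references` in `mm/vmscan.c`.

```c
#include <assert.h>
#include <stdbool.h>

enum page_references {
	PAGEREF_RECLAIM,
	PAGEREF_RECLAIM_CLEAN,
	PAGEREF_KEEP,
	PAGEREF_ACTIVATE,
};

/* Hypothetical bundle of the inputs page_check_references() looks at. */
struct ref_input {
	int referenced_ptes;	/* return value of page_referenced() */
	bool referenced_page;	/* PG_referenced before TestClearPageReferenced() */
	bool vm_locked;		/* VM_LOCKED seen in some mapping */
	bool vm_exec;		/* VM_EXEC seen in some mapping */
	bool swap_backed;	/* PG_swapbacked: anonymous or shmem */
};

/* Same decision tree; *pg_referenced receives PG_referenced afterwards. */
static enum page_references check_refs(const struct ref_input *in,
				       bool *pg_referenced)
{
	*pg_referenced = false;		/* TestClearPageReferenced() */

	if (in->vm_locked)
		return PAGEREF_RECLAIM;

	if (in->referenced_ptes) {
		if (in->swap_backed)
			return PAGEREF_ACTIVATE;
		*pg_referenced = true;	/* SetPageReferenced() */
		if (in->referenced_page || in->referenced_ptes > 1)
			return PAGEREF_ACTIVATE;
		if (in->vm_exec)
			return PAGEREF_ACTIVATE;
		return PAGEREF_KEEP;
	}

	if (in->referenced_page && !in->swap_backed)
		return PAGEREF_RECLAIM_CLEAN;
	return PAGEREF_RECLAIM;
}
```

The first two test cases show the "look twice" rule for mapped file pages: one PTE reference only earns `PAGEREF_KEEP` plus PG_referenced, and a later scan that again finds a PTE reference, now with PG_referenced set, activates the page. Swap-backed pages are activated on the first PTE reference.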

6.1 page_referenced

```c
// 1. Use the rmap machinery to walk every PTE that maps the page.
// 2. For each PTE: if the young (accessed) bit, L_PTE_YOUNG on ARM32, is set,
//    the page was accessed since the last check, so count a reference; then
//    clear the bit. On ARM32 clearing it also clears the hardware page-table
//    entry, deliberately provoking a page fault: the next access to the PTE
//    faults, and the fault handler sets L_PTE_YOUNG again.
// 3. Return the referenced count. page_referenced_one() bumps it at most once
//    per VMA, so it is the number of mappings through which the page was
//    recently accessed.
int page_referenced(struct page *page,
		    int is_locked,
		    struct mem_cgroup *memcg,
		    unsigned long *vm_flags)
{
	int we_locked = 0;
	struct page_referenced_arg pra = {
		.mapcount = total_mapcount(page),
		.memcg = memcg,
	};
	struct rmap_walk_control rwc = {
		.rmap_one = page_referenced_one,
		.arg = (void *)&pra,
		.anon_lock = page_lock_anon_vma_read,
	};

	*vm_flags = 0;
	if (!page_mapped(page))
		return 0;

	if (!page_rmapping(page))
		return 0;

	if (!is_locked && (!PageAnon(page) || PageKsm(page))) {
		we_locked = trylock_page(page);
		if (!we_locked)
			return 1;
	}

	/*
	 * If we are reclaiming on behalf of a cgroup, skip
	 * counting on behalf of references from different
	 * cgroups
	 */
	if (memcg) {
		rwc.invalid_vma = invalid_page_referenced_vma;
	}

	rmap_walk(page, &rwc);
	*vm_flags = pra.vm_flags;

	if (we_locked)
		unlock_page(page);

	return pra.referenced;
}
```

6.2 page_referenced_one

```c
static bool page_referenced_one(struct page *page, struct vm_area_struct *vma,
			unsigned long address, void *arg)
{
	struct page_referenced_arg *pra = arg;
	struct page_vma_mapped_walk pvmw = {
		.page = page,
		.vma = vma,
		.address = address,
	};
	int referenced = 0;

	while (page_vma_mapped_walk(&pvmw)) {
		address = pvmw.address;

		if (vma->vm_flags & VM_LOCKED) {
			page_vma_mapped_walk_done(&pvmw);
			pra->vm_flags |= VM_LOCKED;
			return false;	/* stop the rmap walk */
		}

		if (pvmw.pte) {
			if (ptep_clear_flush_young_notify(vma, address,
						pvmw.pte)) {
				/* accesses through a VM_SEQ_READ mapping are not counted */
				if (likely(!(vma->vm_flags & VM_SEQ_READ)))
					referenced++;
			}
		} else if (IS_ENABLED(CONFIG_TRANSPARENT_HUGEPAGE)) {
			if (pmdp_clear_flush_young_notify(vma, address,
						pvmw.pmd))
				referenced++;
		} else {
			/* unexpected pmd-mapped page? */
			WARN_ON_ONCE(1);
		}

		pra->mapcount--;
	}

	if (referenced)
		clear_page_idle(page);
	if (test_and_clear_page_young(page))
		referenced++;

	if (referenced) {
		pra->referenced++;	/* at most once per VMA */
		pra->vm_flags |= vma->vm_flags;
	}

	if (!pra->mapcount)
		return false;	/* all mappings seen: stop the rmap walk */

	return true;
}
```
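Each call clears the young bits it finds, so `page_referenced()` effectively answers "was this page touched since the previous scan?", and `pra->referenced` grows at most once per VMA even when several PTEs in it were young. The user-space sketch below models that sampling; `struct fake_vma` and `count_referenced()` are hypothetical, and the young bits are plain booleans.

```c
#include <assert.h>
#include <stdbool.h>

#define MAX_PTES 4

/* Hypothetical stand-in for one VMA that maps the page. */
struct fake_vma {
	bool seq_read;		/* VM_SEQ_READ (madvise(MADV_SEQUENTIAL)) */
	int nr_ptes;		/* PTEs in this VMA that map the page */
	bool young[MAX_PTES];	/* accessed bits */
};

/*
 * Sketch of page_referenced() + page_referenced_one(): test-and-clear every
 * young bit and count each VMA at most once. Young bits in VM_SEQ_READ
 * mappings are cleared but not counted.
 */
static int count_referenced(struct fake_vma *vmas, int nr_vmas)
{
	int total = 0;

	for (int v = 0; v < nr_vmas; v++) {
		int referenced = 0;

		assert(vmas[v].nr_ptes <= MAX_PTES);
		for (int i = 0; i < vmas[v].nr_ptes; i++) {
			bool was_young = vmas[v].young[i];

			vmas[v].young[i] = false;	/* ptep_clear_flush_young() */
			if (was_young && !vmas[v].seq_read)
				referenced++;
		}
		if (referenced)
			total++;	/* pra->referenced++ once per VMA */
	}
	return total;
}
```

A second call right after the first returns 0 because every young bit has been cleared; the page only counts as referenced again if it is touched between two scans. The sketch leaves out the PMD (THP) path, page-idle tracking via `test_and_clear_page_young()`, `VM_LOCKED` handling and the early exit once `pra->mapcount` reaches 0.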