
I. Installation environment

JDK 1.8

II. Installing Hadoop

1. Download Hadoop

Choose a suitable version at mirror.bit.edu.cn/apache/hado…

Download Hadoop:

wget http://mirror.bit.edu.cn/apache/hadoop/common/hadoop-3.3.0/hadoop-3.3.0.tar.gz

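Extract the archive, then rename the extracted directory with mv for convenience (run both commands in /opt, since HADOOP_HOME below points to /opt/hadoop):

tar -xzvf hadoop-3.3.0.tar.gz

mv hadoop-3.3.0 hadoop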

2. Set the environment variables

Add the Hadoop settings to the environment variables:

vim /etc/profile

export HADOOP_HOME=/opt/hadoop

export PATH=$HADOOP_HOME/bin:$PATH

Run source /etc/profile to apply the changes.
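Editing /etc/profile by hand makes it easy to add the same line twice. The following is a minimal sketch of adding the export lines idempotently; `append_once` is a hypothetical helper name introduced here for illustration, not part of Hadoop.

```shell
# append_once FILE LINE
# Appends LINE to FILE unless an identical line is already present.
# (append_once is a hypothetical helper, not a Hadoop tool.)
append_once() {
  ao_file="$1"
  ao_line="$2"
  touch "$ao_file" || return 1
  # -x matches whole lines, -F treats the text literally
  grep -q -x -F -e "$ao_line" "$ao_file" || printf '%s\n' "$ao_line" >> "$ao_file"
}
```

As root, this could be called as append_once /etc/profile 'export HADOOP_HOME=/opt/hadoop', followed by source /etc/profile. The single quotes keep variables such as $PATH unexpanded until the profile is loaded.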

3. Edit the configuration file

Edit hadoop-env.sh (vim etc/hadoop/hadoop-env.sh, relative to the Hadoop directory) and set JAVA_HOME:

export JAVA_HOME=/usr/lib/jvm/java-1.8.0-openjdk-1.8.0.262.b10-0.el7_8.x86_64

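Run the example that ships with Hadoop to verify that the installation works. Run it from the Hadoop directory; the input directory must already exist and the output directory must not:

hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-3.3.0.jar grep input output 'dfs[a-z]'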
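The example job reads from an input directory and refuses to run if the output directory already exists. The sketch below prepares the input and runs the job. It assumes a standalone (local) setup in which input and output are plain local directories, and it uses the Hadoop configuration files as sample input; `run_grep_example` is a hypothetical wrapper name.

```shell
# run_grep_example [HADOOP_DIR]
# Runs the bundled grep example in a subshell so the caller's
# working directory is left unchanged.
run_grep_example() (
  cd "${1:-${HADOOP_HOME:-/opt/hadoop}}" || exit 1
  # The job fails if the output directory already exists.
  if [ -e output ]; then
    echo "output/ already exists; move or remove it first" >&2
    exit 1
  fi
  mkdir -p input || exit 1
  # Assumption: the XML config files serve as sample input.
  cp etc/hadoop/*.xml input/ || exit 1
  hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-3.3.0.jar \
    grep input output 'dfs[a-z]' || exit 1
  # Print the matches found by the job.
  cat output/*
)
```

If the job finishes and prints its result files, Hadoop is able to run MapReduce jobs locally.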

III. Installing Hive

1. Download Hive

wget mirror.bit.edu.cn/apache/hive…

Extract the archive: tar -zxvf apache-hive-3.1.2-bin.tar.gz

Rename the directory: mv apache-hive-3.1.2-bin hive

2. Set the environment variables

vim /etc/profile

export HIVE_HOME=/opt/hive

export PATH=$MAVEN_HOME/bin:$HIVE_HOME/bin:$HADOOP_HOME/bin:$PATH

source /etc/profile

3. Edit the hive-site.xml configuration

<!-- WARNING!!! This file is auto generated for documentation purposes ONLY! -->

<!-- WARNING!!! Any changes you make to this file will be ignored by Hive. -->

<!-- WARNING!!! You must make your changes in hive-site.xml instead. -->

<!-- Hive Execution Parameters -->

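<!-- The following properties already exist in the original configuration. Search for each one and edit it in place, or delete it and add them all together in one place. -->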

<property>

<name>javax.jdo.option.ConnectionUserName</name> <!-- user name -->

<value>root</value>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name> <!-- password -->

<value>123456</value>

</property>

<property>

<name>javax.jdo.option.ConnectionURL</name> <!-- MySQL connection URL -->

<value>jdbc:mysql://127.0.0.1:3306/hive</value>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name> <!-- MySQL JDBC driver class -->

<value>com.mysql.jdbc.Driver</value>

</property>

<property>

<name>hive.exec.script.wrapper</name>

<value/>

<description/>

</property>

Copy the MySQL JDBC driver jar into hive/lib, then go to the hive/bin directory and run:

schematool -dbType mysql -initSchema
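schematool cannot reach MySQL unless the driver jar is in hive/lib, so it can help to check for it first. A minimal sketch, assuming the jar file name starts with mysql-connector- (as the MySQL Connector/J downloads usually do); `find_mysql_driver` is a hypothetical helper name.

```shell
# find_mysql_driver [HIVE_LIB_DIR]
# Prints every MySQL driver jar found; returns non-zero if there is none.
find_mysql_driver() {
  fm_dir="${1:-${HIVE_HOME:-/opt/hive}/lib}"
  fm_status=1
  for fm_jar in "$fm_dir"/mysql-connector-*.jar; do
    # With no match the pattern stays literal, so test for a real file.
    if [ -f "$fm_jar" ]; then
      echo "$fm_jar"
      fm_status=0
    fi
  done
  return "$fm_status"
}
```

For example, find_mysql_driver && schematool -dbType mysql -initSchema runs the schema initialization only when a driver jar is present.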

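4. Verify the installation

Run hive --version to check the installed version.

Run hive and check that it opens the Hive command line; if it does, the installation succeeded.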

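IV. Hive data integration

Once the Hive hook is configured, every operation performed in Hive is picked up by the hook and published to Kafka as an event. The Atlas Ingest module then consumes the messages from Kafka, parses them into the corresponding Atlas metadata, and writes that metadata to the underlying JanusGraph database for storage and management.

1. Hive hook configuration

Edit hive-env.sh to specify where the Hive hook jars are located. The hook jars and tools are generated automatically when Atlas is built, under apache-atlas-sources-2.1.0/distro/target/.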

export HIVE_AUX_JARS_PATH=/opt/apache-atlas-2.1.0/hook/hive

Edit hive-site.xml to specify the hook class to run:

<property>

<name>hive.exec.post.hooks</name>

<value>org.apache.atlas.hive.hook.HiveHook</value>

</property>

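Note that this is a post-execution hook; hooks can also run before and during execution. In effect, hooks are callbacks over the execution lifecycle of a query.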
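To see which lifecycle hooks a hive-site.xml actually registers, the property names can be scanned directly. The sketch below looks for hive.exec.pre.hooks, hive.exec.post.hooks, and hive.exec.failure.hooks and prints each with its value. It is a plain text scan, not an XML parser, and it assumes each name and value sits on its own line; `list_hive_hooks` is a hypothetical helper name.

```shell
# list_hive_hooks FILE
# Prints "property=value" for each configured execution hook.
list_hive_hooks() {
  awk '
    /<name>hive\.exec\.(pre|post|failure)\.hooks<\/name>/ {
      name = $0
      gsub(/.*<name>|<\/name>.*/, "", name)   # keep only the property name
      want = 1
      next
    }
    want && /<value>/ {
      value = $0
      gsub(/.*<value>|<\/value>.*/, "", value) # keep only the value text
      print name "=" value
      want = 0
    }
  ' "$1"
}
```

Running list_hive_hooks /opt/hive/conf/hive-site.xml after the change above should list the Atlas hook class under hive.exec.post.hooks.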

2. Full synchronization configuration

Copy the Atlas configuration file atlas-application.properties into the Hive configuration directory.

Add two lines to it:

atlas.hook.hive.synchronous=false

atlas.rest.address=http://doit33:21000
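If the copied file already contains one of these keys, appending a second line leaves two conflicting entries. A minimal sketch of setting a key so that it appears exactly once; `set_prop` is a hypothetical helper name, and the sketch assumes simple key=value lines without line continuations.

```shell
# set_prop FILE KEY VALUE
# Removes any existing assignment of KEY, then appends KEY=VALUE.
set_prop() {
  sp_file="$1"
  sp_key="$2"
  sp_value="$3"
  sp_tmp="$sp_file.tmp.$$"
  # Escape dots so the key is matched literally in the regular expression.
  sp_pattern=$(printf '%s' "$sp_key" | sed 's/\./\\./g')
  touch "$sp_file" || return 1
  # Keep every line that does not assign this key
  # (grep exits with 1 when it keeps nothing, which is fine here).
  grep -v -E "^[[:space:]]*${sp_pattern}[[:space:]]*=" "$sp_file" > "$sp_tmp" || true
  printf '%s=%s\n' "$sp_key" "$sp_value" >> "$sp_tmp"
  mv "$sp_tmp" "$sp_file"
}
```

For example: set_prop /opt/hive/conf/atlas-application.properties atlas.rest.address http://doit33:21000.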

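Tables that already existed in Hive before Atlas was installed are not picked up by the hook, so no metadata is generated for them automatically. Atlas provides a tool that imports metadata for existing Hive databases and tables; it is also part of the hive-hook package produced by the Atlas build.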

bin/import-hive.sh

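The output is shown below. The import asks for the Atlas username and password and starts once they have been entered.

The message Hive Meta Data imported successfully!!! indicates that the import succeeded.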

sh import-hive.sh

Using Hive configuration directory [/opt/hive/conf]

Log file for import is /opt/apache-atlas-2.1.0/logs/import-hive.log

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/hive/lib/log4j-slf4j-impl-2.10.0.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/hadoop/share/hadoop/common/lib/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]

2021-01-15T11:41:01,614 INFO [main] org.apache.atlas.ApplicationProperties - Looking for atlas-application.properties in classpath

2021-01-15T11:41:01,619 INFO [main] org.apache.atlas.ApplicationProperties - Loading atlas-application.properties from file:/opt/hive/conf/atlas-application.properties

2021-01-15T11:41:01,660 INFO [main] org.apache.atlas.ApplicationProperties - Using graphdb backend 'janus'

2021-01-15T11:41:01,660 INFO [main] org.apache.atlas.ApplicationProperties - Using storage backend 'hbase2'

2021-01-15T11:41:01,660 INFO [main] org.apache.atlas.ApplicationProperties - Using index backend 'solr'

2021-01-15T11:41:01,660 INFO [main] org.apache.atlas.ApplicationProperties - Atlas is running in MODE: PROD.

2021-01-15T11:41:01,660 INFO [main] org.apache.atlas.ApplicationProperties - Setting solr-wait-searcher property 'true'

2021-01-15T11:41:01,660 INFO [main] org.apache.atlas.ApplicationProperties - Setting index.search.map-name property 'false'

2021-01-15T11:41:01,660 INFO [main] org.apache.atlas.ApplicationProperties - Setting atlas.graph.index.search.max-result-set-size = 150

2021-01-15T11:41:01,660 INFO [main] org.apache.atlas.ApplicationProperties - Property (set to default) atlas.graph.cache.db-cache = true

2021-01-15T11:41:01,660 INFO [main] org.apache.atlas.ApplicationProperties - Property (set to default) atlas.graph.cache.db-cache-clean-wait = 20

2021-01-15T11:41:01,660 INFO [main] org.apache.atlas.ApplicationProperties - Property (set to default) atlas.graph.cache.db-cache-size = 0.5

2021-01-15T11:41:01,661 INFO [main] org.apache.atlas.ApplicationProperties - Property (set to default) atlas.graph.cache.tx-cache-size = 15000

2021-01-15T11:41:01,661 INFO [main] org.apache.atlas.ApplicationProperties - Property (set to default) atlas.graph.cache.tx-dirty-size = 120

Enter username for atlas :- admin # enter the Atlas username and password manually

Enter password for atlas :-

2021-01-15T11:41:05,721 INFO [main] org.apache.atlas.AtlasBaseClient - Trying with address http://127.0.0.1:21000

2021-01-15T11:41:05,831 INFO [main] org.apache.atlas.AtlasBaseClient - method=GET path=api/atlas/admin/status contentType=application/json; charset=UTF-8 accept=application/json status=200

3. Testing the hook

With all hooks configured, create a test table in Hive and check whether it can be found in Atlas. If it can, the configuration works.

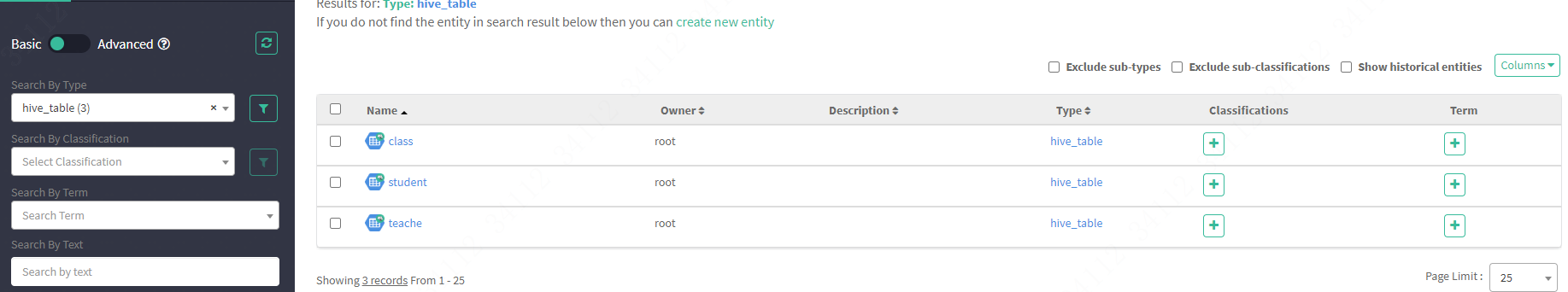

The screenshot above shows the table list before the new table is created.

Next, create another table in Hive:

```
hive> CREATE TABLE teache (
    > id int ,
    > name string ,
    > age int ,
    > sex string,
    > peoject string
    > ) ;
OK
Time taken: 0.645 seconds
hive> show tables;
OK
class
student
teache
Time taken: 0.108 seconds, Fetched: 3 row(s)
```

The new table then appears in Atlas automatically.
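Besides the web UI, the table can be looked up over the Atlas REST interface. The sketch below builds a basic-search URL and queries it with curl. The /api/atlas/v2/search/basic path with typeName and query parameters is an assumption to verify against the REST documentation of your Atlas version, and `atlas_search_url` and `atlas_search` are hypothetical helper names. Arguments are not URL-encoded, so the sketch only suits simple names.

```shell
# atlas_search_url BASE_URL TYPE_NAME QUERY
# Builds the search URL (assumed endpoint; arguments are not URL-encoded).
atlas_search_url() {
  printf '%s/api/atlas/v2/search/basic?typeName=%s&query=%s\n' "$1" "$2" "$3"
}

# atlas_search BASE_URL USER TYPE_NAME QUERY
# Sends the request; curl asks for the password because none is given.
atlas_search() {
  curl -u "$2" "$(atlas_search_url "$1" "$3" "$4")"
}
```

For example: atlas_search http://127.0.0.1:21000 admin hive_table teache. A JSON response that lists an entity for the table would confirm that the hook delivered the metadata.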

# V. Error log

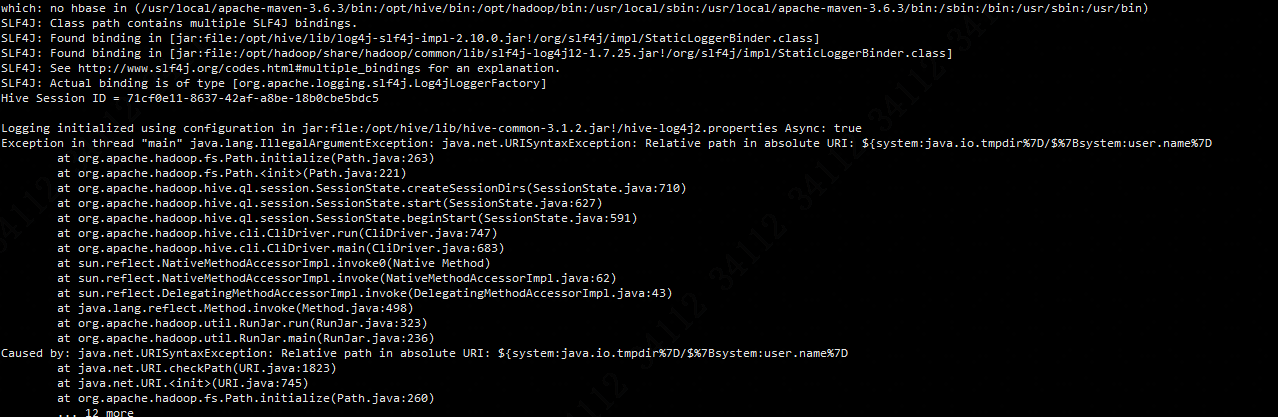

### 1. Invalid characters in the configuration file

Starting Hive fails with the following error:

Logging initialized using configuration in jar:file:/opt/hive/lib/hive-common-3.1.2.jar!/hive-log4j2.properties Async: true

Exception in thread "main" java.lang.IllegalArgumentException: java.net.URISyntaxException: Relative path in absolute URI: ${system:java.io.tmpdir%7D/$%7Bsystem:user.name%7D

at org.apache.hadoop.fs.Path.initialize(Path.java:263)

at org.apache.hadoop.fs.Path.<init>(Path.java:221)

at org.apache.hadoop.hive.ql.session.SessionState.createSessionDirs(SessionState.java:710)

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:627)

at org.apache.hadoop.hive.ql.session.SessionState.beginStart(SessionState.java:591)

at org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:747)

at org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:683)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.util.RunJar.run(RunJar.java:323)

at org.apache.hadoop.util.RunJar.main(RunJar.java:236)

Caused by: java.net.URISyntaxException: Relative path in absolute URI: ${system:java.io.tmpdir%7D/$%7Bsystem:user.name%7D

at java.net.URI.checkPath(URI.java:1823)

at java.net.URI.<init>(URI.java:745)

at org.apache.hadoop.fs.Path.initialize(Path.java:260)

... 12 more

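Fix:

Open hive-site.xml and replace the unresolved ${system:java.io.tmpdir}/${system:user.name} values with concrete paths. If Hive reports a specific row and column in the file, as in the next error, go to that position and delete the offending description text. The affected properties after the change:

hive.exec.scratchdir = /tmp/hive (HDFS root scratch dir for Hive jobs which gets created with write all (733) permission. For each connecting user, an HDFS scratch dir: ${hive.exec.scratchdir}/<username> is created, with ${hive.scratch.dir.permission}.)

hive.exec.local.scratchdir = /tmp/hive/local (Local scratch space for Hive jobs)

hive.downloaded.resources.dir = /tmp/hive/resources (Temporary local directory for added resources in the remote file system.)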
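To find every value that still contains such a placeholder, the file can be searched for the literal text ${system:. A minimal, read-only sketch; `find_system_placeholders` is a hypothetical helper name.

```shell
# find_system_placeholders FILE
# Prints "line-number:line" for every line that still contains
# a ${system:...} placeholder; returns non-zero if there is none.
find_system_placeholders() {
  # -F searches for the literal text, -n adds line numbers
  grep -n -F '${system:' "$1"
}
```

For example, find_system_placeholders /opt/hive/conf/hive-site.xml lists the lines to edit; once it prints nothing, no placeholder of this kind remains.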

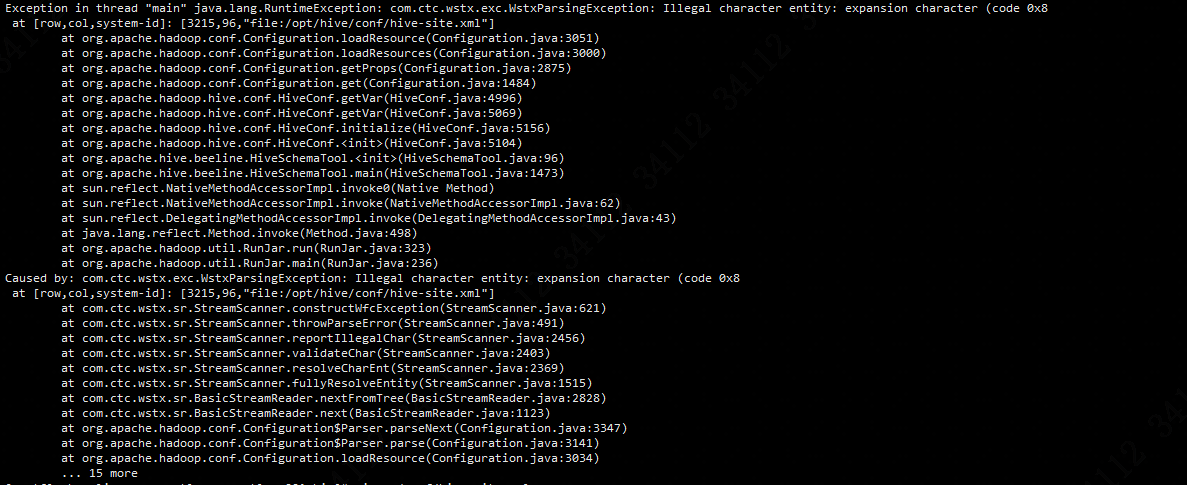

### 2. Guava version mismatch

Exception in thread "main" java.lang.RuntimeException: com.ctc.wstx.exc.WstxParsingException: Illegal character entity: expansion character (code 0x8

at [row,col,system-id]: [3215,96,"file:/opt/hive/conf/hive-site.xml"]

at org.apache.hadoop.conf.Configuration.loadResource(Configuration.java:3051)

at org.apache.hadoop.conf.Configuration.loadResources(Configuration.java:3000)

at org.apache.hadoop.conf.Configuration.getProps(Configuration.java:2875)

at org.apache.hadoop.conf.Configuration.get(Configuration.java:1484)

at org.apache.hadoop.hive.conf.HiveConf.getVar(HiveConf.java:4996)

at org.apache.hadoop.hive.conf.HiveConf.getVar(HiveConf.java:5069)

at org.apache.hadoop.hive.conf.HiveConf.initialize(HiveConf.java:5156)

at org.apache.hadoop.hive.conf.HiveConf.<init>(HiveConf.java:5104)

at org.apache.hive.beeline.HiveSchemaTool.<init>(HiveSchemaTool.java:96)

at org.apache.hive.beeline.HiveSchemaTool.main(HiveSchemaTool.java:1473)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.util.RunJar.run(RunJar.java:323)

at org.apache.hadoop.util.RunJar.main(RunJar.java:236)

Caused by: com.ctc.wstx.exc.WstxParsingException: Illegal character entity: expansion character (code 0x8

at [row,col,system-id]: [3215,96,"file:/opt/hive/conf/hive-site.xml"]

at com.ctc.wstx.sr.StreamScanner.constructWfcException(StreamScanner.java:621)

at com.ctc.wstx.sr.StreamScanner.throwParseError(StreamScanner.java:491)

at com.ctc.wstx.sr.StreamScanner.reportIllegalChar(StreamScanner.java:2456)

at com.ctc.wstx.sr.StreamScanner.validateChar(StreamScanner.java:2403)

at com.ctc.wstx.sr.StreamScanner.resolveCharEnt(StreamScanner.java:2369)

at com.ctc.wstx.sr.StreamScanner.fullyResolveEntity(StreamScanner.java:1515)

at com.ctc.wstx.sr.BasicStreamReader.nextFromTree(BasicStreamReader.java:2828)

at com.ctc.wstx.sr.BasicStreamReader.next(BasicStreamReader.java:1123)

at org.apache.hadoop.conf.Configuration$Parser.parseNext(Configuration.java:3347)

at org.apache.hadoop.conf.Configuration$Parser.parse(Configuration.java:3141)

at org.apache.hadoop.conf.Configuration.loadResource(Configuration.java:3034)

... 15 more
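The trace above reports the exact position of the illegal character entity: row 3215, column 96 of hive-site.xml. The sketch below shows two read-only ways to locate it, by printing the reported row and by searching for numeric character entities (code 0x8 is typically written as &#8;). `show_line` and `find_char_entities` are hypothetical helper names.

```shell
# show_line FILE ROW
# Prints the given row of the file, e.g. the row named in the error.
show_line() {
  sed -n "${2}p" "$1"
}

# find_char_entities FILE
# Prints "line-number:line" for lines containing a numeric character
# entity such as &#8; (named entities like &amp; are not matched).
find_char_entities() {
  grep -n -E '&#x?[0-9A-Fa-f]+;' "$1"
}
```

For example, show_line /opt/hive/conf/hive-site.xml 3215 prints the row from the error message, where the entity can then be deleted by hand.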

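Fix:

Note that the trace above is a parsing error: hive-site.xml contains an illegal character entity (code 0x8) at row 3215, column 96, and deleting that character from the description at that position resolves it. The guava mismatch, which shows up as an error mentioning com.google.common.base.Preconditions.checkArgument, is resolved as follows:

1. The class behind com.google.common.base.Preconditions.checkArgument lives in the guava jar.

2. In hadoop-3.2.1 (path: hadoop/share/hadoop/common/lib) that jar is guava-27.0-jre.jar, while in hive-3.1.2 (path: hive/lib) it is guava-19.0.1.jar. Check the actual file names in your own installation.

3. Make the versions match: delete the lower-version jar from Hive and copy the higher-version jar from Hadoop into Hive's lib directory.

After starting Hive again, the problem is resolved.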
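The jar swap can be sketched as a small function. Unlike the steps above, this sketch moves Hive's old guava jar into a backup directory instead of deleting it, so the change can be undone. `sync_guava` and the default backup location next to the lib directory are assumptions made for illustration.

```shell
# sync_guava HADOOP_LIB_DIR HIVE_LIB_DIR [BACKUP_DIR]
# Moves Hive's guava jars aside and copies Hadoop's guava jar in.
sync_guava() {
  sg_hadoop="$1"
  sg_hive="$2"
  sg_backup="${3:-$sg_hive.guava-backup}"
  # Refuse to touch Hive if Hadoop has no guava jar to copy.
  ls "$sg_hadoop"/guava-*.jar >/dev/null 2>&1 || return 1
  mkdir -p "$sg_backup" || return 1
  for sg_jar in "$sg_hive"/guava-*.jar; do
    if [ -f "$sg_jar" ]; then
      mv "$sg_jar" "$sg_backup/" || return 1
    fi
  done
  cp "$sg_hadoop"/guava-*.jar "$sg_hive"/
}
```

With the paths used in this article, the call would be sync_guava /opt/hadoop/share/hadoop/common/lib /opt/hive/lib.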