Debezium is a change data capture platform. Working together with MySQL and Kafka, it reads the MySQL binlog and writes the changes it finds to Kafka.

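After installing MySQL, configure the binlog. The versions used here are:

MySQL version: 5.7.23

Kafka version: kafka_2.12-2.3.0

Debezium version: debezium-connector-mysql-1.0.0.Beta3-plugin.tar.gz

1. If MySQL is installed in Docker, copy the MySQL configuration file out of the container first: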

docker cp mysql:/etc/mysql/mysql.conf.d/mysqld.cnf /data

After editing the file, copy it back:

docker cp /data/mysqld.cnf mysql:/etc/mysql/mysql.conf.d/

The edit consists of adding these three lines:

log-bin=/var/lib/mysql/mysql-bin

binlog-format=ROW

server-id=123454

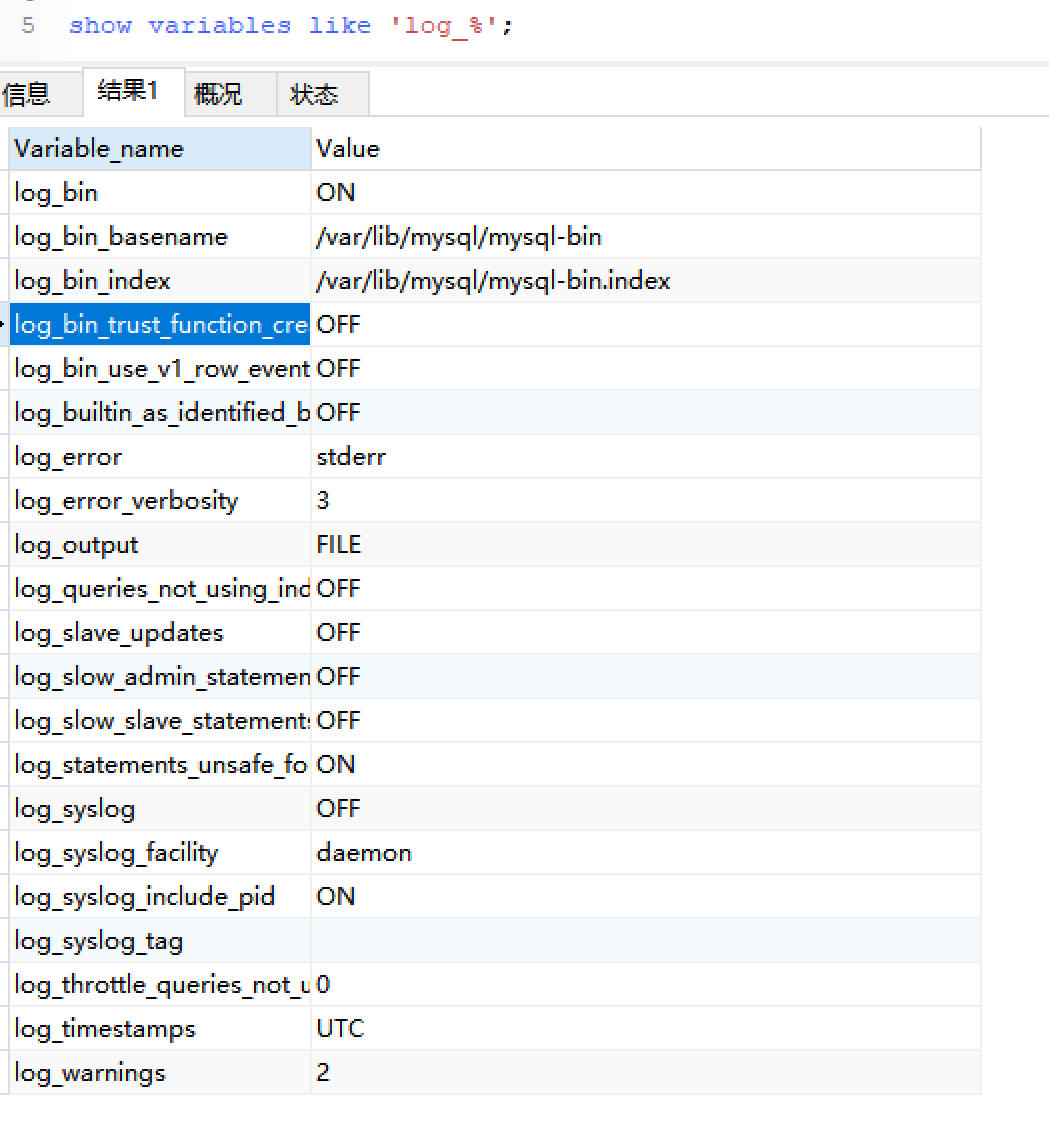

Restart MySQL so that the new settings take effect, then check whether log_bin is enabled:

show variables like 'log_%';
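
Before copying the file back, the edit can also be checked offline. The snippet below is a minimal sketch of such a check, assuming the file only uses plain key=value lines; the class name BinlogConfigCheck and its methods are made up for this illustration. It treats `-` and `_` in option names as equivalent, as MySQL does.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

class BinlogConfigCheck {

    /** Parses simple key=value lines; comments (# or ;) and [section] headers are skipped. */
    static Map<String, String> parse(String cnfText) {
        Map<String, String> settings = new HashMap<>();
        for (String raw : cnfText.split("\\R")) {
            String line = raw.trim();
            if (line.isEmpty() || line.startsWith("#") || line.startsWith(";") || line.startsWith("[")) {
                continue;
            }
            int eq = line.indexOf('=');
            if (eq < 0) {
                continue;
            }
            // MySQL accepts both log-bin and log_bin, so normalize keys to dashes.
            String key = line.substring(0, eq).trim().replace('_', '-');
            settings.put(key, line.substring(eq + 1).trim());
        }
        return settings;
    }

    /** Returns a description of each setting from step 1 that is missing or wrong. */
    static List<String> missingSettings(String cnfText) {
        Map<String, String> s = parse(cnfText);
        List<String> problems = new ArrayList<>();
        if (!s.containsKey("log-bin")) {
            problems.add("log-bin is not set");
        }
        if (!"ROW".equalsIgnoreCase(s.get("binlog-format"))) {
            problems.add("binlog-format must be ROW");
        }
        if (!s.containsKey("server-id")) {
            problems.add("server-id is not set");
        }
        return problems;
    }

    public static void main(String[] args) {
        String cnf = "[mysqld]\n"
                + "log-bin=/var/lib/mysql/mysql-bin\n"
                + "binlog-format=ROW\n"
                + "server-id=123454\n";
        // An empty list means all three settings are present.
        System.out.println(missingSettings(cnf));
    }
}
```

This only inspects the file text; the `show variables` query above remains the authoritative check once MySQL has restarted.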

2. Next, set up the Debezium connector

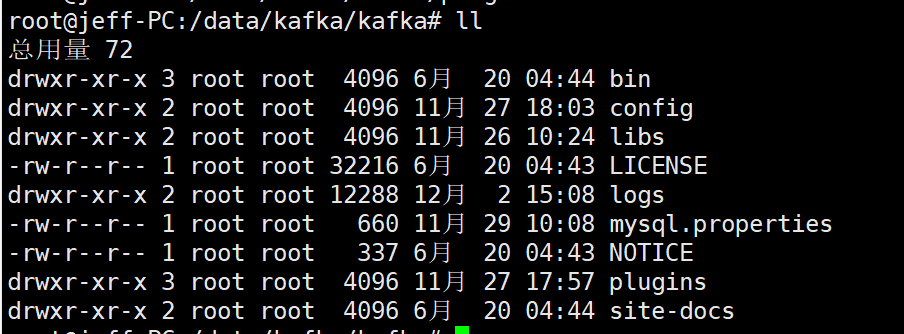

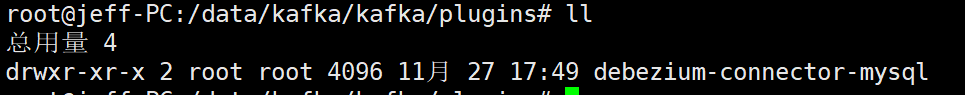

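Download debezium-connector-mysql-1.0.0.Beta3-plugin.tar.gz and extract it; the extracted directory is named debezium-connector-mysql.

Copy the extracted directory into the plugins directory under the Kafka root directory, creating the plugins directory if it does not exist. Kafka Connect only loads the connector if plugin.path in the worker properties file points at that directory.

3. After starting Kafka Connect, create the connector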

curl -H "Content-Type:application/json" -XPUT 'http://127.0.0.1:8083/connectors/order-center-connector1/config' -d '

{

"connector.class": "io.debezium.connector.mysql.MySqlConnector",

"tasks.max": "1",

"database.hostname": "<replica IP>",

"database.port": "3306",

"database.user": "debezium",

"database.password": "DebezIum0109",

"database.server.id": "19991",

"database.server.name": "trade_order_0",

"database.whitelist": "tx_order",

"include.schema.changes": "false",

"snapshot.mode": "schema_only",

"snapshot.locking.mode": "none",

"database.history.kafka.bootstrap.servers": "127.0.0.1:9092",

"database.history.kafka.topic": "dbhistory.trade_order_0",

"decimal.handling.mode": "string",

"table.whitelist": "tx_order",

"database.history.store.only.monitored.tables.ddl":"true",

"database.history.skip.unparseable.ddl":"true"

}'

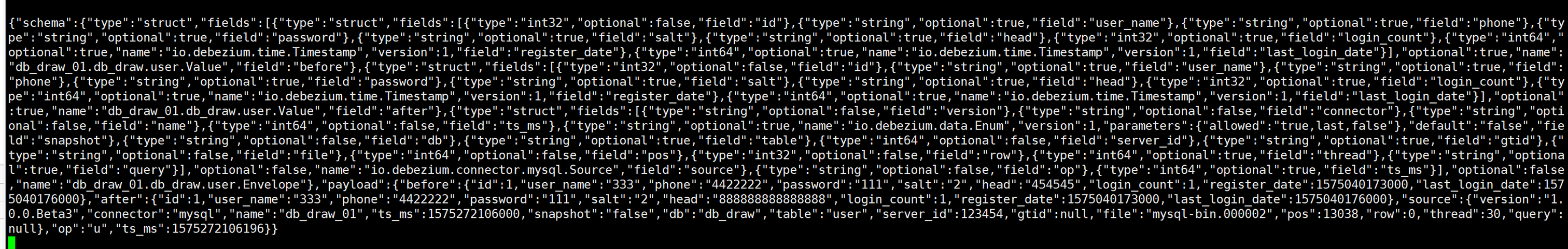
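
The same PUT request can be issued from Java instead of curl. The sketch below assumes Java 11 or later (for java.net.http) and a Connect worker on 127.0.0.1:8083 as in the curl command; the class and method names are made up for this illustration. It only builds the request, so it runs without a worker; the commented line shows how it would be sent.

```java
import java.net.URI;
import java.net.http.HttpRequest;
import java.nio.charset.StandardCharsets;

class ConnectorConfigRequest {

    /** Builds (but does not send) PUT /connectors/{name}/config for a Kafka Connect worker. */
    static HttpRequest putConfig(String connectBaseUrl, String connectorName, String configJson) {
        return HttpRequest.newBuilder()
                .uri(URI.create(connectBaseUrl + "/connectors/" + connectorName + "/config"))
                .header("Content-Type", "application/json")
                .PUT(HttpRequest.BodyPublishers.ofString(configJson, StandardCharsets.UTF_8))
                .build();
    }

    public static void main(String[] args) {
        // Shortened config for illustration; use the full JSON object from the curl command.
        String config = "{\"connector.class\":\"io.debezium.connector.mysql.MySqlConnector\","
                + "\"tasks.max\":\"1\"}";
        HttpRequest request = putConfig("http://127.0.0.1:8083", "order-center-connector1", config);
        System.out.println(request.method() + " " + request.uri());
        // To send it against a running worker:
        // java.net.http.HttpClient.newHttpClient()
        //         .send(request, java.net.http.HttpResponse.BodyHandlers.ofString());
    }
}
```

PUT on the config endpoint creates the connector if it does not exist and updates it otherwise, so the request can be repeated safely after editing the configuration.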

4. View the data in Kafka

bin/kafka-topics.sh --list --zookeeper localhost:2181

bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic db_draw_01.db_draw.user --from-beginning

Then check the Kafka logs.
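
The topic name in the consumer command follows the naming scheme used by the Debezium MySQL connector versions in this article: serverName.databaseName.tableName, where serverName is the value of database.server.name. The helper below is a small sketch of that rule; the class and method names are made up for this illustration. Note that db_draw_01.db_draw.user implies a connector whose server name is db_draw_01, whereas the connector created in step 3 (server name trade_order_0, database tx_order) would write to topics starting with trade_order_0.tx_order.

```java
class DebeziumTopicNames {

    /** Joins the logical server name, database and table into the per-table topic name. */
    static String tableTopic(String serverName, String database, String table) {
        return serverName + "." + database + "." + table;
    }

    public static void main(String[] args) {
        // prints db_draw_01.db_draw.user
        System.out.println(tableTopic("db_draw_01", "db_draw", "user"));
    }
}
```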

5. Consume the Kafka messages from Java

<dependency>

<groupId>org.springframework.kafka</groupId>

<artifactId>spring-kafka</artifactId>

<version>1.1.5.RELEASE</version>

</dependency>

Configure the Kafka consumer:

import com.fasterxml.jackson.databind.DeserializationFeature;

import com.fasterxml.jackson.databind.ObjectMapper;

import com.fasterxml.jackson.databind.PropertyNamingStrategy;

import com.jeff.boot.controller.DebeziumEvent;

import com.jeff.boot.controller.KafkaListener;

import org.apache.kafka.clients.consumer.ConsumerConfig;

import org.apache.kafka.clients.consumer.OffsetResetStrategy;

import org.apache.kafka.common.serialization.StringDeserializer;

import org.springframework.beans.factory.annotation.Value;

import org.springframework.context.annotation.Bean;

import org.springframework.context.annotation.Configuration;

import org.springframework.kafka.annotation.EnableKafka;

import org.springframework.kafka.config.ConcurrentKafkaListenerContainerFactory;

import org.springframework.kafka.config.KafkaListenerContainerFactory;

import org.springframework.kafka.core.ConsumerFactory;

import org.springframework.kafka.core.DefaultKafkaConsumerFactory;

import org.springframework.kafka.listener.ConcurrentMessageListenerContainer;

import org.springframework.kafka.support.serializer.JsonDeserializer;

import java.util.HashMap;

import java.util.Locale;

import java.util.Map;

import static org.springframework.kafka.listener.AbstractMessageListenerContainer.AckMode.MANUAL;

/**

* https://blog.csdn.net/russle/article/details/80296006

*

* @description:

* @author: MoXingwang 2018-11-29 21:36

**/

@Configuration

@EnableKafka

public class KafkaConsumerConfig {

@Value("${kafka.bootstrapServers}")

private String servers;

@Value("${kafka.groupId}")

private String groupId;

@Bean

KafkaListenerContainerFactory<ConcurrentMessageListenerContainer<String, DebeziumEvent>> kafkaListenerContainerFactory() {

ConcurrentKafkaListenerContainerFactory<String, DebeziumEvent> factory = new ConcurrentKafkaListenerContainerFactory<>();

factory.setConsumerFactory(consumerFactory());

factory.getContainerProperties().setAckMode(MANUAL);

factory.setBatchListener(true);

return factory;

}

@Bean

public ConsumerFactory<String, DebeziumEvent> consumerFactory() {

return new DefaultKafkaConsumerFactory<>(consumerConfigs(),

new StringDeserializer(),

new JsonDeserializer<>(DebeziumEvent.class,fooObjectMapper()));

}

@Bean

public Map<String, Object> consumerConfigs() {

Map<String, Object> propsMap = new HashMap<>();

propsMap.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, servers);

propsMap.put(ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG, false);

propsMap.put(ConsumerConfig.AUTO_COMMIT_INTERVAL_MS_CONFIG, "100");

propsMap.put(ConsumerConfig.SESSION_TIMEOUT_MS_CONFIG, "99999");

propsMap.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class);

propsMap.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, JsonDeserializer.class);

propsMap.put(ConsumerConfig.GROUP_ID_CONFIG, groupId);

propsMap.put(ConsumerConfig.AUTO_OFFSET_RESET_CONFIG, OffsetResetStrategy.EARLIEST.toString().toLowerCase(Locale.ROOT));

return propsMap;

}

@Bean

public ObjectMapper fooObjectMapper() {

ObjectMapper map = new ObjectMapper();

map.setPropertyNamingStrategy(PropertyNamingStrategy.CAMEL_CASE_TO_LOWER_CASE_WITH_UNDERSCORES);

map.configure(DeserializationFeature.FAIL_ON_UNKNOWN_PROPERTIES, false);

return map;

}

@Bean

KafkaListener listener() {

return new KafkaListener();

}

}

import org.apache.kafka.clients.consumer.ConsumerRecord;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

import org.springframework.kafka.support.Acknowledgment;

import java.util.*;

import java.util.concurrent.ExecutorService;

public class KafkaListener {

private static Logger logger = LoggerFactory.getLogger(KafkaListener.class);

private static final int THREAD_COUNT = Runtime.getRuntime().availableProcessors();

private static final int MULTITHREADING_THRESHOLD = THREAD_COUNT * 4;

private static final Map<Integer, ExecutorService> THREADS_MAP = new HashMap<>();

@org.springframework.kafka.annotation.KafkaListener(id = "listenerDbuser", topics = "db_draw_01.db_draw.user")

public void testKafka(List<ConsumerRecord<Object, DebeziumEvent>> crs, Acknowledgment acknowledgment) {

for (ConsumerRecord<Object, DebeziumEvent> cr : crs) {

DebeziumEvent debeziumEvent = cr.value();

System.out.println(debeziumEvent);

}

// With a batch listener, acknowledge once after the whole batch has been processed.

acknowledgment.acknowledge();

}

}
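
The DebeziumEvent class that the listener deserializes into is not shown here. A Debezium change event carries an `op` field ("c" for create, "u" for update, "d" for delete, "r" for a snapshot read) alongside `before` and `after` row images. The sketch below shows one way a consumer might interpret those fields; the class DebeziumOps, its nested enum and the relevantRow helper are hypothetical names, and the row values in main are made-up sample data.

```java
import java.util.Collections;
import java.util.Map;

class DebeziumOps {

    /** Operation codes found in the "op" field of a Debezium change event. */
    enum Op {
        CREATE("c"), UPDATE("u"), DELETE("d"), READ("r");

        final String code;

        Op(String code) {
            this.code = code;
        }

        static Op fromCode(String code) {
            for (Op op : values()) {
                if (op.code.equals(code)) {
                    return op;
                }
            }
            throw new IllegalArgumentException("Unknown op code: " + code);
        }
    }

    /** Picks the row image that describes the row: "before" for deletes, "after" otherwise. */
    static Map<String, Object> relevantRow(Op op, Map<String, Object> before, Map<String, Object> after) {
        return op == Op.DELETE ? before : after;
    }

    public static void main(String[] args) {
        // Hypothetical row images for an update event.
        Map<String, Object> before = Collections.singletonMap("first_name", "old name");
        Map<String, Object> after = Collections.singletonMap("first_name", "new name");
        Op op = Op.fromCode("u");
        System.out.println(op + " -> " + relevantRow(op, before, after));
    }
}
```

For a delete event `after` is null, which is why the helper falls back to `before` in that case.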

The following walkthrough is taken from the example on the official site; everything is installed and run in Docker.

1. Install and start ZooKeeper

docker run -it --rm --name zookeeper -p 2181:2181 -p 2888:2888 -p 3888:3888 debezium/zookeeper:0.9

2. Install and start Kafka

docker run -it --rm --name kafka -p 9092:9092 --link zookeeper:zookeeper debezium/kafka:0.9

3. Install and start MySQL

docker run -it --rm --name mysql -p 3306:3306 -e MYSQL_ROOT_PASSWORD=debezium -e MYSQL_USER=mysqluser -e MYSQL_PASSWORD=mysqlpw debezium/example-mysql:0.9

Start a MySQL client:

docker run -it --rm --name mysqlterm --link mysql mysql:5.7 sh -c 'exec mysql -h"$MYSQL_PORT_3306_TCP_ADDR" -P"$MYSQL_PORT_3306_TCP_PORT" -uroot -p"$MYSQL_ENV_MYSQL_ROOT_PASSWORD"'

4. Start Kafka Connect

docker run -it --rm --name connect -p 8083:8083 -e GROUP_ID=1 -e CONFIG_STORAGE_TOPIC=my_connect_configs -e OFFSET_STORAGE_TOPIC=my_connect_offsets -e STATUS_STORAGE_TOPIC=my_connect_statuses --link zookeeper:zookeeper --link kafka:kafka --link mysql:mysql debezium/connect:0.9

Query Kafka Connect directly through its REST interface:

curl -H "Accept:application/json" localhost:8083/

Response:

{"version":"2.1.1","commit":"cb8625948210849f"}

5. Add a connector

curl -i -X POST -H "Accept:application/json" -H "Content-Type:application/json" localhost:8083/connectors/ -d '{ "name": "inventory-connector", "config": { "connector.class": "io.debezium.connector.mysql.MySqlConnector", "tasks.max": "1", "database.hostname": "mysql", "database.port": "3306", "database.user": "debezium", "database.password": "dbz", "database.server.id": "184054", "database.server.name": "dbserver1", "database.whitelist": "inventory", "database.history.kafka.bootstrap.servers": "kafka:9092", "database.history.kafka.topic": "dbhistory.inventory" } }'

Request localhost:8083/connectors/inventory-connector to see the details of the connector:

{ "name": "inventory-connector",

"config": {

"connector.class": "io.debezium.connector.mysql.MySqlConnector",

"tasks.max": "1",

"database.hostname": "mysql",

"database.port": "3306",

"database.user": "debezium",

"database.password": "dbz",

"database.server.id": "184054",

"database.server.name": "dbserver1",

"database.whitelist": "inventory",

"database.history.kafka.bootstrap.servers": "kafka:9092",

"database.history.kafka.topic": "schema-changes.inventory"

}

}

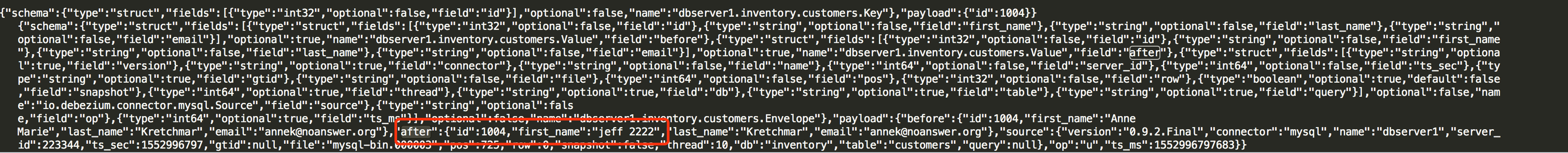
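
Note that POST /connectors expects the configuration wrapped in an object with "name" and "config" keys, whereas PUT /connectors/{name}/config takes the flat configuration object on its own. The sketch below builds both shapes from the same map; the class ConnectorPayload and its methods are made up for this illustration, and the hand-written JSON quoting only escapes backslashes and double quotes, which is enough for connector property values like the ones above but is not a general JSON encoder.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.stream.Collectors;

class ConnectorPayload {

    /** Minimal JSON string quoting: escapes backslash and double quote only. */
    static String quote(String s) {
        return "\"" + s.replace("\\", "\\\\").replace("\"", "\\\"") + "\"";
    }

    /** Body for PUT /connectors/{name}/config: the flat configuration object. */
    static String flatConfig(Map<String, String> config) {
        return config.entrySet().stream()
                .map(e -> quote(e.getKey()) + ":" + quote(e.getValue()))
                .collect(Collectors.joining(",", "{", "}"));
    }

    /** Body for POST /connectors: the configuration wrapped with the connector name. */
    static String createBody(String name, Map<String, String> config) {
        return "{\"name\":" + quote(name) + ",\"config\":" + flatConfig(config) + "}";
    }

    public static void main(String[] args) {
        // LinkedHashMap keeps the properties in insertion order.
        Map<String, String> config = new LinkedHashMap<>();
        config.put("connector.class", "io.debezium.connector.mysql.MySqlConnector");
        config.put("database.hostname", "mysql");
        config.put("database.server.name", "dbserver1");
        System.out.println(createBody("inventory-connector", config));
        System.out.println(flatConfig(config));
    }
}
```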

6. Connect to the database with the MySQL client and modify some data

UPDATE customers SET first_name='jeff 2222' WHERE id=1004;

7. Watch the data in Kafka

docker run -it --name watcher --rm --link zookeeper:zookeeper --link kafka:kafka debezium/kafka:0.9 watch-topic -a -k dbserver1.inventory.customers

As the figure below shows, once the update succeeds the change made in the database can be read from Kafka.

List the Kafka topics:

bin/kafka-topics.sh --list --zookeeper localhost:2181

bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic test --from-beginning