Basic approach:

- The dlib library

- OpenCV

- shape_predictor_68_face_landmarks.dat (dlib's pretrained model file for detecting the 68 facial landmarks)

References

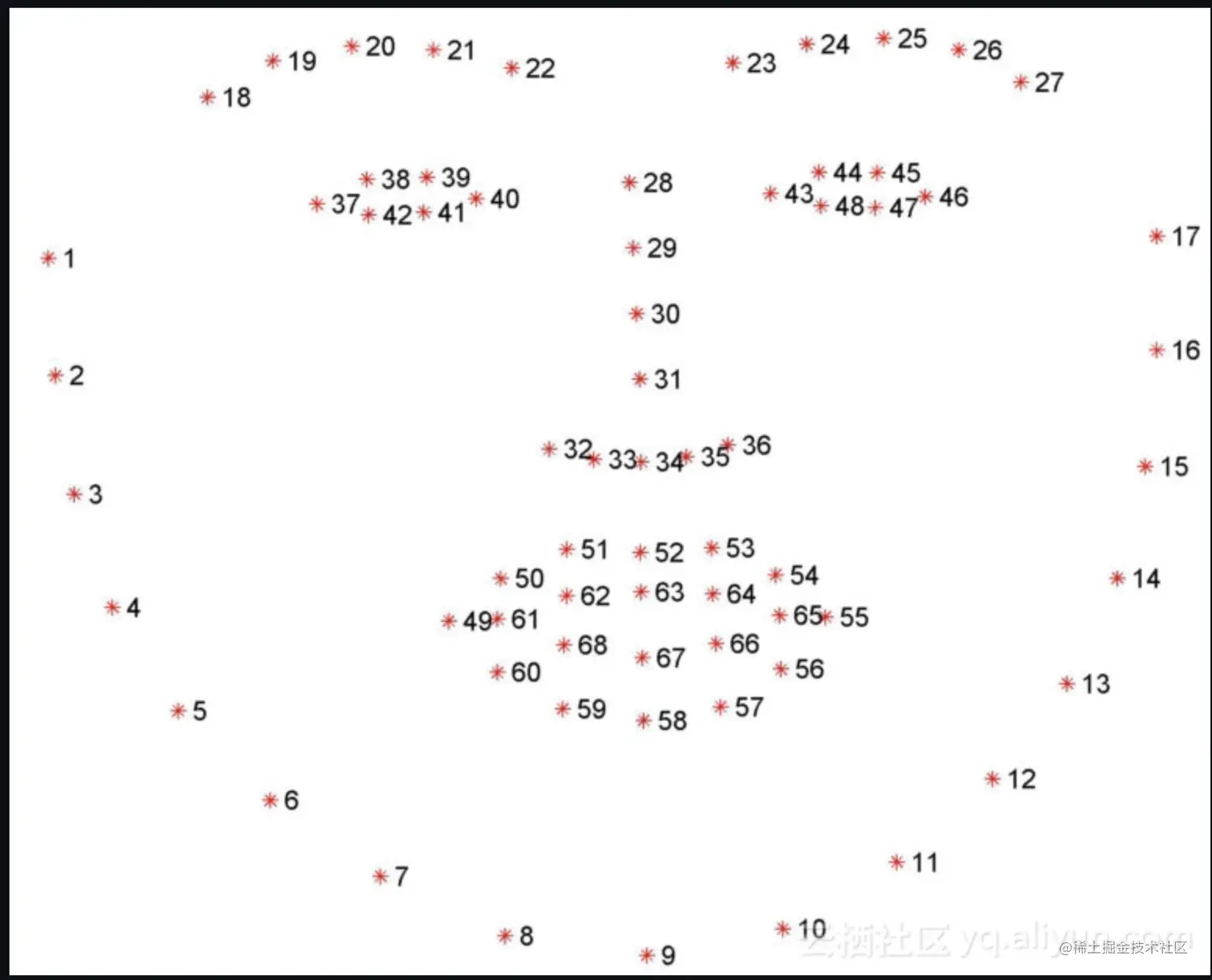

- The 68 facial landmark points
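
For orientation, the 68 points are usually grouped into contiguous index ranges, one per facial region. The sketch below hard-codes the ranges commonly used with this model (0-based, end-exclusive) so that it runs without dlib. The names `LANDMARK_RANGES` and `region_points` are illustrative only, and the values are an assumption that should be checked against what your installed `imutils.face_utils` reports.

```python
# Assumed 0-based, end-exclusive index ranges for the 68-point layout.
# They mirror the commonly used convention; verify against your imutils version.
LANDMARK_RANGES = {
    "jaw": (0, 17),
    "right_eyebrow": (17, 22),
    "left_eyebrow": (22, 27),
    "nose": (27, 36),
    "right_eye": (36, 42),
    "left_eye": (42, 48),
    "mouth": (48, 68),
}


def region_points(landmarks, region):
    """Return the sub-list of landmarks that belongs to one facial region."""
    start, end = LANDMARK_RANGES[region]
    return landmarks[start:end]


# Stand-in for a real detection: 68 dummy (x, y) points.
landmarks = [(i, i) for i in range(68)]
left_eye = region_points(landmarks, "left_eye")
print(len(left_eye))
```

Slicing by region is what lets the later functions use small local indices such as `eye[1]` or `jaw[16]` instead of global landmark numbers.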

    from scipy.spatial import distance as dist
    from imutils.video import FileVideoStream
    from imutils.video import VideoStream
    from imutils import face_utils
    import argparse
    import imutils
    import time
    import dlib
    import cv2
    import numpy as np

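    def eye_aspect_ratio(eye):
        # Vertical distances between the upper and lower eyelid landmarks
        A = dist.euclidean(eye[1], eye[5])
        B = dist.euclidean(eye[2], eye[4])
        # Horizontal distance between the two eye corners
        C = dist.euclidean(eye[0], eye[3])
        # Eye aspect ratio (EAR): drops toward 0 as the eye closes
        ear = (A + B) / (2.0 * C)
        return ear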
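
To get a feel for the values this ratio produces, here is a small worked example on made-up eye contours. It re-implements the same formula with `math.dist` so it runs without SciPy; the point coordinates are hypothetical and only meant to contrast an open eye with a nearly closed one.

```python
import math


def eye_aspect_ratio(eye):
    """Same EAR formula as in the script, using math.dist instead of SciPy."""
    a = math.dist(eye[1], eye[5])
    b = math.dist(eye[2], eye[4])
    c = math.dist(eye[0], eye[3])
    return (a + b) / (2.0 * c)


# Hypothetical contours, ordered: corner, top, top, corner, bottom, bottom.
open_eye = [(0, 0), (1, 1), (2, 1), (3, 0), (2, -1), (1, -1)]
closed_eye = [(0, 0), (1, 0.2), (2, 0.2), (3, 0), (2, -0.2), (1, -0.2)]

ear_open = eye_aspect_ratio(open_eye)
ear_closed = eye_aspect_ratio(closed_eye)
print(round(ear_open, 3), round(ear_closed, 3))
```

With these points the open eye lands well above the script's default threshold of 0.27 and the nearly closed eye well below it, which is the separation the blink counter relies on.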

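    def mouth_aspect_ratio(mouth):
        # Two upper-lip to lower-lip distances on the outer lip contour
        # (indices are relative to the 20-point mouth slice)
        A = np.linalg.norm(mouth[2] - mouth[9])
        B = np.linalg.norm(mouth[4] - mouth[7])
        # Horizontal distance between the two mouth corners
        C = np.linalg.norm(mouth[0] - mouth[6])
        # Mouth aspect ratio (MAR): grows as the mouth opens
        mar = (A + B) / (2.0 * C)
        return mar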
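
Because `mouth` is a slice of the full landmark array, its indices are local to that slice. The sketch below converts the index pairs used by `mouth_aspect_ratio` into 0-based positions in the 68-point layout, assuming the mouth slice starts at index 48; `MOUTH_START` and `to_global` are hypothetical helper names.

```python
MOUTH_START = 48  # assumed start of the mouth slice in the 68-point layout


def to_global(local_index, start=MOUTH_START):
    """Convert an index within a region slice to a 0-based landmark index."""
    return start + local_index


# Index pairs used by mouth_aspect_ratio: A, B (lip to lip) and C (corners).
pairs = [(2, 9), (4, 7), (0, 6)]
global_pairs = [(to_global(i), to_global(j)) for i, j in pairs]
print(global_pairs)
```

Looking up these positions on the 68-point diagram is a quick way to check which lip points each distance actually connects.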

    def nose_jaw_distance(nose, jaw):
        # Distances from the top of the nose bridge to both ends of the jawline
        face_left1 = dist.euclidean(nose[0], jaw[0])
        face_right1 = dist.euclidean(nose[0], jaw[16])
        # Distances from a point lower on the nose to a second pair of jaw points
        face_left2 = dist.euclidean(nose[3], jaw[2])
        face_right2 = dist.euclidean(nose[3], jaw[14])
        face_distance = (face_left1, face_right1, face_left2, face_right2)
        return face_distance
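
The main loop later compares these four distances pairwise, with a 2-pixel margin, to decide whether the face is turned to one side. The following sketch reproduces that comparison on synthetic points. The function name `turn_direction`, its return labels, and the coordinates are assumptions made for illustration.

```python
import math


def turn_direction(nose_top, nose_low, jaw, margin=2):
    """Classify one frame with the same comparisons the script uses.

    `jaw` is a list of 17 points; `margin` is the pixel slack (2 in the script).
    """
    left1 = math.dist(nose_top, jaw[0])
    right1 = math.dist(nose_top, jaw[16])
    left2 = math.dist(nose_low, jaw[2])
    right2 = math.dist(nose_low, jaw[14])
    if left1 >= right1 + margin and left2 >= right2 + margin:
        return "toward_jaw16"  # nose is nearer the jaw[16] end
    if right1 >= left1 + margin and right2 >= left2 + margin:
        return "toward_jaw0"  # nose is nearer the jaw[0] end
    return "centered"


# Synthetic jawline: 17 points spread evenly along a horizontal line.
jaw = [(10 * i, 100) for i in range(17)]

print(turn_direction((80, 40), (80, 70), jaw))
print(turn_direction((110, 40), (115, 70), jaw))
print(turn_direction((50, 40), (45, 70), jaw))
```

Requiring both distance pairs to agree makes the check less sensitive to jitter in a single landmark.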

    def eyebrow_jaw_distance(leftEyebrow, jaw):
        # Distances from the middle of the left eyebrow to both ends of the jawline
        eyebrow_left = dist.euclidean(leftEyebrow[2], jaw[0])
        eyebrow_right = dist.euclidean(leftEyebrow[2], jaw[16])
        # Distance between the two ends of the jawline
        left_right = dist.euclidean(jaw[0], jaw[16])
        eyebrow_distance = (eyebrow_left, eyebrow_right, left_right)
        return eyebrow_distance
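
These three distances form a triangle between the eyebrow point and the two jaw endpoints. By the triangle inequality the two eyebrow distances always sum to at least the jaw width, and the sum approaches the jaw width only when the eyebrow point lies almost on the line between the jaw endpoints. The script's nod check uses a 3-pixel slack on that comparison. Here is a minimal sketch on made-up coordinates; `triangle_slack` is a hypothetical helper, not part of the script.

```python
import math


def triangle_slack(brow, jaw_a, jaw_b):
    """Return (brow to jaw_a) + (brow to jaw_b) minus (jaw_a to jaw_b).

    The result is never negative and shrinks toward 0 as `brow` approaches
    the segment between the two jaw endpoints.
    """
    return math.dist(brow, jaw_a) + math.dist(brow, jaw_b) - math.dist(jaw_a, jaw_b)


jaw_a, jaw_b = (0, 100), (160, 100)  # hypothetical jaw endpoints
slack_up = triangle_slack((100, 40), jaw_a, jaw_b)    # brow well above the line
slack_down = triangle_slack((100, 95), jaw_a, jaw_b)  # brow close to the line
print(round(slack_up, 2), round(slack_down, 2))
```

In this example only the second position falls within the 3-pixel slack, which is the condition the script treats as the start of a nod.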

    ap = argparse.ArgumentParser()
    ap.add_argument("-p", "--shape-predictor", default="shape_predictor_68_face_landmarks.dat",
                    help="path to facial landmark predictor")
    ap.add_argument("-v", "--video", type=str, default="camera",
                    help="path to input video file")
    ap.add_argument("-t", "--threshold", type=float, default=0.27,
                    help="threshold to determine closed eyes")
    ap.add_argument("-f", "--frames", type=int, default=2,
                    help="the number of consecutive frames the eye must be below the threshold")
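
As a quick check of how these options end up in the `args` dictionary, the sketch below rebuilds the same parser (help strings omitted) and parses an explicit argument list instead of the real command line. The file name `clip.mp4` is a placeholder.

```python
import argparse

ap = argparse.ArgumentParser()
ap.add_argument("-p", "--shape-predictor", default="shape_predictor_68_face_landmarks.dat")
ap.add_argument("-v", "--video", type=str, default="camera")
ap.add_argument("-t", "--threshold", type=float, default=0.27)
ap.add_argument("-f", "--frames", type=int, default=2)

# Parse a hand-written argument list for demonstration purposes.
args = vars(ap.parse_args(["-t", "0.25", "--video", "clip.mp4"]))
print(args)
```

Note that argparse turns the dash in `--shape-predictor` into an underscore, which is why the script reads the value as `args["shape_predictor"]`. Leaving `--video` at its default of `camera` makes the script read from the webcam instead of a file.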

    def main():
        args = vars(ap.parse_args())
        EYE_AR_THRESH = args['threshold']
        EYE_AR_CONSEC_FRAMES = args['frames']
        MAR_THRESH = 0.5

        # Per-action frame counters and totals of completed actions
        COUNTER_EYE = 0
        TOTAL_EYE = 0
        COUNTER_MOUTH = 0
        TOTAL_MOUTH = 0
        distance_left = 0
        distance_right = 0
        TOTAL_FACE = 0
        nod_flag = 0
        TOTAL_NOD = 0

        print("[Prepare000] Loading facial landmark predictor...")
        detector = dlib.get_frontal_face_detector()
        predictor = dlib.shape_predictor(args["shape_predictor"])

        # Index ranges of each facial region within the 68 landmarks
        (lStart, lEnd) = face_utils.FACIAL_LANDMARKS_IDXS["left_eye"]
        (rStart, rEnd) = face_utils.FACIAL_LANDMARKS_IDXS["right_eye"]
        (mStart, mEnd) = face_utils.FACIAL_LANDMARKS_IDXS["mouth"]
        (nStart, nEnd) = face_utils.FACIAL_LANDMARKS_IDXS["nose"]
        (jStart, jEnd) = face_utils.FACIAL_LANDMARKS_IDXS['jaw']
        (Eyebrow_Start, Eyebrow_End) = face_utils.FACIAL_LANDMARKS_IDXS['left_eyebrow']

        print("[Prepare111] Starting video stream thread...")
        print("[Prompt information] Press Q to quit...")
        if args['video'] == "camera":
            vs = VideoStream(src=0).start()
            fileStream = False
        else:
            vs = FileVideoStream(args["video"]).start()
            fileStream = True
        # Give the camera or file reader a moment to warm up
        time.sleep(1.0)

        while True:
            # Stop when a video file has no more frames to read
            if fileStream and not vs.more():
                break
            frame = vs.read()
            # Guard against an empty read (camera not ready or stream ended)
            if frame is None:
                break
            frame = imutils.resize(frame, width=600)
            gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)

            # Detect faces in the grayscale frame
            rects = detector(gray, 0)
            for rect in rects:
                # Predict the 68 landmarks and convert them to a NumPy array
                shape = predictor(gray, rect)
                shape = face_utils.shape_to_np(shape)

                # Eyes
                leftEye = shape[lStart:lEnd]
                rightEye = shape[rStart:rEnd]
                leftEAR = eye_aspect_ratio(leftEye)
                rightEAR = eye_aspect_ratio(rightEye)
                # Mouth
                Mouth = shape[mStart:mEnd]
                mouthMAR = mouth_aspect_ratio(Mouth)
                # Nose and jaw (head shake)
                nose = shape[nStart:nEnd]
                jaw = shape[jStart:jEnd]
                NOSE_JAW_Distance = nose_jaw_distance(nose, jaw)
                # Left eyebrow and jaw (nod)
                leftEyebrow = shape[Eyebrow_Start:Eyebrow_End]
                Eyebrow_JAW_Distance = eyebrow_jaw_distance(leftEyebrow, jaw)

                # Average the EAR of both eyes
                ear = (leftEAR + rightEAR) / 2.0
                mar = mouthMAR
                face_left1 = NOSE_JAW_Distance[0]
                face_right1 = NOSE_JAW_Distance[1]
                face_left2 = NOSE_JAW_Distance[2]
                face_right2 = NOSE_JAW_Distance[3]
                eyebrow_left = Eyebrow_JAW_Distance[0]
                eyebrow_right = Eyebrow_JAW_Distance[1]
                left_right = Eyebrow_JAW_Distance[2]

                # Draw the outline of each tracked region on the frame
                leftEyeHull = cv2.convexHull(leftEye)
                rightEyeHull = cv2.convexHull(rightEye)
                cv2.drawContours(frame, [leftEyeHull], -1, (0, 255, 0), 1)
                cv2.drawContours(frame, [rightEyeHull], -1, (0, 255, 0), 1)
                mouth_hull = cv2.convexHull(Mouth)
                cv2.drawContours(frame, [mouth_hull], -1, (255, 0, 0), 1)
                noseHull = cv2.convexHull(nose)
                cv2.drawContours(frame, [noseHull], -1, (0, 0, 255), 1)
                jawHull = cv2.convexHull(jaw)
                cv2.drawContours(frame, [jawHull], -1, (0, 0, 255), 1)

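                # Blink: count a blink once the eye reopens after staying
                # below the threshold for enough consecutive frames
                if ear < EYE_AR_THRESH:
                    COUNTER_EYE += 1
                else:
                    if COUNTER_EYE >= EYE_AR_CONSEC_FRAMES:
                        TOTAL_EYE += 1
                    COUNTER_EYE = 0

                # Mouth: count one opening each time the mouth closes again
                if mar > MAR_THRESH:
                    COUNTER_MOUTH += 1
                else:
                    if COUNTER_MOUTH != 0:
                        TOTAL_MOUTH += 1
                        COUNTER_MOUTH = 0

                # Head shake: count once the face has turned to both sides
                if face_left1 >= face_right1+2 and face_left2 >= face_right2+2:
                    distance_left += 1
                if face_right1 >= face_left1+2 and face_right2 >= face_left2+2:
                    distance_right += 1
                if distance_left != 0 and distance_right != 0:
                    TOTAL_FACE += 1
                    distance_right = 0
                    distance_left = 0

                # Nod: flag when the eyebrow nears the line between the jaw
                # endpoints, then count once it moves away again
                if eyebrow_left+eyebrow_right <= left_right+3:
                    nod_flag += 1
                if nod_flag != 0 and eyebrow_left+eyebrow_right >= left_right+3:
                    TOTAL_NOD += 1
                    nod_flag = 0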

                # Show the running totals on the left side of the frame
                cv2.putText(frame, "Blinks: {}".format(TOTAL_EYE), (10, 30),
                            cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)
                cv2.putText(frame, "Mouth is open: {}".format(TOTAL_MOUTH), (10, 60),
                            cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)
                cv2.putText(frame, "shake one's head: {}".format(TOTAL_FACE), (10, 90),
                            cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)
                cv2.putText(frame, "nod: {}".format(TOTAL_NOD), (10, 120),
                            cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)

                # Show the liveness prompts on the right; each new prompt
                # appears once the previous action has been done often enough
                cv2.putText(frame, "Live detection: wink(5)", (300, 30),
                            cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)
                if TOTAL_EYE >= 5:
                    cv2.putText(frame, "open your mouth(3)", (300, 60),
                                cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)
                if TOTAL_MOUTH >= 3:
                    cv2.putText(frame, "shake your head(2)", (300, 90),
                                cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)
                if TOTAL_FACE >= 2:
                    cv2.putText(frame, "nod(2)", (300, 120),
                                cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)
                if TOTAL_NOD >= 2:
                    cv2.putText(frame, "Live detection: done", (300, 150),
                                cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)

            # Display the annotated frame and quit when Q is pressed
            cv2.imshow("Frame", frame)
            key = cv2.waitKey(1) & 0xFF
            if key == ord("q"):
                break

        # Release the window and the video stream
        cv2.destroyAllWindows()
        vs.stop()

    if __name__ == '__main__':
        main()
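
The blink counter inside the loop can be tried out without a camera. The sketch below extracts the same two-counter logic into a standalone function and runs it over a made-up sequence of per-frame EAR values. The function name `count_blinks` and the trace are illustrative assumptions; the defaults match the script's `--threshold` and `--frames` options.

```python
def count_blinks(ear_values, threshold=0.27, consec_frames=2):
    """Count blinks in a sequence of per-frame EAR values.

    A blink is counted when the EAR rises back to or above the threshold
    after staying below it for at least `consec_frames` consecutive frames.
    """
    counter = 0  # consecutive frames with the eye below the threshold
    total = 0    # completed blinks
    for ear in ear_values:
        if ear < threshold:
            counter += 1
        else:
            if counter >= consec_frames:
                total += 1
            counter = 0
    return total


# Hypothetical EAR trace: a one-frame dip followed by two longer closures.
trace = [0.32, 0.20, 0.31, 0.30, 0.15, 0.12, 0.33, 0.18, 0.16, 0.17, 0.35]
print(count_blinks(trace))
```

The one-frame dip is ignored because it is shorter than `consec_frames`, which filters out landmark jitter. A closure that has not ended by the last frame is also not counted, since the count only increases when the eye reopens.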