Backpropagation Overview

The backpropagation algorithm was first introduced in the 1970s, but its importance was not widely appreciated until the famous 1986 paper by David Rumelhart, Geoffrey Hinton, and Ronald Williams.

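At the heart of backpropagation is an expression for the partial derivatives $\partial C / \partial w$ and $\partial C / \partial b$ of the cost function $C$ with respect to any weight $w$ and any bias $b$ in the network.

This expression tells us how quickly the cost changes when we change the weights and biases.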

Two assumptions about the cost function

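- The cost function can be written as an average $C = \frac{1}{n} \sum_x C_x$ over the cost functions $C_x$ of the individual training examples $x$.

- The cost function can be written as a function of the outputs of the neural network.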

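Assumption 1 is needed because backpropagation actually computes $\partial C_x / \partial w$ and $\partial C_x / \partial b$ for a single training example. We then recover $\partial C / \partial w$ and $\partial C / \partial b$ by averaging over all training examples.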

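Assumption 2 is needed so that the cost function can be related to the outputs of the network, and through them to the parameters of the network.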
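As a minimal sketch of assumption 1, the snippet below uses a toy one-parameter model $a = w x$ with the quadratic cost $C_x = \frac{1}{2}(y - a)^2$. The model, the cost, and the data are assumptions chosen only for illustration; the point is that averaging the per-example gradients gives the gradient of the averaged cost.

```python
import numpy as np

def cost_x(w, x, y):
    """Quadratic cost C_x for one training example of the toy model a = w * x."""
    return 0.5 * (y - w * x) ** 2

def grad_x(w, x, y):
    """Derivative dC_x/dw for one training example."""
    return -(y - w * x) * x

# Made-up training data, purely for illustration.
xs = np.array([1.0, 2.0, 3.0])
ys = np.array([2.0, 3.0, 7.0])
w = 0.5

def total_cost(w_value):
    """Assumption 1: the total cost C is the mean of the per-example costs C_x."""
    return np.mean([cost_x(w_value, x, y) for x, y in zip(xs, ys)])

# The gradient of C is therefore the mean of the per-example gradients.
mean_grad = np.mean([grad_x(w, x, y) for x, y in zip(xs, ys)])

# Independent check: central finite difference of the total cost.
eps = 1e-6
numeric_grad = (total_cost(w + eps) - total_cost(w - eps)) / (2 * eps)

print(mean_grad, numeric_grad)
```

The two printed values should agree up to floating-point error, which is why backpropagation only needs to handle one training example at a time.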

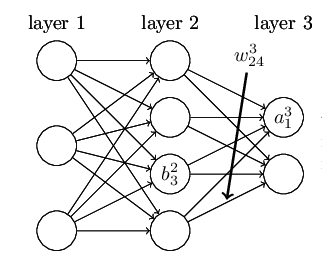

Notation

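$w^l_{jk}$ is the weight from the $k$-th neuron in layer $l-1$ to the $j$-th neuron in layer $l$.

$b^l_j$ is the bias of the $j$-th neuron in layer $l$.

$a^l_j$ is the activation of the $j$-th neuron in layer $l$.

$\sigma$ is the activation function.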

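Vectorizing the notation above

$w^l$ is the weight matrix of layer $l$; its entry in row $j$, column $k$ is $w^l_{jk}$.

For example, the weight matrix between the second and third layers is

$$w^3 = \begin{bmatrix} w^3_{11} & w^3_{12} & \cdots \\ w^3_{21} & w^3_{22} & \cdots \\ \vdots & \vdots & \ddots \end{bmatrix}$$

with one row per neuron in the third layer and one column per neuron in the second layer.

$b^l$ is the bias vector of layer $l$; its $j$-th entry is $b^l_j$.

For example, the bias vector of the second layer is

$$b^2 = \begin{bmatrix} b^2_1 \\ b^2_2 \\ \vdots \end{bmatrix}$$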

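With this notation, the activation $a^l_j$ of the $j$-th neuron in layer $l$ is related to the activations in layer $l-1$ by the equation

$$a^l_j = \sigma\left(\sum_k w^l_{jk} a^{l-1}_k + b^l_j\right)$$

In vectorized form, with $\sigma$ applied elementwise, this becomes

$$a^l = \sigma(w^l a^{l-1} + b^l)$$

For example, the activation vector of the third layer is

$$a^3 = \sigma(w^3 a^2 + b^3)$$

$a^l$ is the activation vector of layer $l$; its $j$-th entry is $a^l_j$.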

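Define

$$z^l \equiv w^l a^{l-1} + b^l$$

so that

$$a^l = \sigma(z^l)$$

$z^l$ is the weighted input to layer $l$; its $j$-th element is $z^l_j$.

$z^l_j = \sum_k w^l_{jk} a^{l-1}_k + b^l_j$ is the weighted input to the $j$-th neuron in layer $l$.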
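The notation above maps directly onto a few lines of NumPy. The following is a minimal sketch, assuming sigmoid activations and a hypothetical network with layer sizes 3, 4, 2; the function name `feedforward` and the random parameters are illustrative choices, not part of the original text.

```python
import numpy as np

def sigmoid(z):
    """The activation function sigma, applied elementwise."""
    return 1.0 / (1.0 + np.exp(-z))

def feedforward(weights, biases, x):
    """Return the weighted inputs z^l and activations a^l of every layer."""
    activations = [x]  # a^1 is the input itself
    zs = []
    for w, b in zip(weights, biases):
        z = w @ activations[-1] + b      # z^l = w^l a^{l-1} + b^l
        zs.append(z)
        activations.append(sigmoid(z))   # a^l = sigma(z^l)
    return zs, activations

# Hypothetical layer sizes: 3 inputs, 4 hidden neurons, 2 outputs.
rng = np.random.default_rng(0)
sizes = [3, 4, 2]
weights = [rng.standard_normal((n_out, n_in))
           for n_in, n_out in zip(sizes[:-1], sizes[1:])]
biases = [rng.standard_normal((n_out, 1)) for n_out in sizes[1:]]
x = rng.standard_normal((3, 1))

zs, activations = feedforward(weights, biases, x)

# Component form for one neuron: z^2_j = sum_k w^2_{jk} a^1_k + b^2_j
# (j = 1 here, using 0-based indexing).
j = 1
z_component = sum(weights[0][j, k] * x[k, 0] for k in range(3)) + biases[0][j, 0]
print(zs[0][j, 0], z_component)
```

Note that `weights[0]` has shape `(4, 3)`: row $j$ belongs to the receiving neuron and column $k$ to the sending neuron, which is what lets the matrix product stand in for the sum over $k$.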

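Recall that the heart of backpropagation is an expression for the partial derivatives $\partial C / \partial w$ and $\partial C / \partial b$ of the cost function $C$ with respect to any weight $w$ and any bias $b$. To compute them, we introduce an intermediate quantity $\delta^l_j$, the error of the $j$-th neuron in layer $l$.

Define

$$\delta^l_j \equiv \frac{\partial C}{\partial z^l_j}$$

$\delta^l$ is the error vector of layer $l$; the $j$-th element of $\delta^l$ is $\delta^l_j$.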

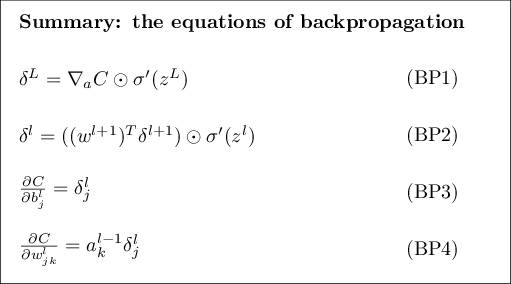

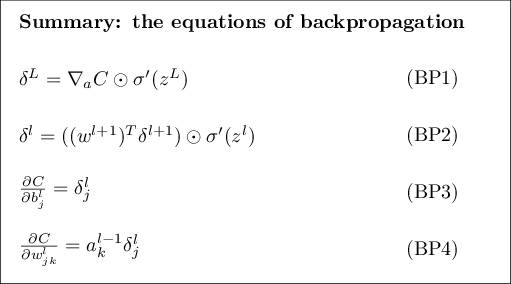

The four fundamental equations of backpropagation

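$\nabla$ is the gradient operator: $\nabla_a C$ is a vector whose elements are the partial derivatives $\partial C / \partial a^L_j$.

$\odot$ is the elementwise (Hadamard) product operator: $(s \odot t)_j = s_j t_j$. For example,

$$\begin{bmatrix} 1 \\ 2 \end{bmatrix} \odot \begin{bmatrix} 3 \\ 4 \end{bmatrix} = \begin{bmatrix} 1 \cdot 3 \\ 2 \cdot 4 \end{bmatrix} = \begin{bmatrix} 3 \\ 8 \end{bmatrix}$$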

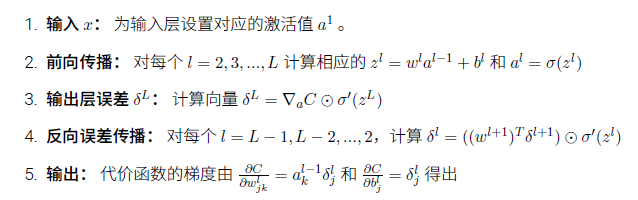
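For reference, the four equations labelled (BP1) to (BP4) in the proofs later in this article can be written out as follows. This is a restatement in the notation defined above, with $L$ denoting the output layer:

```latex
\begin{align}
\delta^L &= \nabla_a C \odot \sigma'(z^L) \tag{BP1} \\
\delta^l &= \left( (w^{l+1})^T \delta^{l+1} \right) \odot \sigma'(z^l) \tag{BP2} \\
\frac{\partial C}{\partial b^l_j} &= \delta^l_j \tag{BP3} \\
\frac{\partial C}{\partial w^l_{jk}} &= a^{l-1}_k \, \delta^l_j \tag{BP4}
\end{align}
```

(BP1) gives the error in the output layer, (BP2) moves the error back one layer, and (BP3) and (BP4) turn the error into the gradient with respect to the biases and weights.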

The backpropagation algorithm

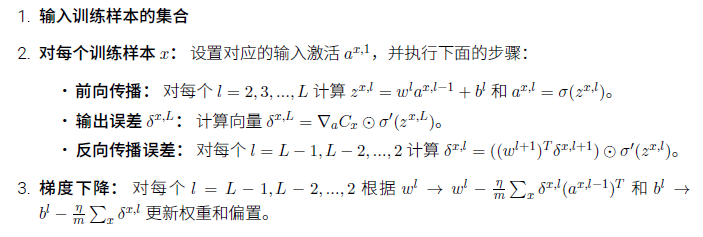

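As described above, the backpropagation algorithm computes the gradient of the cost function for a single training example, $C = C_x$. In practice, backpropagation is usually combined with a learning algorithm such as stochastic gradient descent, in which we compute the gradient for many training examples. In particular, given a mini-batch of $m$ training examples, we run backpropagation on each example and then apply one gradient descent step using the gradient averaged over the mini-batch.

The following excerpt shows the backpropagation part of an implementation that combines backpropagation with mini-batch stochastic gradient descent; see network.py for the complete code.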

    import numpy as np

    # backprop and cost_derivative are methods of the Network class in
    # network.py; sigmoid and sigmoid_prime are module-level functions.

    def backprop(self, x, y):
        """Return a tuple ``(nabla_b, nabla_w)`` representing the
        gradient for the cost function C_x.  ``nabla_b`` and
        ``nabla_w`` are layer-by-layer lists of numpy arrays, similar
        to ``self.biases`` and ``self.weights``."""
        nabla_b = [np.zeros(b.shape) for b in self.biases]
        nabla_w = [np.zeros(w.shape) for w in self.weights]
        # feedforward
        activation = x
        activations = [x]  # list to store all the activations, layer by layer
        zs = []  # list to store all the z vectors, layer by layer
        for b, w in zip(self.biases, self.weights):
            z = np.dot(w, activation) + b
            zs.append(z)
            activation = sigmoid(z)
            activations.append(activation)
        # backward pass: output error, equation (BP1)
        delta = self.cost_derivative(activations[-1], y) * \
            sigmoid_prime(zs[-1])
        nabla_b[-1] = delta  # (BP3)
        nabla_w[-1] = np.dot(delta, activations[-2].transpose())  # (BP4)
        # Note that the variable l in the loop below is used a little
        # differently to the notation in Chapter 2 of the book.  Here,
        # l = 1 means the last layer of neurons, l = 2 is the
        # second-last layer, and so on.  It's a renumbering of the
        # scheme in the book, used here to take advantage of the fact
        # that Python can use negative indices in lists.
        for l in range(2, self.num_layers):
            z = zs[-l]
            sp = sigmoid_prime(z)
            # propagate the error one layer back, equation (BP2)
            delta = np.dot(self.weights[-l+1].transpose(), delta) * sp
            nabla_b[-l] = delta
            nabla_w[-l] = np.dot(delta, activations[-l-1].transpose())
        return (nabla_b, nabla_w)

    def cost_derivative(self, output_activations, y):
        r"""Return the vector of partial derivatives \partial C_x /
        \partial a for the output activations."""
        return (output_activations - y)

    def sigmoid(z):
        """The sigmoid function."""
        return 1.0 / (1.0 + np.exp(-z))

    def sigmoid_prime(z):
        """Derivative of the sigmoid function."""
        return sigmoid(z) * (1 - sigmoid(z))
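The mini-batch step described before the excerpt can be sketched as follows. This is a self-contained functional rewrite, not the code from network.py: the signatures `backprop(weights, biases, x, y)` and `update_mini_batch(weights, biases, mini_batch, eta)`, the quadratic cost, the layer sizes, and the random data are all assumptions made for illustration.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def sigmoid_prime(z):
    return sigmoid(z) * (1 - sigmoid(z))

def backprop(weights, biases, x, y):
    """Gradient of the quadratic cost C_x for a single example (x, y)."""
    activations, zs = [x], []
    for w, b in zip(weights, biases):
        zs.append(w @ activations[-1] + b)
        activations.append(sigmoid(zs[-1]))
    delta = (activations[-1] - y) * sigmoid_prime(zs[-1])  # output error
    nabla_b = [None] * len(biases)
    nabla_w = [None] * len(weights)
    nabla_b[-1] = delta
    nabla_w[-1] = delta @ activations[-2].T
    for l in range(2, len(weights) + 1):
        delta = (weights[-l + 1].T @ delta) * sigmoid_prime(zs[-l])
        nabla_b[-l] = delta
        nabla_w[-l] = delta @ activations[-l - 1].T
    return nabla_b, nabla_w

def update_mini_batch(weights, biases, mini_batch, eta):
    """One gradient descent step with the gradient averaged over the mini-batch."""
    m = len(mini_batch)
    sum_b = [np.zeros(b.shape) for b in biases]
    sum_w = [np.zeros(w.shape) for w in weights]
    for x, y in mini_batch:
        nabla_b, nabla_w = backprop(weights, biases, x, y)
        sum_b = [sb + nb for sb, nb in zip(sum_b, nabla_b)]
        sum_w = [sw + nw for sw, nw in zip(sum_w, nabla_w)]
    new_weights = [w - (eta / m) * sw for w, sw in zip(weights, sum_w)]
    new_biases = [b - (eta / m) * sb for b, sb in zip(biases, sum_b)]
    return new_weights, new_biases

def cost(weights, biases, mini_batch):
    """Mean quadratic cost over the mini-batch."""
    total = 0.0
    for x, y in mini_batch:
        a = x
        for w, b in zip(weights, biases):
            a = sigmoid(w @ a + b)
        total += 0.5 * np.sum((a - y) ** 2)
    return total / len(mini_batch)

# Hypothetical 2-3-1 network and a made-up mini-batch of m = 4 examples.
rng = np.random.default_rng(0)
sizes = [2, 3, 1]
weights = [rng.standard_normal((o, i)) for i, o in zip(sizes[:-1], sizes[1:])]
biases = [rng.standard_normal((o, 1)) for o in sizes[1:]]
mini_batch = [(rng.standard_normal((2, 1)), np.array([[1.0]])) for _ in range(4)]

new_weights, new_biases = update_mini_batch(weights, biases, mini_batch, eta=0.1)
print(cost(weights, biases, mini_batch), cost(new_weights, new_biases, mini_batch))
```

With a small learning rate such as the `eta=0.1` used here, the second printed cost should be lower than the first, since the step moves the parameters against the averaged gradient.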

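Proof of the four fundamental equations

We now prove the four fundamental equations (BP1)-(BP4). All of them are consequences of the chain rule from multivariable calculus.

Proof of (BP1): $\delta^L = \nabla_a C \odot \sigma'(z^L)$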

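We start with equation (BP1), which gives an expression for the output error $\delta^L$. By definition,

$$\delta^L_j = \frac{\partial C}{\partial z^L_j}$$

By assumption 2, the cost function can be written as a function of the outputs of the network. Applying the chain rule, we can therefore differentiate first with respect to the output activations and then with respect to the weighted inputs:

$$\delta^L_j = \sum_k \frac{\partial C}{\partial a^L_k} \frac{\partial a^L_k}{\partial z^L_j}$$

where the sum runs over all neurons $k$ in the output layer. This looks complicated, but the output activation $a^L_k$ of the $k$-th neuron depends on the weighted input $z^L_j$ of the $j$-th neuron only when $k = j$. So $\partial a^L_k / \partial z^L_j$ vanishes whenever $k \neq j$, and the previous equation simplifies to

$$\delta^L_j = \frac{\partial C}{\partial a^L_j} \frac{\partial a^L_j}{\partial z^L_j}$$

Since $a^L_j = \sigma(z^L_j)$, the factor $\partial a^L_j / \partial z^L_j$ can be written as $\sigma'(z^L_j)$, and the equation becomes

$$\delta^L_j = \frac{\partial C}{\partial a^L_j} \sigma'(z^L_j)$$

This is (BP1) in component form. Since $\nabla_a C$ is the vector whose elements are the partial derivatives $\partial C / \partial a^L_j$, the equation can be written in vector form as

$$\delta^L = \nabla_a C \odot \sigma'(z^L)$$

(BP1) is proved.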
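A quick numerical sanity check of (BP1) can make the derivation more concrete. The sketch below assumes a sigmoid activation and the quadratic cost, for which $\nabla_a C = a^L - y$; the values of `z_L` and `y` are made up. It compares the formula with the definition $\delta^L_j = \partial C / \partial z^L_j$, estimated by central finite differences.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def sigmoid_prime(z):
    return sigmoid(z) * (1 - sigmoid(z))

def cost_from_z(z, y):
    """Quadratic cost as a function of the output layer's weighted input z^L."""
    return 0.5 * np.sum((sigmoid(z) - y) ** 2)

# Made-up weighted inputs and targets for a 3-neuron output layer.
z_L = np.array([0.3, -1.2, 2.0])
y = np.array([0.0, 1.0, 1.0])

# (BP1): delta^L = grad_a C (Hadamard product) sigma'(z^L).
delta_bp1 = (sigmoid(z_L) - y) * sigmoid_prime(z_L)

# Definition: delta^L_j = dC/dz^L_j, estimated one component at a time.
eps = 1e-6
delta_numeric = np.zeros_like(z_L)
for j in range(z_L.size):
    step = np.zeros_like(z_L)
    step[j] = eps
    delta_numeric[j] = (cost_from_z(z_L + step, y)
                        - cost_from_z(z_L - step, y)) / (2 * eps)

print(delta_bp1)
print(delta_numeric)
```

The two printed vectors should agree to within the accuracy of the finite-difference estimate.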

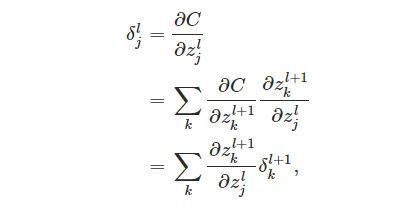

Proof of (BP2): $\delta^l = ((w^{l+1})^T \delta^{l+1}) \odot \sigma'(z^l)$

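Next we prove (BP2), which expresses the error $\delta^l$ in terms of the error in the next layer, $\delta^{l+1}$. To do this, we rewrite $\delta^l_j = \partial C / \partial z^l_j$ in terms of $\delta^{l+1}_k = \partial C / \partial z^{l+1}_k$. The quantities $\delta^l_j$ and $\delta^{l+1}_k$ are linked through $z^l_j$ and $z^{l+1}_k$, so applying the chain rule gives

$$\delta^l_j = \frac{\partial C}{\partial z^l_j} = \sum_k \frac{\partial C}{\partial z^{l+1}_k} \frac{\partial z^{l+1}_k}{\partial z^l_j} = \sum_k \frac{\partial z^{l+1}_k}{\partial z^l_j} \delta^{l+1}_k$$

By the definition of $z^{l+1}_k$ we have

$$z^{l+1}_k = \sum_j w^{l+1}_{kj} a^l_j + b^{l+1}_k = \sum_j w^{l+1}_{kj} \sigma(z^l_j) + b^{l+1}_k$$

Differentiating with respect to $z^l_j$, we obtain

$$\frac{\partial z^{l+1}_k}{\partial z^l_j} = w^{l+1}_{kj} \sigma'(z^l_j)$$

Note that although the connections between layers $l$ and $l+1$ look intricate, any pair of neurons in the two layers is joined by exactly one connection (neurons within the same layer are not connected). That is, $z^l_j$ and $z^{l+1}_k$ are linked only through $w^{l+1}_{kj}$. This is why differentiating $z^{l+1}_k$ with respect to $z^l_j$ gives such a simple result, just $w^{l+1}_{kj} \sigma'(z^l_j)$. Substituting this result into the chain-rule sum for $\delta^l_j$ gives

$$\delta^l_j = \sum_k w^{l+1}_{kj} \delta^{l+1}_k \sigma'(z^l_j)$$

This is exactly (BP2) in component form.

In vector form,

$$\delta^l = ((w^{l+1})^T \delta^{l+1}) \odot \sigma'(z^l)$$

For example, the $j$-th component of $(w^{l+1})^T \delta^{l+1}$ is $\sum_k w^{l+1}_{kj} \delta^{l+1}_k$, which is the sum appearing in the component form; this is why the transpose of the weight matrix is used.

(BP2) is proved.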
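The same kind of numerical check works for (BP2). The sketch below assumes that layer $l+1$ is the output layer, that the cost is quadratic, and that layer $l$ has 3 neurons and layer $l+1$ has 2; all sizes and values are hypothetical. The error $\delta^l$ from (BP2) is compared with a finite-difference estimate of $\partial C / \partial z^l_j$.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def sigmoid_prime(z):
    return sigmoid(z) * (1 - sigmoid(z))

rng = np.random.default_rng(1)
w_next = rng.standard_normal((2, 3))  # w^{l+1}: row k, column j
b_next = rng.standard_normal(2)       # b^{l+1}
z_l = rng.standard_normal(3)          # weighted input z^l
y = np.array([1.0, 0.0])              # made-up target

def cost_from_z_l(z):
    """Quadratic cost as a function of z^l, with layer l+1 as the output layer."""
    z_next = w_next @ sigmoid(z) + b_next
    return 0.5 * np.sum((sigmoid(z_next) - y) ** 2)

# Error in layer l+1 from (BP1), then one step back with (BP2).
z_next = w_next @ sigmoid(z_l) + b_next
delta_next = (sigmoid(z_next) - y) * sigmoid_prime(z_next)
delta_l = (w_next.T @ delta_next) * sigmoid_prime(z_l)

# Definition: delta^l_j = dC/dz^l_j, estimated by central differences
# along each unit vector e.
eps = 1e-6
delta_numeric = np.array([
    (cost_from_z_l(z_l + eps * e) - cost_from_z_l(z_l - eps * e)) / (2 * eps)
    for e in np.eye(3)
])

print(delta_l)
print(delta_numeric)
```

The transpose in `w_next.T @ delta_next` is what turns the sum over $k$ in the component form into a matrix product.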

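Proof of (BP3): $\partial C / \partial b^l_j = \delta^l_j$

By the definition of $z^l_j$,

$$z^l_j = \sum_k w^l_{jk} a^{l-1}_k + b^l_j$$

and the definition of $\delta^l_j$,

$$\delta^l_j = \frac{\partial C}{\partial z^l_j}$$

the bias $b^l_j$ affects the cost only through $z^l_j$, and therefore

$$\frac{\partial C}{\partial b^l_j} = \frac{\partial C}{\partial z^l_j} \frac{\partial z^l_j}{\partial b^l_j} = \delta^l_j \frac{\partial z^l_j}{\partial b^l_j}$$

Since

$$\frac{\partial z^l_j}{\partial b^l_j} = 1$$

we have

$$\frac{\partial C}{\partial b^l_j} = \delta^l_j \cdot 1$$

that is,

$$\frac{\partial C}{\partial b^l_j} = \delta^l_j$$

In vector form,

$$\frac{\partial C}{\partial b^l} = \delta^l$$

(BP3) is proved.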

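Proof of (BP4): $\partial C / \partial w^l_{jk} = a^{l-1}_k \delta^l_j$

By the definition of $z^l_j$,

$$z^l_j = \sum_k w^l_{jk} a^{l-1}_k + b^l_j$$

and the definition of $\delta^l_j$,

$$\delta^l_j = \frac{\partial C}{\partial z^l_j}$$

and since

$$\frac{\partial z^l_j}{\partial w^l_{jk}} = a^{l-1}_k$$

we have

$$\frac{\partial C}{\partial w^l_{jk}} = \frac{\partial C}{\partial z^l_j} \frac{\partial z^l_j}{\partial w^l_{jk}} = a^{l-1}_k \delta^l_j$$

In vectorized form,

$$\frac{\partial C}{\partial w^l} = \delta^l (a^{l-1})^T$$

For example, with two neurons in layer $l$ and three neurons in layer $l-1$ (sizes chosen only for illustration),

$$\frac{\partial C}{\partial w^l} = \begin{bmatrix} \delta^l_1 \\ \delta^l_2 \end{bmatrix} \begin{bmatrix} a^{l-1}_1 & a^{l-1}_2 & a^{l-1}_3 \end{bmatrix} = \begin{bmatrix} \delta^l_1 a^{l-1}_1 & \delta^l_1 a^{l-1}_2 & \delta^l_1 a^{l-1}_3 \\ \delta^l_2 a^{l-1}_1 & \delta^l_2 a^{l-1}_2 & \delta^l_2 a^{l-1}_3 \end{bmatrix}$$

(BP4) is proved.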
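(BP3) and (BP4) can be checked together with a gradient check on a single layer. The sketch below assumes that the layer is the output layer with a quadratic cost, 3 incoming activations, and 2 neurons; the sizes and random values are hypothetical. `np.outer(delta, a_prev)` is the outer product $\delta^l (a^{l-1})^T$.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def sigmoid_prime(z):
    return sigmoid(z) * (1 - sigmoid(z))

rng = np.random.default_rng(2)
a_prev = rng.standard_normal(3)   # a^{l-1}
w = rng.standard_normal((2, 3))   # w^l
b = rng.standard_normal(2)        # b^l
y = np.array([0.0, 1.0])          # made-up target

def cost(w_value, b_value):
    """Quadratic cost of the layer's output as a function of its parameters."""
    return 0.5 * np.sum((sigmoid(w_value @ a_prev + b_value) - y) ** 2)

z = w @ a_prev + b
delta = (sigmoid(z) - y) * sigmoid_prime(z)  # error of the layer

grad_b = delta                    # (BP3): dC/db^l_j = delta^l_j
grad_w = np.outer(delta, a_prev)  # (BP4): dC/dw^l_jk = a^{l-1}_k delta^l_j

# Central finite differences, one parameter at a time.
eps = 1e-6
numeric_b = np.zeros_like(b)
for j in range(b.size):
    db = np.zeros_like(b)
    db[j] = eps
    numeric_b[j] = (cost(w, b + db) - cost(w, b - db)) / (2 * eps)

numeric_w = np.zeros_like(w)
for j in range(w.shape[0]):
    for k in range(w.shape[1]):
        dw = np.zeros_like(w)
        dw[j, k] = eps
        numeric_w[j, k] = (cost(w + dw, b) - cost(w - dw, b)) / (2 * eps)

print(np.max(np.abs(grad_b - numeric_b)), np.max(np.abs(grad_w - numeric_w)))
```

Both printed differences should be close to zero. The finite-difference loop needs two cost evaluations per parameter, whereas the formulas give every partial derivative from a single `delta`, which is the practical appeal of backpropagation.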

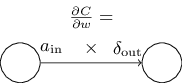

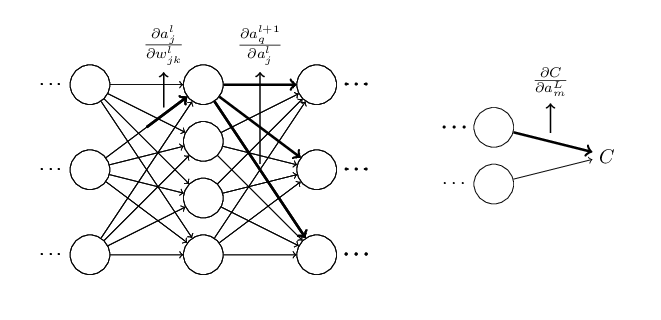

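An intuitive picture:

This completes the proof of all four backpropagation equations.

For another author's proof of the four equations, which is more concise, see the CSDN post by oio328Loio [3].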

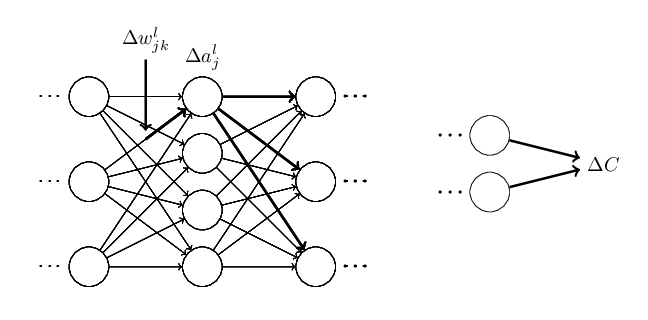

Backpropagation: the big picture

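As shown in the figure above, suppose we make a small perturbation $\Delta w^l_{jk}$ to the weight $w^l_{jk}$. This perturbation propagates through the network and ultimately affects the cost function $C$. The change $\Delta C$ in the cost is related to $\Delta w^l_{jk}$ by

$$\Delta C \approx \frac{\partial C}{\partial w^l_{jk}} \Delta w^l_{jk}$$

We can imagine one path along which the perturbation affects the cost:

$$\Delta C \approx \frac{\partial C}{\partial a^L_m} \frac{\partial a^L_m}{\partial a^{L-1}_n} \frac{\partial a^{L-1}_n}{\partial a^{L-2}_p} \cdots \frac{\partial a^{l+1}_q}{\partial a^l_j} \frac{\partial a^l_j}{\partial w^l_{jk}} \Delta w^l_{jk}$$

To compute the total change in $C$, we need to sum over all possible paths, that is,

$$\Delta C \approx \sum_{mnp \ldots q} \frac{\partial C}{\partial a^L_m} \frac{\partial a^L_m}{\partial a^{L-1}_n} \frac{\partial a^{L-1}_n}{\partial a^{L-2}_p} \cdots \frac{\partial a^{l+1}_q}{\partial a^l_j} \frac{\partial a^l_j}{\partial w^l_{jk}} \Delta w^l_{jk}$$

Since

$$\Delta C \approx \frac{\partial C}{\partial w^l_{jk}} \Delta w^l_{jk}$$

comparing the three equations above gives

$$\frac{\partial C}{\partial w^l_{jk}} = \sum_{mnp \ldots q} \frac{\partial C}{\partial a^L_m} \frac{\partial a^L_m}{\partial a^{L-1}_n} \frac{\partial a^{L-1}_n}{\partial a^{L-2}_p} \cdots \frac{\partial a^{l+1}_q}{\partial a^l_j} \frac{\partial a^l_j}{\partial w^l_{jk}}$$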

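The formula looks complicated, but it has a rather nice intuitive interpretation. We are using it to compute the rate of change of $C$ with respect to a weight in the network. What the formula tells us is that every connection between two neurons is associated with a rate factor, which is just the partial derivative of one neuron's activation with respect to the other neuron's activation. The rate factor of a path is the product of the rate factors along that path, and the total rate of change $\partial C / \partial w^l_{jk}$ is the sum of the rate factors of all possible paths from the initial weight to the final cost. For a single path, this is just the product of partial derivatives in the single-path expression above.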

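If you carry out the sum over all the cases in the expression above using matrix operations and simplify as much as possible, you will find that you are doing backpropagation! Backpropagation can be thought of as a way of computing the sum of the rate factors over all possible paths. Put differently, backpropagation is a clever way of keeping track of how small perturbations to the weights and biases propagate through the network, reach the output layer, and affect the cost.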
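The sum over paths can be checked directly on a tiny network. The sketch below assumes a hypothetical network with four layers of two sigmoid neurons each and a quadratic cost; all names and values are illustrative. It perturbs one weight in the first weight layer, sums the rate factor over the four paths to the cost, and compares the result with a finite-difference estimate of the same partial derivative.

```python
import itertools
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def sigmoid_prime(z):
    return sigmoid(z) * (1 - sigmoid(z))

rng = np.random.default_rng(3)
sizes = [2, 2, 2, 2]  # hypothetical four-layer network
weights = [rng.standard_normal((o, i)) for i, o in zip(sizes[:-1], sizes[1:])]
biases = [rng.standard_normal(o) for o in sizes[1:]]
x = rng.standard_normal(2)
y = np.array([1.0, 0.0])

def forward(ws):
    """Return weighted inputs and activations for the given weight matrices."""
    zs, acts = [], [x]
    for w, b in zip(ws, biases):
        zs.append(w @ acts[-1] + b)
        acts.append(sigmoid(zs[-1]))
    return zs, acts

def cost(ws):
    return 0.5 * np.sum((forward(ws)[1][-1] - y) ** 2)

zs, acts = forward(weights)
j, k = 0, 1  # weight from input neuron k to neuron j of the second layer

# Sum the rate factor over every path: weight -> a_j -> a_q -> a_m -> C.
path_sum = 0.0
for q, m in itertools.product(range(2), range(2)):
    dC_da_m = acts[3][m] - y[m]                              # dC / da^4_m
    da_m_da_q = sigmoid_prime(zs[2][m]) * weights[2][m, q]   # da^4_m / da^3_q
    da_q_da_j = sigmoid_prime(zs[1][q]) * weights[1][q, j]   # da^3_q / da^2_j
    da_j_dw = sigmoid_prime(zs[0][j]) * acts[0][k]           # da^2_j / dw^2_jk
    path_sum += dC_da_m * da_m_da_q * da_q_da_j * da_j_dw

# Central finite difference of the cost with respect to the same weight.
eps = 1e-6
w_plus = [w.copy() for w in weights]
w_minus = [w.copy() for w in weights]
w_plus[0][j, k] += eps
w_minus[0][j, k] -= eps
numeric = (cost(w_plus) - cost(w_minus)) / (2 * eps)

print(path_sum, numeric)
```

Here there are only four paths, but the number of paths grows exponentially with depth, which is why organizing the same sum layer by layer, as backpropagation does, matters in practice.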

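If you try to prove backpropagation along these lines, the proof will be considerably longer than the proof of the four equations given in this article, because it contains many steps that can still be simplified. One clever simplification is this: the partial derivatives in the equation above are taken with respect to activations such as $a^{l+1}_q$. The trick is to switch to weighted inputs, such as $z^{l+1}_q$, as the intermediate variables. If you do not hit on this idea and keep using the activations $a^{l+1}_q$, the proof you obtain ends up slightly more complicated than the one given earlier.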

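So the appearance of the earliest proof is not so mysterious after all: it is simply the accumulated result of a lot of hard work simplifying the proof!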

References

[1] Michael Nielsen. CHAPTER 2 How the backpropagation algorithm works[DB/OL]. neuralnetworksanddeeplearning.com/chap2.html, 2018-06-21.

[2] Zhu Xiaohu, Zhang Freeman. Another Chinese Translation of Neural Networks and Deep Learning[DB/OL]. github.com/zhanggyb/nn…, 2018-06-21.

[3] oio328Loio. 神经网络学习(三)反向(BP)传播算法(1) [Neural network learning (3): the backpropagation (BP) algorithm (1)][DB/OL]. blog.csdn.net/hoho1151191…, 2018-06-25.